Designing and Evaluating a Conversational Agent for Early Diagnosis of Alzheimer's Disease and Related Dementias

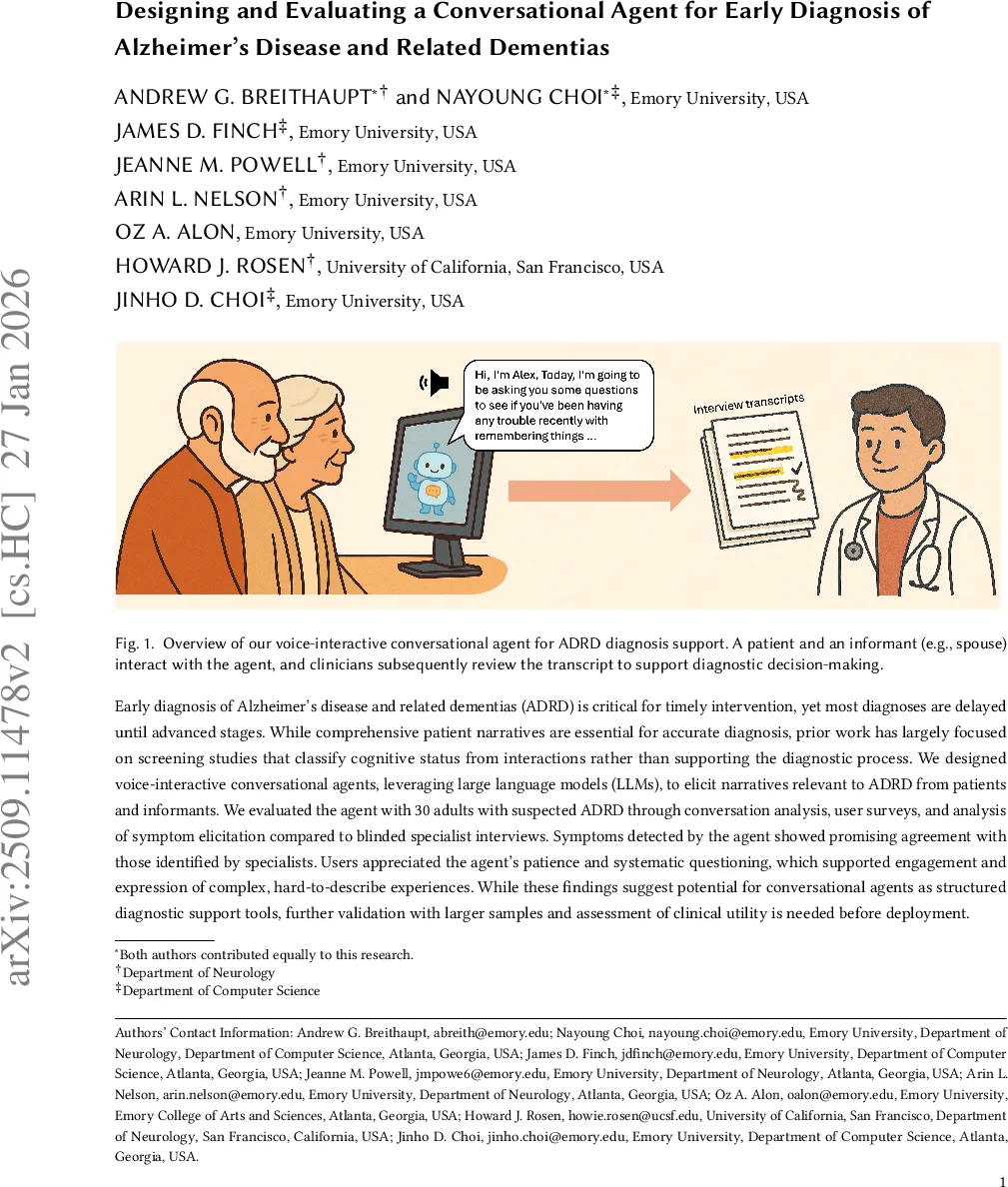

Early diagnosis of Alzheimer’s disease and related dementias (ADRD) is critical for timely intervention, yet most diagnoses are delayed until advanced stages. While comprehensive patient narratives are essential for accurate diagnosis, prior work has largely focused on screening studies that classify cognitive status from interactions rather than supporting the diagnostic process. We designed a voice-interactive conversational agent, built on large language models (LLMs), to elicit narratives relevant to ADRD from patients and informants. We evaluated the agent with 30 adults with suspected ADRD through conversation analysis, user surveys, and analysis of symptom elicitation compared to blinded specialist interviews. Symptoms detected by the agent showed promising agreement with those identified by specialists. Users appreciated the agent’s patience and systematic questioning, which supported engagement and expression of complex, hard-to-describe experiences. While these findings suggest potential for conversational agents as structured diagnostic support tools, further validation with larger samples and assessment of clinical utility are needed before deployment.

💡 Research Summary

This paper presents the design, implementation, and preliminary evaluation of a voice‑interactive conversational agent intended to support early diagnosis of Alzheimer’s disease and related dementias (ADRD). Recognizing that accurate diagnosis relies heavily on a comprehensive patient narrative—a resource often lacking in brief primary‑care visits—the authors built an agent that can elicit detailed symptom histories from patients and their informants before a specialist appointment.

The system leverages Anthropic’s Claude 3.5 large language model (LLM) accessed via the Amazon Bedrock API. Real‑time speech processing is achieved with OpenAI‑compatible Whisper for automatic speech recognition and Kokoro for text‑to‑speech synthesis. The conversational flow follows a semi‑structured interview protocol derived from the Assessment of Cognitive Complaints Toolkit for AD (ACCT‑AD). This protocol covers roughly thirty diagnostic topics (e.g., memory difficulties, language problems, personality changes, motor issues), each with a primary open‑ended question and three to four conditional follow‑up probes. Conditional branching rules are embedded in the system prompt, allowing the LLM to adapt its questions based on the participant’s responses while preserving clinical coverage.
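To make the "protocol in the system prompt" idea concrete, the following is a minimal sketch of how a topic list with conditional probes could be rendered into prompt text. It is an illustration under stated assumptions, not the authors' implementation: the `Topic` class, the `build_system_prompt` function, and the example questions are all hypothetical, and the wording is not taken from ACCT‑AD.

```python
from dataclasses import dataclass, field


@dataclass
class Topic:
    """One diagnostic topic: an open-ended opener plus conditional probes."""
    name: str
    opener: str
    # Each probe is (condition, question). The condition is plain text that
    # the LLM is expected to interpret; nothing here is evaluated in code.
    probes: list[tuple[str, str]] = field(default_factory=list)


def build_system_prompt(topics: list[Topic]) -> str:
    """Render the protocol as numbered instructions for a system prompt."""
    lines = [
        "You are conducting a semi-structured interview.",
        "Cover every topic in order. Ask the opener first, then ask only the",
        "follow-ups whose condition matches what the participant said.",
        "",
    ]
    for i, topic in enumerate(topics, start=1):
        lines.append(f"Topic {i}: {topic.name}")
        lines.append(f"  Opener: {topic.opener}")
        for condition, question in topic.probes:
            lines.append(f"  If {condition}: {question}")
    return "\n".join(lines)


# Hypothetical example topic (illustrative wording only).
memory = Topic(
    name="Memory difficulties",
    opener="Tell me about any changes in your memory over the past couple of years.",
    probes=[
        ("the participant reports forgetting recent events",
         "Can you describe a recent example?"),
        ("the participant reports no change",
         "Has anyone close to you noticed a change?"),
    ],
)

prompt = build_system_prompt([memory])
print(prompt)
```

Keeping the protocol as data rather than hand-written prompt text would make it easier to check that every topic is present, which is one plausible way to preserve clinical coverage while leaving the branching decisions to the LLM.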

Interaction design was iteratively refined to accommodate older adults with cognitive impairment. Early pilots that used predominantly yes/no questions yielded short, uninformative answers; the design was therefore shifted to begin each topic with an open‑ended prompt, followed by targeted follow‑ups. Supportive scaffolding—such as concrete examples (“in the past couple of years…”) and gentle re‑phrasing when participants struggled—was added to reduce cognitive load. Turn‑taking latency was calibrated to 1–2 seconds, mimicking a human interviewer and preventing abrupt interruptions. Multi‑party scenarios (patient plus informant) were handled by directing speech primarily to the patient while allowing the informant to assist when needed.
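The summary does not say how the 1–2 second turn‑taking latency was implemented. One common approach, sketched here purely as an assumption, is to wait until the speaker has been silent for a fixed interval before the agent replies. The per‑frame voice‑activity flags, the 100 ms frame size, and the function names are hypothetical.

```python
def trailing_silence_ms(voiced: list[bool], frame_ms: int) -> int:
    """Milliseconds of continuous silence at the end of a frame sequence."""
    silent_frames = 0
    for is_voiced in reversed(voiced):
        if is_voiced:
            break
        silent_frames += 1
    return silent_frames * frame_ms


def should_respond(voiced: list[bool], frame_ms: int = 100, wait_ms: int = 1500) -> bool:
    """True once the participant has spoken and then stayed silent for wait_ms."""
    has_spoken = any(voiced)
    return has_spoken and trailing_silence_ms(voiced, frame_ms) >= wait_ms


# Half a second of speech followed by 1.5 s of silence: the agent may reply.
print(should_respond([True] * 5 + [False] * 15))
# Only 0.5 s of silence so far: keep waiting rather than interrupt.
print(should_respond([True] * 5 + [False] * 5))
```

A longer `wait_ms` reduces the chance of cutting off a participant who pauses mid‑thought, at the cost of a slower‑feeling conversation; the 1–2 second range reported above sits between those extremes.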

A within‑subject study enrolled 30 older adults with suspected cognitive impairment and their informants. Each dyad completed two interviews: one led by the conversational agent and one conducted by a blinded dementia specialist. The authors collected audio recordings, real‑time transcripts, and post‑interview surveys via REDCap. Evaluation metrics included (1) conversation analysis (utterance length, pause frequency, interruptions), (2) user‑experience surveys (friendliness, clarity, stress), and (3) symptom‑level agreement between the agent and the specialist, quantified with Cohen’s kappa.
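For readers unfamiliar with the agreement statistic, Cohen’s kappa corrects raw agreement for the agreement expected by chance: kappa = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e is the chance agreement implied by each rater’s label frequencies. The sketch below computes it for one symptom coded present (1) or absent (0); the data are made up for illustration and are not from the study.

```python
def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    labels = set(rater_a) | set(rater_b)
    # Observed agreement: fraction of items given the same label by both raters.
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal rates, summed over labels.
    p_e = sum((rater_a.count(l) / n) * (rater_b.count(l) / n) for l in labels)
    if p_e == 1.0:
        # Both raters used one identical label throughout; kappa is undefined,
        # and this sketch treats that case as perfect agreement.
        return 1.0
    return (p_o - p_e) / (1 - p_e)


# Made-up labels for ten participants (1 = symptom reported, 0 = not reported).
agent = [1, 1, 0, 0, 1, 0, 1, 0, 1, 1]
specialist = [1, 1, 0, 0, 1, 0, 0, 0, 1, 0]

kappa = cohens_kappa(agent, specialist)
print(round(kappa, 3))  # 0.615
```

In this toy example the raters agree on 8 of 10 items (p_o = 0.8), but chance alone would produce p_e = 0.48, so kappa is about 0.62, noticeably lower than the raw agreement.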

Results showed high user satisfaction (average 4.3/5), with particular praise for the agent’s patience and systematic questioning. Conversation analysis indicated that utterance lengths in the agent’s sessions were comparable to those in the specialist interviews, with fewer interruptions (0.8 vs. 1.2 per ten minutes). Symptom agreement was strongest for core domains such as memory, language, and personality changes (kappa ≈ 0.71) and lower for less prominent items such as motor changes (kappa ≈ 0.45). Overall, the agent captured clinically relevant information at a level that aligns closely with expert elicitation.

The authors acknowledge several limitations. The study was conducted in a controlled, in‑person setting rather than the intended remote phone deployment, leaving real‑world feasibility untested. The sample size (n = 30) limits statistical power and generalizability, especially across diverse racial, ethnic, and socioeconomic groups. Moreover, the LLM’s “black‑box” nature raises concerns about traceability of errors and regulatory acceptance; the paper calls for additional validation, transparent auditing mechanisms, and human‑in‑the‑loop safeguards before clinical adoption.

In conclusion, this work demonstrates that a well‑designed LLM‑driven conversational agent can systematically gather rich, diagnostically relevant narratives from older adults and their caregivers, achieving a degree of symptom capture comparable to specialist interviews. The study highlights four key design levers (open‑ended questioning, supportive scaffolding, calibrated turn‑taking, and multi‑party awareness) that are essential for engaging cognitively impaired users. Future research should pursue large‑scale, multi‑site trials, evaluate remote deployment on telephone or mobile platforms, integrate privacy‑preserving data pipelines with electronic health records, and develop robust validation frameworks to ensure safety, equity, and clinical utility.
