Exploring the Use of VLMs for Navigation Assistance for People with Blindness and Low Vision

This paper investigates the potential of vision-language models (VLMs) to assist people with blindness and low vision (pBLV) in navigation tasks. We evaluate state-of-the-art closed-source models, including GPT-4V, GPT-4o, Gemini-1.5-Pro, and Claude-…

Authors: Yu Li, Yuchen Zheng, Giles Hamilton-Fletcher

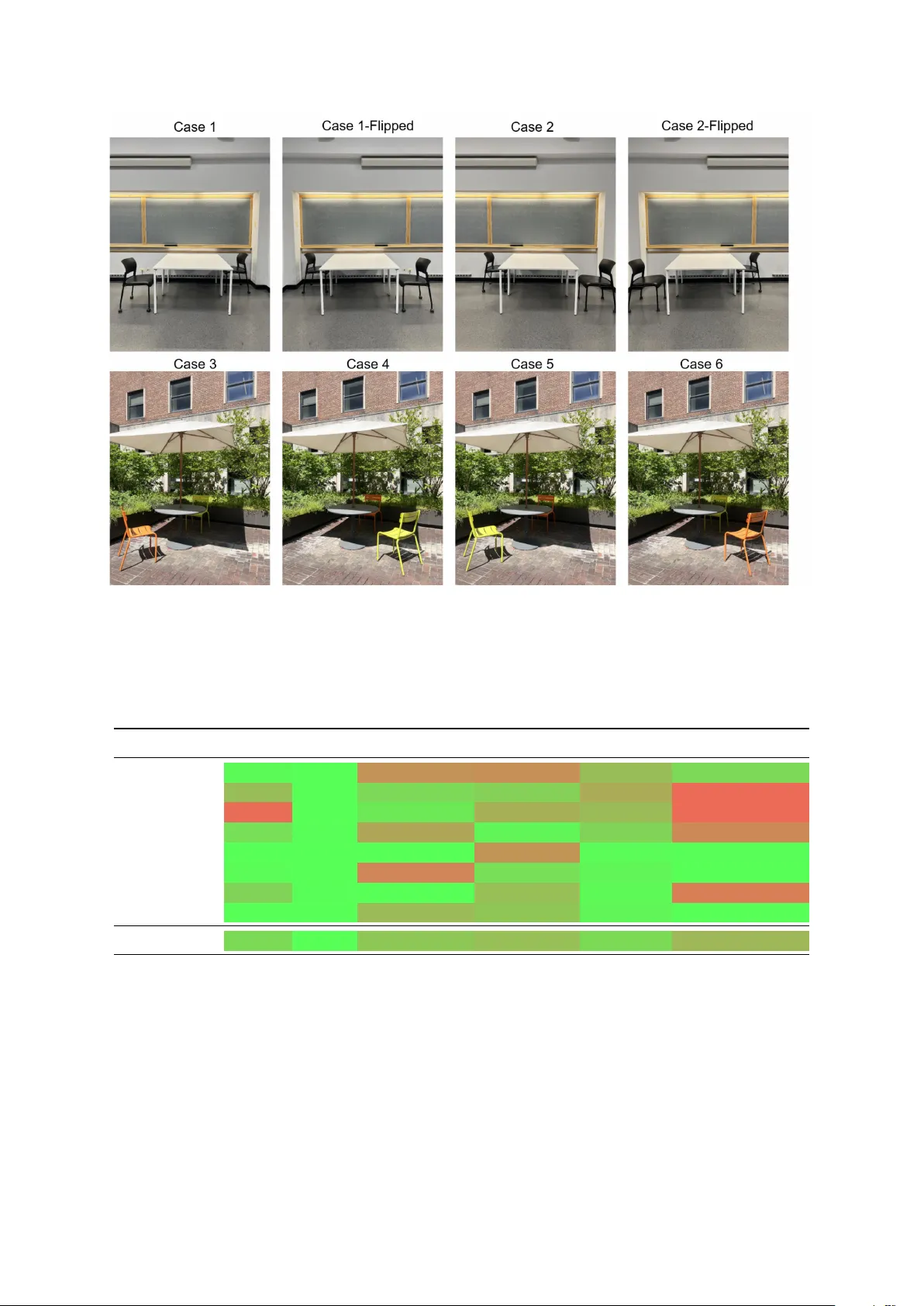

Exploring the Use of VLMs f or Na vigation Assistance f or P eople with Blindness and Low V ision Y u Li † , Y uchen Zheng † , Giles Hamilton-Fletcher ‡ , Marco Mezza villa ⋄ , Y ao W ang ‡ , Sundeep Rangan ‡ , Maurizio Porfiri ‡ , Zhou Y u † , John-Ross Rizzo ‡ † Columbia Uni versity ‡ Ne w Y ork Uni versity ⋄ Politecnico di Milano Abstract This paper in vestigates the potential of vision- language models (VLMs) to assist people with blindness and low vision (pBL V) in nav- igation tasks. W e e valuate state-of-the-art closed-source models, including GPT -4V , GPT - 4o, Gemini-1.5-Pro, and Claude-3.5-Sonnet, alongside open-source models, such as Llav a- v1.6-mistral and Llav a-onevision-qwen, to an- alyze their capabilities in foundational visual skills: counting ambient obstacles, relativ e spa- tial reasoning, and common-sense wayfinding- pertinent scene understanding. W e further as- sess their performance in na vigation scenar - ios, using pBL V -specific prompts designed to simulate real-world assistance tasks. Our find- ings rev eal notable performance disparities be- tween these models: GPT -4o consistently out- performs others across all tasks, particularly in spatial reasoning and scene understanding. In contrast, open-source models struggle with nuanced reasoning and adaptability in com- plex environments. Common challenges in- clude dif ficulties in accurately counting objects in cluttered settings, biases in spatial reason- ing, and a tendency to prioritize object details ov er spatial feedback, limiting their usability for pBL V in navigation tasks. Despite these limitations, VLMs sho w promise for wayfind- ing assistance when better aligned with human feedback and equipped with impro ved spatial reasoning. This research provides actionable insights into the strengths and limitations of cur- rent VLMs, guiding de velopers on effecti vely integrating VLMs into assistiv e technologies while addressing ke y limitations for enhanced usability . 1 Introduction Navig ating the world presents significant chal- lenges for people with blindness and low vision (pBL V). According to the W orld Health Or gani- zation (WHO), approximately 285 million people worldwide are visually impaired, with 39 million li ving with complete blindness ( WHO , 2013 ; Pas- colini and Mariotti , 2012 ). This number is expected to increase significantly in coming decades. V i- sual impairment profoundly impacts daily life, es- pecially in the area of mobility . pBL V often en- counter dif ficulties in moving through complex en- vironments safely and efficiently , which limits their independence and ability to participate in myriad acti vities. While pre vious assistive technologies for pBL V , such as mobile applications ( Jabnoun et al. , 2020 ; Nayak and Chandrakala , 2020 ; Gint- ner et al. , 2017 ) and wearable devices ( Chen et al. , 2021 ; Kaiser and Lawo , 2012 ; Zare et al. , 2022 ), of fer essential support, they are often inadequate in providing comprehensi ve navig ation assistance in dynamic and unfamiliar settings. The develop- ment of vision-language models (VLMs) ( Du et al. , 2022 ; Zhang et al. , 2023 ; Bordes et al. , 2024 ) holds significant promise in addressing these limitations and could enhance the quality of life for pBL V . Recent advancements in large language mod- els (LLMs), such as GPT -3 ( Brown et al. , 2020 ) and GPT -4 ( et al. , 2024 ), ha ve significantly en- hanced the capabilities of natural language pro- cessing tasks, including text generation ( Li et al. , 2024 ), machine translation ( Haddo w et al. , 2022 ), and summarization ( Zhang et al. , 2024 ). Building on the success of LLMs, pre-trained VLMs, such as CLIP ( Radford et al. , 2021 ), LLaV A ( Liu et al. , 2023 ), and GPT -4 with vision (GPT -4V) ( et al. , 2024 ; OpenAI , 2023 ; Y ang et al. , 2023 ), ha ve sho wn potential in addressing various complex computer vision tasks, from visual question an- swering ( Bazi et al. , 2023 ) to image captioning ( Hu et al. , 2022 ). These models are designed to under- stand images in context, enabling them to bridge the gap between visual information and natural language description by interpreting scenes holisti- cally , such as recognizing a city park or a birthday party , rather than merely identifying objects. VLMs hav e the potential to serve as strong foun- dations for de veloping dialogue systems explicitly designed to facilitate navigation tasks for pBL V . These models appear to show strong spatial under - standing capabilities, but their actual robustness for high-stakes assisti ve tasks is lar gely un verified. Recent studies hav e in vestig ated the application of VLMs in na vigation tasks, highlighting their abil- ity to comprehend scenes, respond to navigation- related questions, and pro vide detailed descriptions of visual surroundings ( W ang et al. , 2024a ; Hi- rose et al. , 2024 ; Liu et al. , 2024b ; Azzino et al. , 2024a ). For e xample, prior research has sho wn that VLMs can effecti vely describe en vironments to help mobile agents navigate unf amiliar spaces ( Liu et al. , 2024c ). Another line of research has fo- cused on using VLMs to generate grounded in- structions for v arious scenarios, such as searching through kitchens or navigating outdoor settings for pBL V ( Merchant et al. , 2024 ). Howe ver , before these models can be safely deployed, their funda- mental capabilities and, critically , their reliability in high-stakes na vigation tasks must be rigorously e valuated. Ef forts have been made to ev aluate the perfor- mance of VLMs. For instance, e xisting benchmark- ing datasets like MMMU ( Y ue et al. , 2024a , b ) are designed to test models on their knowledge and reasoning abilities. Further, some studies ha ve de- veloped datasets specifically aimed at navigation tasks ( Krantz et al. , 2020 ; Song et al. , 2024 ; Li et al. , 2023 ; Zhu et al. , 2021 ), assessing VLMs’ ca- pacity to understand distance, directional cues, and en vironmental features that are crucial for mobility . Ho wev er , man y of these datasets and benchmarking ef forts are unrealistic or not e xplicitly tailored for navig ation tasks in volving pBL V . Although VLMs hav e shown considerable capability in everyday visual-language tasks, their overall ef fectiveness in navig ation, such as scene understanding, distance comprehension, and route planning for pBL V , still requires thorough ev aluation. This highlights the necessity for a realistic and systematic VLMs as- sessment to better understand their potential and limitations in assisting with wayfinding for pBL V . In particular, there is a critical need to ev aluate the reliability and consistenc y of these models, as e ven rare failures can be dangerous in a high-stakes assisti ve application. This study provides such a systematic e v aluation focused on model reliability . Rather than aiming for a lar ge-scale, ‘in-the-wild’ dataset, where fail- ures can be attributed to many confounding vari- ables, we present a focused, controlled study to benchmark fundamental VLM capabilities and pin- point specific failure modes. W e de veloped a ne w , meticulously curated dataset to create controlled, repeatable testbeds. Our methodology is designed to isolate foundational skills (such as object count- ing, spatial reasoning, and instruction generation) and, most importantly , to rigorously test model consistency by querying each scenario 100 times. This deep, repeated-measures approach allo ws us to uncov er a critical finding: many state-of-the-art VLMs exhibit significant brittleness and inconsis- tency , where even minor , controlled changes in a scene can lead to drastically different and incorrect outputs. Our work thus provides a solid ‘first step’ and valuable insights into the practical reliability of current VLMs for na vigation assistance, identi- fying key areas for improv ement before real-world deployment. 2 Objectives This study aims to e valuate the capabilities of cur- rent state-of-the-art VLMs, in performing tasks essential for navigation. Our primary focus is as- sessing VLMs abilities in fundamental tasks es- sential for wayfinding: counting ambient obsta- cles, r elative spatial r easoning, and understanding scenes with common-sense wayfinding r elevance. . Through this systematic and controlled e valuation, we seek to highlight both the strengths and criti- cal limitations of VLMs, which are foundational to handling real-world navigation challenges for pBL V . The guiding questions for our research are: 1. Do VLMs possess the skills to accurately count objects, understand spatial relationships, and apply common-sense reasoning in na viga- tion tasks? 2. Ho w reliable and consistent are VLMs when processing controlled variations of the same scene? 3. What are the strengths and limitations of VLMs in navigation tasks, and ho w can these insights guide future improv ements? W e aim to provide a comprehensiv e understanding of the current state of VLMs in aiding navig ation for pBL V . The findings from this study are expected to offer valuable insights for future research and de velopment, benefiting stakeholders such as VLM de velopers and providers of assistive-technology services. 3 Method In this study , we explore the role of VLMs in assist- ing pBL V during navigation, specifically focusing on electronic travel aids (ET As). ET As are tech- nologies designed to aid local navigation by detect- ing obstacles, identifying landmarks, and enhanc- ing spatial awareness. Unlike electronic orienta- tion aids (EO As), which focus on large-scale route planning (such as GPS-based na vigation), ET As en- able users to make real-time decisions and navigate safely within their immediate surroundings. T o assess the feasibility of VLMs for ET A-based navig ation, we ev aluate their performance in three fundamental navigation skills: counting ambient obstacles, relati ve spatial reasoning, and common- sense wayfinding-pertinent scene understanding. Counting obstacles is crucial for detecting potential hazards and understanding en vironmental complex- ity . Spatial reasoning enables models to interpret object relationships and determine relativ e posi- tioning, which is essential for guiding users around obstacles and to ward safe paths. Common-sense wayfinding-pertinent scene understanding allo ws models to infer contextual details, such as whether a seat is occupied or if a pathway is accessible, aiding in ef fecti ve decision-making. By systemat- ically analyzing these core competencies, we aim to determine whether VLMs can reliably support real-time, local navig ation assistance for pBL V . 3.1 Data Collection W e created a purpose-built dataset 1 to test v arious VLMs under controlled conditions. The full dataset contains 150 unique images featuring diverse in- door and outdoor scenes with chairs, benches, and sofas serving as the primary objects. These scenes include open outdoor spaces as well as complex indoor en vironments. T o ensure stability during the experiments and maintain control ov er the vari- ables, we used a fixed-camera position while sys- tematically modifying specific attributes within the scenes. These adjustments included variations in the number and style of chairs, the placement of obstacles along the path at various stances, and sub- tle background details. By isolating and precisely adjusting these variables while keeping other ele- ments constant, we achieved a more comprehensi ve and accurate ev aluation of the models’ capabilities. W e have publicly released this dataset to adv ance research in navig ation technologies, particularly 1 https://github .com/rizzojr01/vlm_navigation_e val.git for individuals with pBL V . This dataset will pro- vide essential support for e valuating and impro ving VLMs’ spatial understanding and path-planning capabilities. It not only lays a solid foundation for subsequent studies b ut also paves the way for practical applications in assisti ve technologies, con- tributing to the dev elopment of more robust and ef ficient navigation solutions. For the e xperiments in this paper , we selected a focused subset of these scenes, which are detailed in the following sec- tions. T o rigorously ev aluate model reliability and consistency , each of these unique scene-prompt combinations was queried 100 times, for a total of 3,400 e valuations. 3.2 Fundamental Capabilities T o ev aluate the core capabilities of VLMs in na viga- tion tasks, we focused on three sub-tasks: counting ambient obstacles, relati ve spatial reasoning, and common-sense wayfinding-pertinent scene under - standing. These sub-tasks are integral to the ov erall ef fectiv eness of a navigation system designed for pBL V . By systematically assessing the performance of VLMs in these areas, we aim to identify their strengths and weaknesses in providing reliable na v- igation assistance. The following sections offer detailed discussions on the ev aluation methods and findings for each sub-task. 3.2.1 Counting Ambient Obstacles W e collected a series of images from scenes fea- turing varying numbers of chairs, ranging from 1 to 6, to assess the counting abilities of VLMs. Each scene had dif ferent layouts and chair counts to represent a spectrum of situations, from simple to complex. W e ask ed various VLMs to count the number of chairs in each scene. W e recorded their accuracy to determine if their outputs accurately reflected the actual number of chairs present in the images. This testing process allo wed us to system- atically assess the ability of VLMs to perceiv e and quantify en vironmental elements, a critical skill for ET As that must detect obstacles and interpret scene complexity to support real-time navigation for pBL V . This sub-task used a set of 8 unique scenes. 3.2.2 Relative Spatial Reasoning Our e valuation of relativ e spatial reasoning e xam- ines how variations in object positioning and ap- pearance impact the models’ ability to pro vide ac- curate spatial feedback. The test consists of images featuring two chairs placed at different distances from the viewpoint, with some scenarios presenting chairs of the same color and others incorporating dif ferent colors. The models are prompted with the question: “Which chair is closer to the view- point?” This setup ev aluates whether VLMs can assess spatial relationships independently of ob- ject appearance or color dif ferences. F or ET As, precise spatial reasoning is crucial to ensure that navig ation guidance is based on object positions rather than visual attributes. By incorporating a range of test cases, we analyze the models’ ability to consistently and accurately interpret spatial re- lationships, providing insights into their reliability for real-world navigation support. This sub-task used a set of 8 unique scenes. 3.2.3 Common-sense W ayfinding-Pertinent Scene Understanding Common-sense reasoning enables systems to in- terpret contextual information in ways consistent with human understanding, which is crucial for pro- viding accurate and meaningful guidance across myriad scenes. For e xample, determining whether a seat is v acant requires recognizing the presence of a chair and identifying conte xtual cues indicat- ing occupancy , such as items like a coat placed on the chair . T o e v aluate the common-sense reasoning capabilities of VLMs, we designed tests in volving v arious scenarios where seat occupancy was im- plicitly represented by personal items. The models were prompted with the question: “ Are there any v acant seats in this image?” T o clarify the defini- tion of a vacant seat, we provided the following hint: “ A ‘vacant seat’ refers to a seating option (e.g., chair , bench, or couch) that is unoccupied by any person and does not have any personal items placed on it or on the corresponding desk, table, or surface. Answer yes or no before providing details. ” This setup tested the models’ ability to detect objects on chairs and infer seat occupancy across div erse scenes based on contextual clues. By systematically varying the scenarios and analyzing the responses, we assessed the strengths and lim- itations of the models’ common-sense reasoning capabilities, offering insights into their practical utility for scene understanding. This sub-task used a set of 5 unique scenes. 3.3 Evaluating VLMs f or the Na vigation T ask T o assess the effecti veness of VLMs in na vigation tasks, we conducted a structured e v aluation focus- ing on their ability to support ET As. Our ev alu- ation examines how well these models assist in identifying destinations, planning routes, and rec- ognizing obstacles, which are k ey components of real-world na vigation assistance for pBL V . The fol- lo wing steps outline our approach to systematically measuring model performance in these tasks. Scenario Selection T o ev aluate the models’ per- formance, we designed a di verse set of na vigation scenarios with varying levels of complexity , cat- egorized based on the number of obstacles, spa- tial arrangement of tar get objects, and the le vel of ambiguity in the en vironment. Simple scenarios featured clear , unobstructed paths with minimal distractions, while moderate scenarios introduced partial obstructions or closely placed objects requir- ing spatial dif ferentiation. Challenging scenarios in volved cluttered en vironments, multiple potential target objects, or obstacles requiring precise route planning. T o systematically analyze factors influ- encing na vigation accuracy , we maintained a con- sistent baseline scenario and introduced controlled v ariations in obstacles, distances, and tar get objects. This approach allowed us to assess the adaptability and effecti veness of VLMs under dif ferent con- ditions, using a standardized system prompt and navig ation queries with increasing complexity . Metrics Responses were not gi ven a single “cor - rect” score. Instead, they were independently rated on three criteria: • Destination: Did the model accurately identify and guide the user to the correct destination? • Route: Did the model correctly provide an optimal route? • Obstacles: Did the model correctly detect ob- stacles and warn the user to watch out for them? Human Evaluation The reliability of a model’ s navig ation ability was e v aluated by analyzing the VLMs’ outputs on each metric. T o ensure a com- prehensi ve assessment, we asked tw o graduate stu- dents from Columbia Uni versity to act as human annotators; both students had no direct in volv ement in the project so the y could label the outcomes in an unbiased manger strictly based on defined metrics. Annotators were provided with detailed instruc- tions and labeling guidelines to ensure consistency and accuracy across all results. 4 Results In this section, we present the results of our ev alua- tion of the navigation capabilities of various VLMs for pBL V , including the fundamental capabilities (counting ambient obstacles, relati ve spatial reason- ing, and common-sense w ayfinding-pertinent scene understanding) and the holistic navigation tasks. T o gain a broader perspecti ve, we utilize a range of closed-source models (GPT -4V , GPT -4o, Gemini- 1.5 Pro, and Claude-3.5-sonnet) as well as open- source models (Llav a-v1.6-mistral-7B and Llav a- one vision-qwen2-7B) for comparison. A compara- ti ve analysis of these models’ performances on var - ious fundamental tasks can of fers unique insights into their strengths and limitations, helping to de- termine their suitability for wayfinding support. 4.1 Fundamental Capabilities Here, we present the results of e v aluating three fun- damental capabilities of v arious VLMs. T o ensure randomness and assess the default capabilities of each model in original form, we utilized default decoding strategies. Each model was tested 100 times per image, and we calculated the accuracies of the output results. 4.1.1 Counting Ambient Obstacles W e e valuate the counting capabilities of various VLMs using test images containing from one to six chairs. Accuracy is the proportion of the claimed number of chairs in the outputs that matched the ac- tual ground-truth v alues. W e also record the mean and variance of predictions to assess the stability of each model’ s performance. The test results are summarized in T able 1 . Among all the models, GPT -4o demonstrated the highest stability and accuracy . In low-density scenes with one, two, or three chairs, it achie ved 100% accuracy and maintained strong performance e ven in more comple x scenarios. Across all cases, from one chair to six chairs, its accuracy exceeded 77% , sho wcasing excellent rob ustness in handling simple and complex spatial distrib utions. Other models exhibited notable limitations, par - ticularly in high-density or une venly arranged scenes. While Gemini-1.5 Pro and GPT -4V per- form well in low-density scenarios, their perfor- mance deteriorates significantly as scene com- plexity increases. For instance, Gemini-1.5 Pro achie ves only 7% and 14% accuracy in t wo cases with three chairs and just 25% accuracy in the case with six chairs. The high v ariance in its predictions further indicates instability . Similarly , GPT -4V struggled in complex scenes, achieving 3% accu- racy in the scene with four chairs and only 29% in the case with six chairs. Open-source models such as Llav a-qwen and Lla v a-mistral regularly fail even in the simplest one-chair case, with accuracies of just 38% and 58% , respecti vely . Claude-3.5-sonnet performs flawlessly in scenes with uniformly distributed chairs, such as four or six chairs, achieving 100% accuracy with zero v ari- ance. Howe ver , its accuracy drops to 0% in sce- narios where chairs are unev enly distrib uted, such as three or fiv e chairs, with a chair positioned di- rectly in front or to the left. Notably , these extreme results (perfect performance or complete failure) were not influenced by the model’ s temperature parameter settings in our experiments. W e used the default temperature of the Claude API, which was set to 1. The temperature parameter in the API can be adjusted from 0 to infinitely large values, where 0 makes the model behav e deterministically , and higher temperatures introduce more variability in the responses. This observation suggests that the results are due to Claude-3.5-sonnet’ s inherent spatial reasoning ability rather than a parameter- related bias, highlighting specific blind spots that could hinder its applicability in navig ation tasks. 4.1.2 Relative Spatial Reasoning T o e valuate the spatial reasoning abilities of various VLMs, we conducted relati ve distance estimation tests using images containing two objects. The models were tasked to determine which object was closer . These images include objects of different colors and styles, with positions systematically v ar- ied. Each test was repeated 100 times, and the results are presented in T able 2 . Among all the models, GPT -4o consistently achie ved the highest accuracy across all test sce- narios, with scores close to or at 100% in most cases. This demonstrates its exceptional ability to adapt to dif ferent spatial configurations and lighting conditions. For instance, GPT -4o maintained per- fect accuracy (100%) in “Case 1, ” “Case 3, ” “Case 4, ” and “Case 6, ” and nearly perfect (99%) in the flipped v ersion of “Case 2. ” These results highlight its robustness for tasks requiring precise spatial reasoning. Other models exhibited biases in their results based on the relati ve positions of the chairs. Specif- ically , a strong positional bias was observed in Figure 1: Evaluation e xamples for the fundamental counting task, images feature one to six chairs, with varying arrangements for scenarios in volving three and four chairs. T able 1: Counting task results: Accuracy , mean, and v ariance of model predictions ov er 100 e v aluations per test image, ranging from 1 to 6 chairs. The mean represents the av erage number of chairs predicted by the models, and the variance indicates the consistenc y of these predictions. Acc., Mean, V ar . GPT -4V GPT -4o Gemini-1.5 Pro Llav a-mistral Llav a-qwen Claude-3.5-sonnet 1 Chair 99 1.00 0.01 100 1.00 0.00 99 0.99 0.01 38 1.60 0.26 58 1.44 0.31 100 1.00 0.00 2 Chairs 99 2.01 0.01 100 2.00 0.00 99 1.98 0.04 83 2.11 0.15 96 2.07 0.13 100 2.00 0.00 3 Chairs 60 3.41 0.26 100 3.00 0.00 7 2.02 0.16 56 2.56 0.31 57 2.67 0.32 0 2.00 0.00 3 Chairs-Alt 28 2.28 0.20 77 3.23 0.18 14 1.45 1.36 39 2.53 0.39 84 3.00 0.16 100 3.00 0.00 4 Chairs 3 3.03 0.03 98 4.02 0.02 45 2.22 4.84 70 3.74 0.42 100 4.00 0.00 100 4.00 0.00 4 Chairs-Alt 61 3.67 0.28 99 4.01 0.01 32 1.28 3.52 61 3.57 0.33 100 4.00 0.00 100 4.00 0.00 5 Chairs 83 4.95 0.17 82 4.64 1.99 36 3.30 6.70 5 3.62 0.56 29 5.67 0.26 0 4.00 0.00 6 Chairs 29 5.20 0.40 92 6.12 0.19 25 1.84 7.35 0 3.85 0.43 80 6.36 0.56 100 6.00 0.00 Overall 58 - - 94 - - 45 - - 44 - - 76 - - 75 - - certain models. For example, GPT -4V , Llav a- qwen, and Claude-3.5-Sonnet achie ved signifi- cantly higher accuracy when the chairs were of the same style and the closer chair was on the left oddly . For cases where the left chair was closer (Case 1 and Case 2 flipped), these models achiev ed accura- cies of 97% and 81% for GPT -4V , 61% and 74% for Llav a-Qwen, and 77% and 30% for Claude-3.5- Sonnet. Con versely , their performance declined when the closer chair was on the right, as seen in Case 1 flipped and Case 2, with accuracies of 61% and 13% for GPT -4V , 50% and 60% for Lla va- Qwen, and 12% for both cases for Claude-3.5- Sonnet. On the other hand, Gemini-1.5 Pro sho wed a bias towards percei ving the right chair as being closer . It achiev ed significantly higher accuracy in scenarios where the right chair was indeed closer , with accuracy rates of 72% for Case 1 flipped and 53% for Case 2. In contrast, when the left chair was closer , the model’ s accuracy dropped to 36% for Case 1 and 47% for Case 2 flipped. T o better understand the discrepancies in the outputs of these models, we analyzed the distrib u- tion of keywords used in their responses, as illus- Figure 2: Ev aluation examples for the fundamental relativ e spatial reasoning task: Images feature chairs at varying distances from the viewpoint, with both different and identical chair styles. Cases 1 and 2 are also flipped to in vestigate potential biases in model predictions. T able 2: Relative spatial reasoning task results: Accuracy percentages from 100 ev aluations for each test case. The table highlights model performance across different scenarios from lo w accuracy (red) to high accuracy (green). Accuracy (%) GPT -4V GPT -4o Gemini-1.5 Pro Lla va-mistral Llava-qwen Claude-3.5-sonnet Case 1 97 100 36 34 61 77 Case 1-Flipped 61 100 77 72 50 12 Case 2 13 100 87 53 60 12 Case 2-Flipped 81 99 47 94 74 30 Case 3 100 100 100 37 98 99 Case 4 98 100 28 81 94 100 Case 5 74 99 100 62 96 24 Case 6 100 100 60 66 94 100 Overall 78 100 67 62 78 57 trated in Figure 3 . W e first e valuated whether the models provided correct spatial reasoning answers and then, within the correct responses, examined whether the answers were optimal for pBL V appli- cations. Optimal answers use spatial terms (e.g., “left” and “right”), which are crucial for ef fecti ve navig ation assistance. Suboptimal answers, while still correct in reasoning, rely on color-based de- scriptors (e.g., “orange” and “yello w”), which are less useful for pBL V . GPT -4o demonstrates supe- rior performance, consistently providing correct responses and frequently emplo ying spatial terms to describe relati ve positions. Howe ver , it tends to rely on color-based w ords in its answers when the objects are of dif ferent color . In contrast, other models, such as GPT -4V and Claude-3.5-Sonnet, exhibit a bias to wards answer- ing “left” ov er “right, ” with significant performance gaps between cases inv olving left and right posi- tioning. Similarly , Gemini-1.5 Pro shows a bias Figure 3: Spatial reasoning keyw ord analysis: The x-axis represents the correct answer , while the bars indicate the ratio of responses e xtracted from model outputs. Dark green bars denote optimal answers for pBL V applications, using spatial terms (e.g., “left”, “right”) to describe positional relationships. Light green bars represent suboptimal answers that rely on color-based descriptions (e.g., “orange”, “yello w”) but demonstrate correct spatial reasoning. to wards answering “right” o ver “left. ” Regarding accuracy , all models except GPT -4o struggle to con- sistently provide correct spatial reasoning answers, with a significant portion of their responses relying on color-based cues. These findings underscore the need to reduce spatial biases in existing VLMs and align them more closely with pBL V -specific requirements, emphasizing spatial descriptors over less practical visual attributes. In summary , GPT -4o prov ed to be the most re- liable model for relativ e spatial reasoning tasks, demonstrating high accuracy and consistency across di verse scenarios. In contrast, other models underperformed in specific conditions and exhib- ited significant instability in complex setups. W e attribute this to biases in their training data and poor alignment with task-specific instructions, making them less suitable for na vigation tasks designed for pBL V . 4.1.3 Common-sense W ayfinding-Pertinent Scene Understanding T o assess the common-sense reasoning abilities of VLMs, we dev eloped a series of test cases that fea- tured scenes with varying le vels of complexity . W e started with a v acant chair as a baseline and then in- troduced v arious objects, such as coats, backpacks, and laptops, arranged in different configurations to simulate occupied chairs. Each model was tested 100 times to determine whether a seat was unoccu- pied or occupied, and the results of their accurac y are summarized in T able 3 . GPT -4o demonstrated the highest overall perfor - mance, achieving 100% accuracy in most scenarios. This included correctly identifying a vacant chair and cases where a coat w as placed on or hung from Figure 4: Evaluation examples for the fundamental commonsense reasoning task: Images show chairs that are either occupied or av ailable, with various objects such as coats hanging on the chair or laptops placed on surfaces to indicate occupancy . T able 3: Common-sense task results: Accuracy percentages from 100 ev aluations for each test case. The table highlights model performance across different scenarios from lo w accuracy (red) to high accuracy (green). Accuracy (%) GPT -4V GPT -4o Gemini-1.5 Pro Lla va-mistral Lla va-qwen Claude-3.5-sonnet Chair 100 100 72 100 100 100 Coat 49 100 100 1 2 0 Coat-Alt 29 100 100 3 0 0 Laptop-Backpack 6 95 42 4 1 0 Laptop-Backpack-Alt 18 7 0 2 6 0 Overall 40 80 63 22 22 20 the chair . Even in more complex configurations, such as the “Laptop-Backpack” scenario, GPT -4o maintained a 95% accuracy rate, showcasing its strong ability to interpret implicit cues and reason about object occupancy . Ho wev er , it faces chal- lenges in the “Laptop-Backpack-Alt” case ( 7% ac- curacy), where the backpack hangs of f of the chair and the laptop is arranged differently . This high- lights its limitation in handling perspective v aria- tions within complex setups. Gemini-1.5 Pro also performed well in more straightforward scenarios, achieving 100% accu- racy in the coat tasks. Howe ver , its performance dropped significantly in more comple x scenar - ios, such as the “Laptop-Backpack” and “Laptop- Backpack-Alt” cases, where it achie ved only 42% accuracy . Notably , Gemini was the only model that failed to identify the vacant chair case per- fectly ( 72% accuracy), highlighting a gap in its basic understanding of unoccupied seats. In con- trast, GPT -4V , the Llav a series models, and Claude- 3.5-Sonnet consistently performed poorly across all tested cases inv olving occupied chairs. Their accuracy was particularly lo w in tasks requiring im- plicit state recognition or reasoning about complex object arrangements. While Llav a and Claude-3.5- Sonnet succeeded in identifying a vacant chair , the y failed in nearly all other cases, underscoring their limitations in handling complex common-sense rea- soning. In summary , GPT -4o is the best model for common-sense reasoning tasks, although it still needs improvement for complex common-sense reasoning. Gemini-1.5 Pro showed strong perfor- mance in more straightforward tasks b ut had mod- erate accuracy in more complex cases. By contrast, GPT -4V , the Llav a series, and Claude-3.5-Sonnet faced significant challenges with common-sense reasoning, highlighting major limitations in their ability to understand implicit and contextual cues. 4.2 Navigation T ask T esting W e ev aluated the model’ s performance using a specifically designed system prompt and assessed its ability to navigate to a vacant seat under v ari- ous user queries. This section includes the results of our systematic testing, and an analysis of the model’ s outputs based on an annotation scheme we de veloped to categorize potential errors. 4.2.1 Navigation T ask T esting Results W e ev aluated the performance of VLMs on navi- gation tasks by assessing their ability to iterativ ely guide a user to ward a v acant chair , providing step- Figure 5: Ev aluation examples for na vigation tasks: Scenarios include obstacles placed along the path to the chair or objects placed on the chair and corresponding tabletop. Obstacle and object types, such as backpacks, coats, and boxes, simulate comple x real-life scenarios to assess the model’ s ability to identify empty chairs, plan reasonable navigation paths, and a void obstacles effecti vely . T able 4: Navigation task results: Accuracy of na vigation tasks, including destination identification, route plan- ning, and obstacle detection, as judged by assessment annotators. Accuracy (%) Destination Route Obstacles GPT -4o 81 92 84 Gemini-1.5 Pro 30 13 64 Llav a-mistral 66 67 74 Llav a-qwen 40 38 62 Claude-3.5-sonnet 85 87 82 by-step instructions under various conditions. Five models were tested across 13 cases, including class- room and office settings. The ev aluation focused on three criteria: the ability to identify the destination, determine the route, and recognize obstacles. Each test case included three user queries, as outlined in Section 3.3 , with each query e xecuted 10 times, resulting in 390 outputs per model. T o conduct the human ev aluation, we had two graduate student annotators from Columbia Uni versity to label the outputs based on the three metrics defined in Sec- tion 3.3 . The annotator s recei ved training through a pilot study and achie ved a Cohen’ s kappa value of 0.83 across all annotations, indicating substantial agreement. As sho wn in T able 4 , the models demonstrate v arying lev els of ef fectiv eness across the three met- rics. GPT -4o and Claude-3.5-Sonnet exhibit robust accuracy , with both models scoring o ver 80% in all metrics. GPT -4o particularly e xcels in route accu- racy (92%) and obstacle recognition (84%), while Claude-3.5-Sonnet performs better in destination identification (85%). Llav a-Mistral outperforms Llav a-Qwen across all three metrics, but neither model performs well on the navigation task. By contrast, Gemini-1.5 Pro scores only 13% in route planning, indicating a significant limitation in its ability to plan routes for navigation tasks effec- ti vely . In summary , GPT -4o and Claude-3.5-Sonnet are the most reliable models for high-stakes naviga- tion tasks, providing consistent and accurate guid- ance e ven in comple x scenarios. The Lla v a models, while less capable overall, may be better suited to basic navigation tasks with lower accuracy re- quirements. Gemini-1.5 Pro, howe ver , appears un- suitable for navig ation tasks due to its poor per- formance. In our supplementary materials, we explore additional factors that may have affected VLM performance, including the spatial resolu- tion of each input image used by each VLM (Ap- pendix A.1 ), which could obscure critical visual details at both low and high resolutions, and the in- fluence of system-prompt design on how models in- terpret user queries and objectiv es (Appendix A.2 ). Additionally , we provide a detailed case study in Appendix A.3 to illustrate ho w VLMs handle nav- igation tasks. These analyses provide context for the observed results and offer insights into potential areas for further optimization. T able 5: Overall accuracy performance summary of models across the three fundamental tasks: counting, relativ e spatial reasoning, and commonsense reasoning. Overall Accuracy(%) Counting Spatial Commonsense GPT -4V 58 78 40 GPT -4o 94 100 80 Gemini-1.5 Pro 45 67 63 Llav a-mistral 44 62 22 Llav a-qwen 76 78 22 Claude-3.5-sonnet 75 57 20 5 Discussion 5.1 Summary of Findings This study provides an in-depth ev aluation of VLMs for navigation tasks tailored to pBL V , as- sessing their fundamental skills and performance in practical na vigation scenarios. As shown in T a- ble 5 , our results highlight notable differences in model capabilities, with GPT -4o consistently out- performing other models in counting, spatial rea- soning, and common-sense scene understanding. It demonstrates substantial accurac y and stability , particularly in high-density en vironments and com- plex spatial configurations. In contrast, GPT -4V , Gemini-1.5 Pro, and the Llav a series exhibit signif- icant limitations, particularly in handling une ven object distrib utions or implicit reasoning, re vealing gaps in their spatial reasoning and adaptability . In navig ation tasks, GPT -4o and Claude-3.5-Sonnet emerge as the most reliable models, excelling in destination identification, route planning, and ob- stacle recognition. Their performance suggests suitability for high-stakes assisti ve applications. Con versely , Gemini-1.5 Pro and the Llav a models struggle with route planning and obstacle detection, highlighting key weaknesses in their na vigation ca- pabilities. While GPT -4o sho ws strong potential for real-world deployment, further refinements are necessary , particularly in aligning responses with human expectations and reducing inconsistencies across di verse en vironments. Our findings reinforce the broader challenges in adapting VLMs for pBL V navigation assistance, particularly biases in spatial reasoning, inconsis- tencies in object identification, and limitations in scene understanding. While state-of-the-art models sho w promise, further adv ancements in model ro- bustness, image resolution processing, and system- prompt optimization are essential to enhance their reliability in real-world na vigation applications. 5.2 Applying VLMs to Realistic Scenarios A primary limitation of this study is the controlled, static nature of our dataset. This was a deliberate methodological choice, as our goal was to isolate fundamental VLM failure modes and rigorously test for consistency . Howe ver , this focused scope means our findings do not fully represent the com- plexity of real-world navigation, which inv olves dy- namic en vironments, variable lighting, motion blur , and unpredictable obstacles. Therefore, the perfor- mance and, more importantly , the model inconsis- tencies we observed in our controlled settings may not directly generalize. Significant future work is needed to bridge this gap and test whether these fundamental “brittleness” issues are amplified in more dynamic scenarios. Adapting VLMs for real-world navigation sce- narios also presents challenges beyond our exper - imental settings. Our findings highlight that ev en top-performing models are not fully reliable. For instance, while GPT -4o performed best o verall in our ev aluation, it was not immune to failures on tasks requiring precise spatial reasoning or implicit understanding. Our ke y finding is this ‘brittleness’, that ev en the most adv anced models can fail in seemingly simple, controlled scenarios. This is a critical issue for a high-stakes assistiv e application, as e ven a low failure rate is unacceptable. These dif ficulties highlight significant architectural and alignment issues that limit the broader applicability of these models. One important consideration for real-time assis- ti ve systems is response time. In practical applica- tions, even small delays can significantly impact usability , particularly in time-sensiti ve navigation scenarios. While open-source models deployed on dedicated servers can be optimized for lower la- tency , closed-source models like GPT -4 and Claude often rely on external APIs. This reliance intro- duces unpredictability , as response times can v ary based on factors such as server load, network la- tency , and access rate limitations. Such variability can present challenges in ensuring a seamless user experience, underscoring the importance of mini- mizing delays and ensuring consistent performance for real-world deployment of assistiv e technolo- gies. A critical challenge for current VLMs lies in their ability to maintain spatial coherence when pro- cessing input images of v arying resolutions. T ech- niques like AnyRes ( Liu et al. , 2024a ) enable mod- els to handle different image sizes, but they can inadvertently disrupt spatial relationships between objects, af fecting tasks that rely on precise spatial reasoning. Maintaining spatial inte grity is essential for comple x navigation tasks, highlighting the need for improv ed resolution-handling techniques. Another issue is the mismatch between model outputs and human feedback for navigation- specific tasks. While GPT -4o demonstrates im- prov ed alignment and performs well in interpreting and responding to user instructions, other models, such as Gemini-1.5 Pro and the Llav a series, of- ten struggle to meet human expectations in our navig ation task ev aluation. This discrepancy in- dicates that current VLMs may not be adequately trained for tasks that require precise image under- standing and interpretation based on user input. T echniques like reinforcement learning from hu- man feedback ( Ouyang et al. , 2022 ; W ang et al. , 2024c ) or direct preference optimization trans- ferred ( Rafailov et al. , 2024 ; W ang et al. , 2024b ) from LLMs have been shown to enhance model performance and adaptability in various applica- tions by aligning image understanding tasks with human feedback. While models like GPT -4o showcase prelimi- nary promise, our study underscores that adapt- ing VLMs to realistic scenarios requires address- ing these critical limitations. By refining architec- tural approaches to preserve spatial relationships and aligning models more ef fectiv ely with human expectations, these systems may begin to lay the groundwork for practical utility . Howe ver , our find- ings confirm that significant challenges in funda- mental reliability and alignment remain before the y can be considered safe or effecti ve for real-world deployment. 5.3 Application to Assistiv e T echnology The application of VLMs in assistiv e technologies for pBL V has gro wn significantly , with numerous works exploring their potential to enhance navi- gation, object detection, and scene understanding. Systems such as Be My Eyes 2 hav e incorporated VLMs to interpret still visual scenes from user- provided images, aiding users in tasks like object identification and obstacle a voidance. Foundational models like GPT -4V have been applied to vision- language planning tasks ( Hu et al. , 2023 ; W ake et al. , 2024 ), demonstrating potential for assisti ve 2 https://www .bemyeyes.com applications such as route planning and spatial rea- soning. Despite these advances, aligning model predictions with user intent remains a key chal- lenge. Established wearable assistiv e technologies hav e incorporated various AI-driven functionalities to support pBL V . De vices like the En vision Glasses 3 utilize AI to provide functionalities such as text recognition, object identification, and scene de- scription, of fering users auditory feedback to in- terpret their surroundings. Similarly , the OrCam MyEye ( Amore et al. , 2023 ), which has been com- mercially av ailable for man y years, is a compact de- vice that attaches to eye glasses, employing a com- bination of specialized AI-driven algorithms for tasks like te xt reading, face recognition, and prod- uct identification. While many of these features rely on task-specific AI technologies, scene descrip- tion capabilities often dra w on VLMs to generate richer contextual information, such as spatial rela- tionships and en vironmental details, which comple- ment other assistiv e functionalities. Research ini- tiati ves like 5G Edge V ision ( Azzino et al. , 2024b ) are further exploring the integration of VLMs with wearable de vices to provide real-time visual scene processing and na vigation assistance. By lev erag- ing 5G connecti vity , these systems aim to of fload the intensi ve computational required for VLMs, en- abling more efficient and responsiv e support for pBL V . This integration highlights the gro wing role of VLMs in supplementing existing assisti ve tech- nologies with enhanced en vironmental understand- ing. Collecti vely , these ef forts underscore the trans- formati ve potential of VLMs in assisti ve technolo- gies. Future directions should improv e model align- ment with user-specific goals, integrate multimodal features such as tactile and auditory feedback, and enhance real-time adaptability to di verse en viron- ments. By addressing these challenges, VLMs can unlock new opportunities to empo wer pBL V to nav- igate and interact with their surroundings. 6 Conclusion In summary , advanced VLMs ha ve demonstrated preliminary promise in supporting navigation tasks for pBL V , but our systematic ev aluation rev eals they still exhibit critical limitations, inconsisten- cies, and biases that restrict their utility in real- world scenarios. Our study highlights ke y dis- 3 https://www .letsenvision.com/glasses crepancies in model performance. While GPT - 4o emerged as a relativ ely strong performer , our findings also show that even this state-of-the-art model is not immune to ‘brittleness, ’ failing in controlled tasks that are foundational to naviga- tion. Other models, such as Gemini-1.5 Pro and the Llav a series, struggle more significantly in these areas. These findings provide actionable insights for assistiv e technology dev elopers, highlighting that current models are not yet reliable for deploy- ment in high-stakes applications. They also provide clear direction for VLM de velopers seeking to re- fine their models. Future advancements must focus on addressing the specific weaknesses identified in our study , such as improving fundamental spatial reasoning reliability , reducing output biases, and enhancing alignment with human feedback. By ad- dressing these foundational issues, researchers can mov e closer to creating truly reliable and effecti ve navig ation assistance systems tailored to the needs of pBL V . References F . Amore, V . Silvestri, M. Guidobaldi, M. Sulfaro, P . Piscopo, S. T urco, F . De Rossi, E. Rellini, S. F or- tini, S. Rizzo, F . Perna, L. Mastropasqua, V . Bosch, L. R. Oest-Shirai, M. A. O. Haddad, A. H. Higashi, R. H. Sato, Y . Pyatov a, M. Daibert-Nido, and S. N. Marko witz. 2023. Efficacy and patients’ satisfac- tion with the orcam myeye de vice among visually impaired people: A multicenter study . J ournal of Medical Systems , 47(1):11. T ommy Azzino, Marco Mezzavilla, Sundeep Rangan, Y ao W ang, and John-Ross Rizzo. 2024a. 5g edge vision: W earable assistive technology for people with blindness and low vision. In 2024 IEEE W ir e- less Communications and Networking Confer ence (WCNC) , pages 1–6. IEEE. T ommy Azzino, Marco Mezzavilla, Sundeep Rangan, Y ao W ang, and John-Ross Rizzo. 2024b. 5g edge vision: W earable assistive technology for people with blindness and low vision . In 2024 IEEE W ir e- less Communications and Networking Confer ence (WCNC) , page 1–6. IEEE. Y akoub Bazi, Mohamad Mahmoud Al Rahhal, Laila Bashmal, and Mansour Zuair . 2023. V i- sion–language model for visual question answering in medical imagery . Bioengineering , 10(3). Florian Bordes, Richard Y uanzhe Pang, Anurag Ajay , Alexander C Li, Adrien Bardes, Suzanne Petryk, Oscar Mañas, Zhiqiu Lin, Anas Mahmoud, Bar- gav Jayaraman, et al. 2024. An introduction to vision-language modeling. arXiv pr eprint arXiv:2405.17247 . T om Brown, Benjamin Mann, Nick Ryder , Melanie Subbiah, Jared D Kaplan, Prafulla Dhariwal, Arvind Neelakantan, Pranav Shyam, Girish Sastry , Amanda Askell, Sandhini Agarwal, Ariel Herbert-V oss, Gretchen Krueger , T om Henighan, Re won Child, Aditya Ramesh, Daniel Zie gler , Jef frey W u, Clemens W inter , Chris Hesse, Mark Chen, Eric Sigler , Ma- teusz Litwin, Scott Gray , Benjamin Chess, Jack Clark, Christopher Berner, Sam McCandlish, Alec Radford, Ilya Sutskever , and Dario Amodei. 2020. Language models are few-shot learners . In Ad- vances in Neural Information Pr ocessing Systems , volume 33, pages 1877–1901. Curran Associates, Inc. Zhuo Chen, Xiaoming Liu, Masaru K ojima, Qiang Huang, and T atsuo Arai. 2021. A wearable navi- gation de vice for visually impaired people based on the real-time semantic visual slam system . Sensors , 21(4). Y ifan Du, Zikang Liu, Jun yi Li, and W ayne Xin Zhao. 2022. A survey of vision-language pre-trained mod- els . In International Joint Confer ence on Artificial Intelligence . OpenAI et al. 2024. Gpt-4 technical report . Pr eprint , V ojtech Gintner , Jan Balata, Jakub Boksansky , and Zdenek Miko vec. 2017. Improving re verse geocod- ing: Localization of blind pedestrians using con ver - sational ui . In 2017 8th IEEE International Con- fer ence on Cognitive Infocommunications (Co gInfo- Com) , pages 000145–000150. Barry Haddow , Rachel Bawden, Antonio V alerio Miceli Barone, Jind ˇ rich Helcl, and Alexandra Birch. 2022. Survey of lo w-resource machine translation . Computational Linguistics , 48(3):673–732. Noriaki Hirose, Catherine Glossop, Ajay Sridhar , Dhruv Shah, Oier Mees, and Sergey Levine. 2024. Lelan: Learning a language-conditioned navig ation policy from in-the-wild video. In Conference on Robot Learning . Xiao wei Hu, Zhe Gan, Jianfeng W ang, Zhengyuan Y ang, Zicheng Liu, Y umao Lu, and Lijuan W ang. 2022. Scaling up vision-language pretraining for image captioning . In 2022 IEEE/CVF Conference on Com- puter V ision and P attern Recognition (CVPR) , pages 17959–17968. Y ingdong Hu, Fanqi Lin, T ong Zhang, Li Y i, and Y ang Gao. 2023. Look before you leap: Un veiling the power of gpt-4v in robotic vision-language planning. arXiv pr eprint arXiv:2311.17842 . Hanen Jabnoun, Mohammad Abu Hashish, and Faouzi Benzarti. 2020. Mobile assisti ve application for blind people in indoor na vigation. In The Impact of Digital T echnologies on Public Health in Developed and De- veloping Countries , pages 395–403, Cham. Springer International Publishing. Esteban Kaiser and Michael Lawo. 2012. W earable navigat ion system for the visually impaired and blind people . pages 230–233. Jacob Krantz, Erik W ijmans, Arjun Majumdar , Dhruv Batra, and Stefan Lee. 2020. Beyond the nav- graph: V ision-and-language navigation in contin- uous environments . In Computer V ision – ECCV 2020: 16th Eur opean Confer ence, Glasgow , UK, Au- gust 23–28, 2020, Pr oceedings, P art XXVIII , page 104–120, Berlin, Heidelberg. Springer -V erlag. Chengshu Li, Ruohan Zhang, Josiah W ong, Cem Gok- men, Sanjana Sriv asta va, Roberto Martín-Martín, Chen W ang, Gabrael Levine, Michael Lingelbach, Jiankai Sun, Mona An v ari, Minjune Hwang, Man- asi Sharma, Arman A ydin, Dhruva Bansal, Samuel Hunter , Kyu-Y oung Kim, Alan Lou, Caleb R Matthews, Iv an V illa-Renteria, Jerry Huayang T ang, Claire T ang, Fei Xia, Silvio Sav arese, Hyowon Gweon, Karen Liu, Jiajun W u, and Li Fei-Fei. 2023. Behavior -1k: A benchmark for embodied ai with 1,000 ev eryday acti vities and realistic simulation . In Pr oceedings of The 6th Confer ence on Robot Learn- ing , volume 205 of Proceedings of Mac hine Learning Resear ch , pages 80–93. PMLR. Junyi Li, T ianyi T ang, W ayne Xin Zhao, Jian-Y un Nie, and Ji-Rong W en. 2024. Pre-trained language models for text generation: A survey . ACM Comput. Surv . , 56(9). Haotian Liu, Chunyuan Li, Y uheng Li, Bo Li, Y uanhan Zhang, Sheng Shen, and Y ong Jae Lee. 2024a. Llav a- next: Improved reasoning, ocr , and world knowledge . Haotian Liu, Chun yuan Li, Qingyang W u, and Y ong Jae Lee. 2023. V isual instruction tuning . In Advances in Neural Information Pr ocessing Systems , volume 36, pages 34892–34916. Curran Associates, Inc. Ruiping Liu, Jiaming Zhang, Angela Schön, Karin Müller , Junwei Zheng, Kailun Y ang, Kathrin Ger- ling, and Rainer Stiefelhagen. 2024b. Objectfinder: Open-vocab ulary assistiv e system for interactiv e object search by blind people. arXiv preprint arXiv:2412.03118 . Xinhao Liu, Jintong Li, Y icheng Jiang, Niranjan Sujay , Zhicheng Y ang, Juexiao Zhang, John Abanes, Jing Zhang, and Chen Feng. 2024c. Citywalker: Learning embodied urban navigation from web-scale videos. arXiv pr eprint arXiv:2411.17820 . Zain Merchant, Abrar Anwar, Emily W ang, Souti Chattopadhyay , and Jesse Thomason. 2024. Gen- erating contextually-rele v ant navigation instructions for blind and low vision people. arXiv pr eprint arXiv:2407.08219 . Sriraksha Nayak and C. Chandrakala. 2020. Assistiv e mobile application for visually impaired people . In- ternational J ournal of Interactive Mobile T echnolo- gies (iJIM) , 14:52. OpenAI. 2023. Gpt-4v(ision) system card . Long Ouyang, Jeffrey W u, Xu Jiang, Diogo Almeida, Carroll W ainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray , et al. 2022. Training language models to follow instruc- tions with human feedback. Advances in neural in- formation pr ocessing systems , 35:27730–27744. Donatella Pascolini and Silvio Paolo Mariotti. 2012. Global estimates of visual impairment: 2010. British Journal of Ophthalmolo gy , 96(5):614–618. Alec Radford, Jong W ook Kim, Chris Hallacy , Aditya Ramesh, Gabriel Goh, Sandhini Agarwal, Girish Sas- try , Amanda Askell, Pamela Mishkin, Jack Clark, Gretchen Krueger , and Ilya Sutske ver . 2021. Learn- ing transferable visual models from natural language supervision . In Pr oceedings of the 38th International Confer ence on Machine Learning , volume 139 of Pr oceedings of Machine Learning Researc h , pages 8748–8763. PMLR. Rafael Rafailo v , Archit Sharma, Eric Mitchell, Christo- pher D Manning, Stefano Ermon, and Chelsea Finn. 2024. Direct preference optimization: Y our language model is secretly a re ward model. Advances in Neu- ral Information Pr ocessing Systems , 36. Xinshuai Song, W eixing Chen, Y ang Liu, W eikai Chen, Guanbin Li, and Liang Lin. 2024. T o wards long- horizon vision-language navigation: Platform, bench- mark and method. arXiv preprint . Naoki W ake, Atsushi Kanehira, Kazuhiro Sasabuchi, Jun T akamatsu, and Katsushi Ik euchi. 2024. Gpt-4v (ision) for robotics: Multimodal task planning from human demonstration. IEEE Robotics and Automa- tion Letters . Beichen W ang, Juexiao Zhang, Shuwen Dong, Irving Fang, and Chen Feng. 2024a. Vlm see, robot do: Human demo video to robot action plan via vision language model. arXiv preprint . Fei W ang, W enxuan Zhou, James Y . Huang, Nan Xu, Sheng Zhang, Hoifung Poon, and Muhao Chen. 2024b. mDPO: Conditional preference optimization for multimodal large language models . In Proceed- ings of the 2024 Confer ence on Empirical Methods in Natural Langua ge Pr ocessing , pages 8078–8088, Miami, Florida, USA. Association for Computational Linguistics. Y ufei W ang, Zhanyi Sun, Jesse Zhang, Zhou Xian, Erdem Biyik, David Held, and Zackory Erickson. 2024c. Rl-vlm-f: Reinforcement learning from vi- sion language foundation model feedback. In Pr o- ceedings of the 41th International Conference on Machine Learning . WHO. 2013. Media centre. visual impairment and blind- ness. Retrieved un August , 24:2017. Zhengyuan Y ang, Linjie Li, Ke vin Lin, Jianfeng W ang, Chung-Ching Lin, Zicheng Liu, and Lijuan W ang. 2023. The dawn of lmms: Preliminary explorations with gpt-4v(ision) . Preprint , arXi v:2309.17421. Xiang Y ue, Y uansheng Ni, Kai Zhang, Tian yu Zheng, Ruoqi Liu, Ge Zhang, Samuel Stevens, Dongfu Jiang, W eiming Ren, Y uxuan Sun, Cong W ei, Botao Y u, Ruibin Y uan, Renliang Sun, Ming Y in, Boyuan Zheng, Zhenzhu Y ang, Y ibo Liu, W enhao Huang, Huan Sun, Y u Su, and W enhu Chen. 2024a. Mmmu: A massiv e multi-discipline multimodal understand- ing and reasoning benchmark for expert agi. In Pro- ceedings of CVPR . Xiang Y ue, T ianyu Zheng, Y uansheng Ni, Y ubo W ang, Kai Zhang, Shengbang T ong, Y uxuan Sun, Botao Y u, Ge Zhang, Huan Sun, Y u Su, W enhu Chen, and Gra- ham Neubig. 2024b. Mmmu-pro: A more robust multi-discipline multimodal understanding bench- mark. arXiv preprint . Fateme Zare, Paniz Sedighi, and Mehdi Delrobaei. 2022. A wearable rfid-based navigation system for the visu- ally impaired. In 2022 10th RSI International Confer - ence on Robotics and Mechatr onics (ICRoM) , pages 572–577. IEEE. Jingyi Zhang, Jiaxing Huang, Sheng Jin, and Shijian Lu. 2023. V ision-language models for vision tasks: A surve y . Preprint , arXi v:2304.00685. T ianyi Zhang, F aisal Ladhak, Esin Durmus, Percy Liang, Kathleen McKeo wn, and T atsunori B. Hashimoto. 2024. Benchmarking Large Language Models for News Summarization . T ransactions of the Associa- tion for Computational Linguistics , 12:39–57. Fengda Zhu, Xiwen Liang, Y i Zhu, Qizhi Y u, Xiaojun Chang, and Xiaodan Liang. 2021. Soon: Scenario oriented object navigation with graph-based explo- ration . In 2021 IEEE/CVF Conference on Computer V ision and P attern Reco gnition (CVPR) , pages 12684– 12694. A A ppendix A.1 Impact of Resolution Image resolution plays a crucial role in VLMs for navig ation tasks. Many state-of-the-art VLMs are pre-trained on datasets with fixed image sizes and utilize methods like AnyRes to handle different resolutions during inference. T o e valuate the im- pact of resolution, we tested dif ferent input image resolutions across counting, spatial reasoning, and common-sense reasoning tasks. By comparing the results from original and adjusted resolutions, we observed that resolution adjustments can signifi- cantly optimize the accuracy and stability of spe- cific models. Therefore, we selected the optimal resolution for each model in our experiments. The results for the VLMs at different resolutions are presented in T ables 6 , 7 , and 8 . In spatial reason- ing tasks, reducing the resolution to 288×384 sig- nificantly improv es the accuracy of Lla va-Qwen. In contrast, changes in resolution hav e little ef fect on the performance of all models for common-sense reasoning tasks, as neither resolution succeeds in completing the task. Based on the experimental results, Llav a-mistral sho ws the most stable performance at its original resolution, achieving high accuracy in counting and spatial reasoning tasks. Llav a-qwen performed best when adjusted to a resolution of 288×384, par- ticularly excelling in comple x multi-object scenes. Claude-3.5-sonnet does not show significant im- prov ement with resolution adjustments, as its origi- nal resolution is already close to optimal for most tasks. Therefore, for navigation tasks, we used the original resolution for both Llav a-mistral and Claude-3.5-sonnet, while adopting the 288×384 resolution for Llav a-qwen. A.2 System Prompt Design In our e v aluation of VLMs’ navig ation capabilities, we focused on its ability to navigate to a vacant chair . Recognizing the significant influence that well constructed prompts can hav e on a language model’ s performance, we created a specific prompt system for this task. Our goal was to address all essential aspects to improv e VLMs’ precision and ef ficiency in na vigation. The detailed prompt sys- tem used in our assessments is sho wn in Figure 6 . On the right side of the figure, we list the issues that each part of the prompt is designed to address. By incorporating these specific prompts, we found that VLMs ef fectiv ely mitigates common problems in navig ation tasks. Below we will use GPT -4V to ex- plain the significant impact of prompts on language model performance Based on the results of our system testing, we can make se veral important observ ations about the performance of VLMs in na vigation tasks. In our preliminary tests, we found that various VLMs hav e strong capabilities in comparing the distances of objects of different styles, showing a good un- derstanding of relati ve spatial relationships. Ho w- e ver , it lacks certain capabilities that are critical for practical navigation, such as accurate counting and pixel-le vel object localization. For e xample, while GPT -4V can accurately identify the relati ve dis- tances between objects, it has difficulty accurately counting as the number of objects increases, and cannot accurately localize objects in the image. In addition, the model exhibits biases, such as consis- tently leaning to the left when determining which object is closer , and misinterpreting common sense T able 6: Resolution Comparison in Counting T ask Acc. (%), Mean, V ar . Llav a-mistral Llav a-qwen Claude-3.5-sonnet Resolution Original 252x336 Original 288x384 Original 150x200 1 Chair 38 1.60 0.26 31 1.69 0.22 40 1.72 0.67 58 1.44 0.31 100 1.00 0.00 99 0.99 0.01 2 Chairs 83 2.11 0.15 99 2.01 0.01 99 2.02 0.04 96 2.07 0.13 100 2.00 0.00 100 2.00 0.00 3 Chairs 56 2.56 0.31 23 2.23 0.18 3 2.07 0.11 57 2.67 0.32 0 2.00 0.00 0 2.00 0.00 3 Chairs-Alt 39 2.53 0.39 65 3.09 0.35 19 2.19 0.16 84 3.00 0.16 100 3.00 0.00 0 2.00 0.00 4 Chairs 70 3.74 0.42 65 3.66 0.27 30 2.91 0.75 100 4.00 0.00 100 4.00 0.00 100 4.00 0.00 4 Chairs-Alt 61 3.57 0.33 37 3.32 0.32 8 2.22 0.33 100 4.00 0.00 100 4.00 0.00 100 4.00 0.00 5 Chairs 5 3.62 0.56 10 4.11 0.20 3 3.54 0.61 29 5.67 0.26 0 4.00 0.00 0 4.06 0.12 6 Chairs 0 3.85 0.43 6 4.36 0.43 7 4.13 2.11 80 6.36 0.56 100 6.00 0.00 10 4.20 0.36 Overall 44 - - 42 - - 26 - - 78 - - 86 - - 58 - - T able 7: Resolution Comparison in Spatial Reasoning T ask Accuracy (%) Lla va-mistral Lla va-qwen Claude-3.5-sonnet Resolution Original 252x336 Original 288x384 Original 150x200 Case 1 34 80 11 61 77 41 Case 1-Flipped 72 18 41 74 29 76 Case 2 53 47 85 50 12 61 Case 2-Flipped 94 69 38 60 12 67 Case 3 37 99 93 98 99 3 Case 4 62 95 87 94 100 5 Case 5 66 96 55 96 24 16 Case 6 81 87 99 94 100 58 Overall 62 74 64 78 57 41 T able 8: Resolution Comparison in Commonsense Reasoning T ask Accuracy (%) Lla va-mistral Lla va-qwen Claude-3.5-sonnet Resolution Original 252x336 Original 288x384 Original 150x200 Chair 100 99 99 100 100 100 Coat 1 0 6 2 0 0 Coat-Alt 3 0 2 0 0 100 Laptop-Backpack 4 0 3 1 0 0 Laptop-Backpack-Alt 2 0 10 6 0 0 Overall 22 20 24 22 20 40 scenarios, such as a backpack on a seat indicating that the seat is occupied. Our navigation task tests sho w that VLMs cur- rently hav e difficulty handling navig ation tasks. This is due to its lack of basic capabilities. For example, our e xperiments clearly show that GPT - 4V has dif ficulty estimating distances and gener- ally needs to make common sense reasoning more accurately . These limitations are consistent with our conclusions from the basic ability tests, thus strengthening the v alidity of our results. Unfortu- nately , these shortcomings prevent GPT -4V from becoming a reliable component for building dia- logue systems for pBL V navig ation tasks. The input to GPT -4V consists of two main parts: the system prompt and the user query . The system prompt informs the model how to shape its output, including style, length, and lev el of detail. The user query instructs the model to ex ecute the instruc- tion, such as understanding the input images. In our ev aluation, the design of the system prompt is crucial in guiding GPT -4V’ s responses to pro- vide ef fecti ve na vigation assistance. A well-crafted system prompt significantly impacts the model’ s Figure 6: The system prompt design is presented, which optimizes the model performance in navigation tasks through six parts: clear purpose and function, definition of vacant seats, context understanding, clear guidance, filtering of rele vant details, and inclusi ve guidance. The prompt design is intended to help lo w-vision or visually impaired users provide accurate and ef fective na vigation support, such as identifying the tar get location, planning a clear path, and providing practical descriptions of the en vironment, while av oiding redundant information or assumptions that do not meet user needs, thereby impro ving the practicality and reliability of the model in actual navigation scenarios. ability to offer accurate, rele v ant, and contextually appropriate guidance. Therefore, we included se v- eral ke y aspects in our system prompt to optimize GPT -4V for our navigation task for pBL V . Incorporating Na vigation Starting P oint Our system prompt design includes the starting point for navig ation, which informs the model that the provided image represents the user’ s current vie w- point, ensuring that directions are based on this perspecti ve rather than inferred or calculated loca- tions. This ensures that GPT -4V generates guid- ance that is directly relev ant and accurate to the user’ s perspectiv e. Specifying the starting point in the prompt ensures the model interprets the user’ s current vie wpoint correctly , enabling it to pro vide more precise and contextually appropriate na viga- tion instructions. Integrating Direction Description The second part of our system prompt inv olves incorporating direction descriptions. This will allow GPT -4V to of fer clear and actionable navig ation routes. By providing detailed directions such as “turn left at the ne xt intersection” or “walk straight for 100 meters, ” the model can give precise and practical instructions that users can easily follow . Integrating direction descriptions is crucial for providing prac- tical navig ation assistance, as it transforms general guidance into specific, step-by-step instructions. Filtering Irrelev ant Details The third part of our system prompt design focuses on filtering out irrele- v ant details. This helps GPT -4V ignore information that is not useful for people with blindness, such as the color , shape, and visual size of objects. By removing these unnecessary details, the model can focus on providing information that is truly help- ful for navigation, such as landmarks, obstacles, and directional cues. This aspect of the prompt pre vents information ov erload and ensures that the guidance remains focused and rele vant to the user’ s needs. By streamlining the information provided, we improve the model’ s ability to deliver precise and ef ficient navigation assistance, making it easier for users to follo w . Pre venting Hallucination The final part of our system prompt design aims to prev ent hallucina- tions. This directs GPT -4V to av oid generating information that is not based on the input image or verifiable data. Hallucination refers to the model providing inaccurate or fabricated directions or de- tails. By explicitly instructing the model to refrain from hallucinating, we aim to ensure the reliabil- ity and trustworthiness of the navigation assistance provided. This aspect of the prompt emphasizes the importance of grounding the model’ s responses in visual and conte xtual information, thereby minimiz- ing the risk of misleading or incorrect guidance. By addressing hallucination, we enhance the overall safety and ef fecti veness of GPT -4V in navigation tasks, ensuring that users receiv e dependable and accurate directions. A.3 Case Study In the navigation task, VLMs showed significant dif ferences in their ability to interpret the same scene, as sho wn in Figure 7 . This example, in particular , highlights the critical “brittleness” and “unreliability” that our multi-dimensional e valua- tion rubric was designed to catch. At first glance, Claude’ s response appears detailed. It correctly identifies the “backpack or bag” and provides a route (“walk straight ahead... 8 steps”). Ho wev er , its core instruction that the chair is “directly in front of you” is imprecise and potentially incorrect, as it ignores the intervening table. Under our rubric, this response was rated ‘Correct’ on ‘Route’ (as it provided one) and ‘Correct’ on ‘Obstacles’ (it did not hallucinate). Howe ver , it was rated ‘Incorrect’ on ‘Destination, ’ as its imprecise guidance fails to get the user reliably to the specific chair . Similarly , GPT -4o’ s response correctly uses the table as a landmark (“Mov e forward until you reach the ta- ble... ”). Howe ver , it also includes a critical failure: it hallucinates a “wet floor sign” that is not present in the image. This is not a sign of en vironmental safety , b ut rather a dangerous failure of trustworthi- ness that could mislead a blind person. Therefore, this response was rated ’Incorrect’ on ‘Obstacles. ’ In comparison, the performance of Gemini-1.5 Pro is inferior . Its judgment of the status of the chair in the picture is not accurate enough. It mistakenly belie ves that the chair is “occupied by items” and “no other chairs are av ailable” and does not provide any substantive navigation guidance, e ven suggesting users provide additional images for more information. This overly conservati ve strategy , although it may reduce false guidance, is inef ficient in navigation tasks that require im- mediate resolution. Judging from the experimental results, Claude performs better in task e xecution ca- pabilities. The instructions Claude generates are de- tailed and precise, and they can ef ficiently complete navig ation goals. GPT -4o is also characterized by concise and clear path planning combined with detailed en vironmental safety reminders, which is suitable for mission-focused applications in safe scenarios. Ho wev er , Gemini performs poorly in navig ation tasks and cannot meet actual mission re- quirements. These differences suggest that model suitability needs to be chosen based on task com- plexity and e xecution requirements. Figure 7: The output comparison of different VLMs in navig ation tasks is shown, focusing on ev aluating the dif ferences in their ability to interpret the same im- age and generate navigation instructions. The figure compares the performance of each model in target posi- tioning, path planning, and en vironmental information description. Some models generate precise and detailed instructions, such as clearly indicating the location of the target and potential obstacles, while providing clear path planning; while other models show obvious lim- itations, such as misjudging the target state, missing obstacle information, or failing to provide useful navig a- tion guidance. Through this comparison, we can clearly see the adv antages and disadvantages of each model in the navigation task, re vealing its gaps in task e xecution capabilities and en vironmental understanding, and pro- viding an important reference for further improving the model.

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment