LTS-VoiceAgent: A Listen-Think-Speak Framework for Efficient Streaming Voice Interaction via Semantic Triggering and Incremental Reasoning

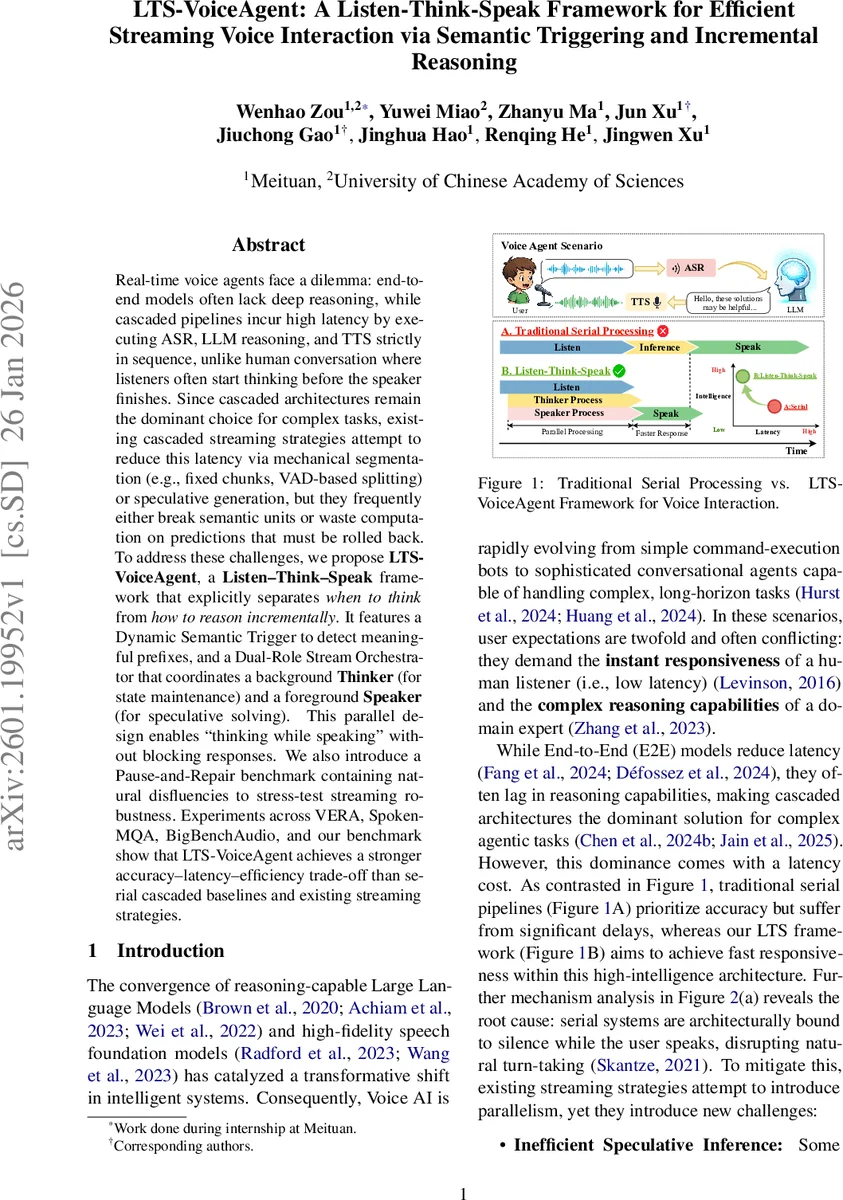

Real-time voice agents face a dilemma: end-to-end models often lack deep reasoning, while cascaded pipelines incur high latency by executing ASR, LLM reasoning, and TTS strictly in sequence, unlike human conversation where listeners often start thinking before the speaker finishes. Since cascaded architectures remain the dominant choice for complex tasks, existing cascaded streaming strategies attempt to reduce this latency via mechanical segmentation (e.g., fixed chunks, VAD-based splitting) or speculative generation, but they frequently either break semantic units or waste computation on predictions that must be rolled back. To address these challenges, we propose LTS-VoiceAgent, a Listen-Think-Speak framework that explicitly separates when to think from how to reason incrementally. It features a Dynamic Semantic Trigger to detect meaningful prefixes, and a Dual-Role Stream Orchestrator that coordinates a background Thinker (for state maintenance) and a foreground Speaker (for speculative solving). This parallel design enables “thinking while speaking” without blocking responses. We also introduce a Pause-and-Repair benchmark containing natural disfluencies to stress-test streaming robustness. Experiments across VERA, Spoken-MQA, BigBenchAudio, and our benchmark show that LTS-VoiceAgent achieves a stronger accuracy-latency-efficiency trade-off than serial cascaded baselines and existing streaming strategies.

💡 Research Summary

LTS‑VoiceAgent addresses the long‑standing latency‑accuracy trade‑off in real‑time voice agents by introducing a “Listen‑Think‑Speak” paradigm that explicitly separates the decision of when to invoke reasoning from the method of how to reason incrementally over a streaming audio input. The paper first critiques existing streaming strategies—fixed‑size chunking, voice‑activity‑detection (VAD) based splitting, and aggressive speculative generation—highlighting their tendency to either break semantic units or waste computation on predictions that must later be rolled back, especially when users pause, self‑correct, or change intent mid‑utterance.

To overcome these issues, the authors propose two core components:

- Dynamic Semantic Trigger – a lightweight binary classifier that evaluates the “semantic saturation” of the current ASR transcript. Using a self‑supervised data synthesis pipeline, GPT‑4o inserts special “

Comments & Academic Discussion

Loading comments...

Leave a Comment