AuroraEdge-V-2B: A Faster And Stronger Edge Visual Large Language Model

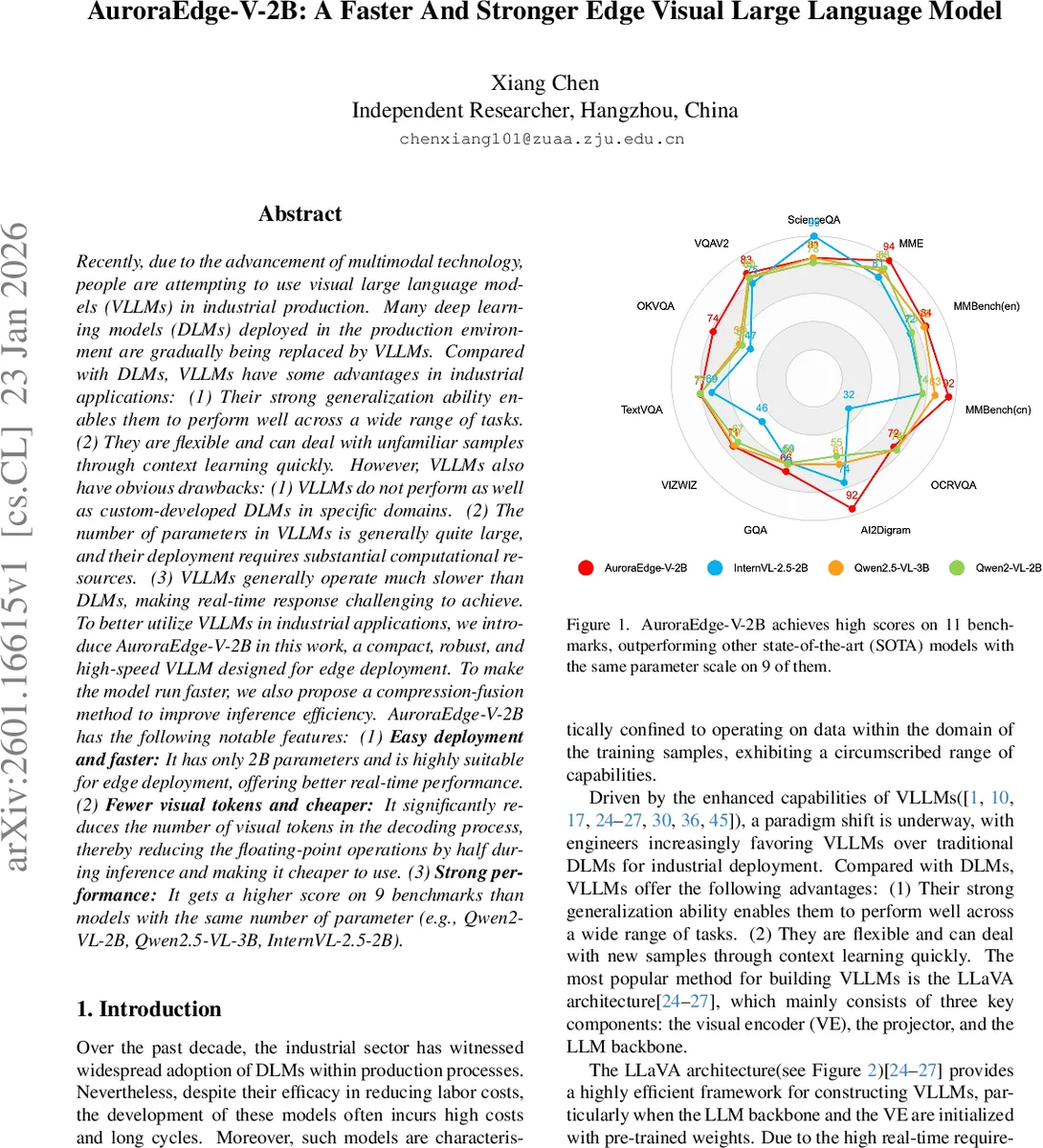

Recently, due to the advancement of multimodal technology, people are attempting to use visual large language models (VLLMs) in industrial production. Many deep learning models (DLMs) deployed in the production environment are gradually being replaced by VLLMs. Compared with DLMs, VLLMs have some advantages in industrial applications: (1) Their strong generalization ability enables them to perform well across a wide range of tasks. (2) They are flexible and can deal with unfamiliar samples through context learning quickly. However, VLLMs also have obvious drawbacks: (1) VLLMs do not perform as well as custom-developed DLMs in specific domains. (2) The number of parameters in VLLMs is generally quite large, and their deployment requires substantial computational resources. (3) VLLMs generally operate much slower than DLMs, making real-time response challenging to achieve. To better utilize VLLMs in industrial applications, we introduce AuroraEdge-V-2B in this work, a compact, robust, and high-speed VLLM designed for edge deployment. To make the model run faster, we also propose a compression-fusion method to improve inference efficiency. AuroraEdge-V-2B has the following notable features: (1) Easy deployment and faster: It has only 2B parameters and is highly suitable for edge deployment, offering better real-time performance. (2) Fewer visual tokens and cheaper: It significantly reduces the number of visual tokens in the decoding process, thereby reducing the floating-point operations by half during inference and making it cheaper to use. (3) Strong performance: It gets a higher score on 9 benchmarks than models with the same number of parameter (e.g., Qwen2-VL-2B, Qwen2.5-VL-3B, InternVL-2.5-2B).

💡 Research Summary

The paper introduces AuroraEdge‑V‑2B, a compact visual large language model (VLLM) specifically designed for edge deployment. While large‑scale VLLMs have demonstrated strong generalization and flexible context learning, their high parameter counts and the massive number of visual tokens generated by vision encoders make real‑time inference on resource‑constrained devices impractical. AuroraEdge‑V‑2B addresses these issues by combining a token‑compression strategy with a visual‑text fusion module, achieving both speed and accuracy improvements over similarly sized models.

Architecture Overview

The model builds on the widely adopted LLaVA framework, consisting of a vision encoder, a projector, a token compressor, a fusion module, and a language model (LLM) backbone. The authors replace the dynamic‑resolution processor used in many LLaVA variants with a fixed‑size visual encoder (SigLIP‑2‑so400m‑patch16‑naflex) that extracts at most 256 visual tokens per image. These tokens are projected into the LLM’s embedding space via a simple two‑layer MLP projector. A token compressor—implemented as a multi‑layer perceptron (MLP)—then reduces the token count from 256 to 64, cutting the visual token stream by 75 %.

Fusion Module

Because compressing visual tokens inevitably discards information, the authors introduce a fusion module that injects the original (pre‑compression) visual context into the textual token stream. They evaluate three fusion designs: pure cross‑attention, a single‑layer transformer decoder, and a combination of both. The combined approach (cross‑attention + decoder) yields the best downstream performance and is adopted as the final design. The fused tokens are concatenated with the compressed visual tokens and fed into the LLM decoder (Qwen2.5‑1.5B), which contains roughly 2 billion parameters, keeping the overall model lightweight.

Training Procedure

Training proceeds in three stages:

-

Vision‑Only Stage – Using large image‑caption datasets (LLaVA‑Pretrain, MMDU, Flickr30k, COCO), only the projector, compressor, and fusion module are fine‑tuned while the vision encoder and LLM remain frozen. This aligns visual and textual modalities.

-

LLM Fine‑Tuning Stage – Leveraging extensive VQA datasets (GQA, OKVQA, VQA‑V2, ShareGPT4V, etc.), the LLM and all connector modules are updated, but the vision encoder stays frozen. This enhances the model’s ability to reason over mixed image‑text inputs.

-

Joint Vision‑Text Stage – All parameters are unfrozen and jointly optimized on a mixture of captioning and VQA data, allowing the entire system to co‑adapt.

Performance Evaluation

AuroraEdge‑V‑2B is benchmarked on nine representative multimodal tasks, including image captioning, OCR‑VQA, and standard VQA. Compared with state‑of‑the‑art models of similar size—Qwen2‑VL‑2B, Qwen2.5‑VL‑3B, and InternVL‑2.5‑2B—AuroraEdge‑V‑2B achieves higher scores on all nine benchmarks, typically improving by 2–3 percentage points.

Crucially, the token‑compression reduces floating‑point operations (FLOPs) during inference by roughly 50 %, and empirical latency measurements show a three‑fold speedup over the baseline models. This makes the model viable for deployment on edge hardware such as ARM‑based SoCs or mobile GPUs, where real‑time response is essential.

Ablation Studies

The authors conduct ablations on the compression method (Conv2d, MaxPool2d, MLP) and on the fusion design. MLP compression converges fastest and yields the best accuracy, while the combined cross‑attention + decoder fusion outperforms either component alone.

Limitations and Future Work

The paper acknowledges that aggressive token compression beyond the 64‑token target leads to rapid performance degradation, indicating a trade‑off ceiling. Additionally, the current evaluation focuses on image‑text and VQA tasks; extending the approach to video, 3‑D point clouds, or other high‑dimensional modalities remains unexplored. The authors suggest future research could integrate knowledge distillation, quantization (e.g., INT4/INT8), or multi‑stage hierarchical compression to push the limits of edge‑friendly VLLMs further.

Conclusion

AuroraEdge‑V‑2B demonstrates that a carefully engineered token‑compression pipeline combined with a robust visual‑text fusion mechanism can produce a VLLM that is both lightweight (≈2 B parameters) and high‑performance, achieving up to three times faster inference while surpassing peer models on a suite of multimodal benchmarks. This work provides a practical blueprint for bringing sophisticated multimodal AI capabilities to edge devices in industrial settings.

Comments & Academic Discussion

Loading comments...

Leave a Comment