RoboBrain 2.5: Depth in Sight, Time in Mind

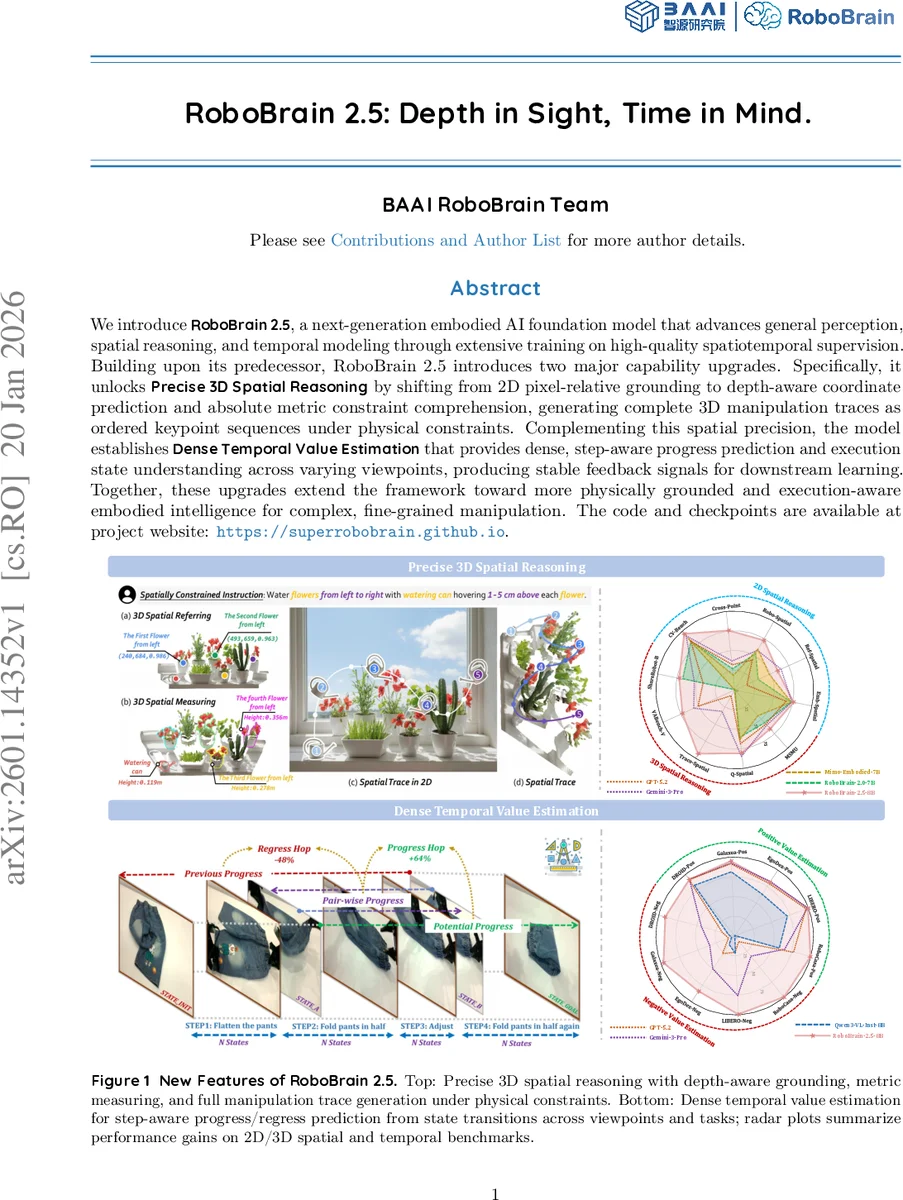

We introduce RoboBrain 2.5, a next-generation embodied AI foundation model that advances general perception, spatial reasoning, and temporal modeling through extensive training on high-quality spatiotemporal supervision. Building upon its predecessor, RoboBrain 2.5 introduces two major capability upgrades. Specifically, it unlocks Precise 3D Spatial Reasoning by shifting from 2D pixel-relative grounding to depth-aware coordinate prediction and absolute metric constraint comprehension, generating complete 3D manipulation traces as ordered keypoint sequences under physical constraints. Complementing this spatial precision, the model establishes Dense Temporal Value Estimation that provides dense, step-aware progress prediction and execution state understanding across varying viewpoints, producing stable feedback signals for downstream learning. Together, these upgrades extend the framework toward more physically grounded and execution-aware embodied intelligence for complex, fine-grained manipulation. The code and checkpoints are available at project website: https://superrobobrain.github.io

💡 Research Summary

**

RoboBrain 2.5 is presented as the next‑generation embodied‑AI foundation model that substantially upgrades the capabilities of its predecessor, RoboBrain 2.0, by addressing two critical shortcomings of current generalist agents: (1) a lack of metric‑aware spatial reasoning and (2) the absence of dense, step‑wise feedback during execution. The paper introduces two major innovations—Precise 3D Spatial Reasoning and Dense Temporal Value Estimation—both of which are tightly integrated into a unified vision‑language‑action architecture built on the Qwen3‑VL backbone.

Precise 3D Spatial Reasoning replaces the traditional 2‑D pixel‑relative grounding with a depth‑aware (u, v, d) coordinate prediction scheme. By explicitly modeling image‑plane coordinates together with absolute depth, the model can convert these predictions into metric‑accurate 3‑D points using known camera intrinsics. This enables millimeter‑level distance and clearance estimation, which is essential for collision‑free manipulation. The spatial upgrade is decomposed into three complementary competencies: (i) 3‑D spatial referring—identifying and localizing target objects in 3‑D space; (ii) 3‑D spatial measuring—predicting absolute distances, heights, and angles required by physical constraints; and (iii) 3‑D spatial trace generation—producing ordered keypoint sequences that serve as full manipulation plans. The (u, v, d) formulation also allows seamless reuse of existing 2‑D datasets (by dropping the depth channel) and facilitates multi‑task learning across referring, measuring, and tracing data streams.

Dense Temporal Value Estimation tackles the open‑loop nature of prior action generators. The authors construct a hop‑wise progress labeling pipeline that converts multi‑view video demonstrations into dense supervision signals. Each trajectory is segmented into sub‑tasks via human‑annotated keyframes, then uniformly sampled to obtain a set of synchronized multi‑view states S = {s₀,…,s_M}. Global progress Φ(s_i) = i/M is defined, and a relative progress label H(s_p, s_q) is computed by normalizing the progress change either by the remaining distance to the goal (forward hops) or by the distance already covered (regressive hops). This normalization guarantees that cumulative predictions stay within the

Comments & Academic Discussion

Loading comments...

Leave a Comment