Consensus Stability of Community Notes on X

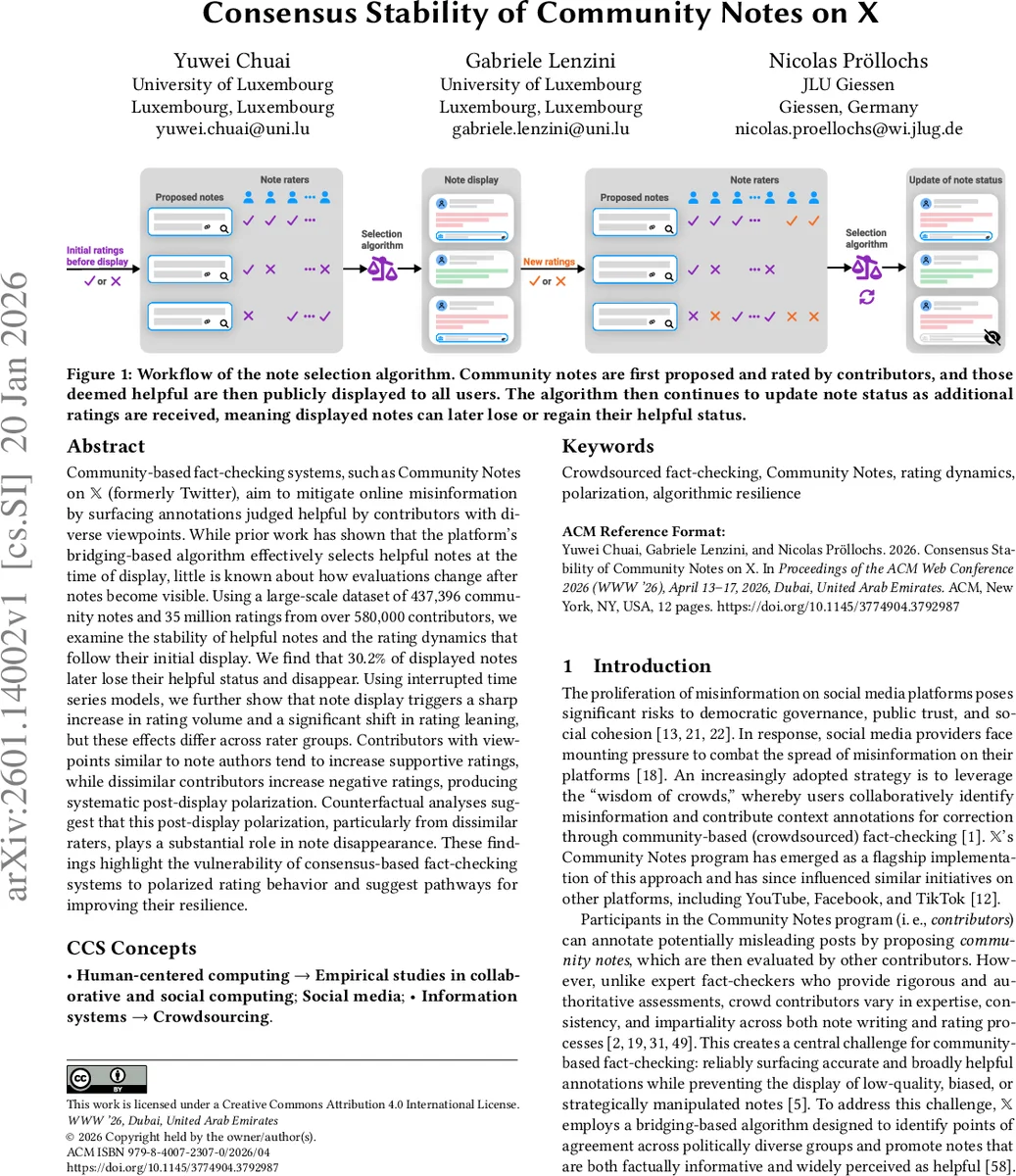

Community-based fact-checking systems, such as Community Notes on X (formerly Twitter), aim to mitigate online misinformation by surfacing annotations judged helpful by contributors with diverse viewpoints. While prior work has shown that the platform’s bridging-based algorithm effectively selects helpful notes at the time of display, little is known about how evaluations change after notes become visible. Using a large-scale dataset of 437,396 community notes and 35 million ratings from over 580,000 contributors, we examine the stability of helpful notes and the rating dynamics that follow their initial display. We find that 30.2% of displayed notes later lose their helpful status and disappear. Using interrupted time series models, we further show that note display triggers a sharp increase in rating volume and a significant shift in rating leaning, but these effects differ across rater groups. Contributors with viewpoints similar to note authors tend to increase supportive ratings, while dissimilar contributors increase negative ratings, producing systematic post-display polarization. Counterfactual analyses suggest that this post-display polarization, particularly from dissimilar raters, plays a substantial role in note disappearance. These findings highlight the vulnerability of consensus-based fact-checking systems to polarized rating behavior and suggest pathways for improving their resilience.

💡 Research Summary

This paper investigates the post‑display stability of “helpful” community notes on X (formerly Twitter), a crowdsourced fact‑checking system that surfaces annotations judged useful by a diverse contributor base. While prior work has shown that X’s bridging‑based selection algorithm effectively identifies helpful notes at the moment they are displayed, little is known about how those notes fare once they become visible to the broader public. Using a massive dataset comprising 437,396 community notes, 35,081,488 ratings, and over 583,000 contributors collected between November 2022 and July 2024, the authors examine four research questions: (1) the frequency with which initially helpful notes later lose their helpful status, (2) the relationship between note disappearance and characteristics of the source posts and authors, (3) how rating volume and the balance of up‑votes versus down‑votes change after a note is displayed, and (4) whether raters whose viewpoints are similar or dissimilar to the note author behave differently after display.

Key findings:

- Only 10% of all notes ever reach the “helpful” threshold and are displayed; of those, 30.2% later revert to a non‑helpful status and disappear.

- Logistic regression shows that notes attached to health‑ or politics‑related posts, and to posts authored by high‑influence users, are significantly more likely to disappear. There is also a political asymmetry: notes on posts by left‑leaning authors disappear more often than notes on posts by right‑leaning authors.

- Interrupted time‑series (ITS) models show that displaying a note triggers a sharp spike in rating volume and a pronounced shift in rating leaning (the difference between up‑votes and down‑votes).

- By estimating 200‑dimensional rater factors via matrix factorization, the authors quantify viewpoint similarity between raters and note authors. Raters whose viewpoints align with the author increase supportive ratings after display, whereas dissimilar raters increase negative ratings, creating systematic post‑display polarization.

- Counterfactual simulations that remove the negative rating surge from dissimilar raters reduce the disappearance rate by roughly 12 percentage points, indicating that this polarization is a major driver of note removal.
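
The group-level pattern in the findings above can be illustrated with a small sketch. It is not the authors' code: the function names `cosine` and `leaning_by_group`, the use of cosine similarity, the zero cutoff between "similar" and "dissimilar" raters, and the toy two-dimensional factors are all assumptions made for illustration. Rating leaning follows the definition given above (helpful ratings minus not-helpful ratings).

```python
import math


def cosine(u, v):
    """Cosine similarity between two factor vectors of equal length."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def leaning_by_group(ratings, author_factor, display_time):
    """Sum rating leaning per (rater group, phase).

    ratings: list of (rater_factor, timestamp, value), where value is
             +1 for a helpful rating and -1 for a not-helpful rating.
    A rater counts as "similar" when the cosine similarity with the
    note author's factor is >= 0 (an assumed cutoff).
    """
    totals = {}
    for factor, timestamp, value in ratings:
        group = "similar" if cosine(factor, author_factor) >= 0 else "dissimilar"
        phase = "post" if timestamp >= display_time else "pre"
        totals[(group, phase)] = totals.get((group, phase), 0) + value
    return totals


# Toy data: the note is displayed at t = 10.
author_factor = [1.0, 0.5]
ratings = [
    ([0.9, 0.4], 1, +1),     # similar rater, before display
    ([-0.8, -0.3], 2, +1),   # dissimilar rater, before display
    ([1.0, 0.6], 12, +1),    # similar raters, after display
    ([0.7, 0.2], 13, +1),
    ([-1.0, -0.5], 12, -1),  # dissimilar raters, after display
    ([-0.6, -0.9], 14, -1),
]
leaning = leaning_by_group(ratings, author_factor, display_time=10)
```

In this toy data both groups lean positive before display, while after display the similar group leans further positive and the dissimilar group turns negative, which is the polarization pattern the summary describes.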

Methodologically, the study reproduces X’s open‑source matrix‑factorization selection algorithm, extracts note intercepts (global helpfulness) and note factors (polarization), and extends the factor model to 200 dimensions for richer rater similarity estimation. The ITS design treats the display event as an intervention, allowing the authors to isolate immediate changes in rating dynamics while controlling for pre‑display trends.
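
The ITS design described above can be sketched as a segmented regression. This summary does not give the authors' exact specification, so the form below is the textbook one and should be read as an assumption: `y_t = b0 + b1*t + b2*D_t + b3*(t - t0)*D_t`, where `D_t` is 1 from the display time `t0` onward, `b2` is the immediate level change, and `b3` is the change in slope. The helper names `solve_linear` and `fit_its` are hypothetical, and the data are synthetic.

```python
def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_its(y, t0):
    """Ordinary least squares fit of the segmented ITS model.

    Returns [b0, b1, b2, b3]: baseline level, pre-display trend,
    level change at display, and trend change after display.
    """
    rows = []
    for t in range(len(y)):
        d = 1.0 if t >= t0 else 0.0
        rows.append([1.0, float(t), d, (t - t0) * d])
    k = 4
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * value for r, value in zip(rows, y)) for i in range(k)]
    return solve_linear(xtx, xty)  # normal equations


# Synthetic hourly rating volume: baseline 2 + 0.1*t; at t0 = 10 the level
# jumps by 5 and the slope changes by -0.3 (noise-free for clarity).
t0 = 10
y = [2 + 0.1 * t + ((5 - 0.3 * (t - t0)) if t >= t0 else 0) for t in range(20)]
b0, b1, b2, b3 = fit_its(y, t0)
```

Because the synthetic series is noise-free, the fit recovers the level jump and slope change it was built with. On real rating data one would also need standard errors and some handling of autocorrelation, which this sketch omits.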

Implications: The bridging‑based algorithm succeeds at the selection stage but does not fully mitigate post‑display dynamics that can erode consensus. The authors propose three design interventions to bolster resilience: (1) a temporal decay of rating weight so that later ratings have diminishing influence, (2) a bias‑correction layer that down‑weights negative ratings from ideologically distant raters, and (3) targeted monitoring of high‑risk topics (health, politics) and high‑influence authors to detect and intervene in rapid polarization spikes.
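
The first proposed intervention, temporal decay of rating weight, could look roughly like the sketch below. The summary does not specify a decay form, so the exponential half-life, the function name `decayed_helpful_share`, and the use of a simple weighted share of helpful ratings (rather than the platform's matrix-factorization score) are all illustrative assumptions.

```python
def decayed_helpful_share(ratings, half_life_hours):
    """Weighted share of helpful ratings with post-display decay.

    ratings: list of (hours_since_display, is_helpful). Ratings cast
             before display (hours <= 0) keep full weight; later ratings
             are down-weighted by 0.5 ** (hours / half_life_hours).
    """
    weighted_helpful = 0.0
    total_weight = 0.0
    for hours, is_helpful in ratings:
        weight = 0.5 ** (max(hours, 0.0) / half_life_hours)
        weighted_helpful += weight * (1.0 if is_helpful else 0.0)
        total_weight += weight
    return weighted_helpful / total_weight if total_weight else 0.0


# Two helpful ratings before display, two not-helpful ratings 10 hours after.
ratings = [(-2, True), (-1, True), (10, False), (10, False)]
with_decay = decayed_helpful_share(ratings, half_life_hours=5)
# An infinite half-life disables decay, so every rating has weight 1.
without_decay = decayed_helpful_share(ratings, half_life_hours=float("inf"))
```

With a 5-hour half-life, the two late negative ratings each carry weight 0.25, so the helpful share stays at 0.8 instead of falling to 0.5. The trade-off is that late ratings correcting a genuinely wrong note are also discounted, so the half-life would need careful tuning.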

In conclusion, the paper provides the first large‑scale empirical evidence that community‑based fact‑checking systems are vulnerable to polarized rating behavior after notes become public. Even when an initial broad consensus is achieved, the dynamic rating process can generate new partisan backlash that leads to note disappearance. Future work should explore more sophisticated viewpoint modeling, real‑time bias detection, and hybrid human‑machine verification pipelines to ensure that consensus‑based fact‑checking remains both accurate and durable over time.
