Relevance-aware Multi-context Contrastive Decoding for Retrieval-augmented Visual Question Answering

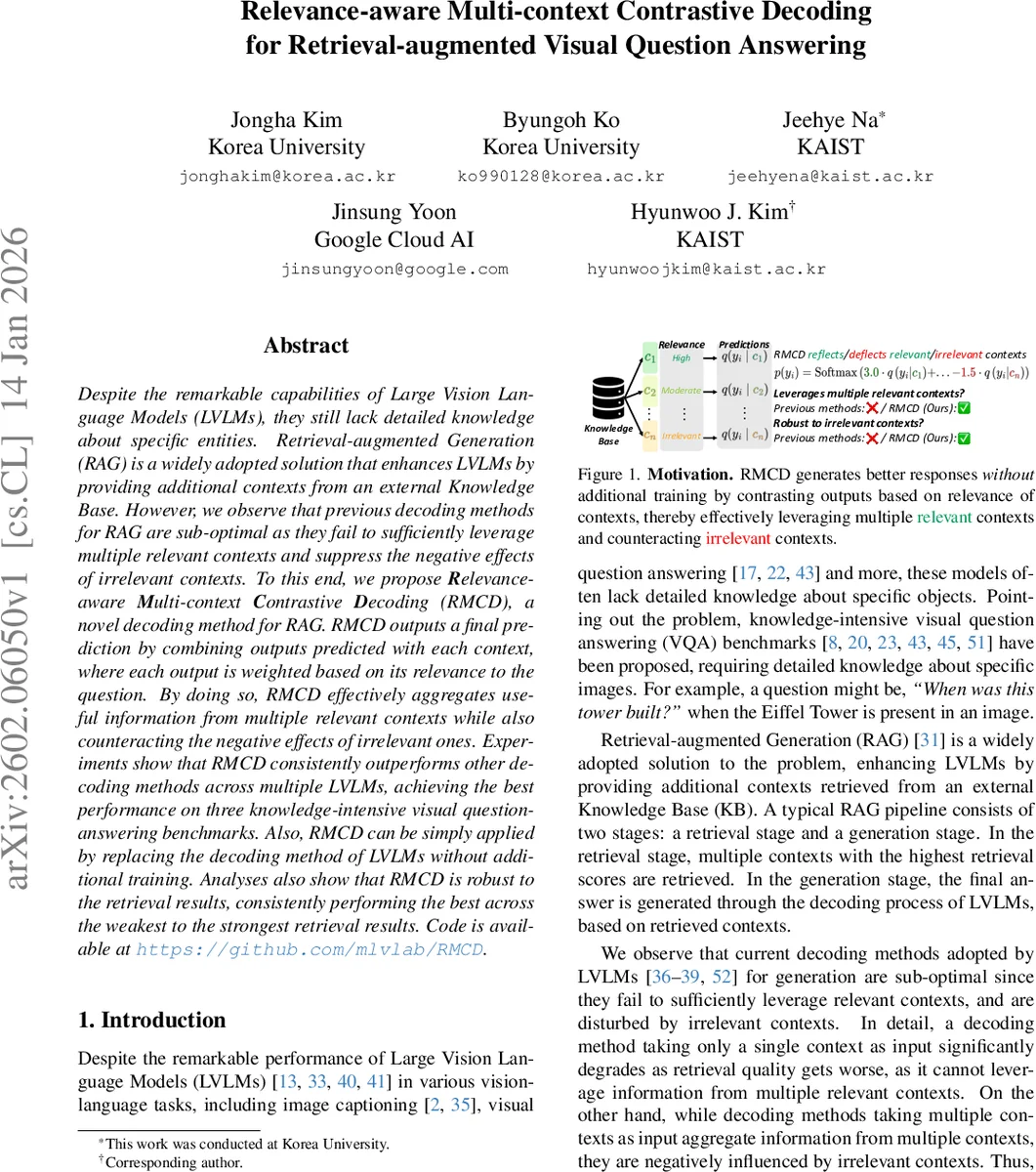

Despite the remarkable capabilities of Large Vision Language Models (LVLMs), they still lack detailed knowledge about specific entities. Retrieval-augmented Generation (RAG) is a widely adopted solution that enhances LVLMs by providing additional contexts from an external Knowledge Base. However, we observe that previous decoding methods for RAG are sub-optimal as they fail to sufficiently leverage multiple relevant contexts and suppress the negative effects of irrelevant contexts. To this end, we propose Relevance-aware Multi-context Contrastive Decoding (RMCD), a novel decoding method for RAG. RMCD outputs a final prediction by combining outputs predicted with each context, where each output is weighted based on its relevance to the question. By doing so, RMCD effectively aggregates useful information from multiple relevant contexts while also counteracting the negative effects of irrelevant ones. Experiments show that RMCD consistently outperforms other decoding methods across multiple LVLMs, achieving the best performance on three knowledge-intensive visual question-answering benchmarks. Also, RMCD can be simply applied by replacing the decoding method of LVLMs without additional training. Analyses also show that RMCD is robust to the retrieval results, consistently performing the best across the weakest to the strongest retrieval results. Code is available at https://github.com/mlvlab/RMCD.

💡 Research Summary

Large Vision‑Language Models (LVLMs) have achieved impressive results on a variety of multimodal tasks, yet they still struggle when a question requires detailed factual knowledge about specific entities. Retrieval‑augmented Generation (RAG) addresses this gap by fetching textual passages from an external knowledge base and feeding them to the LVLM during generation. Existing decoding strategies for RAG are either single‑context (using only the top‑ranked passage) or multi‑context (concatenating several passages). The former degrades sharply when retrieval quality drops, while the latter can be polluted by irrelevant passages, leading to hallucinations or incorrect answers.

The paper introduces Relevance‑aware Multi‑context Contrastive Decoding (RMCD), a training‑free decoding technique that explicitly leverages the relevance scores of each retrieved passage. For every context cⱼ (including an “empty” context that represents unconditional generation), the LVLM produces a separate logit vector q(yᵢ|cⱼ). The retrieval score s_{cⱼ} is first normalized with a temperature τ₁ to obtain a relative weight w_{cⱼ}. A second temperature τ₂ maps w_{cⱼ} to a final context weight αⱼ, which can be positive or negative. Positive αⱼ amplify the contribution of highly relevant passages (reflection), while negative αⱼ suppress the influence of low‑scoring passages (deflection). The final token distribution is the softmax of the weighted sum Σⱼ αⱼ·q(yᵢ|cⱼ).

Key technical contributions:

- Contrastive multi‑context aggregation – unlike prior single‑context contrastive decoding (SCD), RMCD simultaneously processes multiple passages, allowing it to retain useful information even when some retrieved items are noisy.

- Score‑driven adaptive weighting – the two‑temperature scheme provides fine‑grained control over how strongly each passage influences the output, and the ability to assign negative weights gives a principled way to “deflect” irrelevant information.

- Training‑free integration – RMCD does not require any fine‑tuning of the LVLM; it merely calls the model multiple times (once per context) and combines the results, making it compatible with any existing LVLM architecture.

- Efficiency – because the combination is a simple weighted sum, RMCD has the lowest computational overhead among multi‑context methods, achieving roughly a 33 % throughput gain compared to attention‑based fusion approaches.

Experiments were conducted on three knowledge‑intensive VQA benchmarks—InfoSeek, Encyclopedic VQA, and OK‑VQA—using seven state‑of‑the‑art LVLMs (e.g., BLIP‑2, InstructBLIP, LLaVA, MiniGPT‑4). RMCD consistently outperformed baseline decoding strategies, delivering absolute accuracy improvements ranging from 1.8 to 3.2 percentage points across datasets and models. Notably, when retrieval quality was poor (top‑k recall below 30 %), RMCD’s advantage widened to 5–7 % points, demonstrating robustness to noisy retrieval. An “oracle” experiment, where the perfect passage is always included, showed that RMCD still achieved the highest scores, confirming that its weighting scheme effectively balances reflection and deflection without over‑relying on any single passage.

Ablation studies examined the impact of the temperature hyper‑parameters and the number of contexts. The authors found that using 5–10 contexts strikes a good trade‑off between performance and memory consumption; beyond that, the per‑context forward passes become a bottleneck. Visualizations of αⱼ values illustrate that high‑scoring passages receive strong positive weights, while low‑scoring ones receive small or negative weights, directly confirming the intended behavior.

Limitations include the linear increase in GPU memory and compute with the number of contexts, as each context requires a separate forward pass through the LVLM. Future work could explore context selection or compression techniques to keep the method scalable. Additionally, the current weighting relies solely on the retriever’s raw scores; incorporating semantic similarity between the question and passage could further refine αⱼ.

In summary, RMCD offers a simple yet powerful way to make RAG‑based visual question answering more reliable. By dynamically reflecting relevant knowledge and deflecting irrelevant noise, it achieves state‑of‑the‑art results across multiple LVLMs and datasets without any additional training. The code and pretrained models are publicly released, facilitating immediate adoption in downstream multimodal reasoning tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment