STEMVerse: A Dual-Axis Diagnostic Framework for STEM Reasoning in Large Language Models

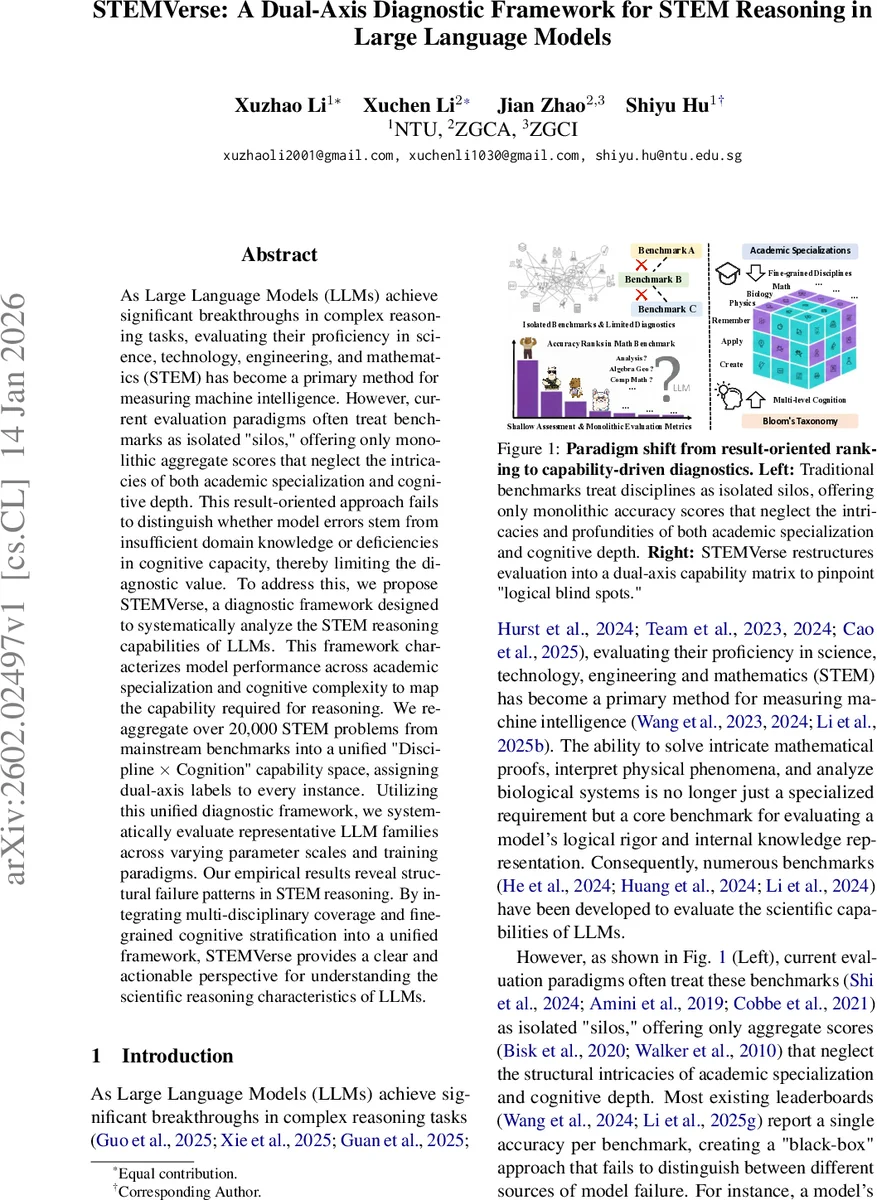

As Large Language Models (LLMs) achieve significant breakthroughs in complex reasoning tasks, evaluating their proficiency in science, technology, engineering, and mathematics (STEM) has become a primary method for measuring machine intelligence. However, current evaluation paradigms often treat benchmarks as isolated “silos,” offering only monolithic aggregate scores that neglect the intricacies of both academic specialization and cognitive depth. This result-oriented approach fails to distinguish whether model errors stem from insufficient domain knowledge or deficiencies in cognitive capacity, thereby limiting the diagnostic value. To address this, we propose STEMVerse, a diagnostic framework designed to systematically analyze the STEM reasoning capabilities of LLMs. This framework characterizes model performance across academic specialization and cognitive complexity to map the capability required for reasoning. We re-aggregate over 20,000 STEM problems from mainstream benchmarks into a unified “Discipline $\times$ Cognition” capability space, assigning dual-axis labels to every instance. Utilizing this unified diagnostic framework, we systematically evaluate representative LLM families across varying parameter scales and training paradigms. Our empirical results reveal structural failure patterns in STEM reasoning. By integrating multi-disciplinary coverage and fine-grained cognitive stratification into a unified framework, STEMVerse provides a clear and actionable perspective for understanding the scientific reasoning characteristics of LLMs.

💡 Research Summary

The paper introduces STEMVerse, a diagnostic framework that re‑organizes more than 20,000 STEM problems from a wide range of existing benchmarks into a unified “Discipline × Cognition” capability space. Each problem is stripped of its original label and mapped onto two orthogonal axes: (1) an academic specialization axis that distinguishes 27 fine‑grained sub‑disciplines across Mathematics, Physics, Chemistry, and Biology, and (2) a cognitive‑complexity axis derived from Bloom’s taxonomy (Remember, Understand, Apply, Analyze, Evaluate, Create). This dual‑axis matrix replaces the traditional “single‑score” leaderboard with a spectral view of model performance, allowing researchers to pinpoint exactly where a model’s reasoning breaks down—whether due to missing domain knowledge or insufficient high‑order reasoning ability.

Using this framework, the authors evaluate several open‑source LLM families (Qwen and Llama series) across four parameter scales (3 B, 4 B, 8 B, 14 B) and different training paradigms (pre‑training, instruction‑tuning). The empirical findings reveal three major patterns. First, performance grows roughly linearly with model size on low‑level cognitive tasks (Remember, Understand, Apply) but plateaus or even collapses on higher‑order tasks (Analyze, Evaluate, Create). This “logic‑symbolic collapse” shows that larger models still struggle with multi‑step causal reasoning and creative synthesis.

Second, there is a pronounced discipline‑specific gap. Symbol‑heavy fields such as differential equations, quantum mechanics, and computational chemistry exhibit markedly lower accuracies than concept‑heavy fields like ecology or genetics, even for the same model size. This suggests current LLMs are better at procedural or formulaic execution than at integrating diverse scientific concepts.

Third, by intersecting the two axes the authors uncover “discipline‑cognition bottlenecks”: for many sub‑disciplines, performance drops sharply when moving from the Analyze to the Evaluate level. For example, problems in thermodynamics or linear algebra maintain ~70 % accuracy up to the Analyze stage but fall below 30 % at Evaluation. Such bottlenecks indicate that models often capture surface patterns but fail to grasp deeper structural relationships required for higher‑order judgment.

The paper argues that these diagnostic insights should guide future model development. It recommends (a) richer multi‑step Chain‑of‑Thought prompting and step‑wise feedback to strengthen high‑order reasoning, (b) domain‑specific fine‑tuning that explicitly teaches symbolic manipulation and logical operators, and (c) the continual expansion of benchmark suites to include more high‑cognition, cross‑disciplinary problems.

In sum, STEMVerse provides a systematic, fine‑grained evaluation methodology that moves beyond aggregate accuracy scores. By mapping each problem onto a two‑dimensional capability grid, it makes the hidden structure of LLM STEM reasoning visible, enabling researchers to diagnose knowledge gaps versus reasoning gaps, track non‑linear scaling trends, and design targeted interventions for the next generation of scientific language models.

Comments & Academic Discussion

Loading comments...

Leave a Comment