PrivGemo: Privacy-Preserving Dual-Tower Graph Retrieval for Empowering LLM Reasoning with Memory Augmentation

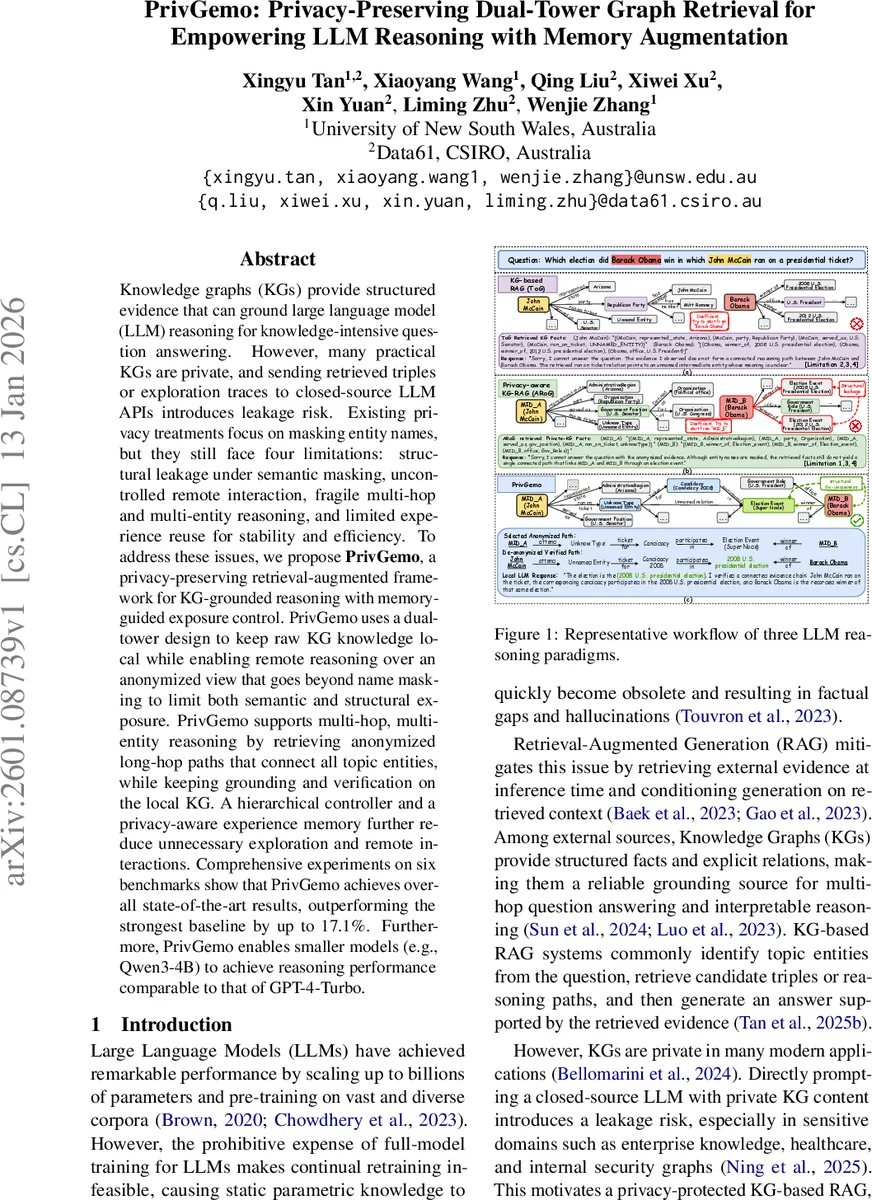

Knowledge graphs (KGs) provide structured evidence that can ground large language model (LLM) reasoning for knowledge-intensive question answering. However, many practical KGs are private, and sending retrieved triples or exploration traces to closed-source LLM APIs introduces leakage risk. Existing privacy treatments focus on masking entity names, but they still face four limitations: structural leakage under semantic masking, uncontrollable remote interaction, fragile multi-hop and multi-entity reasoning, and limited experience reuse for stability and efficiency. To address these issues, we propose PrivGemo, a privacy-preserving retrieval-augmented framework for KG-grounded reasoning with memory-guided exposure control. PrivGemo uses a dual-tower design to keep raw KG knowledge local while enabling remote reasoning over an anonymized view that goes beyond name masking to limit both semantic and structural exposure. PrivGemo supports multi-hop, multi-entity reasoning by retrieving anonymized long-hop paths that connect all topic entities, while keeping grounding and verification on the local KG. A hierarchical controller and a privacy-aware experience memory further reduce unnecessary exploration and remote interactions. Comprehensive experiments on six benchmarks show that PrivGemo achieves overall state-of-the-art results, outperforming the strongest baseline by up to 17.1%. Furthermore, PrivGemo enables smaller models (e.g., Qwen3-4B) to achieve reasoning performance comparable to that of GPT-4-Turbo.

💡 Research Summary

PrivGemo tackles the critical challenge of using private knowledge graphs (KGs) with large language models (LLMs) without exposing sensitive data to external APIs. Existing privacy‑preserving KG‑RAG methods rely mainly on semantic masking—replacing entity names with meaningless identifiers—but they still leak structural information through unique connectivity patterns and repeated exploration traces. The authors identify four key limitations of prior work: (L1) semantic masking alone is insufficient to prevent structural leakage; (L2) remote LLM interaction is uncontrolled, leading to excessive exposure; (L3) multi‑hop and multi‑entity reasoning is fragile because current pipelines explore each topic entity separately and prune globally correct paths early; and (L4) there is no mechanism to reuse successful reasoning experiences, causing redundant exploration and higher cost.

PrivGemo introduces a dual‑tower architecture that separates a local “Hand” LLM from a remote “Brain” LLM. The Hand LLM stays on‑premises, handling raw KG access, topic‑entity extraction, sub‑graph construction, de‑anonymization, and final answer verification. The Brain LLM only sees an anonymized view of the KG, denoted ˜G_Q, which is generated per‑session using a fresh secret key s_Q and a HMAC‑based mapping ϕ_Q. This mapping replaces each entity and relation with a short, session‑specific token (e.g., ent_17) and optionally tags them with coarse schema types, while literals are coarsened (dates rounded, numbers bucketed). By discarding the secret after the session, cross‑session linkability is eliminated.

To mitigate structural leakage, PrivGemo applies “structure‑level de‑uniqueness” to ˜G_Q. Rare motifs and uniquely identifying sub‑graph signatures are either generalized or removed, ensuring that repeated queries do not reveal the same distinctive topology. This goes beyond simple name masking and reduces the risk of re‑identification from graph structure alone.

For robust multi‑hop, multi‑entity reasoning, the framework employs an indicator‑guided long‑hop path retrieval mechanism. Given a question Q, the system first identifies all topic entities T(Q) using a local LLM and a dense retrieval model. It then expands a bounded sub‑graph G_raw^Q around these anchors up to D_max hops. Instead of greedy hop‑by‑hop expansion, PrivGemo searches for a single connected reasoning path that simultaneously covers all topic entities. This “entity path” is presented to the Brain LLM as candidate paths, which selects the most plausible one under the anonymized view. The Hand LLM subsequently de‑anonymizes the selected path, verifies its correctness against the raw KG, and composes the final answer.

A central contribution is the privacy‑aware experience memory. Each successful session stores an embedding of the anonymized question ˜Q together with an “indicator” I that captures the reasoning pattern (e.g., the chosen path shape). During later inference, the Hand LLM queries this memory to retrieve similar past experiences, allowing it to skip unnecessary Brain calls or to guide the Brain’s selection process. Verified artifacts are encrypted and written back, forming a continuously evolving repository that improves efficiency and reduces exposure over time.

Extensive experiments on six benchmark datasets—including standard KG‑QA and multi‑hop question answering tasks—demonstrate that PrivGemo achieves state‑of‑the‑art performance, outperforming the strongest baseline by up to 17.1 percentage points in accuracy. Notably, a small 4‑billion‑parameter model (Qwen‑3‑4B) equipped with PrivGemo matches the reasoning performance of GPT‑4‑Turbo, highlighting the framework’s ability to close the gap between model size and capability while preserving privacy.

The authors also emphasize practical advantages: PrivGemo is plug‑and‑play, requiring only a local KG and access to any LLM API for the Brain component; it automatically incorporates new KG facts without costly model fine‑tuning; and its hierarchical controller, combined with the experience memory, yields bounded exposure and predictable cost. The paper concludes with suggestions for future work, such as scaling the experience memory, extending to multimodal evidence, and applying the framework to highly regulated domains like healthcare and finance.

In summary, PrivGemo presents a comprehensive solution that jointly addresses semantic and structural privacy, controlled remote reasoning, robust multi‑hop inference, and experience‑driven efficiency, establishing a new paradigm for privacy‑preserving retrieval‑augmented generation with knowledge graphs.

Comments & Academic Discussion

Loading comments...

Leave a Comment