CtrlFuse: Mask-Prompt Guided Controllable Infrared and Visible Image Fusion

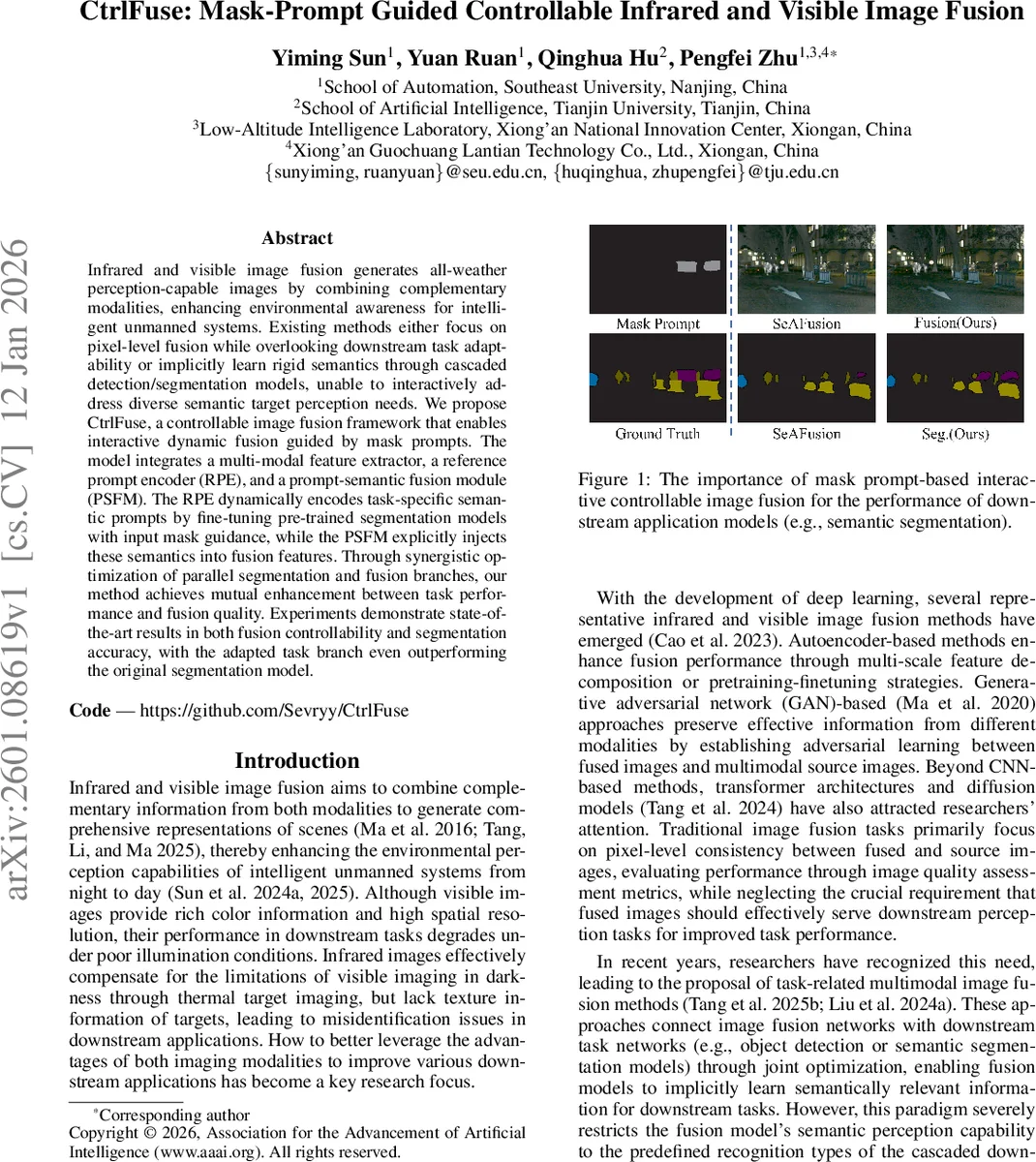

Infrared and visible image fusion generates all-weather perception-capable images by combining complementary modalities, enhancing environmental awareness for intelligent unmanned systems. Existing methods either focus on pixel-level fusion while overlooking downstream task adaptability or implicitly learn rigid semantics through cascaded detection/segmentation models, unable to interactively address diverse semantic target perception needs. We propose CtrlFuse, a controllable image fusion framework that enables interactive dynamic fusion guided by mask prompts. The model integrates a multi-modal feature extractor, a reference prompt encoder (RPE), and a prompt-semantic fusion module (PSFM). The RPE dynamically encodes task-specific semantic prompts by fine-tuning pre-trained segmentation models with input mask guidance, while the PSFM explicitly injects these semantics into fusion features. Through synergistic optimization of parallel segmentation and fusion branches, our method achieves mutual enhancement between task performance and fusion quality. Experiments demonstrate state-of-the-art results in both fusion controllability and segmentation accuracy, with the adapted task branch even outperforming the original segmentation model.

💡 Research Summary

CtrlFuse introduces a novel paradigm for infrared‑visible image fusion that empowers users to steer the fusion process with mask‑based prompts. Traditional fusion methods focus on pixel‑level fidelity (e.g., minimizing MSE, maximizing PSNR/SSIM) and often ignore how the fused output serves downstream tasks such as object detection or semantic segmentation. Recent task‑driven approaches attempt to bridge this gap by jointly optimizing a fusion network with a downstream model, but they remain rigid: the semantics learned are fixed to the pre‑trained downstream model’s categories, and they cannot adapt on‑the‑fly to new or user‑specified targets.

The proposed framework consists of four main components: (1) a multimodal backbone encoder‑decoder that extracts features from the infrared (I_ir) and visible (I_vis) inputs, (2) a Reference Prompt Encoder (RPE) that transforms a user‑provided binary mask into a dynamic semantic prompt, (3) a Prompt‑Semantic Fusion Module (PSFM) that explicitly injects the prompt and the segmentation masks produced by a foundation segmentation model into the fusion features, and (4) a frozen Segment Anything Model (SAM) that supplies high‑quality, zero‑shot segmentation masks and a prompt encoder.

RPE works by treating the infrared (or visible) feature map as a “support” and the concatenated multimodal feature as a “query”. The mask is element‑wise multiplied with the support feature, average‑pooled to obtain a region‑enhancement vector F_t, and then concatenated with both support and query features before a convolutional projection. The resulting support (F_supp) and query (F_query) tensors are processed through a cross‑attention layer followed by self‑attention, using a set of 40 learnable query tokens Q. This yields refined queries Q′ that attend to the most salient regions, which are then cross‑attended with F_query to produce reference prompts P′. P′ is fed into SAM’s frozen Prompt Encoder to generate the final prompt embedding P.

PSFM receives three inputs for each modality: the original encoded feature F, the prompt embedding P, and the SAM‑generated mask M. After down‑sampling F, the feature sequence is cross‑attended with P, reshaped back to spatial dimensions, up‑sampled, and finally multiplied element‑wise with M. The result is a prompt‑enhanced feature F_p that emphasizes the user‑specified semantic region. F_p is added to the preliminary fused feature (the concatenation of infrared and visible features) to produce the final fused representation, which the decoder converts into the output fused image I_F.

Training optimizes two losses simultaneously: a fusion loss L_fusion (comprising PSNR, SSIM, Nabf, etc.) that encourages high visual fidelity, and a segmentation loss L_seg (e.g., Dice + cross‑entropy) that forces SAM’s masks to align with ground‑truth labels. The dual‑objective setup creates a synergistic loop: better segmentation prompts improve fusion quality, and higher‑quality fused images provide richer cues for segmentation, leading to mutual performance gains.

Experiments were conducted on three public datasets: FMB (1,500 IR‑VIS pairs with semantic labels), MSRS (1,444 pairs), and DroneVehicle (200 pairs with pseudo‑labels). CtrlFuse was compared against eight state‑of‑the‑art methods, including CLIP‑based LDfusion, autoencoder‑based NestFuse, decomposition networks DIDFuse and CDDFuse, transformer‑based SwinFuse, segmentation‑driven SeAFusion and SDCFusion, and high‑level task‑driven PSFusion. Quantitatively, CtrlFuse achieved the highest PSNR and Nabf scores across FMB and MSRS, indicating superior preservation of detail and reduced distortion. More importantly, when a mask prompting the “Car” class was supplied, the semantic segmentation mIoU improved by 4–6 % over the best baseline, demonstrating effective controllability. Qualitative visualizations showed that the fused images retained thermal signatures from infrared while preserving texture and color from the visible modality, and that the targeted objects were markedly sharper when prompted.

Key contributions are: (1) a concise, interactive fusion paradigm that leverages mask prompts for dynamic semantic control, (2) the Reference Prompt Encoder that generates task‑specific prompts on the fly, (3) the Prompt‑Semantic Fusion Module that explicitly merges prompts, segmentation masks, and multimodal features, and (4) extensive validation showing that joint optimization of fusion and segmentation yields mutually beneficial improvements.

Limitations include the high memory footprint of the frozen SAM when processing high‑resolution images, and the added computational overhead of the attention‑heavy RPE and PSFM, which may hinder real‑time deployment. The current design supports only binary mask prompts; extending to natural‑language prompts or multi‑class masks would broaden applicability. Future work could explore lightweight SAM variants, diffusion‑based prompt integration, and hardware‑accelerated implementations to achieve real‑time, multi‑modal, controllable fusion for autonomous vehicles, surveillance drones, and search‑and‑rescue robots.

In summary, CtrlFuse bridges the gap between low‑level multimodal image fusion and high‑level semantic requirements by introducing a mask‑prompt guided, jointly optimized framework that delivers both visually superior fused images and task‑adaptable semantic control, setting a new direction for intelligent perception systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment