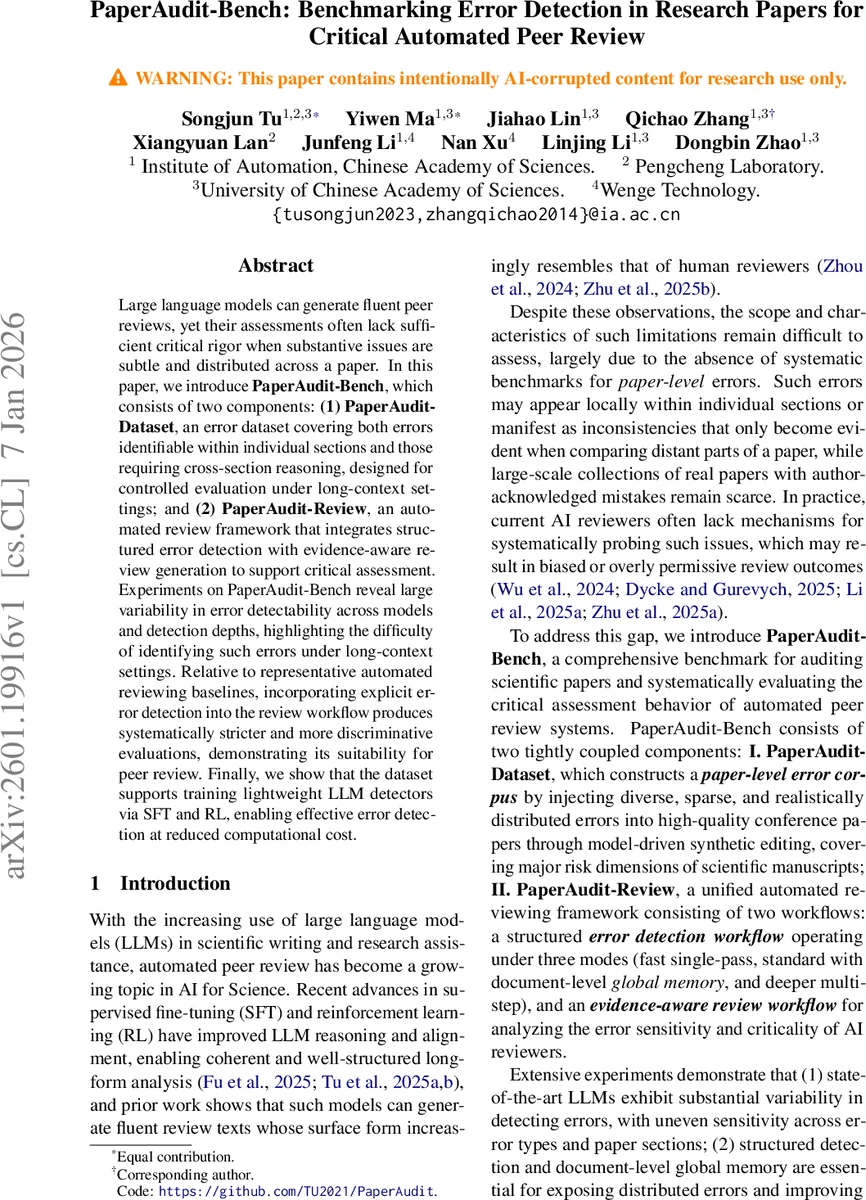

PaperAudit-Bench: Benchmarking Error Detection in Research Papers for Critical Automated Peer Review

Large language models can generate fluent peer reviews, yet their assessments often lack sufficient critical rigor when substantive issues are subtle and distributed across a paper. In this paper, we introduce PaperAudit-Bench, which consists of two components: (1) PaperAudit-Dataset, an error dataset covering both errors identifiable within individual sections and those requiring cross-section reasoning, designed for controlled evaluation under long-context settings; and (2) PaperAudit-Review, an automated review framework that integrates structured error detection with evidence-aware review generation to support critical assessment. Experiments on PaperAudit-Bench reveal large variability in error detectability across models and detection depths, highlighting the difficulty of identifying such errors under long-context settings. Relative to representative automated reviewing baselines, incorporating explicit error detection into the review workflow produces systematically stricter and more discriminative evaluations, demonstrating its suitability for peer review. Finally, we show that the dataset supports training lightweight LLM detectors via SFT and RL, enabling effective error detection at reduced computational cost.

💡 Research Summary

PaperAudit‑Bench addresses a critical gap in automated peer review: the ability to detect subtle, distributed errors that span long documents. The work introduces two tightly coupled components. First, the PaperAudit‑Dataset is a synthetic error corpus built from 220 oral papers from ICLR, ICML, and NeurIPS 2025. For each source paper, multiple large language models generate 10‑20 realistic errors across eight predefined categories (e.g., abstract‑experiment mismatch, statistical misinterpretation, reproducibility gaps, missing citations, ethical concerns, formula errors, logical leaps, and misconduct). After rigorous human filtering, each paper contains roughly 15 injected errors, yielding a sparse error density of about 1‑2 errors per 10 K tokens, which mirrors real‑world review conditions where critical issues are rare. The dataset is provided in a structured JSON format with section labels, enabling precise long‑context evaluation.
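The density figure is simple arithmetic over the injected-error count and the paper length. A minimal sketch of that calculation follows, assuming a hypothetical record layout; the names `InjectedError`, `AuditedPaper`, and their fields are illustrative and are not taken from the released JSON schema.

```python
from dataclasses import dataclass, field


@dataclass
class InjectedError:
    """One synthetic error (hypothetical layout, not the dataset's schema)."""
    category: str      # e.g. "statistical misinterpretation"
    section: str       # label of the section where the error was injected
    description: str


@dataclass
class AuditedPaper:
    """A paper plus its injected errors (hypothetical layout)."""
    paper_id: str
    token_count: int
    errors: list[InjectedError] = field(default_factory=list)


def errors_per_10k_tokens(paper: AuditedPaper) -> float:
    """Return the number of injected errors per 10,000 tokens."""
    if paper.token_count <= 0:
        raise ValueError("token_count must be positive")
    return len(paper.errors) * 10_000 / paper.token_count


# Illustrative numbers only: 15 errors in a 100,000-token paper.
example = AuditedPaper(
    paper_id="example",
    token_count=100_000,
    errors=[InjectedError("formula error", "Method", "sign flipped") for _ in range(15)],
)
density = errors_per_10k_tokens(example)
```

With these illustrative inputs the density lands inside the 1‑2 errors per 10 K tokens range quoted above.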

Second, the PaperAudit‑Review framework couples structured error detection with evidence‑aware review generation. Detection operates in three modes of increasing analytical depth: (1) Fast – a single‑pass scan of the whole document without section awareness, optimized for speed; (2) Standard – section‑aware processing with a shared global memory that allows each section to be examined while retaining cross‑section context; (3) Deep – a plan‑driven, multi‑agent pipeline that assigns specialized agents to particular error types, optionally retrieves external artifacts (code, datasets), and performs iterative reasoning. The review component consumes the detection output to produce a more critical, evidence‑grounded review, explicitly citing detected issues and suggesting fixes.
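The Standard mode can be pictured as a loop over sections that carries a shared memory forward. The sketch below is an assumption-laden illustration, not the authors' implementation: `standard_mode`, the `Detector` signature, and the toy percentage-matching detector are all hypothetical stand-ins for what would be an LLM call in practice.

```python
import re
from typing import Callable

# A detector receives (section label, section text, shared memory) and returns issues.
Detector = Callable[[str, str, list[str]], list[str]]

PERCENT = re.compile(r"\d+(?:\.\d+)?%")


def standard_mode(sections: dict[str, str], detect: Detector) -> list[tuple[str, str]]:
    """Examine sections in order while accumulating cross-section context."""
    memory: list[str] = []                 # shared global memory (hypothetical form)
    findings: list[tuple[str, str]] = []
    for label, text in sections.items():
        for issue in detect(label, text, memory):
            findings.append((label, issue))
        # Store a short note so that later sections can refer back to this one.
        memory.append(f"{label}: {text[:200]}")
    return findings


def toy_detect(label: str, text: str, memory: list[str]) -> list[str]:
    """Toy stand-in for an LLM: flag percentages that match nothing seen earlier."""
    earlier = {p for note in memory for p in PERCENT.findall(note)}
    current = set(PERCENT.findall(text))
    if earlier and current and not (earlier & current):
        return ["reported figure differs from earlier sections"]
    return []


paper = {
    "abstract": "We reach 95% accuracy.",
    "experiments": "The model reaches 85% accuracy.",
}
findings = standard_mode(paper, toy_detect)
```

In this toy run the mismatch is attributed to the experiments section, because only then does the memory contain the abstract's claim. A Fast-mode analogue would call the detector once on the concatenated text with no memory at all.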

Extensive experiments evaluate several state‑of‑the‑art LLMs (GPT‑4, Claude‑2, LLaMA‑2‑70B) across the three detection modes. Detection rates vary widely by model and mode: GPT‑4 detects 45 % of errors in Fast mode and 78 % in Deep mode, while Claude‑2 performs best in Standard mode (≈60 % detection) but struggles with cross‑section reasoning. Cross‑section errors such as abstract‑experiment inconsistencies are reliably caught only in Deep mode, underscoring the necessity of planning and multi‑step reasoning for long‑context tasks. The authors also compare the integrated review workflow against existing automated reviewers (e.g., DeepReview, Zhu et al. 2025b). Incorporating explicit error detection lowers overall scores by 0.6 points on average and raises scores on the “criticality” and “evidence‑based” dimensions, which indicates that the system mitigates the overly permissive bias of vanilla LLM reviewers.
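The percentages above can be read as recall over the injected errors. The sketch below shows one way to compute such a rate, assuming errors are matched by identifier; the matching rule and the function name `detection_rate` are assumptions, since the summary does not state how a detection is judged correct.

```python
def detection_rate(injected: set[str], detected: set[str]) -> float:
    """Fraction of injected errors that the detector reported.

    Reported items that match no injected error are ignored here, so this
    measures recall only and says nothing about false alarms.
    """
    if not injected:
        raise ValueError("at least one injected error is required")
    return len(injected & detected) / len(injected)


# Illustrative identifiers only: 20 injected errors, 9 found, 1 spurious report.
injected_ids = {f"err-{i}" for i in range(20)}
reported_ids = {f"err-{i}" for i in range(9)} | {"spurious-1"}
rate = detection_rate(injected_ids, reported_ids)
```

Computing the same rate separately for within-section and cross-section errors would expose the gap between modes that the aggregate number hides.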

A further contribution is the demonstration that lightweight detectors (≈3 B parameters) can be trained via supervised fine‑tuning (SFT) and reinforcement learning (RL) on the PaperAudit‑Dataset. These compact models stay within 5 % of state‑of‑the‑art detection performance while running roughly four times faster, which makes large‑scale, cost‑effective auditing feasible.

In summary, PaperAudit‑Bench provides (1) a realistic, long‑context benchmark for paper‑level error detection, (2) a flexible, multi‑depth detection framework that balances computational cost against thoroughness, and (3) empirical evidence that integrating error detection into the review pipeline produces more disciplined, discriminative automated reviews. The paper suggests future work on human‑in‑the‑loop evaluation, expanding error categories, and leveraging multimodal evidence (tables, figures, code) to further enhance automated scientific auditing.
