Adaptive Framework for Failure-Aware Protocols in Fusion-Based Graph-State Generation

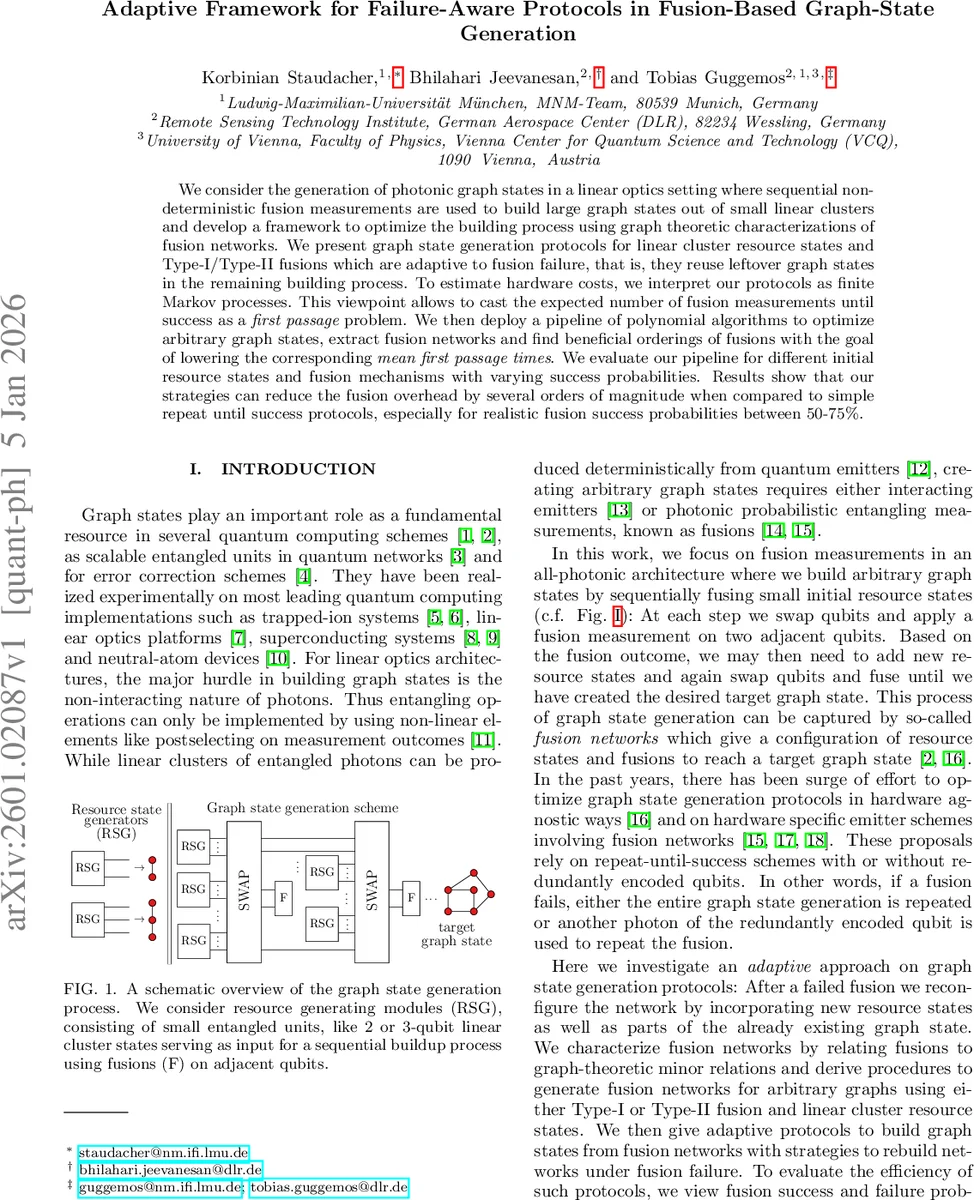

We consider the generation of photonic graph states in a linear-optics setting where sequential non-deterministic fusion measurements are used to build large graph states out of small linear clusters, and we develop a framework to optimize the building process using graph-theoretic characterizations of fusion networks. We present graph-state generation protocols for linear-cluster resource states and Type-I/Type-II fusions that are adaptive to fusion failure; that is, they reuse leftover graph states in the remaining building process. To estimate hardware costs, we interpret our protocols as finite Markov processes. This viewpoint allows us to cast the expected number of fusion measurements until success as a first-passage problem. We then deploy a pipeline of polynomial-time algorithms to optimize arbitrary graph states, extract fusion networks, and find beneficial orderings of fusions, with the goal of lowering the corresponding mean first-passage times. We evaluate our pipeline for different initial resource states and fusion mechanisms with varying success probabilities. Results show that our strategies can reduce the fusion overhead by several orders of magnitude compared to simple repeat-until-success protocols, especially for realistic fusion success probabilities between 50 % and 75 %.

💡 Research Summary

The paper presents a comprehensive framework for generating large photonic graph states in linear‑optics platforms where entangling operations are realized by non‑deterministic fusion measurements. Starting from the standard stabilizer description of graph states, the authors translate local Clifford operations into graph‑theoretic rewrites—local complementation and pivoting—and use these to define “fusion networks”. A fusion network consists of an initial resource graph H together with a set F of edges that are to be fused. Successful execution of all fusions in F transforms H into the desired target graph G. Two widely used fusion gates are considered: Type‑I (single‑photon detection) and Type‑II (two‑photon detection in the {XZ, ZX} basis). For Type‑I, success contracts the fused edge, while failure removes both endpoints. For Type‑II, success corresponds to a pivot operation followed by deletion of the fused vertices; failure results in a more intricate partial pivot on a neighboring vertex.
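The Type-I rules described above can be illustrated with a minimal sketch. It assumes a plain adjacency-set representation and hypothetical helper names (`fuse_type1_success`, `fuse_type1_failure`). Success is modelled as ordinary graph contraction of the fused pair, which is only a reasonable reading when the two endpoints share no neighbours. None of this is taken from the paper's own implementation.

```python
def fuse_type1_success(adj, u, v):
    """Assumed success rule: contract the fused pair (u, v) into u."""
    # Copy every vertex except v, which is merged into u.
    new = {w: set(nbrs) for w, nbrs in adj.items() if w != v}
    for w in adj[v]:
        if w != u:
            new[u].add(w)
            new[w].add(u)
    # Remove dangling references to the merged vertex and any self-loop.
    for w in new:
        new[w].discard(v)
    new[u].discard(u)
    return new


def fuse_type1_failure(adj, u, v):
    """Assumed failure rule: both fused endpoints are removed."""
    gone = {u, v}
    return {w: nbrs - gone for w, nbrs in adj.items() if w not in gone}


# Two 3-vertex linear clusters a0-a1-a2 and b0-b1-b2 (illustrative labels).
resource = {
    "a0": {"a1"}, "a1": {"a0", "a2"}, "a2": {"a1"},
    "b0": {"b1"}, "b1": {"b0", "b2"}, "b2": {"b1"},
}

merged = fuse_type1_success(resource, "a2", "b0")   # 5-vertex path
leftover = fuse_type1_failure(resource, "a2", "b0")  # two 2-vertex fragments
```

In this toy case a success yields the path a0-a1-a2-b1-b2, while a failure leaves the fragments a0-a1 and b1-b2, which are the kind of leftover states an adaptive protocol would try to reuse.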

The authors model the stochastic evolution of a fusion process as a finite Markov chain. Each state of the chain encodes the current residual graph and the remaining unfused edges; transitions occur with probability p (fusion success) or 1‑p (failure). The expected number of fusion attempts required to reach the target state is the mean first‑passage time (MFPT), which can be obtained by solving the linear system (I‑Q)t = 1, where Q is the transition matrix P restricted to the non‑target (transient) states, 1 is the all‑ones vector, and the entry of t for the initial state is the MFPT. The restriction matters: with the absorbing target state included, I‑P is singular. This formalism provides a quantitative cost metric for any given protocol.
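As a rough illustration of the first-passage computation, the sketch below solves the linear system for the expected hitting times over the non-target states of a toy chain. The chain is an assumption made for this example, not the paper's state space: state k counts completed fusions out of n, each attempt succeeds with probability p, and the two hypothetical failure rules either restart from zero or lose only one step.

```python
def solve(A, b):
    """Gauss-Jordan elimination with partial pivoting for A x = b."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(n):
            if r != col:
                f = M[r][col] / M[col][col]
                M[r] = [x - f * y for x, y in zip(M[r], M[col])]
    return [M[i][n] / M[i][i] for i in range(n)]


def mfpt(n, p, on_failure):
    """Expected attempts to go from state 0 to the absorbing state n.

    Q holds transitions among the transient states 0..n-1 only;
    on_failure(k) names the transient state reached after a failure in k.
    """
    Q = [[0.0] * n for _ in range(n)]
    for k in range(n):
        if k + 1 < n:                # a success into state n leaves Q
            Q[k][k + 1] += p
        Q[k][on_failure(k)] += 1 - p
    A = [[(1.0 if i == j else 0.0) - Q[i][j] for j in range(n)]
         for i in range(n)]
    return solve(A, [1.0] * n)[0]


restart = mfpt(3, 0.5, lambda k: 0)               # failure discards everything
recycle = mfpt(3, 0.5, lambda k: max(k - 1, 0))   # failure costs one step
```

For n = 3 and p = 0.5 the restart rule gives 14 expected attempts and the one-step-back rule gives 12, a small-scale version of the gap between repeat-until-success and failure-aware strategies.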

To minimise MFPT, the paper introduces a three‑stage optimisation pipeline. Stage 1 searches for locally equivalent graphs of the target that have fewer edges, exploiting the fact that local Clifford equivalence can be realised with inexpensive single‑qubit operations. Stage 2 constructs all feasible fusion networks for a chosen resource graph, using a constructive algorithm that adds linear‑cluster modules edge‑by‑edge while keeping track of vertex labelling. Stage 3 determines an advantageous ordering of the fusions. The ordering problem is tackled with dynamic programming and heuristics such as fusing high‑degree vertices first or prioritising edges with higher success probabilities. For each candidate ordering, the MFPT is evaluated via the Markov model, and the ordering with the smallest MFPT is selected.
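The idea behind Stage 1 can be sketched with a brute-force search over the local-complementation orbit of a small graph. This is an exhaustive breadth-first search whose cost grows quickly with graph size, so it is a stand-in for illustration rather than the polynomial algorithm the paper refers to. The function names and the edge-set representation are assumptions.

```python
from collections import deque
from itertools import combinations


def local_complement(edges, v):
    """Toggle every edge between pairs of neighbours of v."""
    nbrs = [w for e in edges if v in e for w in e if w != v]
    toggled = {frozenset(pair) for pair in combinations(nbrs, 2)}
    return frozenset(edges ^ toggled)


def min_edge_lc_equivalent(edges, vertices):
    """Search the local-complementation orbit for a graph with fewest edges."""
    start = frozenset(edges)
    seen = {start}
    queue = deque([start])
    best = start
    while queue:
        g = queue.popleft()
        if len(g) < len(best):
            best = g
        for v in vertices:
            h = local_complement(g, v)
            if h not in seen:
                seen.add(h)
                queue.append(h)
    return best


# Complete graph on 4 vertices: 6 edges.
k4 = frozenset(frozenset(pair) for pair in combinations(range(4), 2))
best = min_edge_lc_equivalent(k4, range(4))
```

For the complete graph on four vertices, a single local complementation already produces a star with three edges, which is the minimum possible for a connected four-vertex graph.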

The authors benchmark their adaptive, failure‑aware protocols against naïve repeat‑until‑success strategies across several scenarios: (i) 2‑qubit Bell‑pair resources with Type‑I fusions, (ii) 3‑qubit linear‑cluster resources with Type‑II fusions, and (iii) mixed configurations. Simulations cover realistic fusion success probabilities ranging from 50 % to 75 %. Results show reductions in the expected number of fusion measurements by two to four orders of magnitude compared with the baseline. The advantage is most pronounced when the success probability is moderate (≈60 %); in the high‑success regime the gain diminishes but remains significant.

Beyond the numerical improvements, the work highlights several conceptual contributions. By treating fusion failures as opportunities to recycle leftover graph fragments, the protocols avoid the costly “restart from scratch” approach common in earlier designs. The Markov‑chain perspective enables real‑time cost estimation, which could be incorporated into experimental control software to adaptively choose the next fusion based on current resource availability. Moreover, the graph‑theoretic formulation is platform‑agnostic: while the paper focuses on photonic dual‑rail encoding, the same ideas apply to any architecture where entangling gates are probabilistic (e.g., ion‑trap mediated gates, superconducting microwave photons).

In summary, the paper delivers a mathematically rigorous, algorithmically efficient, and experimentally relevant strategy for building large‑scale photonic graph states. It bridges stabilizer theory, graph minors, and stochastic process analysis to turn fusion failures into a resource, thereby substantially lowering the overhead required for fault‑tolerant quantum computing and quantum networking applications.
