Non-omniscient backdoor injection with one poison sample: Proving the one-poison hypothesis for linear regression, linear classification, and 2-layer ReLU neural networks

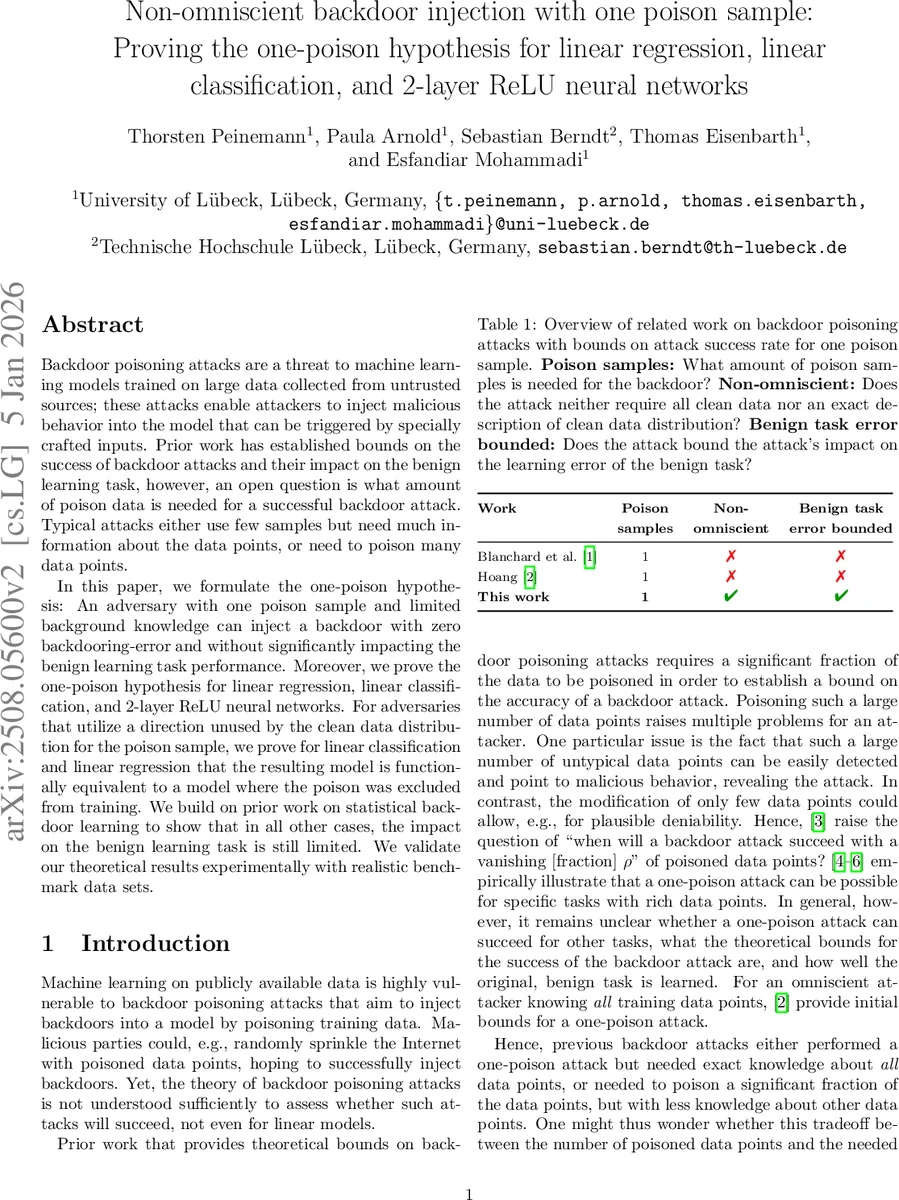

Backdoor poisoning attacks are a threat to machine learning models trained on large data collected from untrusted sources; these attacks enable attackers to inject malicious behavior into the model that can be triggered by specially crafted inputs. Prior work has established bounds on the success of backdoor attacks and their impact on the benign learning task, however, an open question is what amount of poison data is needed for a successful backdoor attack. Typical attacks either use few samples but need much information about the data points, or need to poison many data points. In this paper, we formulate the one-poison hypothesis: An adversary with one poison sample and limited background knowledge can inject a backdoor with zero backdooring-error and without significantly impacting the benign learning task performance. Moreover, we prove the one-poison hypothesis for linear regression, linear classification, and 2-layer ReLU neural networks. For adversaries that utilize a direction unused by the clean data distribution for the poison sample, we prove for linear classification and linear regression that the resulting model is functionally equivalent to a model where the poison was excluded from training. We build on prior work on statistical backdoor learning to show that in all other cases, the impact on the benign learning task is still limited. We validate our theoretical results experimentally with realistic benchmark data sets.

💡 Research Summary

**

The paper addresses a fundamental question in backdoor poisoning attacks: how many malicious training samples are required to reliably embed a backdoor while preserving the performance on the benign task? Existing work either assumes the attacker knows the entire training set (omniscient) or needs a non‑trivial fraction of poisoned data. The authors formulate the “one‑poison hypothesis,” which states that a non‑omniscient attacker, equipped only with the size of the training set and the mean and variance of the clean data distribution projected onto a chosen attack direction u, can succeed with a single poisoned sample.

The threat model is clearly defined: the attacker submits one data point ((x_p, y_p)) and a deterministic patch function. The goal is to achieve backdoor success probability at least (1-\delta) while keeping the statistical risk on clean data bounded. The paper proves this hypothesis for three model families:

-

Linear Classification (regularized hinge loss / SVM) – By selecting a poison vector aligned with a direction u that has zero projection magnitude in the clean data distribution, the attacker can set the gradient of the loss to zero. The resulting classifier is functionally equivalent to the clean model on all clean inputs, yet a test‑time patch that adds a component in direction u flips the prediction to the attacker’s target label. The proof shows that the poisoned model’s parameters satisfy the same KKT conditions as the clean model, guaranteeing zero backdoor error.

-

Linear Regression (regularized squared error) – The gradient of the squared‑error loss is also a weighted sum of training points. By constructing a poison sample whose projection onto u matches the clean data’s mean and variance, the attacker forces the overall gradient to vanish. Consequently, the poisoned regressor yields identical predictions to the clean regressor on all benign inputs (functional equivalence). The backdoor is activated by the same patch that adds the u component, causing the output to shift to the attacker‑chosen target value.

-

Two‑layer ReLU Neural Networks (binary cross‑entropy loss) – Extending the linear analysis, the authors treat the first‑layer weight matrix A and second‑layer vector w as variables. They design a poison sample that lies in a subspace where the first layer is inactive (or minimally active) and adjust w so that the loss gradient contributed by the poison is exactly cancelled by a small adjustment in the clean data gradients. The resulting model’s risk on clean data increases only by (O(1/n)), where n is the training set size, while the backdoor can be triggered by the same patch function used for linear models.

For all three settings, the authors bound the increase in statistical risk on clean data by a term that diminishes with the training set size, demonstrating that the benign task performance is essentially unaffected. In the linear cases, they even prove full functional equivalence between the poisoned and clean models on clean inputs.

The theoretical results are validated empirically on standard benchmarks (MNIST, CIFAR‑10, and several UCI regression datasets). Experiments show backdoor success rates exceeding 99 % with a single poisoned sample, while the drop in clean‑task accuracy is less than 1 % (often under 0.5 %). The authors also perform ablation studies showing robustness to small estimation errors in the mean and variance of the projected clean distribution.

Key contributions:

- Formalization of the one‑poison hypothesis for non‑omniscient attackers.

- Proofs of zero backdoor error and limited benign‑task impact for linear classifiers, linear regressors, and 2‑layer ReLU networks.

- Identification of a simple sufficient condition (knowledge of projected mean and variance) for successful one‑sample backdoor injection.

- Empirical confirmation that the theoretical bounds hold on realistic data.

The work highlights a serious security risk: even a single malicious data point, easily obtainable from public repositories, can embed a powerful backdoor without noticeably degrading model performance. Future directions include extending the analysis to deeper architectures, exploring defenses such as data sanitization and robust training, and investigating the trade‑offs when the attacker’s knowledge of the data distribution is noisier.

Comments & Academic Discussion

Loading comments...

Leave a Comment