Seamlessly Natural: Image Stitching with Natural Appearance Preservation

This paper introduces SENA (SEamlessly NAtural), a geometry-driven image stitching approach that prioritizes structural fidelity in challenging real-world scenes characterized by parallax and depth variation. Conventional image stitching relies on hom…

Authors: Gaetane Lorna N. Tchana, Damaris Belle M. Fotso, Antonio Hendricks, Christophe Bobda

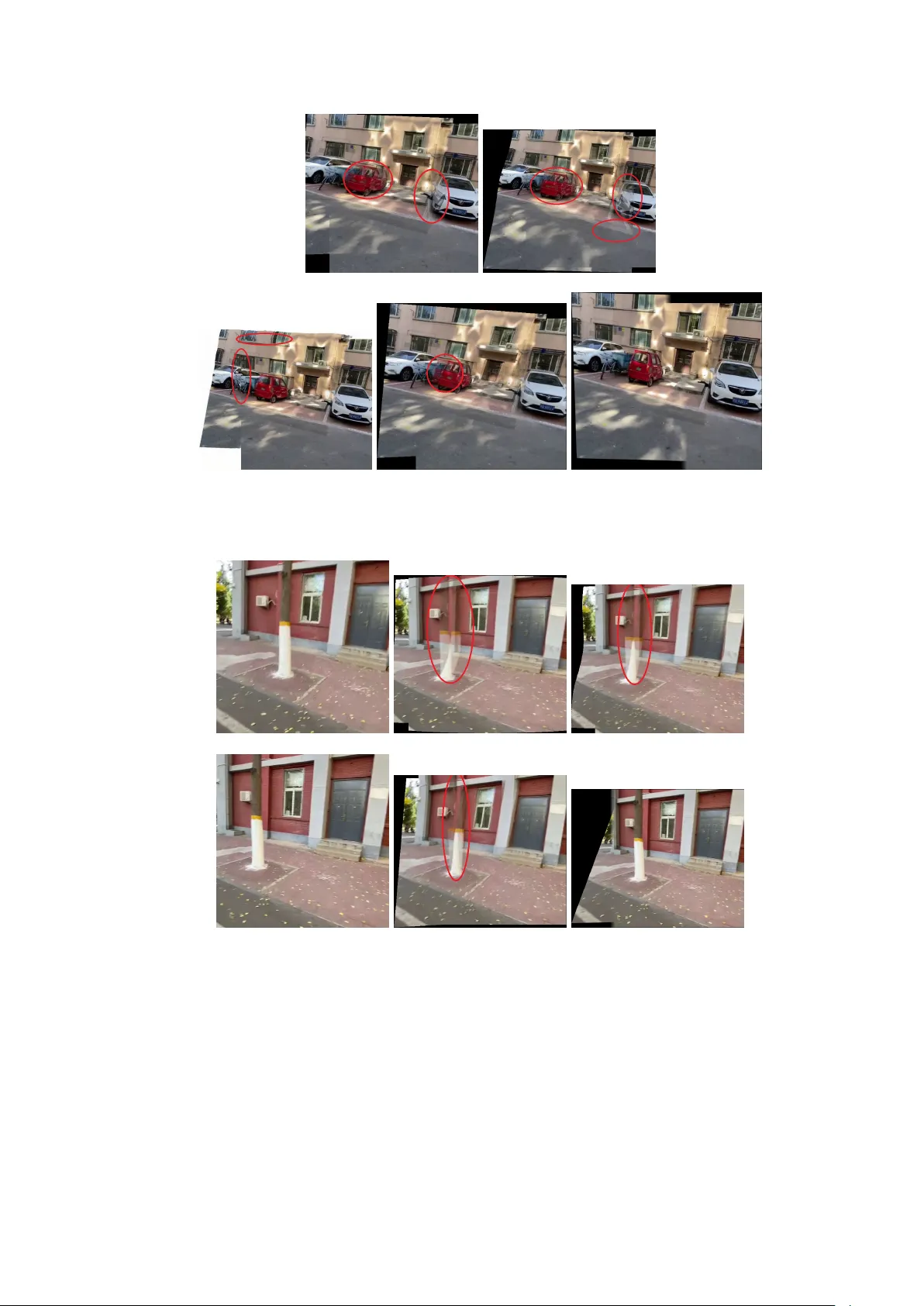

Gaetane Lorna N. Tchana 1, Damaris Belle M. Fotso 1, Antonio Hendricks 2, Christophe Bobda 2*

1 University of Yaounde I, Yaoundé, 812, Cameroon.
2 University of Florida, ECE, Gainesville, 32611, FL, US.

*Corresponding author(s). E-mail(s): cbobda@ece.ufl.edu;
Contributing authors: gaetane.tchana@facsciences-uy1.cm; makwate.fotso@facsciences-uy1.cm; a.hendricks1@ufl.edu;

Abstract

This paper introduces SENA (SEamlessly NAtural), a geometry-driven image stitching approach that prioritizes structural fidelity in challenging real-world scenes characterized by parallax and depth variation. Conventional image stitching relies on homographic alignment, but this rigid planar assumption often fails in dual-camera setups with significant scene depth, leading to distortions such as visible warps and spherical bulging. SENA addresses these fundamental limitations through three key contributions. First, we propose a hierarchical affine-based warping strategy, combining global affine initialization with local affine refinement and smooth free-form deformation. This design preserves local shape, parallelism, and aspect ratios, thereby avoiding the hallucinated structural distortions commonly introduced by homography-based models. Second, we introduce a geometry-driven adequate zone detection mechanism that identifies parallax-minimized regions directly from the disparity consistency of RANSAC-filtered feature correspondences, without relying on semantic segmentation. Third, building upon this adequate zone, we perform anchor-based seamline cutting and segmentation, enforcing a one-to-one geometric correspondence across image pairs by construction, which effectively eliminates ghosting, duplication, and smearing artifacts in the final panorama.
Extensive experiments conducted on challenging datasets demonstrate that SENA achieves alignment accuracy comparable to leading homography-based methods, while significantly outperforming them in critical visual metrics such as shape preservation, texture integrity, and overall visual realism.

Keywords: Image stitching, natural appearance preservation, affine warping, parallax-free area

1 Introduction

The rise of digital imaging technology has fundamentally transformed how individuals engage with digital content and their surrounding environments [1], directly enabling the development of advanced immersive experiences such as virtual and augmented reality. A critical requirement for these platforms is the ability to capture complex scenes from multiple viewpoints to provide users with continuous, seamless panoramic visuals. This is typically achieved by synthesizing and merging image data acquired simultaneously from two or more cameras in a process known as image stitching.

Most image stitching methods establish correspondences between images using salient visual features [2–6]. These correspondences define a geometric transformation that is used to warp images into a common coordinate frame. The final panorama is then obtained by merging overlapping regions, either directly or with additional mechanisms such as seam selection or blending to reduce visual artifacts. Contributions in the state of the art most often focus on the refinement or acceleration of one or more of these individual stages.

Fig. 1 Limitations of existing stitching methods. Panels: APAP, ELA, UDIS, UDIS++, SEAMLESS, OURS.

Geometric warping plays a central role in stitching quality, with homography remaining the dominant transformation model. Owing to its eight degrees of freedom, homography can accurately model full perspective effects and provides exact alignment for planar scenes or pure camera rotations.
However, real-world scenes are rarely planar and often contain significant depth variation. In such cases, homography attempts to reconcile non-planar motion using a planar model, leading to over-flexible geometric distortions such as stretching, bending of straight lines, and shape deformation.

A large body of work in image stitching focuses on improving geometric alignment through increasingly flexible warping models. Hybrid deformation models [7] estimate multiple homographies for different scene regions or depth layers to better preserve structural consistency. Other methods replace global homography with local spline-based registration, such as thin-plate spline (TPS) warps, to increase alignment flexibility [8, 9]. While these approaches often improve overlap alignment, their additional degrees of freedom frequently introduce excessive geometric deformation, leading to stretched textures, bent lines, and loss of structural realism. Even combinations of homographic and affine transformations [10] reduce but do not eliminate these artifacts, particularly in scenes with strong parallax. Figure 1 illustrates these limitations in representative state-of-the-art methods (e.g., APAP [11], ELA [12], UDIS [13], UDIS++ [8], and SEAMLESS [9]), where visual artifacts such as ripples, ghosting, and information loss are evident (highlighted in red). These artifacts primarily arise from the inherent tendency of homography-based models to compensate for depth-induced parallax through non-physical geometric distortions.

In parallel, many stitching methods address misalignment artifacts not by correcting geometry, but by carefully selecting where images are merged. Seam-based approaches define a stitching path through the overlapping region that minimizes visual discrepancy according to a predefined cost function.
Representative works [14–17] guide seam placement using cues such as intensity differences, gradients, semantic labels, or feature consistency. Although effective in visually hiding some misalignments, these methods often rely on heavy preprocessing pipelines, including semantic segmentation, feature classification, or depth inference, which increase computational cost and sensitivity to errors. Furthermore, seam optimization alone does not guarantee geometric consistency across the seam, leaving duplication or ghosting unresolved in many cases.

Overall, stitching methods still face three major challenges. First, global or overly flexible warps (e.g., homography-only or spline-based models) often introduce geometric distortions and compromise structural fidelity. Second, seam selection strategies based on cost-function minimization frequently depend on complex preprocessing steps such as semantic segmentation or feature classification, which increases sensitivity to inaccuracies. Third, even when alignment is locally accurate, blending across regions often lacks consistency, leading to duplication, stretching, or ghosting that reduce the visual realism of the stitched panorama.

This work addresses the above limitations through three contributions. (1) We introduce a hierarchical deformation strategy that combines global affine initialization (using RANSAC-filtered correspondences), local affine refinement within the overlap region, and a smooth free-form deformation (FFD) field regulated by seamguard adaptive smoothing. This multi-scale design preserves structural fidelity while avoiding the excessive distortions of global models and the instability of spline-based warps. (2) Within the same seam-selection paradigm, we propose an adequate zone detection strategy that departs from prior methods based on semantic segmentation.
Instead of such complex preprocessing, we analyze the disparity consistency of feature correspondences, providing a lightweight, model-free criterion that robustly identifies parallax-minimized regions. (3) We perform anchor-based segmentation aligned with the detected adequate zone, ensuring structural consistency across image pairs and enabling seamless stitching. Unlike prior approaches that stop at defining a seamline and then rely on blending to hide artifacts, our method partitions both images into corresponding vertical slices anchored by refined keypoints. This guarantees one-to-one geometric correspondence across segments, reducing duplication and ghosting in the final panorama.

2 Related work

Research in image stitching has explored a wide range of strategies, from feature-based methods to deep learning approaches, yet three major limitations persist across the state of the art.

• Geometric Distortion from Global Warps: The dominant homography model (Zheng et al. [18], Yadav et al. [19], Jia et al. [20]) is frequently used because its 8 degrees of freedom allow it to model full perspective effects. However, when applied to real-world scenes with parallax and depth variation, the homography is forced to reconcile conflicting motions, which often results in non-uniform distortions like spindle-shaped warps, unnatural stretching, or spherical bulging. Advanced hybrid warps, such as the "as-projective-as-possible" (APAP) warp [11] and elastic warping, improve flexibility but risk overfitting or over-flexibility, which can produce local stretching artifacts.
• Reliance on Complex Preprocessing: Many seamline optimization methods ([14], [15], [16]) formulate a cost function over the overlapping region and search for a seam that minimizes this cost, ideally passing through visually consistent areas.
However, these approaches often depend on complex preprocessing steps, such as semantic segmentation or depth estimation, to guide the cost map, increasing computational cost and sensitivity to errors and inaccuracies.
• Structural Assumptions and Computational Overhead:
  – Plane or Multi-Homography Models (Jia et al. [20], [7]) attempt to reduce single-homography distortion by fitting local projective models per plane or blending dual homographies. These methods, however, rely on strong assumptions about scene structure (e.g., two planes or projective-consistent regions) and can become brittle in complex or irregular depth geometries.
  – Structure-Preserving Warps successfully reduce distortions and preserve salient structures by incorporating complex optimization frameworks and constraints (e.g., collinearity constraints). These methods, however, are often computationally demanding and remain sensitive to poor feature distribution.
  – Learning-Based Approaches use deep learning for tasks like transformer-based warping and optical flow with inpainting (Nie et al. [8, 9, 13]). While they generalize well and show strong performance, they demand heavy training, rely on large datasets, and risk hallucinating content or propagating errors, reducing interpretability compared to geometric models.

Overall, prior methods still face three major challenges: (1) global or overly flexible warps compromise structural fidelity and introduce geometric distortions; (2) seam selection strategies based on cost-function minimization often rely on complex preprocessing steps (like semantic segmentation or feature classification), increasing sensitivity to inaccuracies that reduce the visual realism of the stitched panorama; and (3) dependence on strong scene assumptions (planarity, dual planes, or consistent surface normals).

3 Seamless and Structurally Consistent Image Stitching

Fig. 2 Flowchart of the proposed approach

To overcome the excessive distortion and misalignment common in image stitching, we propose a three-stage framework that emphasizes local adaptability, geometry-driven parallax handling, and structurally consistent reconstruction. The method transforms the source image into the domain of the target image while rigorously preserving geometric structure.

3.1 The Three Stage Framework

• Local Image Warping: We deliberately move beyond global homographies and spline-based deformations. The source image is coarsely aligned using a global affine transformation (estimated via RANSAC). This is followed by a refinement stage where the overlap is subdivided into local grids, and distinct affine models are fitted to local feature correspondences. This process generates a smooth Free-Form Deformation (FFD) field, which is blended and adaptively smoothed using a seamguard strategy that utilizes a ramp mask and match density weighting to prevent discontinuities at boundaries. This preserves overall structural fidelity while reducing geometric distortion.
• Adequate Parallax Minimized Zone Identification: In contrast to seam selection methods relying on semantic segmentation, we introduce a model-free strategy. This method analyzes disparity variations and geometric consistency among matched features to isolate a stable stitching zone that inherently minimizes parallax artifacts.
• Image Partitioning and Reconstruction: Within the identified stable zone, an ordered chain of refined keypoints defines the optimal stitching line. Both images are then partitioned into corresponding vertical slices anchored by these keypoints. This anchor-based segmentation enforces structural consistency between the two images by construction: every vertical slice in one image has a direct, aligned counterpart in the other.
By tying the seamline to these geometric anchors, our method eliminates the duplication, ghosting, and misalignment issues often associated with blending.

The overall flow of the proposed method is provided in Figure 2.

3.2 Locally adaptive image warping

Our approach generates a content-aware, seam-guarded deformation field to precisely align a source image ($I_s$) to a target image ($I_t$) using only sparse feature matches. The pipeline is structured in six sequential steps, strategically combining global alignment with local adaptivity. This methodology ensures both structural fidelity and robustness through confidence-weighted blending and specific gating mechanisms.

Step 1: Global Affine Estimation via RANSAC

We begin by detecting, extracting, and matching features using the XFeat algorithm [6], which is selected for its effective balance between computational efficiency and matching accuracy. From the resulting set of matched keypoints $\{(p^s_i, p^t_i)\}$, we compute a robust global affine transformation $A_{\text{glob}} \in \mathbb{R}^{3 \times 3}$ using the RANSAC algorithm. This initial step serves two critical functions: establishing a coarse alignment and filtering outliers. Only the inlier matches, as determined by RANSAC, are retained for all subsequent local refinement steps.

Step 2: Overlap Region and Local Grid Cell Generation

This step defines the area of alignment and prepares it for localized warping. Using the global affine transformation $A_{\text{glob}}$, the four corners of the source image ($I_s$) are projected into the coordinate space of the target image ($I_t$), creating a quadrilateral. This quadrilateral is then clipped to the bounds of $I_t$ using the Sutherland-Hodgman algorithm to precisely define the polygonal overlap region. A binary mask, $M^{\text{tgt}}_{\text{overlap}}$, is generated from this resulting polygon. Next, a uniform $G_x \times G_y$ grid is overlaid onto the bounding box of this overlap region.
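
The overlap construction of Step 2 can be illustrated with a minimal pure-Python sketch. It projects the source corners through a 3×3 affine matrix and clips the resulting quadrilateral to the target rectangle with Sutherland-Hodgman. The function names (`project_corners`, `clip_polygon_to_rect`, `polygon_area`) and the convention that the target image occupies $[0, \text{width}] \times [0, \text{height}]$ are assumptions made for illustration, not the authors' implementation.

```python
def project_corners(A, width, height):
    """Map the four corners of a width x height image through a 3x3 affine matrix A."""
    corners = [(0.0, 0.0), (width, 0.0), (width, height), (0.0, height)]
    return [(A[0][0] * x + A[0][1] * y + A[0][2],
             A[1][0] * x + A[1][1] * y + A[1][2]) for x, y in corners]


def _clip_half_plane(points, inside, intersect):
    """One Sutherland-Hodgman pass: keep the polygon part where inside() holds."""
    out = []
    for i, cur in enumerate(points):
        prev = points[i - 1]  # wraps to the last vertex when i == 0
        if inside(cur):
            if not inside(prev):
                out.append(intersect(prev, cur))  # edge enters the half-plane
            out.append(cur)
        elif inside(prev):
            out.append(intersect(prev, cur))      # edge leaves the half-plane
    return out


def _cross_x(x0):
    """Intersection of segment p-q with the vertical line x = x0."""
    def intersect(p, q):
        t = (x0 - p[0]) / (q[0] - p[0])
        return (x0, p[1] + t * (q[1] - p[1]))
    return intersect


def _cross_y(y0):
    """Intersection of segment p-q with the horizontal line y = y0."""
    def intersect(p, q):
        t = (y0 - p[1]) / (q[1] - p[1])
        return (p[0] + t * (q[0] - p[0]), y0)
    return intersect


def clip_polygon_to_rect(polygon, width, height):
    """Clip a polygon to the rectangle [0, width] x [0, height] (assumed target bounds)."""
    passes = [
        (lambda p: p[0] >= 0.0, _cross_x(0.0)),
        (lambda p: p[0] <= width, _cross_x(float(width))),
        (lambda p: p[1] >= 0.0, _cross_y(0.0)),
        (lambda p: p[1] <= height, _cross_y(float(height))),
    ]
    for inside, intersect in passes:
        if not polygon:
            break
        polygon = _clip_half_plane(polygon, inside, intersect)
    return polygon


def polygon_area(polygon):
    """Shoelace formula; returns 0.0 for an empty polygon."""
    total = 0.0
    for i, (x1, y1) in enumerate(polygon):
        x0, y0 = polygon[i - 1]
        total += x0 * y1 - x1 * y0
    return abs(total) / 2.0
```

For a pure translation by (60, 10) between two 100×80 images, the clipped overlap is the rectangle with corners (60, 10) and (100, 80), whose area is 2800 pixels. Rasterizing this polygon would then give the binary overlap mask.
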
For each resulting grid cell ($c_j$), the following parameters are computed:

• A binary mask $M_{c_j}$, derived from the intersection of the grid cell with $M^{\text{tgt}}_{\text{overlap}}$.
• A centroid $c_j = (c^x_j, c^y_j)$, calculated using image moments.
• A bounding box $(x^{(j)}_0, x^{(j)}_1, y^{(j)}_0, y^{(j)}_1)$, utilized for subsequent spatial weighting and diagnostics.

Step 3: Local Affine Refinement with Adaptive Transformation Selection & Confidence Scoring

For each grid cell $c_j$, a local affine transformation $T_j$ is fitted using the feature matches that fall within the cell and its immediate neighbors. This fitting employs ridge regression with a regularization weight $\lambda_1$, which biases the local solution toward the initial global transformation $A_{\text{glob}}$ to ensure stability.

Spatial Confidence Metric

Instead of relying on statistical residuals or covariance, a novel confidence score is defined for each local affine transformation based on the density and spatial distribution of its supporting feature points. The Gaussian weight $w^{(j)}_i$ of a feature point $i$ relative to the cell center ($c_j$) is computed as:

$$w^{(j)}_i = \exp\left(-\frac{\|p^t_i - c_j\|^2}{2\sigma_j^2}\right)$$

Here, the Gaussian standard deviation is defined by the scaling factor $\alpha$ applied to the diagonal length of the cell's bounding box $\text{diag}(c_j)$, that is, $\sigma_j = \alpha \cdot \text{diag}(c_j)$. The overall confidence score is then computed from this weighted density:

$$\text{conf}_j = \max\left(\kappa_{\min},\ \min\left(\kappa_{\max},\ \frac{\sum_i w^{(j)}_i}{\beta \cdot \max_i w^{(j)}_i}\right)\right) \tag{1}$$

where $\kappa_{\min}$, $\kappa_{\max}$, $\beta$ are clamping and normalization constants. This score reflects the "weight mass" of matches in the cell, a heuristic robust to sparsity and uneven distributions.

Adaptive Transformation Selection via Composite Diagnostic Score: The system employs an adaptive strategy to maintain geometric stability.
If the initial local affine transformation $T_j$ produces unstable geometry (diagnosed using metrics such as the Root Mean Square Error (RMSE), the determinant, the condition number, or the displacement from the global affine $A_{\text{glob}}$), the transformation is refitted using a stronger regularization parameter $\lambda_2 > \lambda_1$. The version with the lower composite instability score is then selected:

$$\text{score}(T) = \text{RMSE} + \omega_{\text{cond}} \cdot \text{cond} + \omega_{\det} \cdot \max(0,\ \tau_{\det} - |\det|) + \omega_{\delta} \cdot \delta_{\text{mean}} \tag{2}$$

where $\omega_{\text{cond}}$, $\omega_{\det}$, $\omega_{\delta}$, $\tau_{\det}$ are weighting and threshold parameters, and $\delta_{\text{mean}}$ is the mean displacement between the outputs of the local affine transformation and the global affine transformation, computed over an $N_g \times N_g$ evaluation grid uniformly sampled within the cell's bounding box. This embedded selection mechanism ensures geometric stability without manual intervention.

Step 4: Free-Form Deformation Field via Confidence-Weighted Local Transformation Blending

We construct a deformation field on an $N_y \times N_x$ lattice over the output canvas. For each lattice point $\mathbf{u} = (u, v)$, we compute its corresponding coordinate in target space $p^t = (u - o_x, v - o_y)$, and its base source coordinate via the global affine: $p_{\text{base}} = \Pi(A^{-1}_{\text{glob}} \cdot [p^t, 1]^T)$, where $\Pi([x, y, z]^T) = (x/z, y/z)$ is perspective division. For each local transformation $T_j$, we compute its mapped source coordinate $p^{(j)}_{\text{local}} = \Pi(T^{-1}_j \cdot [p^t, 1]^T)$, and the displacement $\Delta p^{(j)} = p^{(j)}_{\text{local}} - p_{\text{base}}$.

Confidence-Weighted Spatial Blending

The final displacement $\Delta p$ is a normalized blend of all local transformation displacements, weighted by both spatial proximity and transformation confidence:

$$\Delta p = \sum_{j=1}^{J} w_j(p^t) \cdot \Delta p^{(j)}, \quad \text{where} \quad w_j(p^t) = \frac{\text{conf}_j \cdot \exp\left(-\frac{\|p^t - c_j\|^2}{2\sigma_f^2}\right)}{\sum_{k=1}^{J} \text{conf}_k \cdot \exp\left(-\frac{\|p^t - c_k\|^2}{2\sigma_f^2}\right)} \tag{3}$$

where $\sigma_f = \alpha_f \cdot \text{mean(cell diagonals)}$, and $\alpha_f$ is a spatial decay factor.
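
As a rough illustration of Equations 1 and 3, the following pure-Python sketch computes a cell confidence from Gaussian-weighted matches and then blends per-cell displacements at a single lattice point. The default values for `beta`, `k_min`, and `k_max`, the handling of empty cells, and the function names are assumptions chosen for the example; the paper does not state them.

```python
import math


def gaussian(p, c, sigma):
    """Isotropic Gaussian weight of point p relative to center c."""
    d2 = (p[0] - c[0]) ** 2 + (p[1] - c[1]) ** 2
    return math.exp(-d2 / (2.0 * sigma ** 2))


def cell_confidence(points, centroid, sigma, beta=4.0, k_min=0.1, k_max=1.0):
    """Equation 1: clamped ratio of total weight to beta times the largest weight.

    beta, k_min and k_max are illustrative values, not taken from the paper.
    """
    weights = [gaussian(p, centroid, sigma) for p in points]
    if not weights or max(weights) == 0.0:
        return k_min  # assumption: unsupported cells get the minimum confidence
    ratio = sum(weights) / (beta * max(weights))
    return max(k_min, min(k_max, ratio))


def blend_displacements(p_t, centroids, confs, displacements, sigma_f):
    """Equation 3: confidence- and proximity-weighted average of local displacements."""
    raw = [conf * gaussian(p_t, c, sigma_f) for conf, c in zip(confs, centroids)]
    total = sum(raw)
    if total == 0.0:
        return (0.0, 0.0)  # assumption: fall back to the global affine alone
    dx = sum(r / total * d[0] for r, d in zip(raw, displacements))
    dy = sum(r / total * d[1] for r, d in zip(raw, displacements))
    return (dx, dy)
```

A lattice point midway between two equally confident cells receives the average of their displacements, while a cell with few or poorly distributed matches contributes less because its confidence is lower.
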
Displacements are clipped to $[-d_{\max}, d_{\max}]$ and lightly smoothed with a Gaussian blur ($\sigma_l$) before upsampling to full canvas resolution via bicubic interpolation.

Step 5: Seam Guarding via Dual-Channel Gating

The final step in suppressing artifacts near boundaries or in sparse regions is the modulation of the full-resolution deformation field $\Delta p$ using a multiplicative gate ($G$) derived from two primary signals:

• Geometric Ramp ($R_{\text{canvas}}$): A smootherstep function applied to the signed distance field of the overlap region, with bandwidth proportional to the image diagonal ($b = \rho \cdot \text{diag}_{\text{img}}$).
• Match Density Map ($D_{\text{canvas}}$): A Gaussian-blurred heatmap of inlier keypoint locations, normalized to $[0, 1]$, with kernel standard deviation ($\sigma_d$).

Dual-Gated Seam Suppression

The final gating mask combines both signals multiplicatively:

$$G(\mathbf{u}) = \underbrace{\left[S(R_{\text{canvas}}(\mathbf{u}))\right]^{\gamma_p}}_{\text{geometric falloff}} \cdot \underbrace{\left(\gamma_{\min} + (1 - \gamma_{\min}) \cdot S(D_{\text{canvas}}(\mathbf{u}))\right)}_{\text{density-aware modulation}} \tag{4}$$

where $S(t) = 6t^5 - 15t^4 + 10t^3$ is the smootherstep function, $\gamma_p$ controls ramp steepness, and $\gamma_{\min}$ is the minimum gate value. The guarded deformation field is then smoothed:

$$\Delta p_{\text{guarded}}(\mathbf{u}) = \text{GaussianBlur}_{\sigma_g}\left(\Delta p(\mathbf{u}) \cdot G(\mathbf{u})\right)$$

This is not post-warp blending; rather, it is a mechanism for pre-warp suppression of unreliable deformations. The final displacement field $\Delta p$ is modulated by the gate $G$:

$$\Delta p_{\text{gated}} = G \odot \Delta p$$

This multiplicative gating ensures that the full-resolution displacement is only applied in geometrically stable and feature-supported areas. The combination of geometric and photometric (match-density based) gating for Free-Form Deformation (FFD) fields is, to our knowledge, unprecedented in the image stitching literature.

Step 6: Final Warping and Output

The final source-to-canvas mapping is computed by combining the inverse global affine transformation with the gated deformation field.
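
The dual-channel gate of Equation 4 (Step 5) can be sketched for a single pixel as below. This is a minimal illustration under stated assumptions: the ramp and density inputs are taken to be already normalized to $[0, 1]$, the clamping inside `smootherstep` is added for safety, and the default values of `gamma_p` and `gamma_min` are placeholders rather than the authors' settings.

```python
def smootherstep(t):
    """S(t) = 6t^5 - 15t^4 + 10t^3, with t clamped to [0, 1] (clamping is an assumption)."""
    t = min(1.0, max(0.0, t))
    return 6.0 * t ** 5 - 15.0 * t ** 4 + 10.0 * t ** 3


def gate(ramp, density, gamma_p=1.0, gamma_min=0.2):
    """Equation 4: geometric falloff times density-aware modulation.

    ramp:    normalized distance into the overlap region, in [0, 1]
    density: normalized inlier-match density, in [0, 1]
    """
    geometric = smootherstep(ramp) ** gamma_p
    density_term = gamma_min + (1.0 - gamma_min) * smootherstep(density)
    return geometric * density_term


def gate_displacement(displacement, ramp, density, gamma_p=1.0, gamma_min=0.2):
    """Scale a (dx, dy) displacement by the gate, as in the element-wise product G * dp."""
    g = gate(ramp, density, gamma_p, gamma_min)
    return (g * displacement[0], g * displacement[1])
```

With these definitions the displacement vanishes at the overlap boundary (`ramp = 0`) regardless of match density, and deep inside the overlap it is attenuated to `gamma_min` of its value where no inlier matches support it.
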
The source image $I_s$ is then warped into the final canvas space using bilinear interpolation. Concurrently, the target image $I_t$ is pasted onto the output canvas using the pre-computed offset $(o_x, o_y)$.

For evaluation purposes, the final transformed coordinates of the original inlier matches are computed. This involves a simple translation to canvas coordinates for target points and applying the full warp (global affine + FFD + multiplicative gate) with bilinear interpolation of the displacement field for source points.

These collective innovations enable our method to produce seamless, artifact-free alignments even when operating with sparse, uneven, or noisy feature matches.

3.3 Optimal stitching line

3.3.1 Determination of an Adequate Parallax-Free Zone

Identifying an adequate stitching area relies on finding a region containing a high density of reliable feature correspondences governed by a dominant geometric transformation. Initially, regions with low information content, which are quantifiable by low local image variance, are systematically excluded as they produce unstable matches. However, scenes with significant depth often exhibit motion parallax, resulting in a multi-modal distribution of disparity vectors that complicates the search for a consistent seam.

To robustly handle this parallax, our method focuses on isolating the most extensive and stable surface. This is achieved by identifying the dominant motion group, which corresponds to the true inliers for a stable stitch. Specifically, the algorithm locates regions where keypoint disparities are statistically consistent (exhibiting low local variance) and where their local mean converges to the global mean $\mu$ of the primary motion mode.

Given a set of matched keypoint pairs $((x_S, y_S), (x_T, y_T))$, where $x_S$ and $y_S$ denote the coordinates in the source image and $x_T$ and $y_T$ denote the coordinates in the target image, respectively, keypoints are initially sorted using two primary spatial criteria to account for the camera geometry.

• First, $x_S > x_T$, reflecting the relative frontal position of the camera of the source image.
• Second, $(y_S > y_T) \lor (y_T > y_S)$, for one camera physically positioned above the other in most cases.

The matched keypoints are partitioned into classes $C_i$ based on their abscissa values (x-coordinates). Each class corresponds to a spatial range, $R$, which is defined as $R = \text{width}/20$. For every keypoint within a class, we compute its disparity as $(x_S - x_T)$ and subsequently calculate the mean disparity for that class. We then iterate through the resulting list of mean disparities (one value per class) and group the corresponding classes into clusters based on the statistical consistency of these mean disparity values. This clustering process isolates the most dominant motion groups, enabling the identification of the parallax-minimized "adequate zone."

Algorithm 1 Threshold-Based Disparity Clustering

Require: D: a list of mean disparities d_1, d_2, ..., d_n, where d_i corresponds to the mean disparity of class i.
Require: v: a positive, user-defined threshold value for disparity difference.
Ensure: C: a set of clusters, where each cluster is a list of consecutive classes from D.

    Initialize an empty list of clusters C
    Initialize the current cluster C_current with the first class d_1
    for i = 2 to n do
        if |d_i − d_{i−1}| ≤ v then
            Add class i to C_current
        else
            if C_current contains at least two classes then
                Add C_current to the list of clusters C
            end if
            Start a new cluster with class i: C_current ← {d_i}
        end if
    end for
    if C_current contains at least two classes then
        Add C_current to the list of clusters C
    end if
    return C

The optimal stitching cluster is selected based on a scoring metric that evaluates the consistency and reliability of each cluster against three key parameters:

• Standard Deviation ($\sigma_k$): This measures the internal coherence of the cluster. A smaller standard deviation indicates that the individual class mean disparities are tightly grouped around the cluster mean, suggesting a more consistent depth plane.
• Cardinality ($C_k$): This is the total number of keypoints contained within all classes of the cluster. High cardinality indicates that the cluster is supported by a large amount of data, which directly increases the reliability and robustness of its mean disparity calculation.
• Disparity Deviation ($\Delta\mu_k$): This is defined as the absolute difference between the mean disparity of the cluster ($\mu_{c,k}$) and the global mean disparity of all classes ($\mu_g$).

To prevent the selection of clusters with low reliability (low cardinality), high incoherence (high standard deviation), or a large deviation from the global mean disparity, we define a weighted score, $w_k$, for each cluster $k$ given by Equation 5.

$$w = \frac{C}{(\sigma + \lambda \cdot \Delta\mu) + \epsilon} \tag{5}$$

where:

• $\sigma$: standard deviation of the disparities within the cluster,
• $C$: cardinality (number of keypoints in the cluster),
• $\lambda$: weighting factor controlling the influence of the disparity deviation,
• $\Delta\mu = |\mu_c - \mu_g|$: absolute difference between the mean disparity of the cluster $\mu_c$ and the global mean disparity $\mu_g$,
• $\epsilon$: a small constant added to avoid division by zero.

The cluster with the highest calculated score, $w_{\max}$, is selected as the optimal cluster. The "adequate zone" is then defined as the combination of all the classes within this selected cluster.

3.3.2 Keypoint Chain Refinement

Once an adequate overlapping area is identified, the initial set of keypoint correspondences cannot be used directly, as this raw data is inherently unreliable and contains significant outliers or misaligned matches. Using these erroneous points to guide partitioning would create inconsistent divisions between the two images, leading directly to visible stitching artifacts such as ghosting and duplications. Therefore, refining this initial set of keypoints is a critical prerequisite.

A dedicated algorithm is employed to generate two clean, synchronized, and ordered lists of keypoints, forming a coherent "keypoint chain". For brightness consistency, a selection step is performed, determining each keypoint's intensity and retaining only those with similar levels. An initial anchor is established at the keypoint closest to the image edge. From there, the algorithm iteratively matches each point in the first image with its closest corresponding point in the second based on Euclidean distance. A crucial constraint is applied during this process: each match must be unique in its horizontal (x) position. This filtering step prunes the ambiguous or conflicting matches that cause structural inconsistencies, yielding a refined set of high-confidence pairs.
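
For the adequate-zone selection of Section 3.3.1, Algorithm 1 and the score of Equation 5 can be sketched as follows. Several choices here are assumptions rather than details given in the paper: clusters store class indices, $\sigma$ is the population standard deviation of the class mean disparities, the cluster and global means are unweighted means of class means, and the defaults for `lam` and `eps` are placeholders.

```python
from statistics import mean, pstdev


def cluster_classes(mean_disparities, v):
    """Algorithm 1: group consecutive classes whose mean disparities differ by at most v.

    Returns a list of clusters, each a list of class indices; singletons are dropped.
    """
    clusters = []
    if not mean_disparities:
        return clusters
    current = [0]
    for i in range(1, len(mean_disparities)):
        if abs(mean_disparities[i] - mean_disparities[i - 1]) <= v:
            current.append(i)
        else:
            if len(current) >= 2:
                clusters.append(current)
            current = [i]
    if len(current) >= 2:
        clusters.append(current)
    return clusters


def cluster_score(cluster, mean_disparities, counts, global_mean, lam=1.0, eps=1e-6):
    """Equation 5: w = C / (sigma + lam * delta_mu + eps)."""
    values = [mean_disparities[i] for i in cluster]
    sigma = pstdev(values)                        # assumption: population std of class means
    cardinality = sum(counts[i] for i in cluster) # keypoints supporting the cluster
    delta_mu = abs(mean(values) - global_mean)
    return cardinality / (sigma + lam * delta_mu + eps)


def select_adequate_zone(mean_disparities, counts, v, lam=1.0, eps=1e-6):
    """Return the class indices of the highest-scoring cluster, or None if there is none."""
    clusters = cluster_classes(mean_disparities, v)
    if not clusters:
        return None
    global_mean = mean(mean_disparities)
    return max(clusters, key=lambda c: cluster_score(
        c, mean_disparities, counts, global_mean, lam, eps))
```

With class means `[10, 11, 30, 31, 32, 50]`, keypoint counts `[5, 5, 20, 20, 20, 3]`, and `v = 2`, the clusters are classes 0 to 1 and classes 2 to 4; the second is selected because it has more keypoints and lies closer to the global mean.
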
The optimal stitching line in each image is defined by the path connecting the first element to the last element of the refined set in the corresponding pair.

3.4 Partitioning and Reconstruction

3.4.1 Image Partitioning

The image partitioning process begins with the optimal stitching line, which is defined by an ordered set of $n$ reliable keypoint pairs (see Figure 3). These points function as anchors for segmenting each image into $S = n + 1$ vertical slices.

To ensure the resulting vertical slices are structurally complementary (meaning they maintain consistent relative order and direction between the two images), a directional validation is performed on each consecutive pair of anchors $(A, B)$ and their corresponding pair $(A', B')$ from the second image:

• Valid Segments (Consistent Direction):
  – If $x_A > x_B$ and $x'_A > x'_B$: the segments are valid, as both pairs move from right to left (see Figure 4).
  – If $x_A < x_B$ and $x'_A < x'_B$: the segments are valid, as both pairs move from left to right (see Figure 5).
• Invalid Segments (Inconsistent Direction): If the direction is inconsistent between the two images (e.g., $x_A > x_B$ and $x'_A < x'_B$, or vice versa), the segments are rejected as they are not complementary (see Figure 6). This prevents structural inconsistencies from being introduced during the final reconstruction.

Fig. 3 Optimal stitching line. Panels: source image, target image.

Fig. 4 Complementary segments ($x_A > x_B$ and $x'_A > x'_B$)

Fig. 5 Complementary segments ($x_A < x_B$ and $x'_A < x'_B$)

Fig. 6 Invalid segment ($x_A > x_B$ and $x'_A < x'_B$)

3.4.2 Reconstruction

After the segments have been validated, the process moves to the concatenation phase. Because both input images share the identical partitioning pattern, each segment of the first image corresponds directly to a segment of the second image.
Corresponding segments are merged using a simple linear alpha transition: the contribution of the left segment decreases linearly from left to right, while the contribution of the right segment increases symmetrically. A final, light Gaussian smoothing is then applied across the seam area to suppress any residual visible artifacts. The resulting blended composites are concatenated horizontally, and the constructed rows are subsequently stacked vertically to form the final stitched image. Figure 7 summarizes all the described processes.

Fig. 7 We warp the source image $I_s$ into the domain of the target $I_t$ using a locally adaptive affine warping strategy ($W_{\text{aff}} \circ I_s$). A parallax-minimized zone $Z_{\text{pfz}}$ is then identified in the overlapping area, from which the optimal stitching line is extracted. Based on this line, both images are partitioned into corresponding vertical slices via operator $P$. Finally, slices are concatenated horizontally and vertically ($C_{v,h}$) to produce the final stitched image $I_{\text{stitch}}$.

4 Experimentation and results

The experiments were conducted within a Google Colaboratory environment, leveraging 12.67 GB of RAM and 107.72 GB of storage. We utilized publicly available and widely recognized datasets provided by Nie et al. [13], Du et al. [21], and Hermann et al. [22]. The code is available on GitHub.

4.1 Quantitative evaluation

Table 1 summarizes quantitative results for recent algorithms on the UDIS-D dataset (1,106 images grouped by difficulty: easy, moderate, and hard). Alignment accuracy in the overlap region between stitched images is evaluated using Peak Signal-to-Noise Ratio (PSNR) and the Structural Similarity Index Measure (SSIM).
| Method | PSNR Easy | PSNR Mod | PSNR Hard | PSNR Avg | SSIM Easy | SSIM Mod | SSIM Hard | SSIM Avg |
|---|---|---|---|---|---|---|---|---|
| $I_{3\times3}$ | 15.87 | 12.76 | 10.68 | 12.86 | 0.530 | 0.286 | 0.146 | 0.303 |
| SIFT+RANSAC [23] | 28.75 | 24.08 | 18.55 | 23.27 | 0.916 | 0.833 | 0.636 | 0.779 |
| APAP [11] | 27.96 | 24.39 | 20.21 | 23.79 | 0.901 | 0.837 | 0.682 | 0.794 |
| ELA [12] | 29.36 | 25.10 | 19.19 | 24.01 | 0.917 | 0.855 | 0.691 | 0.808 |
| SPW [24] | 26.98 | 22.67 | 16.77 | 21.60 | 0.880 | 0.758 | 0.490 | 0.687 |
| LPC [20] | 26.94 | 22.63 | 19.31 | 22.59 | 0.878 | 0.764 | 0.610 | 0.736 |
| UDIS [13] | 25.16 | 20.96 | 18.36 | 21.17 | 0.834 | 0.669 | 0.495 | 0.648 |
| UDIS++ [8] | 30.19 | 25.84 | 21.57 | 25.43 | 0.933 | 0.875 | 0.739 | 0.838 |
| OURS | 24.92 | 26.00 | 27.89 | 26.27 | 0.823 | 0.839 | 0.882 | 0.848 |

Table 1 Quantitative results with PSNR and SSIM.

As shown in Table 1, our method achieves the highest average PSNR (26.27) and SSIM (0.848) among all compared approaches. The gain is concentrated on the hard subset, where it reaches 27.89 PSNR and 0.882 SSIM against 21.57 and 0.739 for UDIS++ [8]; it also obtains the highest PSNR on the moderate subset. On the easy subset, however, every baseline except $I_{3\times3}$ scores higher on both metrics, and UDIS++ [8] and ELA [12] obtain a higher SSIM on the moderate subset. These results indicate that our geometry-driven strategy improves structural preservation and visual fidelity mainly on the more difficult image pairs.

4.2 Qualitative evaluation

We compare our approach against several state-of-the-art methods, including APAP [11], ELA [12], UDIS [13], UDIS++ [8] and SEAMLESS [9]. Please zoom in on the images to better observe the highlighted elements. Additional data is given in the online resource, and comprehensive visual comparisons are provided on Google Drive.

Fig. 8 (panels: APAP, ELA, UDIS, UDIS++, SEAMLESS, OURS) APAP [11], ELA [12], and UDIS++ [8] exhibit pronounced ghosting artifacts, while UDIS [13] produces noticeable blurring and SEAMLESS [9] introduces stretching in certain regions. Moreover, most of these approaches display a generally blurred texture across the stitched image. In contrast, our method eliminates duplication and preserves the original sharp texture of the input images.

Fig. 9 (panels: APAP [11], ELA [12], UDIS [13], UDIS++ [8], SEAMLESS [9], OURS) We observe ghosting artifacts in APAP, ELA, UDIS, and UDIS++, and a blurred texture in SEAMLESS. Our result is free of these artifacts.
Fig. 10 (panels: APAP, ELA, UDIS, UDIS++, SEAMLESS, OURS) Ghosting artifacts and blurring are observed in the state-of-the-art methods. Our stitched result is seamlessly natural.

Fig. 11 (panels: APAP, EPISNET, SEAMLESS, OURS) Similar artifacts as in Fig. 9 for the state of the art.

Fig. 12 (panels: ELA, APAP, EPISNET, UDIS, OURS) Similar artifacts as in Fig. 9 for the state of the art.

Fig. 13 (panels: SOURCE, ELA, APAP, TARGET, UDIS, OURS) Similar artifacts as in Fig. 9 for the state of the art.

Fig. 14 (panels: SOURCE, ELA, APAP, TARGET, UDIS, OURS) Similar artifacts as in Fig. 9 for the state of the art.

Fig. 15 (panels: APAP, LPC, SPW, OURS) Misalignments (tiles in the floor) in APAP, LPC, and SPW, and correct alignment in SENA.

Fig. 16 (panels: UDIS++, SEAMLESS, OURS) UDIS++ [8] and SEAMLESS [9] render the writing illegible because of blurred texture, and also show misalignments. In SENA, the writing is as clear as in the input images and a single slight misalignment is observed.

Qualitative results show that state-of-the-art methods often produce noticeable artifacts, including duplication, texture loss, and stretching of image structures. In contrast, SENA generates sharper, higher-resolution stitched images while preserving structural integrity and visual realism.

4.3 Ablation studies

We evaluate here the effectiveness of our three main components (the locally adaptive image warping, the adequate parallax-free zone identification, and the segment-based reconstruction) in improving alignment and removing artifacts.

Fig. 17 Without our warping strategy. This leads to alignment errors and geometric distortions.

Fig. 18 With our locally adaptive image warping.

Fig. 19 Without the identification of a parallax-free zone, the stitching might be performed in an unstable region, leading to visual artifacts in the result.

Fig. 20 When a parallax-free zone is identified, the stitching is performed in a more stable region with consistent keypoints, resulting in improved alignment.

Fig. 21 Without blending.

Fig. 22 With our blending (linear alpha transition + light Gaussian smoothing).

4.4 Limitations

As demonstrated earlier, SENA effectively stitches images while preserving their natural appearance and geometric structure. However, certain limitations remain. The alignment between images is achieved through a multi-stage local deformation process: a global affine transformation estimated via RANSAC provides coarse alignment, which is then refined within the overlap region using locally adaptive affine models interpolated into a smooth Free-Form Deformation (FFD) field. This design allows accurate alignment while mitigating excessive distortion and maintaining shape and texture consistency. Nevertheless, in scenes with complex depth variations, the estimation of local models may become unstable, leading to locally inconsistent deformations or slight geometric discontinuities. Furthermore, the quality of the detected keypoints directly influences the identification of the adequate parallax-minimized zone and the subsequent stitching line extraction. When feature detection or matching is unreliable, artifacts such as minor ghosting, blurring, or local misalignment may still appear in the final panorama.

Fig. 23 Some failure cases.

5 Conclusion

This work introduced SENA, a structure-preserving image stitching framework explicitly designed to address the geometric limitations of homography-based and over-flexible warping models. Rather than compensating for depth-induced parallax through non-physical distortions, SENA adopts a hierarchical affine deformation strategy, combining global affine initialization, local affine refinement within the overlap region, and a smoothly regularized free-form deformation field. This multi-scale design preserves local shape, parallelism, and aspect ratios while providing sufficient flexibility to ensure accurate alignment.
To further mitigate parallax-related artifacts, SENA incorporates a geometry-driven stitching mechanism that identifies a parallax-minimized adequate zone directly from the consistency of RANSAC-filtered correspondences, avoiding reliance on semantic segmentation or learning-based preprocessing. Based on this zone, an anchor-based segmentation strategy is applied, partitioning the overlapping images into corresponding vertical slices aligned by refined keypoints. This guarantees one-to-one geometric correspondence across segments and enforces structural continuity across the stitching boundary, effectively reducing ghosting, duplication, and texture stretching.

Extensive experiments demonstrate that SENA consistently achieves visually coherent panoramas with improved structural fidelity and reduced distortion compared to representative homography-based and spline-based methods. While the method remains robust across a wide range of scenes, performance may degrade under extreme viewpoint changes or in severely texture-deprived environments, where feature extraction becomes unreliable. Future work will explore adaptive local deformation models and hybrid learning-based components to further enhance robustness under such challenging conditions.

References

[1] Nghonda, E.: Enable 360-Degree Immersion for Outside-In Camera Systems for Live Events. University of Florida, ??? (2023)

[2] Lowe, D.G.: Distinctive image features from scale-invariant keypoints. International Journal of Computer Vision 60, 91–110 (2004)

[3] Bay, H., Tuytelaars, T., Van Gool, L.: SURF: Speeded up robust features. In: Computer Vision–ECCV 2006: 9th European Conference on Computer Vision, Graz, Austria, May 7-13, 2006. Proceedings, Part I 9, pp. 404–417 (2006). Springer

[4] Ji, X., Yang, H., Han, C.: Research on image stitching method based on improved ORB and stitching line calculation. Journal of Electronic Imaging 31(5), 051404–051404 (2022)

[5] Liu, W., Zhang, K., Zhang, Y., He, J., Sun, B.: Utilization of merge-sorting method to improve stitching efficiency in multi-scene image stitching. Applied Sciences 13(5), 2791 (2023)

[6] Potje, G., Cadar, F., Araujo, A., Martins, R., Nascimento, E.R.: XFeat: Accelerated features for lightweight image matching. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 2682–2691 (2024)

[7] Wen, S., Wang, X., Zhang, W., Wang, G., Huang, M., Yu, B.: Structure preservation and seam optimization for parallax-tolerant image stitching. IEEE Access 10, 78713–78725 (2022)

[8] Nie, L., Lin, C., Liao, K., Liu, S., Zhao, Y.: Parallax-tolerant unsupervised deep image stitching. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 7399–7408 (2023)

[9] Chen, K., Garg, A., Wang, Y.-S.: Seamless-through-breaking: Rethinking image stitching for optimal alignment. In: Proceedings of the Asian Conference on Computer Vision, pp. 4352–4367 (2024)

[10] Li, X., He, L., He, X.: Combined regional homography-affine warp for image stitching. In: Fourteenth International Conference on Graphics and Image Processing (ICGIP 2022), vol. 12705, pp. 258–264 (2023). SPIE

[11] Zaragoza, J., Chin, T.-J., Brown, M.S., Suter, D.: As-projective-as-possible image stitching with moving DLT. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 2339–2346 (2013)

[12] Li, J., Wang, Z., Lai, S., Zhai, Y., Zhang, M.: Parallax-tolerant image stitching based on robust elastic warping. IEEE Transactions on Multimedia 20(7), 1672–1687 (2017)

[13] Nie, L., Lin, C., Liao, K., Liu, S., Zhao, Y.: Unsupervised deep image stitching: Reconstructing stitched features to images. IEEE Transactions on Image Processing 30, 6184–6197 (2021)

[14] Huang, H., Chen, F., Cheng, H., Li, L., Wang, M.: Semantic segmentation guided feature point classification and seam fusion for image stitching. Journal of Algorithms & Computational Technology 15, 17483026211065399 (2021)

[15] Qin, Y., Li, J., Jiang, P., Jiang, F.: Image stitching by feature positioning and seam elimination. Multimedia Tools and Applications 80(14), 20869–20881 (2021)

[16] Chai, X., Chen, J., Mao, Z., Zhu, Q.: An upscaling–downscaling optimal seamline detection algorithm for very large remote sensing image mosaicking. Remote Sensing 15(1), 89 (2022)

[17] Garg, A., Dung, L.-R.: Stitching strip determination for optimal seamline search. In: 2020 4th International Conference on Imaging, Signal Processing and Communications (ICISPC), pp. 29–33 (2020). IEEE

[18] Zheng, J., Wang, Y., Wang, H., Li, B., Hu, H.-M.: A novel projective-consistent plane based image stitching method. IEEE Transactions on Multimedia 21(10), 2561–2575 (2019)

[19] Yadav, S., Choudhary, P., Goel, S., Parameswaran, S., Bajpai, P., Kim, J.: Selfie stitch: Dual homography based image stitching for wide-angle selfie. In: 2018 IEEE International Conference on Multimedia & Expo Workshops (ICMEW), pp. 1–4 (2018). IEEE

[20] Jia, Q., Li, Z., Fan, X., Zhao, H., Teng, S., Ye, X., Latecki, L.J.: Leveraging line-point consistence to preserve structures for wide parallax image stitching. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 12186–12195 (2021)

[21] Du, P., Ning, J., Cui, J., Huang, S., Wang, X., Wang, J.: Geometric structure preserving warp for natural image stitching. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 3688–3696 (2022)

[22] Herrmann, C., Wang, C., Bowen, R.S., Keyder, E., Zabih, R.: Object-centered image stitching. In: Proceedings of the European Conference on Computer Vision (ECCV), pp. 821–835 (2018)

[23] Fischler, M.A., Bolles, R.C.: Random sample consensus: a paradigm for model fitting with applications to image analysis and automated cartography. Communications of the ACM 24(6), 381–395 (1981)

[24] Liao, T., Li, N.: Single-perspective warps in natural image stitching. IEEE Transactions on Image Processing 29, 724–735 (2019)
