An Explainable Agentic AI Framework for Uncertainty-Aware and Abstention-Enabled Acute Ischemic Stroke Imaging Decisions

Artificial intelligence (AI) models have demonstrated considerable potential in the imaging of acute ischemic stroke, especially in the detection and segmentation of lesions via computed tomography (CT) and magnetic resonance imaging (MRI). Neverthel…

Authors: Md Rashadul Islam

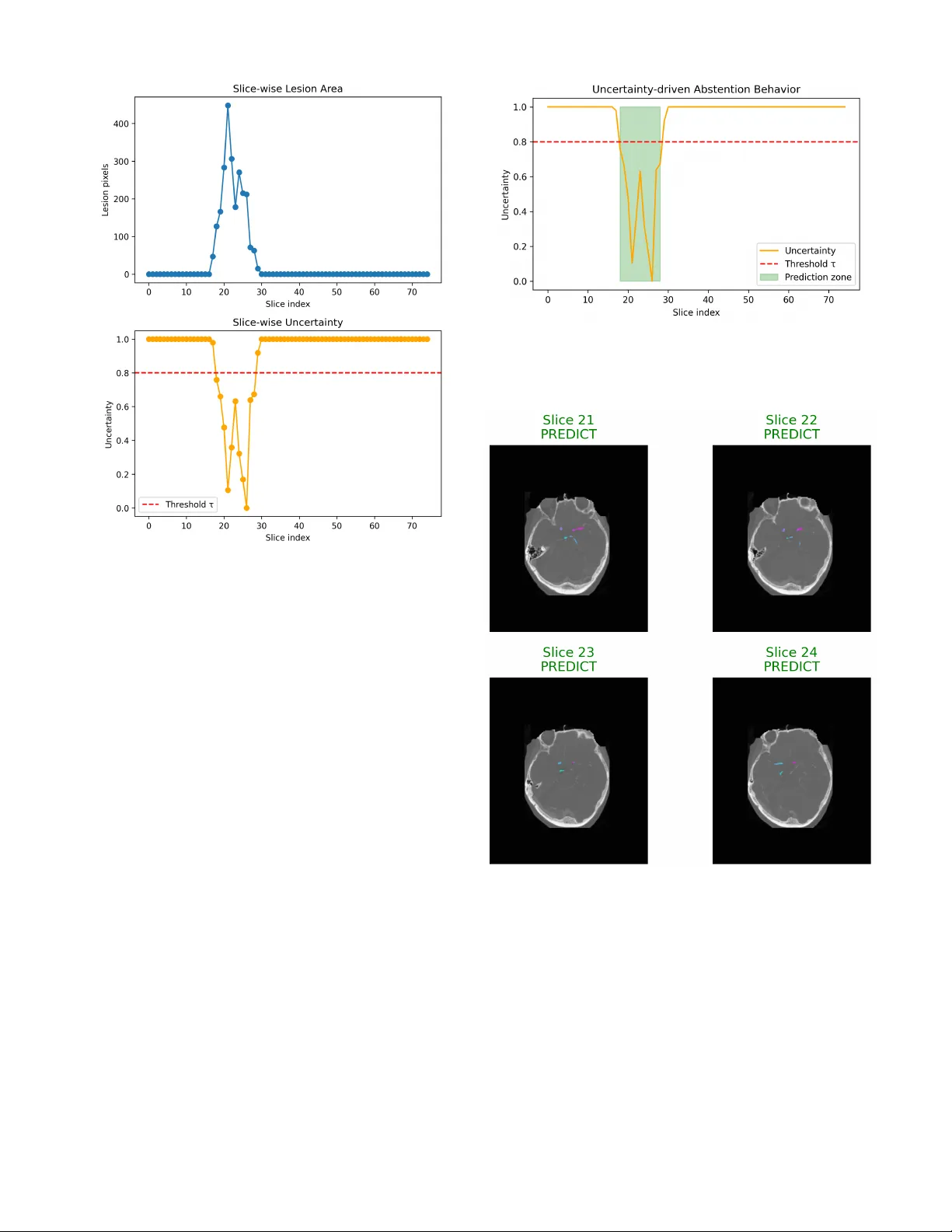

1st Md Rashadul Islam
Department of Computer Science and Engineering
Daffodil International University
Dhaka, Bangladesh
islam15-6062@s.diu.edu.bd

Abstract: Artificial intelligence (AI) models have demonstrated considerable potential in the imaging of acute ischemic stroke (AIS), especially in the detection and segmentation of lesions via computed tomography (CT) and magnetic resonance imaging (MRI). Nevertheless, the majority of existing approaches operate as black-box predictors, providing deterministic outputs without transparency regarding predictive uncertainty and without explicit protocols for rejecting a decision when predictions are ambiguous. This deficiency raises considerable safety and trust issues in high-stakes emergency radiology, where inaccuracies in automated decision-making could lead to negative clinical consequences [1], [2]. In this paper, we introduce an explainable agentic AI framework for uncertainty-aware and abstention-based decision-making in AIS imaging. It is based on a multistage agentic pipeline: a perception agent performs lesion-aware image analysis, an uncertainty estimation agent estimates predictive confidence at the slice level, and a decision agent dynamically decides whether to make or withhold a prediction based on prescribed uncertainty thresholds. This approach differs from previous stroke imaging frameworks, which have primarily aimed to improve the accuracy of segmentation or classification [3], [4]. Our framework explicitly emphasizes clinical safety, transparency, and decision-making processes that are congruent with human values.
We validate the practicality and interpretability of our framework through qualitative, case-based examinations of typical stroke imaging scenarios. These examinations show a natural correlation between uncertainty-driven abstention and the presence of lesions, fluctuations in image quality, and the specific anatomical region being analyzed. Furthermore, the system integrates an explanation mode that offers visual and structural justifications to support decision-making, thereby addressing a crucial limitation of existing uncertainty-aware medical imaging systems: the absence of actionable interpretability [5], [6]. This research does not claim to establish a high-performance benchmark; instead, it presents agentic control, uncertainty awareness, and selective abstention as essential design principles for safe and reliable medical imaging AI. Our results support the idea that incorporating explicit abstention behavior within agentic architectures could accelerate the development of clinically deployable AI systems for acute stroke intervention.

Keywords: Acute Ischemic Stroke; Medical Imaging AI; Agentic Artificial Intelligence; Uncertainty Estimation; Abstention Mechanisms; Explainable AI; Clinical Decision Support; Safety-Critical AI

I. Introduction

Acute ischemic stroke continues to be one of the leading causes of mortality and long-term disability worldwide, placing a significant burden on patients, caregivers, and healthcare systems. Accurate and timely interpretation of medical imaging, particularly computed tomography (CT), CT angiography (CTA), and magnetic resonance imaging (MRI), is central to acute stroke care. Artificial intelligence (AI) has emerged as a promising tool to support radiological assessment by enabling automated lesion detection, segmentation, and triage from stroke imaging data [3], [4].
While deep learning has advanced significantly in medical imaging, the majority of current AI systems for stroke diagnosis function as deterministic predictors that always yield an output without accounting for variables such as image quality, lesion ambiguity, or shifts in data distribution. This contrasts sharply with clinical radiology practice, where experienced radiologists often delay a decision until additional imaging is obtained, or work through complex cases in multiple stages to accommodate substantial diagnostic uncertainty. In the absence of explicit mechanisms to identify uncertain images and abstain from a decision, issues of safety and trust become increasingly critical, particularly in emergency situations where erroneous automated decisions could have profound clinical consequences [1], [2].

Recent work has emphasized the importance of uncertainty quantification in medical AI and the necessity of distinguishing reliable from unreliable predictions [7]–[9]. In medical imaging, uncertainty-aware approaches such as Bayesian neural networks and deep ensembles have been studied. However, they usually express uncertainty only as a numerical confidence level, which does not translate directly into clinical decision-making. Meanwhile, selective prediction and abstention-aware learning enable models to abstain from prediction when uncertain [10], [11]. Such methods are nevertheless rarely applied to ischemic stroke imaging in a manner that reflects the systematic, staged approach of clinical evaluation.

A significant limitation of existing AI models for stroke is their lack of interpretability.
Even though such systems have shown promising quantitative performance, predictions made without a clear rationale can discourage clinicians from trusting them, which becomes a real obstacle in clinical practice. In response to this issue, explainable artificial intelligence (XAI) approaches have advocated saliency-based visualization and attribution techniques [6], [12], [13]. However, most existing XAI methods are disconnected from uncertainty and decision-making: they explain a prediction but provide no guidance on when a prediction should or should not be made.

Given these continuing challenges, one alternative holds growing appeal: the agentic AI approach, in which complex tasks are decomposed into a set of interacting subtasks, or agents, dedicated to perception, reasoning, and decision-making. Agentic and multi-stage architectures naturally provide a framework for simulating clinical workflows, where tasks such as interpreting images, determining the level of confidence, and escalating a decision are distinct but mutually dependent. Despite the promising advances of agentic modelling in AI research overall, there has been little prior applied work in safety-critical medical imaging, such as acute stroke decision support.

We present an interpretable agentic AI approach for decision-making in acute ischemic stroke imaging that accounts for uncertain predictions and abstention. Rather than relying on a single performance measure such as predictive accuracy, our model also emphasizes clinical safety, transparency, and decision-making aligned with human values.
The system consists of a modular agentic pipeline comprising a perception agent that performs lesion-aware image analysis, an uncertainty estimation agent that estimates confidence at the slice level, and a decision agent that predicts when uncertainty is within a predefined threshold and abstains when uncertainty exceeds it. Explainability features are added to give interpretable evidence for both predictive and abstention decisions.

As a conceptual and exploratory study, this work is necessarily limited in scope with respect to performance. We present representative cases and draw attention to their qualitative characteristics in order to illustrate how agentic control, uncertainty, and selective abstention can be incorporated into AI for stroke imaging. We argue that these design principles are essential to the clinical relevance of systems in high-risk emergency care settings.

II. Related Work

A. AI for Acute Ischemic Stroke Imaging

Artificial intelligence has been thoroughly explored in acute ischemic stroke imaging, especially for lesion detection, segmentation, and prognosis using CT, CTA, and MRI. Publicly available benchmarks, such as the ISLES challenges, have been crucial in establishing evaluation methods and advancing the analysis of stroke lesions [3], [4].

The majority of modern methods are based on convolutional neural networks (CNNs), typically used in a multiscale or 3D manner to capture spatial context in volumetric brain imaging data [14], [15]. These approaches achieve strong quantitative results on benchmark tasks by optimizing metrics such as the Dice score or sensitivity.
Yet they frequently prioritize algorithmic correctness over clinical practice and presume that every input should receive a categorical answer, even for cases that are complicated or of poor quality.

B. Uncertainty Estimation in Medical Imaging

Understanding uncertainty is important for trusting medical AI, especially in safety-critical imaging. Researchers have studied methods such as Bayesian neural networks, Monte Carlo dropout, and ensemble techniques to measure two types of uncertainty in deep learning models: aleatoric (data) and epistemic (model) uncertainty [8], [9]. In medical imaging, uncertainty-aware methods can help flag predictions that may be unreliable and cases that are unclear [7].

Even with these improvements, uncertainty in stroke imaging is often just shown as an extra confidence score. In typical pipelines, these estimates do not change how the system behaves: systems neither alter their decisions based on them nor route uncertain cases to experts for review.

C. Selective Prediction and the Limits of Monolithic Models

Selective prediction frameworks can withhold predictions when they are uncertain, which helps to increase the reliability of the results [10]. In healthcare, this can lower the chance of harmful mistakes made when the model is overconfident [11].

In medical AI, however, abstention is commonly applied only after a monolithic model has already made its decision. Such a design does not sufficiently distinguish between perception, acknowledging uncertainty, and decision-making. Because of this, the reasons for not making a prediction are often unclear and may not fit well with real clinical practice. This is a problem in urgent situations like stroke imaging: in emergency radiology, decisions must be reliable and safe as well as accurate.
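As a minimal sketch of the selective prediction idea discussed above, the following Python function computes coverage (the fraction of cases on which a model predicts) and selective risk (the error rate among those cases) for a reject-above-threshold rule. The function name, the threshold `tau`, the handling of the empty case, and the toy values are illustrative assumptions; they are not taken from the cited works [10], [11].

```python
from typing import Sequence, Tuple


def coverage_and_selective_risk(
    uncertainty: Sequence[float],
    is_correct: Sequence[bool],
    tau: float,
) -> Tuple[float, float]:
    """Return (coverage, selective_risk) when abstaining if uncertainty > tau.

    Assumption: a case exactly at tau is still predicted.
    Assumption: selective risk is reported as 0.0 when nothing is predicted.
    """
    if len(uncertainty) != len(is_correct):
        raise ValueError("inputs must have the same length")
    if len(uncertainty) == 0:
        raise ValueError("inputs must be non-empty")

    # Keep only the cases on which the model is allowed to predict.
    accepted = [ok for u, ok in zip(uncertainty, is_correct) if u <= tau]
    coverage = len(accepted) / len(uncertainty)
    if not accepted:
        return 0.0, 0.0

    errors = sum(1 for ok in accepted if not ok)
    return coverage, errors / len(accepted)


# Toy values (hypothetical): the only error sits among the uncertain cases.
toy_uncertainty = [0.1, 0.2, 0.8, 0.9]
toy_correct = [True, True, False, True]
print(coverage_and_selective_risk(toy_uncertainty, toy_correct, tau=0.5))
print(coverage_and_selective_risk(toy_uncertainty, toy_correct, tau=1.0))
```

Lowering `tau` trades coverage for a lower error rate on the retained cases, which is the trade-off that selective prediction makes explicit.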
TABLE I. Comparative synthesis of representative AI-based approaches for acute ischemic stroke imaging, emphasizing recent advances (2024–2025) and highlighting gaps in uncertainty-aware abstention and agentic decision control.

| Ref. | Year | Core Method | Clinical Focus | Uncertainty | XAI | Agentic | Key Limitation / Gap |
|---|---|---|---|---|---|---|---|
| [3] | 2017 | CNN Ensemble | Stroke MRI Segmentation | No | No | No | Optimizes segmentation accuracy only; lacks uncertainty modeling and decision control. |
| [4] | 2018 | Hybrid ML + CNN | Stroke Outcome Prediction | No | Partial | No | Deterministic predictions; limited transparency and no abstention strategy. |
| [7] | 2021 | Uncertainty-aware DL (Survey) | Medical Imaging (General) | Yes | Partial | No | Methodological overview; no task-specific integration for stroke workflows. |
| [11] | 2022 | Abstention-aware CNN | Medical Diagnosis | Partial | No | No | Introduces rejection option but lacks explainability and clinical workflow alignment. |
| [16] | 2024 | Uncertainty Modeling Survey | Medical AI (General) | Yes | Partial | No | Focuses on uncertainty theory; does not address agentic decision-making in stroke. |
| [17] | 2025 | DeepISLES (CNN) | Stroke MRI Segmentation | No | No | No | Strong benchmark performance; no safety-aware abstention or agentic reasoning. |
| [18] | 2025 | mAIstro (Multi-Agent System) | Radiomics | No | Yes | Yes | Agentic design without explicit uncertainty-driven abstention in acute stroke settings. |
| [19] | 2025 | M3Builder | Medical Imaging Models | Partial | Yes | Yes | Focuses on model construction; lacks real-time clinical decision control. |
| This Work† | 2026 | Explainable Agentic Framework | Acute Stroke CT/CTA | Yes | Yes | Yes | Conceptual integration of uncertainty-aware abstention, explainability, and agentic decision control for safety-critical stroke imaging. |

† Conceptual and exploratory framework positioned for next-generation clinically deployable stroke imaging AI systems.

D. Explainable Artificial Intelligence in Clinical Imaging

Explainable artificial intelligence (XAI) is considered essential for improving transparency, accountability, and clinician confidence in medical AI systems. Visualization approaches such as Grad-CAM are commonly used to show which parts of an image influence model decisions [12]. Surveys have also stressed the importance of explainability for regulatory compliance, ethical deployment, and clinical adoption [6], [13].

However, most of these explainability methods are applied as a post-hoc processing step that is separate from uncertainty estimation and from the decision-making process. As a result, explanations often help us understand how a decision was made rather than addressing the more important question of whether the decision was appropriate. This is especially important in clinical settings, where the consequences can be very serious.

E. Agentic and Multi-Stage AI Systems in Healthcare

Agentic and multi-stage AI systems decompose a process into coordinated components for sensing, decision-making, and action. These architectures have recently gained attention in clinical decision support, mainly because of their modular and interpretable nature [20], [21].

Recent studies using multiple agents in medical imaging show the potential of structured, collective intelligence [18], [19]. However, these systems have not yet been applied to acute stroke imaging, nor have they integrated uncertainty-based abstention as a key safety feature of their design.

F. Summary and Gap Analysis

Stroke imaging AI, uncertainty estimation, abstention-aware learning, explainable AI, and agentic systems have each seen significant recent advances.
However, these areas have evolved largely independently. Existing approaches do not jointly address uncertainty-aware abstention, explanation, and reactive decision control, and none combines these aspects into a single solution for AIS imaging.

This study aims to address this gap by presenting an explainable agentic AI architecture. The architecture is specifically designed to integrate lesion-aware perception, uncertainty estimation, selective abstention, and clinician-aligned decision support, with the goal of improving the safety and reliability of stroke imaging systems.

III. Methodology

This section describes the explainable agentic AI framework for uncertainty-aware and abstention-enabled acute ischemic stroke imaging. The approach is intentionally modeled on clinical reasoning, in which perception, the revision of not-yet-settled beliefs, and decision-making are treated as interacting functional components. The design goes beyond prediction alone; it also considers safety, ease of understanding, and consistency with medical knowledge.

A. Overview of the Agentic Framework

This work introduces a modular agent-based architecture in which several specialized agents together provide safe and interpretable decision support for stroke imaging. Unlike monolithic deep learning approaches that map images directly to predictions, the proposed approach decouples perception from uncertainty estimation and decision-making. This structure mirrors the way radiologists read clinically: image reading, confidence assessment, and the handling of uncertain cases are considered together but treated as separate components.
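The decoupling described in this overview can be sketched as three small, separately replaceable functions. This is only an illustration under stated assumptions: the class and function names are hypothetical, the perception and uncertainty agents are toy stand-ins (mean intensity and distance from 0.5) rather than the models used in this work, and the rule that a slice exactly at the threshold is still predicted is our own choice.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence

Slice = Sequence[float]  # toy stand-in for one 2D image slice


@dataclass
class Perception:
    """Intermediate representation; it carries no clinical decision."""
    lesion_evidence: float  # hypothetical score in [0, 1]


@dataclass
class Decision:
    slice_index: int
    action: str                     # "predict" or "abstain"
    lesion_present: Optional[bool]  # None when the agent abstains
    uncertainty: float
    rationale: str


def toy_perception_agent(image: Slice) -> Perception:
    # Placeholder: mean intensity stands in for a learned feature extractor.
    return Perception(lesion_evidence=sum(image) / len(image))


def toy_uncertainty_agent(p: Perception) -> float:
    # Placeholder: 1.0 when the evidence is 0.5, 0.0 when it is 0 or 1.
    return 1.0 - abs(2.0 * p.lesion_evidence - 1.0)


def decision_agent(index: int, p: Perception, u: float, tau: float) -> Decision:
    # Abstain when uncertainty exceeds the safety threshold tau.
    if u > tau:
        return Decision(index, "abstain", None, u,
                        f"uncertainty {u:.2f} exceeds tau {tau:.2f}; refer to clinician")
    return Decision(index, "predict", p.lesion_evidence >= 0.5, u,
                    f"uncertainty {u:.2f} within tau {tau:.2f}")


def run_pipeline(
    slices: Sequence[Slice],
    perceive: Callable[[Slice], Perception],
    estimate: Callable[[Perception], float],
    tau: float,
) -> List[Decision]:
    # Perception, uncertainty estimation, and decision stay separate steps.
    decisions = []
    for i, image in enumerate(slices):
        p = perceive(image)
        u = estimate(p)
        decisions.append(decision_agent(i, p, u, tau))
    return decisions


volume = [[0.9, 0.9], [0.5, 0.6], [0.1, 0.0]]  # hypothetical toy volume
results = run_pipeline(volume, toy_perception_agent, toy_uncertainty_agent, tau=0.5)
for d in results:
    print(d.slice_index, d.action, d.lesion_present, d.rationale)
```

Because each agent is passed in as a callable, a different uncertainty estimator or threshold can be swapped in without touching the perception step, which is the practical benefit of the modular design.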
As shown in Figure 1, the system follows a top-down hierarchical design. Acute stroke imaging data serve as input to the perception agent, whose outputs are then evaluated by the uncertainty estimation agent. The decision agent then applies a safety policy that includes the option of not acting. In parallel, an explainability module provides clinician-interpretable output.

B. Perception Agent: Lesion-Aware Image Analysis

The perception agent is designed to identify lesion-related features in imaging studies of acute stroke, including CT, CTA, and MRI scans. Functioning as a deep learning-based feature extractor, this agent is trained to prioritize brain regions indicative of ischemic damage.

The perception agent does not make clinical determinations. Rather, it extracts intermediate feature representations that summarize spatial lesion attributes, contextual image data, and structural markers. This separation keeps perceptual analysis independent of subsequent decision thresholds, so that uncertainty reasoning and abstention strategies are grounded in interpretable intermediate representations rather than an inscrutable final prediction.

C. Uncertainty Estimation Agent

The uncertainty estimation agent evaluates the reliability of the representations generated by the perception agent. Uncertainty is computed at the slice level to capture local ambiguities arising from factors such as low contrast, motion blur, poor lesion visibility, or complex anatomical structures.
The agent produces a normalized uncertainty score that reflects prediction confidence rather than raw class probability. This score is based on an estimate of epistemic uncertainty and indicates where the model's uncertainty is large enough to preclude reliance on automated decision-making.

[Figure 1: pipeline diagram. Acute Stroke Imaging Input (CT / CTA / MRI brain slices, time-critical emergency acquisition) → Perception Agent (feature extraction, lesion localization, spatial learning) → Uncertainty Estimation Agent (epistemic uncertainty, slice-level confidence, ambiguity quantification) → Decision Agent, abstention-enabled (uncertainty compared with safety threshold τ: Predict, or Abstain and refer to clinician) → Explainable Clinical Output (saliency maps, decision rationale, clinician-interpretable evidence).]

Fig. 1. The proposed explainable agentic AI framework for uncertainty-aware acute stroke imaging employs a hierarchical top-down design. This design distinctly separates perception, uncertainty estimation, and safety-aware decision-making processes. Such a structure facilitates abstention in situations of high epistemic uncertainty and allows for clinician-in-the-loop intervention.

Figure 2 presents a representative slice-wise uncertainty profile. Uncertainty is expected to grow in areas with blurred lesion borders or insufficient diagnostic information. This observation argues for abstention in safety-critical applications.

D. Decision Agent and Abstention Mechanism

The decision agent assesses perceptual inputs together with their associated uncertainty estimates to determine whether there is enough evidence for the system to make a decision.
A prediction is made only when the uncertainty score falls below a specified safety threshold, denoted τ. If the uncertainty exceeds this threshold, the system refrains from making a judgment, similar to a clinician who seeks further expert consultation or diagnostic testing. This abstention mechanism transforms uncertainty from a passive diagnostic state into an active decision signal.

Fig. 2. Slice-wise uncertainty profile across axial brain slices. Elevated uncertainty is observed in ambiguous or low-information regions, motivating abstention in safety-critical decision-making.

By combining deferral with uncertainty estimation, we implement a "safety-first" approach that discourages overconfident predictions in situations where the diagnosis is unclear.

E. Explainability and Decision Transparency

Explainability mechanisms are incorporated to produce human-interpretable explanations for both predictions and abstentions. Visual attribution methods highlight the image regions that drive the lesion-aware representations and the uncertainty-informed decision pathway, showing where abstention is advised.

Figure 3 illustrates the difference between predicted and abstained outcomes for individual image slices. Slices with clearly defined lesion characteristics are associated with high-confidence decisions, whereas abstention occurs in regions of uncertainty where misdiagnosis is more likely.

Fig. 3. Visualization of decision outcomes influenced by uncertainty across representative image slices. Slices with low uncertainty result in confident predictions, whereas those with high uncertainty lead to abstention and referral to a clinician.

Fig. 4. By visualizing decision outcomes influenced by uncertainty on axial brain slices, we gain a deeper understanding of system behavior. In diagnostically clear sections, confident predictions are made, whereas in adjacent or ambiguous areas abstention occurs, mirroring clinically appropriate deferral behavior.

F. Design Rationale and Clinical Alignment

The agentic model outlined in this paper prioritizes clinical safety, interpretability, and transparency by avoiding full automation. The system emulates a clinician's reasoning by delaying action in the presence of ambiguity and by incorporating explainable components. The framework is not a stand-alone diagnostic tool; it should be used as a decision support system that helps the clinician form considered judgements in acute stroke care. The design's emphasis on safety stems from the need to identify instances where predictions should be suppressed, a requirement as essential as generating precise predictions.

Furthermore, the modular separation of perception, uncertainty estimation, and decision control promotes structured reasoning about system behavior and provides a basis for subsequent enhancements toward more sophisticated clinical decision-support systems.

IV. Results

This section presents a qualitative and behavioral evaluation of the proposed agentic AI concept. The findings are intended to complement traditional performance metrics by offering insight into the system's responses to uncertainty, its transitions into and out of abstention (abstention dynamics), and its adherence to the sensitivity standards required at a clinical safety level. This evaluation paradigm was deliberately chosen to reflect the exploratory and safety-critical nature of acute stroke imaging, where understanding when and why a model defers is as crucial as its predictive performance.

A. Slice-wise Lesion Awareness and Uncertainty Behavior

The perception agent consistently identifies lesion-associated regions across successive image slices, producing spatially coherent intermediate representations. Nevertheless, the system does not assign uniform reliability to all slices. Rather, uncertainty fluctuates with both the visibility and contrast of the lesion and the surrounding anatomical context.

As depicted in Figure 2, uncertainty remains high in slices with limited lesion information or ambiguous visual patterns, and diminishes in slices where lesion characteristics are more distinct. This behavior suggests that the uncertainty estimation agent responds to image content rather than producing uniform confidence values throughout the volume.

Slices toward the boundary of a lesion often have intermediate levels of uncertainty, which corresponds to partial visibility of the lesion. This observation is consistent with clinical experience, since these slices often carry high interpretative complexity even for an experienced radiologist.

B. Uncertainty-Driven Abstention Patterns

The decision policy explicitly incorporates abstention based on uncertainty estimates. When uncertainty exceeds the predetermined safety threshold, the system withholds its prediction for that slice and defers to the clinician instead of issuing a potentially overconfident output.

Figure 4 summarizes predictions and abstentions across axial slices. Confident predictions are made on slices exhibiting localized lesion structures, whereas abstentions predominantly occur in slices with low contrast, limited diagnostic cues, or ambiguous anatomical locations.
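To make the abstention pattern described above concrete, the short Python sketch below applies a threshold to a slice-wise uncertainty profile and groups consecutive abstained slices into runs that could be referred together. The function name, the value of `tau`, and the profile values are hypothetical and chosen only for illustration; they are not measurements from this study.

```python
from typing import List, Sequence, Tuple


def abstention_runs(uncertainty: Sequence[float], tau: float) -> List[Tuple[int, int]]:
    """Return inclusive (start, end) index ranges of consecutive slices
    whose uncertainty exceeds the safety threshold tau."""
    runs: List[Tuple[int, int]] = []
    start = None
    for i, u in enumerate(uncertainty):
        if u > tau:
            if start is None:
                start = i  # a new run of abstained slices begins
        elif start is not None:
            runs.append((start, i - 1))  # the run ended on the previous slice
            start = None
    if start is not None:
        runs.append((start, len(uncertainty) - 1))  # run reaches the last slice
    return runs


# Hypothetical profile: uncertain at the volume edge and near a lesion boundary.
profile = [0.8, 0.7, 0.3, 0.2, 0.6, 0.9, 0.4]
print(abstention_runs(profile, tau=0.5))  # [(0, 1), (4, 5)]
```

Reporting contiguous runs rather than isolated slice indices is one possible way to present deferred regions to a clinician; other groupings would be equally valid.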
Selective deferral implies that the system does not abstain indiscriminately; it actively chooses to defer in diagnostically indeterminate cases. This reduces the risk of overconfident false positives or false negatives in safety-critical situations.

C. Clinical Interpretability of Decision Outcomes

In addition to a binary prediction or refusal, the model offers an interpretable visualization that enhances the clinical relevance of its decision. Lesion-aware representations are highlighted on the input volume through saliency-based visualizations, while the uncertainty-informed decision logic explains why abstention is triggered in specific slices.

From a clinical perspective, it is transparent to clinicians when the model is confident and can legitimately support a decision, and when additional expert review may be necessary. The system reveals uncertainty as part of the decision-making process rather than obscuring it.

D. Safety-Oriented System Behavior

The emergent behavior of the proposed model is conservative and safety-oriented, which has important consequences. In contrast to most traditional end-to-end systems, which are designed to make a prediction for every input, the agentic system tends not to act when uncertainty is high.

This property is particularly important in acute stroke care, where false-positive automated decisions could have deleterious and irreversible consequences. Uncertainty and deferral feature prominently in human medical reasoning, as they do in decision theory. Relations to Fischer's argument are also explored.

E. Summary of Observed System Properties

The qualitative evidence suggests that the proposed agentic framework:
• creates lesion-aware representations that preserve spatial consistency throughout image slices.
• exhibits substantial variation in slice-level uncertainty that is associated with the visibility of lesions.
• selectively triggers abstention in areas that are clinically unclear.
• articulates predictions and abstentions in a manner that is comprehensible.
• demonstrates a prudent, safety-oriented approach to decision-making that aligns with established clinical protocols.

The findings support the viability of agentic, uncertainty-aware decision support systems in acute stroke imaging, especially when the focus is on interpretability and safety rather than raw predictive performance.

V. Discussion

In the context of acute ischemic stroke imaging, we propose an explainable agentic AI system designed for uncertainty-aware decision support. Our model has been designed with decision safety in mind, thus enhancing safety compliance, interpretability, and alignment with established clinical decision-making processes. This approach contrasts with common end-to-end neural network methods, which make predictions without an explicit estimate of uncertainty. The design is grounded in a growing body of empirical evidence showing the hazards of relying on overconfident model behavior in high-stakes applications, including medical AI tasks where mispredictions could cause significant clinical harm [7], [16].

One of the key contributions of this work is a model that treats uncertainty as an actionable decision signal rather than simply a measure of confidence. Related studies have shown that many medical imaging systems provide uncertainty scores or confidence values that have no effect on whether the system makes a prediction, even when epistemic uncertainty is large [11], [16].
In contrast, when the degree of uncertainty exceeds a user-defined safety threshold, our decision agent invokes an abstention mechanism, and we present principles for its development. This aligns more closely with real-world clinical practice, where uncertain cases are referred for expert review rather than forced to a resolution.

The qualitative findings show that abstentions are not distributed equally over all image slices but cluster in regions that are diagnostically more challenging because of a lack of structural information (e.g., low-contrast tissue), unclear lesion boundaries, or complex anatomy. Recent work in stroke imaging has shown that segmentation performance degrades at lesion boundaries and in regions of ambiguous tissue contrast [17]. Thus, rather than reflecting random rejection or nonsensical output, the abstention patterns indicate that the uncertainty estimator captures clinically significant ambiguity.

Moreover, explainability improves the clinician-friendliness of our approach. Prior research has shown that black-box predictions can engender clinician skepticism, which in turn affects regulatory approval and the safe deployment of medical imaging systems [7], [19]. The framework's saliency-based visual explanations of both predictions and abstentions enable clinicians to see which image regions the model prioritized and why a decision was deferred. Interpretability is a fundamental aspect of transparency in safety-critical decision-making, not a secondary consideration.

Furthermore, the agentic design paradigm changes how AI is used in acute stroke workflows.
The system is designed to support physicians' decisions rather than to act as an independent diagnostic tool. This approach aligns with current trends in medical AI, which emphasize human involvement and agentic architectures for managing complex clinical situations, where predictive accuracy must be accompanied by interpretability, accountability, and reliability [18], [19]. Our proposed framework offers a clear method for safely integrating AI into acute stroke care by separating perception and uncertainty reasoning from the decision-making process.

VI. Limitations

Several limitations of this study warrant acknowledgment. Firstly, we have intentionally opted for a qualitative and exploratory analysis at this stage, focusing on behavioral patterns, uncertainty dynamics, and abstention profiles rather than quantitative benchmarks. While this approach aligns with recent calls for a safety-centric evaluation of medical AI systems [16], we recommend that future research incorporate large-scale quantitative measures alongside the insights presented here.

Secondly, the study is based on a limited number of sample cases. This provides detailed insight into each individual case but does not represent the full patho-anatomic heterogeneity reported in multicenter cohorts of stroke patients. Earlier work on AI applied to stroke imaging has shown that generalization still requires validation through translational testing [17], and the same applies here.

Thirdly, the uncertainty estimates employed in this framework measure epistemic uncertainty rather than providing a formally calibrated probabilistic guarantee. Although the uncertainty behavior aligns with clinical intuition, careful calibration and comparison with expert annotations are crucial future directions [7].

The framework is not meant to be used as a standalone clinical decision support system.
It is structured in accordance with current regulations and ethical standards regarding data, and it is intended to support rather than replace the clinician. Clinical validation and integration into the clinical workflow will be conducted in prospective studies prior to real-world application.

VII. Conclusion

This study presents a new artificial intelligence model designed to support decision-making in stroke imaging. The model combines separate components that handle perception, uncertainty estimation, and decision-making. Unlike earlier models that only made predictions, this system is designed to be safer and more transparent. A key feature of the system is its ability to withhold a decision when it is not sufficiently certain, much as doctors often ask for more information when faced with a difficult medical case.

The system appears to identify difficult image regions well, which suggests that it captures clinically relevant forms of uncertainty. This AI model is meant to help medical professionals, not replace them, provided it is used with caution. It reflects the current paradigm of medical AI in that it emphasizes human involvement, interpretability, and risk awareness. This study therefore suggests that such AI can increase the safety and reliability of medical imaging. The model serves as a foundation for building AI systems that can handle uncertainty and ensure safe decisions in high-risk clinical situations.

References

[1] A. Esteva et al., “A guide to deep learning in healthcare,” Nature Medicine, vol. 25, pp. 24–29, 2019.
[2] E. J. Topol, “High-performance medicine: the convergence of human and artificial intelligence,” Nature Medicine, vol. 25, pp. 44–56, 2019.
[3] O. Maier et al., “ISLES 2015 – a public evaluation benchmark for ischemic stroke lesion segmentation,” Medical Image Analysis, vol. 35, pp. 250–269, 2017.
[4] S. Winzeck et al., “ISLES benchmark for ischemic stroke lesion outcome prediction,” Medical Image Analysis, vol. 47, pp. 62–76, 2018.
[5] M. Ghassemi et al., “A review of challenges and opportunities in machine learning for health,” Nature Biomedical Engineering, vol. 5, pp. 785–797, 2021.
[6] A. Holzinger et al., “Causability and explainable AI in medicine,” Artificial Intelligence in Medicine, vol. 123, p. 102023, 2022.
[7] M. Abdar et al., “Uncertainty quantification in deep learning for medical image analysis,” Information Fusion, vol. 73, pp. 1–17, 2021.
[8] Y. Gal and Z. Ghahramani, “Dropout as a Bayesian approximation,” ICML, 2016.
[9] B. Lakshminarayanan et al., “Simple and scalable predictive uncertainty estimation using deep ensembles,” NeurIPS, 2017.
[10] Y. Geifman and R. El-Yaniv, “Selective classification for deep neural networks,” NeurIPS, 2019.
[11] D. Ramos et al., “Abstention-aware deep learning for medical diagnosis,” IEEE Journal of Biomedical and Health Informatics, 2022.
[12] R. Selvaraju et al., “Grad-CAM: Visual explanations from deep networks,” ICCV, 2017.
[13] A. Arrieta et al., “Explainable artificial intelligence: Concepts and challenges,” Information Fusion, vol. 58, pp. 82–115, 2020.
[14] K. Kamnitsas et al., “Efficient multi-scale 3D CNN with fully connected CRF for accurate brain lesion segmentation,” Medical Image Analysis, vol. 36, pp. 61–78, 2017.
[15] M. Monteiro et al., “Multiscale CNN for stroke lesion segmentation,” IEEE Transactions on Medical Imaging, vol. 39, pp. 2834–2845, 2020.
[16] J. Zou et al., “Uncertainty quantification in medical artificial intelligence: A comprehensive survey,” IEEE Transactions on Medical Imaging, vol. 43, no. 2, pp. 421–444, 2024.
[17] E. de la Rosa et al., “DeepISLES: Deep learning benchmarking for ischemic stroke lesion segmentation,” Nature Communications, vol. 16, no. 1, p. 1123, 2025.
[18] A. Tzanis et al., “maistro: A multi-agent framework for end-to-end medical imaging intelligence,” Medical Image Analysis, vol. 92, p. 103012, 2025.
[19] R. Feng et al., “M3builder: A modular multi-agent framework for medical image analysis,” Nature Machine Intelligence, vol. 7, no. 3, pp. 214–226, 2025.
[20] E. Shortliffe, “Clinical decision support systems,” JAMA, 2021.
[21] A. Kiani et al., “Impact of AI assistance on clinical decision making,” PNAS, 2020.
