Parametrized Sharing for Multi-Agent Hybrid DRL for Multiple Multi-Functional RISs-Aided Downlink NOMA Networks

Multi-functional reconfigurable intelligent surface (MF-RIS) is conceived to address the communication efficiency thanks to its extended signal coverage from its active RIS capability and self-sustainability from energy harvesting (EH). We investigat…

Authors: Chi-Te Kuo, Li-Hsiang Shen, Jyun-Jhe Huang

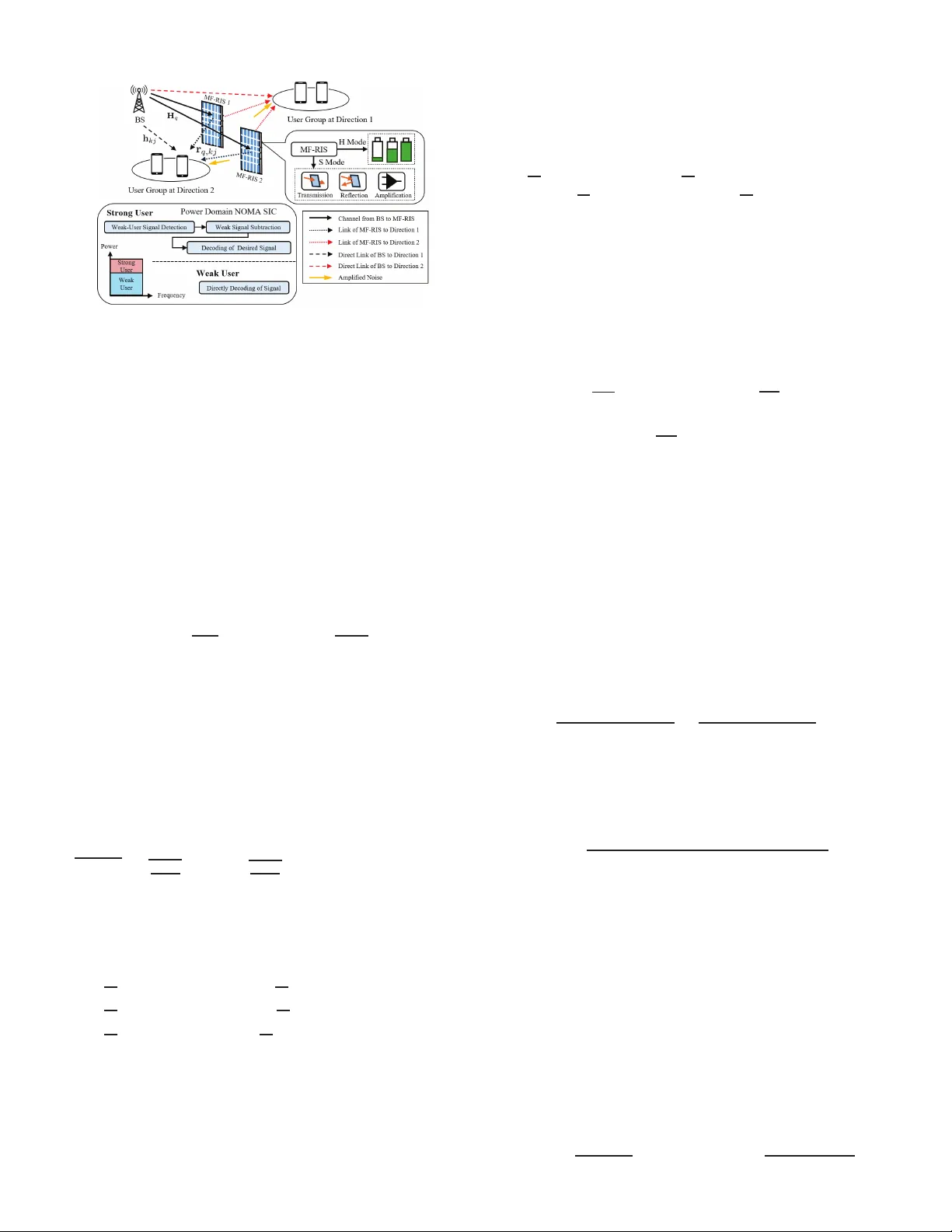

Chi-Te Kuo, Li-Hsiang Shen, Member, IEEE, and Jyun-Jhe Huang

Abstract: Multi-functional reconfigurable intelligent surface (MF-RIS) is conceived to address the communication efficiency thanks to its extended signal coverage from its active RIS capability and self-sustainability from energy harvesting (EH). We investigate the architecture of multi-MF-RISs to assist non-orthogonal multiple access (NOMA) downlink networks. We formulate an energy efficiency (EE) maximization problem by optimizing power allocation, transmit beamforming and MF-RIS configurations of amplitudes, phase-shifts and EH ratios, as well as the position of MF-RISs, while satisfying constraints of available power, user rate requirements, and self-sustainability property. We design a parametrized sharing scheme for multi-agent hybrid deep reinforcement learning (PMHRL), where the multi-agent proximal policy optimization (PPO) and deep-Q network (DQN) handle continuous and discrete variables, respectively. The simulation results have demonstrated that the proposed PMHRL has the highest EE compared to other benchmarks, including cases without parametrized sharing, pure PPO and DQN. Moreover, the proposed multi-MF-RISs-aided downlink NOMA achieves the highest EE compared to scenarios of no-EH/amplification, traditional RISs, and deployment without RISs/MF-RISs under different multiple access.

Index Terms: Multi-functional RIS, NOMA, energy efficiency, hybrid deep reinforcement learning, parametrized sharing.

I. INTRODUCTION

In the era of sixth-generation (6G) wireless communications, the scarcity of spectrum resources has driven researchers to explore more efficient transmission technologies [1].
Among them, non-orthogonal multiple access (NOMA) has emerged as a promising solution due to its capability to serve multiple users simultaneously over the same time-frequency resource [2]. Compared to the traditional orthogonal multiple access (OMA) mechanism, NOMA exhibits significantly higher spectral efficiency. However, the performance of NOMA is often hindered by challenges such as severe channel fading and inter-user interference, which potentially degrade the signal quality and limit its practical deployment [3]. To address these issues, reconfigurable intelligent surfaces (RISs) have been proposed as an enabling technology [4]. By adjusting the configuration of RIS elements, a virtual line-of-sight (LoS) link can be established to bypass obstacles between the transmitter and receiver and to improve the signal quality for combating channel fading [5]. Although RIS holds promise for next-generation systems, its practical deployment remains constrained by several inherent limitations. In particular, it can only operate over a 180-degree half-space coverage area and depends on external power supplies, which confines its independent operation and scalability. Against this backdrop, the concept of multi-functional RIS (MF-RIS) has been proposed [6], integrating the function of simultaneous transmission and reflection RIS (STAR-RIS) [5], providing 360-degree full-space coverage and realizing ubiquitous service [7], [8].

Chi-Te Kuo, Li-Hsiang Shen and Jyun-Jhe Huang are with the Department of Communication Engineering, National Central University, Taoyuan 320317, Taiwan (email: harber9322242@gmail.com, shen@ncu.edu.tw, and kenneth900912@g.ncu.edu.tw).
Moreover, energy harvesting (EH) capability at radio frequency (RF) is designed in MF-RIS, allowing it to capture wireless energy from incident electromagnetic signals and operate in a self-sustainable manner [9]. This design reduces the requirements on wired power infrastructure or frequent battery replacement, improving system energy efficiency (EE) and deployment flexibility. Additionally, MF-RIS enhances the traditional passive reflection by incorporating active components for signal amplification, improving the weak channel conditions in NOMA networks [10]–[12]. Moreover, there will be increasing needs of deploying multiple MF-RISs for wider coverage requirements.

In this work, we investigate a novel architecture of deploying multiple MF-RISs for assisting downlink NOMA networks [13]. NOMA users share the same spectrum in multi-MF-RISs-aided networks. On the other hand, MF-RISs contribute to constructing favorable channel conditions for NOMA user groups by mitigating channel fading and interference effects. Moreover, we design a scheme based on deep reinforcement learning (DRL) techniques to enable adaptive policy learning under high-dimensional and dynamic environments. Unlike conventional DRL handling either discrete or continuous actions separately, a general hybrid DRL framework should be adopted to effectively address complex hybrid continuous-discrete action spaces. The main contributions of this work are summarized as follows:

• We investigate multi-MF-RISs-aided downlink NOMA networks. We consider power-domain NOMA, where a group of users shares the same frequency resource. MF-RISs are capable of extending the transmission range by reflecting, transmitting, and amplifying signals, while harvesting partial signal energy for operation.
• We aim at maximizing system EE by deciding power allocation, base station (BS) beamforming and MF-RIS configurations of amplification/phase-shifts/EH ratios and MF-RIS positions. Note that MF-RIS circuit power is also considered. A parametrized sharing in multi-agent hybrid deep reinforcement learning (PMHRL) scheme is designed, where hybrid DRL tackles the joint continuous-discrete variables by proximal policy optimization (PPO) and deep-Q network (DQN), respectively. Parametrized sharing enables information sharing between the dual modules.
• Results have demonstrated that PMHRL achieves the highest EE compared to other existing benchmarks of conventional DRLs and those without parametrized sharing. The proposed architecture of multi-MF-RISs-aided downlink NOMA achieves the highest EE among the cases without EH, conventional RISs, non-amplified signals and deployments without RISs/MF-RISs.

II. SYSTEM MODEL AND PROBLEM FORMULATION

In a multi-MF-RISs-assisted downlink NOMA network in Fig. 1, we consider a BS equipped with $N$ transmit antennas with the set of $\mathcal{N} = \{1, 2, \ldots, N\}$, serving $J_k$ users at direction $k$ indexed by the set of $\mathcal{J}_k = \{1, 2, \ldots, J_k\}$. We consider a total of $K$ directions for NOMA transmission groups, where $\mathcal{K} = \{1, 2, \ldots, K\}$. We consider $Q$ MF-RISs with the set of $\mathcal{Q} = \{1, 2, \ldots, Q\}$. Furthermore, we consider a Cartesian coordinate system with the locations of the BS, MF-RIS, and user being $\mathbf{w}_b = [x_b, y_b, z_b]^T$, $\mathbf{w}_q = [x_q, y_q, z_q]^T$, and $\mathbf{w}_{kj} = [x_{kj}, y_{kj}, 0]^T$, respectively. Note that $T$ indicates the transpose operation.

Fig. 1. The proposed architecture of multi-MF-RISs-assisted downlink NOMA.
Due to the limited coverage of MF-RIS, its deployable region is also limited by $\mathcal{W}$, where the following constraint is satisfied: $\mathbf{w}_q \in \mathcal{W} = \{[x_q, y_q, z_q]^T \mid \mathbf{w}_{\min} \preceq \mathbf{w}_q \preceq \mathbf{w}_{\max}\}$, with its deployable area bounded by $\mathbf{w}_{\min}$ and $\mathbf{w}_{\max}$. Each MF-RIS has $M$ elements indexed by the set of $\mathcal{M} = \{1, 2, \ldots, M\}$, arranged in a two-dimensional array with $M = M_h \cdot M_v$ elements, where $M_h$ and $M_v$ indicate the respective numbers of elements in the horizontal and vertical axes. Each MF-RIS configuration can be defined as

$$\boldsymbol{\Theta}_q^k = \mathrm{diag}\left(\alpha_{q,1}\sqrt{\beta_{q,1}^k}\, e^{j\theta_{q,1}^k}, \ldots, \alpha_{q,M}\sqrt{\beta_{q,M}^k}\, e^{j\theta_{q,M}^k}\right),$$

where $\theta_{q,m}^k \in [0, 2\pi)$ and $\beta_{q,m}^k \in [0, \beta_{\max}^k]$ denote the phase-shift and amplitude coefficients of the MF-RIS at the $k$-th direction, respectively. Note that $\beta_{\max} > 1$ denotes signal amplification, whereas $\beta_{\max} \le 1$ indicates a conventional RIS without amplification capability. Each element of the MF-RIS can operate in energy harvesting (EH) mode (H mode) or signal mode (S mode) by adjusting the EH coefficient $\alpha_{q,m} \in \{0, 1\}$. Note that $\alpha_{q,m} = 1$ implies that the element operates in S mode, whilst $\alpha_{q,m} = 0$ indicates that it functions in H mode only.

We consider the Rician fading channel model between the BS and the $q$-th MF-RIS as

$$\mathbf{H}_q = \sqrt{h_0 d_q^{-k_0}} \left( \sqrt{\frac{\beta_0}{\beta_0 + 1}}\, \mathbf{H}_q^{\mathrm{LoS}} + \sqrt{\frac{1}{\beta_0 + 1}}\, \mathbf{H}_q^{\mathrm{NLoS}} \right) \in \mathbb{C}^{M \times N},$$

where $h_0$ is the pathloss at the reference distance of 1 meter, $d_q = \|\mathbf{w}_b - \mathbf{w}_q\|_2$ is the distance, and $k_0$ is the path loss exponent. $\beta_0$ is the Rician factor adjusting the portions of the LoS component $\mathbf{H}_q^{\mathrm{LoS}}$ and the non-LoS (NLoS) component $\mathbf{H}_q^{\mathrm{NLoS}}$.
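The Rician model above can be illustrated with a minimal sketch that draws one realization of the BS-to-MF-RIS channel. It is only an illustration under stated assumptions: the function name `rician_channel` and its argument names are hypothetical, the default values are the communication parameters reported in the simulation table, the NLoS entries are assumed to be i.i.d. unit-variance complex Gaussian, and the LoS matrix is passed in by the caller (an all-ones placeholder is used in the usage lines).

```python
import math
import random


def rician_channel(h_los, w_b, w_q, h0_db=-20.0, k0=2.2, beta0_db=3.0, rng=None):
    """Draw one realization of the M x N Rician channel as nested lists.

    h_los    : M x N LoS matrix (list of rows of complex numbers), supplied by the caller
    w_b, w_q : 3-D positions of the BS and the MF-RIS in meters
    Defaults follow the simulation table; names are illustrative only.
    """
    rng = random.Random() if rng is None else rng
    h0 = 10 ** (h0_db / 10)        # reference pathloss at 1 m, linear scale
    beta0 = 10 ** (beta0_db / 10)  # Rician factor, linear scale
    d_q = math.dist(w_b, w_q)      # Euclidean BS to MF-RIS distance
    scale = math.sqrt(h0 * d_q ** (-k0))
    w_los = math.sqrt(beta0 / (beta0 + 1))
    w_nlos = math.sqrt(1 / (beta0 + 1))
    s = math.sqrt(0.5)             # per-dimension std so each NLoS entry has unit variance
    return [
        [scale * (w_los * x + w_nlos * complex(rng.gauss(0, s), rng.gauss(0, s)))
         for x in row]
        for row in h_los
    ]


# Usage with a placeholder all-ones LoS matrix (M = 32 elements, N = 6 antennas).
h_los = [[1 + 0j] * 6 for _ in range(32)]
H_q = rician_channel(h_los, w_b=(0, 0, 5), w_q=(5, 10, 10), rng=random.Random(0))
print(len(H_q), len(H_q[0]))
```

A larger Rician factor shifts weight toward the deterministic LoS term, so the realization approaches the scaled LoS matrix as `beta0_db` grows.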
The LoS component [14] is expressed as

$$\mathbf{H}_q^{\mathrm{LoS}} = \left[1, e^{-j\frac{2\pi}{\lambda} d_R \sin\bar{\psi}_{r,q} \sin\bar{\theta}_{r,q}}, \cdots, e^{-j\frac{2\pi}{\lambda}(M_z - 1) d_R \sin\bar{\psi}_{r,q} \sin\bar{\theta}_{r,q}}\right]^T \otimes \left[1, e^{-j\frac{2\pi}{\lambda} d_R \sin\bar{\psi}_{r,q} \cos\bar{\theta}_{r,q}}, \cdots, e^{-j\frac{2\pi}{\lambda}(M_y - 1) d_R \sin\bar{\psi}_{r,q} \cos\bar{\theta}_{r,q}}\right]^T \otimes \left[1, e^{-j\frac{2\pi}{\lambda} d_B \sin\varphi_t \cos\vartheta_t}, \cdots, e^{-j\frac{2\pi}{\lambda}(N - 1) d_B \sin\varphi_t \cos\vartheta_t}\right]^T,$$

where $\otimes$ denotes the Kronecker product and $T$ is the transpose operation. $\lambda$ indicates the wavelength of the operating frequency. Notations $d_R$ and $d_B$ denote the element spacing of the MF-RIS and the antenna separation of the BS, respectively. Notations $\bar{\psi}_{r,q}$, $\bar{\theta}_{r,q}$, $\varphi_t$, and $\vartheta_t$ represent the azimuth and elevation angles-of-arrival of MF-RIS $q$ and the angles-of-departure of the BS, respectively. Note that $\mathbf{H}_q^{\mathrm{NLoS}}$ follows Rayleigh fading.

The direct link from the BS and the reflected link from MF-RIS $q$ to user $j$ at direction $k$ are denoted by $\mathbf{h}_{kj} \in \mathbb{C}^{N \times 1}$ and $\mathbf{r}_{q,kj} \in \mathbb{C}^{M \times 1}$, respectively, associated with their distances $d_{kj}$ and $d_{q,kj}$. Both follow the same model as $\mathbf{H}_q$ but in vector form, where the LoS components are

$$\mathbf{h}_{kj}^{\mathrm{LoS}} = \left[1, e^{-j\frac{2\pi}{\lambda} d_B \sin\varphi_t \sin\vartheta_t}, \cdots, e^{-j\frac{2\pi}{\lambda}(N - 1) d_B \sin\varphi_t \sin\vartheta_t}\right]^T$$

and

$$\mathbf{r}_{q,kj}^{\mathrm{LoS}} = \left[1, e^{-j\frac{2\pi}{\lambda} d_R \sin\varphi_{t,q} \sin\vartheta_{t,q}}, \cdots, e^{-j\frac{2\pi}{\lambda}(M - 1) d_R \sin\varphi_{t,q} \sin\vartheta_{t,q}}\right]^T.$$

The NLoS parts $\mathbf{h}_{kj}^{\mathrm{NLoS}}$ and $\mathbf{r}_{q,kj}^{\mathrm{NLoS}}$ are both characterized by Rayleigh fading. Accordingly, the channel between MF-RIS $q$ and user $j$ at direction $k$ is $\mathbf{g}_{q,kj} = \mathbf{r}_{q,kj}^H \boldsymbol{\Theta}_q^k \mathbf{H}_q$, where $H$ indicates the Hermitian operation. The total combined channel from the BS to user $j$ at direction $k$ assisted by $Q$ MF-RISs is defined as $\mathbf{g}_{kj} = \mathbf{h}_{kj} + \sum_{q \in \mathcal{Q}} \mathbf{g}_{q,kj}$.

In the downlink NOMA network, users are divided into multiple groups to share spectrum resources.
The received signal of user $j$ at direction $k$ is given by

$$y_{kj} = \mathbf{g}_{kj}\mathbf{f}_k \sqrt{p_{kj}}\, s_{kj} + \mathbf{g}_{kj}\mathbf{f}_k \sum_{i \in \mathcal{J}_k \setminus \{j\}} \sqrt{p_{ki}}\, s_{ki} + \sum_{\bar{k} \in \mathcal{K} \setminus \{k\}} \mathbf{g}_{kj}\mathbf{f}_{\bar{k}} \sum_{i \in \mathcal{J}_{\bar{k}}} \sqrt{p_{\bar{k}i}}\, s_{\bar{k}i} + \sum_{q \in \mathcal{Q}} \mathbf{r}_{q,kj}^H \boldsymbol{\Theta}_q^k \mathbf{n}_q + n_{kj}, \quad (1)$$

where $\mathbf{f}_k$ represents the transmit beamforming vector of the BS for direction $k$. Moreover, $p_{kj}$ denotes the power allocation factor for user $j$ at direction $k$, where $\sum_{j \in \mathcal{J}_k} p_{kj} = 1$. $\mathbf{n}_q \sim \mathcal{CN}(\mathbf{0}, \sigma_s^2 \mathbf{I}_M)$ denotes the amplification noise from MF-RISs with element noise power $\sigma_s^2$. Notation $n_{kj}$ is the noise of user $j$ at direction $k$ with power $\sigma_u^2$. Moreover, NOMA user signals are transmitted simultaneously at the same frequency, leading to mutual interference. To decode the intended signals, users employ successive interference cancellation (SIC) [15]. Assume that users $j$ and $l$ in direction $k$ are ranked in ascending order according to the equivalent combined channel gains, associated with the condition

$$\frac{|\mathbf{g}_{kj}^H \mathbf{f}_k|^2}{|\mathbf{g}_{kl}^H \mathbf{f}_k|^2 + I_{kj} + \sigma_u^2} \le \frac{|\mathbf{g}_{kl}^H \mathbf{f}_k|^2}{|\mathbf{g}_{kj}^H \mathbf{f}_k|^2 + I_{kl} + \sigma_u^2}, \quad (2)$$

where $k \in \mathcal{K}$, $j \in \mathcal{J}_k$ denote users at direction $k$, and $l \in \mathcal{L}_k = \{j, j+1, \cdots, J_k\}$. Notation $I_{kj} = \sum_{q \in \mathcal{Q}} \sigma_s^2 \|\mathbf{r}_{q,kj}^H \boldsymbol{\Theta}_q^k\|^2$ indicates the residual interference. The signal-to-interference-plus-noise ratio (SINR) is given by

$$\gamma_{kj} = \frac{|\mathbf{g}_{kl}\mathbf{f}_k|^2 p_{kj}}{\sum_{l \in \mathcal{L}_k} |\mathbf{g}_{kl}\mathbf{f}_k|^2 p_{kl} + I_{\mathrm{IG},k} + I_{\mathrm{MR}} + \sigma_u^2}, \quad (3)$$

where $I_{\mathrm{IG},k} = \sum_{\bar{k} \in \mathcal{K} \setminus \{k\}} \sum_{i \in \mathcal{J}_{\bar{k}}} \|\mathbf{g}_{kj}\mathbf{f}_{\bar{k}}\|^2 p_{\bar{k}i}$ indicates the inter-group interference, and $I_{\mathrm{MR}} = \sum_{q \in \mathcal{Q}} \sigma_s^2 \|\mathbf{r}_{q,kj}^H \boldsymbol{\Theta}_q^k\|^2$ denotes the noise induced by the multiple MF-RISs. Therefore, the achievable rate for user $j$ at direction $k$ can be expressed as $R_{kj} = \log_2(1 + \gamma_{kj})$.

Here, we define the EH coefficient matrix for the $m$-th element of the $q$-th MF-RIS as $\mathbf{T}_{q,m} = \mathrm{diag}([0, \ldots, 0, 1 - \alpha_{q,m}, 0, \ldots, 0])$.
Therefore, the RF power received by the $m$-th element of the $q$-th MF-RIS is given by

$$P_{q,m}^{\mathrm{RF}} = \mathbb{E}\left[\left\|\mathbf{T}_{q,m}\left(\mathbf{H}_q \sum_{k \in \mathcal{K}} \mathbf{f}_k + \mathbf{n}_{q,m}\right)\right\|^2\right],$$

where $\mathbf{n}_{q,m}$ is the amplified noise introduced by the MF-RIS. To capture the RF energy conversion efficiency for different input power, a non-linear harvesting model is adopted. Accordingly, the total harvested power of the $m$-th element of the $q$-th MF-RIS is expressed as

$$P_{q,m}^{A} = \frac{\Upsilon_{q,m} - Z\Omega}{1 - \Omega},$$

where $\Upsilon_{q,m} = \frac{Z}{1 + e^{-\kappa_1 (P_{q,m}^{\mathrm{RF}} - \kappa_2)}}$ is a logistic function with respect to the received RF power $P_{q,m}^{\mathrm{RF}}$, and $Z > 0$ is a constant determining the maximum harvested power. Constant $\Omega = \frac{1}{1 + e^{\kappa_1 \kappa_2}}$ ensures a zero-input/zero-output response in H mode, with constants $\kappa_1 > 0$ and $\kappa_2 > 0$ capturing the effects of circuit sensitivity and current leakage.

To achieve self-sustainability, the total consumed power of each MF-RIS should be lower than its harvested power. Moreover, the power for controlling the MF-RIS mainly comes from the total number of PIN diodes required [16]. The quantization levels assigned for the EH ratio, amplitude and phase shifts are $L_\alpha$, $L_\beta$, and $L_\theta$, respectively, where the total number of PIN diodes per MF-RIS element is $\log_2 L_\alpha + K \log_2 L_\beta + K \log_2 L_\theta$. We have the following self-sustainability constraint per MF-RIS, i.e., $P_q^{\mathrm{con}} \le \sum_{m \in \mathcal{M}} P_{q,m}^{A}$, where

$$P_q^{\mathrm{con}} = \lceil \log_2 L_\alpha + K \log_2 L_\beta + K \log_2 L_\theta \rceil \cdot M P_{\mathrm{PIN}} + P_C + \xi \cdot P_{O,q}.$$

Here, $P_C$ denotes the power consumption of RF-to-DC power conversion, and $P_{\mathrm{PIN}}$ is the power consumption per PIN diode. Notation $\xi$ indicates the inverse of the amplifier efficiency. The output power of MF-RIS $q$ is obtained as

$$P_{O,q} = \sum_{k \in \mathcal{K}} \left( \sum_{k' \in \mathcal{K}} \|\boldsymbol{\Theta}_q^k \mathbf{H}_q \mathbf{f}_{k'}\|^2 + \sigma_s^2 \|\boldsymbol{\Theta}_q^k\|_F^2 \right),$$

where $\|\cdot\|_F$ is the Frobenius norm.
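The non-linear harvesting model and the self-sustainability check above can be sketched as follows. This is a rough illustration rather than the authors' implementation: the function names are hypothetical, the two logistic constants are named `kappa1` and `kappa2` here purely for readability, all powers are assumed to be expressed in watts, and the default values are taken from the simulation table (with $K = 2$ directions and $M = 32$ elements).

```python
import math


def harvested_power(p_rf, z=24e-3, kappa1=150.0, kappa2=0.014):
    """Non-linear (logistic) harvested power of one element, in watts (assumed unit)."""
    upsilon = z / (1.0 + math.exp(-kappa1 * (p_rf - kappa2)))
    omega = 1.0 / (1.0 + math.exp(kappa1 * kappa2))  # enforces zero output at zero input
    return (upsilon - z * omega) / (1.0 - omega)


def consumed_power(p_out, m=32, k=2, l_alpha=2, l_beta=10, l_theta=8,
                   p_pin=0.33e-3, p_c=2.1e-3, xi=1.1):
    """Consumed power of one MF-RIS: PIN-diode control, RF-to-DC conversion, amplifier."""
    n_pin = math.ceil(math.log2(l_alpha) + k * math.log2(l_beta) + k * math.log2(l_theta))
    return n_pin * m * p_pin + p_c + xi * p_out


def is_self_sustainable(p_rf_per_element, p_out, **kwargs):
    """Check that consumed power does not exceed the total harvested power."""
    harvested = sum(harvested_power(p) for p in p_rf_per_element)
    return consumed_power(p_out, m=len(p_rf_per_element), **kwargs) <= harvested


# Usage: 32 elements, each receiving a hypothetical 1 W of RF power.
print(is_self_sustainable([1.0] * 32, p_out=0.1))
```

With these defaults each element needs 14 PIN diodes, so the control power alone is about 0.148 W per MF-RIS, which the harvested power has to cover before any amplification is affordable.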
The objective is to maximize the system EE while guaranteeing the constraints of minimum user rate requirement, MF-RIS configuration and power limitation, which is formulated as

$$\max_{p_{kj},\, \mathbf{f}_k,\, \alpha_{q,m},\, \beta_{q,m}^k,\, \theta_{q,m}^k,\, \mathbf{w}_q} \ \frac{\sum_{k \in \mathcal{K}} \sum_{j \in \mathcal{J}_k} R_{kj}}{P_{\mathrm{total}}} \quad (4a)$$
$$\text{s.t.} \quad (2), \ \boldsymbol{\Theta}_q \in \mathcal{R}_\Theta, \ \forall q \in \mathcal{Q}, \quad (4b)$$
$$R_{kj} \ge R_{kj}^{\min}, \ \forall k \in \mathcal{K}, \forall j \in \mathcal{J}_k, \quad (4c)$$
$$\sum_{j \in \mathcal{J}_k} p_{kj} = 1, \ \forall k \in \mathcal{K}, \quad (4d)$$
$$\sum_{k \in \mathcal{K}} \|\mathbf{f}_k\|^2 \le P_{BS}^{\max}, \quad (4e)$$
$$P_q^{\mathrm{con}} \le \sum_{m \in \mathcal{M}} P_{q,m}^{A}, \ \forall q \in \mathcal{Q}, \quad (4f)$$
$$\mathbf{w}_q \in \mathcal{W}, \ \forall q \in \mathcal{Q}, \quad (4g)$$

where $P_{\mathrm{total}} = \sum_{q \in \mathcal{Q}} \left( P_q^{\mathrm{con}} - \sum_{m \in \mathcal{M}} P_{q,m}^{A} \right) + \sum_{k \in \mathcal{K}} \|\mathbf{f}_k\|^2$ is the total consumed power of the system. The constraint set $\mathcal{R}_\Theta$ in (4b) specifies the feasible region of the MF-RISs, i.e., $\alpha_{q,m} \in \{0, 1\}$, $\beta_{q,m}^k \in [0, \beta_{\max}^k]$, $\theta_{q,m}^k \in [0, 2\pi)$. Constraint (4c) ensures the minimum rate requirement per user $R_{kj}^{\min}$, while constraint (4d) represents the NOMA power allocation restriction. Constraint (4e) ensures that the total BS transmit power cannot exceed its budget $P_{BS}^{\max}$. Due to its non-convexity and non-linearity, problem (4) is challenging to solve. To address these difficulties, we propose a DRL-based scheme, which is detailed in the following section.

III. PROPOSED PMHRL SCHEME

A. Hybrid DRL Algorithm

We consider a multi-agent hybrid DRL framework characterized by state space $\mathcal{S}$, action space $\mathcal{A}$, and reward $\mathcal{R}$. Within this framework, each agent corresponds to a single MF-RIS, which interacts with the dynamic environment by taking actions, receiving rewards, and updating its local states accordingly. In addition, the BS is also considered as an independent agent, responsible for controlling power allocation and transmit beamforming. Conventional DRL methods suffer from high complexity, slow convergence and instability during training.
Moreover, the use of pure DQN or PPO becomes impractical, as both quantizing continuous variables into discrete ones and recovering continuous parameters from quantized ones introduce extra computational overhead and potential quantization errors. To overcome these challenges, we adopt a hybrid DRL architecture that incorporates both DQN and PPO networks for efficiently handling discrete and continuous action spaces separately. We define the state, action, and the corresponding reward as follows:

• State: The total state space is defined as a set of individual agent states $\mathcal{S}(t) = \{s_1(t), s_2(t), \ldots, s_Q(t), s_{Q+1}(t)\}$. Each agent state is designed as $s_q(t) = \{\mathbf{g}_{q,kj}(t) \mid \forall k \in \mathcal{K}, \forall j \in \mathcal{J}_k\}, \forall q \in \mathcal{Q}$, and $s_{Q+1}(t) = \{\mathbf{g}_{kj}(t) \mid \forall k \in \mathcal{K}, \forall j \in \mathcal{J}_k\}$, associated with the combined channel at timestep $t$. Note that index $1 \le q \le Q$ indicates the MF-RIS agents, whereas $q = Q + 1$ stands for the BS agent.
• Action: The action space is defined as a set of individual actions $\mathcal{A}(t) = \{a_1(t), a_2(t), \ldots, a_Q(t), a_{Q+1}(t)\}$. For agents representing MF-RIS $q \in \mathcal{Q}$, each action $a_q(t) = \{a_q^{\mathrm{dis}}(t), a_q^{\mathrm{con}}(t)\}$ is composed of both discrete and continuous components. Specifically, the discrete action corresponds to the mode selection of each MF-RIS element, defined as $a_q^{\mathrm{dis}}(t) = \{\alpha_{q,m} \mid \forall m \in \mathcal{M}\}$. On the other hand, the continuous action includes the MF-RIS configurations of amplitude and phase-shifts as well as the MF-RIS position, denoted as $a_q^{\mathrm{con}}(t) = \{\beta_{q,m}^k, \theta_{q,m}^k, \mathbf{w}_q \mid \forall k \in \mathcal{K}, \forall m \in \mathcal{M}\}$. In addition, for the $(Q+1)$-th agent representing the BS, the output action consists of only continuous variables, i.e., the power allocation for NOMA users and the beamforming vectors, defined as $a_{Q+1}(t) = \{p_{kj}, \mathbf{f}_k \mid \forall k \in \mathcal{K}, j \in \mathcal{J}_k\}$.
• Reward: We design the shared reward as the overall EE in conjunction with its constraints as penalties, given by

$$r(t) = \frac{\sum_{k \in \mathcal{K}} \sum_{j \in \mathcal{J}_k} R_{kj}}{P_{\mathrm{total}}} - \sum_{i=1}^{3} \rho_i C_i, \quad (5)$$

where $\rho_i, \forall i \in \{1, 2, 3\}$ indicates the weight of each penalty $C_i$ corresponding to constraints (4c), (4e), and (4f), which are defined as $C_1 = \sum_{k \in \mathcal{K}} \sum_{j \in \mathcal{J}_k} (R_{kj}^{\min} - R_{kj})$, $C_2 = \sum_{k \in \mathcal{K}} \|\mathbf{f}_k\|^2 - P_{BS}^{\max}$, and $C_3 = \sum_{q \in \mathcal{Q}} (P_q^{\mathrm{con}} - \sum_{m \in \mathcal{M}} P_{q,m}^{A})$, respectively. Note that the remaining boundary conditions in (4b), (4d), and (4g) can be automatically enforced when generating actions.

1) DQN for Discrete Variables: DQN employs a deep neural network, the Q-network, to approximate the Q-function $Q_q(s_q, a_q \mid \omega_q^{\phi})$, which estimates the expected cumulative reward for each action $a_q$ under a given state $s_q$. Note that we define $\omega_q^{\phi}$ and $\omega_q^{\phi^-}$ as the model weights of the current network and of the target network of DQN, respectively. Based on the estimated Q-values, each agent selects its action using an $\epsilon$-greedy strategy, which balances exploration and exploitation by choosing a random action with probability $\epsilon$ and by selecting the action with the maximum predicted Q-value with probability $1 - \epsilon$, where $\epsilon$ decays as $\epsilon(t) = \epsilon(t-1) - \frac{\epsilon_{\max} - \epsilon_{\min}}{\epsilon_d}$ and $\epsilon_d$ is the decay parameter. Notations $\epsilon_{\max}$ and $\epsilon_{\min}$ indicate the maximum and minimum exploration boundaries, respectively.
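The shared reward in (5) and the $\epsilon$-greedy rule with linear decay can be sketched in a few lines. The helper names below are hypothetical, the default weights and decay settings follow the values reported in the simulation section, and clamping $\epsilon$ at $\epsilon_{\min}$ is an assumption added here, since the text only states the recursion and the two boundaries.

```python
import random


def shared_reward(rates, rate_mins, p_total, bs_power, p_bs_max, ris_deficits,
                  rho=(1e-3, 1e-5, 1e-5)):
    """Reward (5): energy efficiency minus weighted penalties C1, C2, C3.

    ris_deficits holds (consumed - harvested) power for each MF-RIS.
    """
    ee = sum(rates) / p_total
    c1 = sum(r_min - r for r, r_min in zip(rates, rate_mins))  # rate requirement (4c)
    c2 = bs_power - p_bs_max                                   # BS power budget (4e)
    c3 = sum(ris_deficits)                                     # self-sustainability (4f)
    return ee - (rho[0] * c1 + rho[1] * c2 + rho[2] * c3)


def decay_epsilon(eps_prev, eps_max=1.0, eps_min=0.0, eps_d=1e4):
    """One linear decay step; the clamp at eps_min is an assumption of this sketch."""
    return max(eps_min, eps_prev - (eps_max - eps_min) / eps_d)


def epsilon_greedy(q_values, eps, rng=random):
    """Pick a random action index with probability eps, else the arg-max Q-value."""
    if rng.random() < eps:
        return rng.randrange(len(q_values))
    return max(range(len(q_values)), key=q_values.__getitem__)


# Usage: decay epsilon once, then choose among three hypothetical Q-values.
eps = decay_epsilon(1.0)
action = epsilon_greedy([0.1, 0.9, 0.3], eps, rng=random.Random(0))
print(eps, action)
```

Note that the penalties are used exactly as written in (5), so a satisfied constraint yields a negative $C_i$ and slightly raises the reward instead of contributing zero.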
To enhance training stability, DQN incorporates two critical techniques: (i) an experience replay buffer, which stores historical trajectories as tuples $(s_q, a_q, r_q, s'_q)$, allowing the agent to sample mini-batches uniformly and eliminate temporal correlations during learning, where $s'_q$ indicates the new state; and (ii) a target network, with its model denoted as $Q'_q(s_q, a_q \mid \omega_q^{\phi^-})$, which is periodically soft-updated to provide stable Q-learning, i.e., $\omega_q^{\phi^-} \leftarrow \tau_\phi \omega_q^{\phi} + (1 - \tau_\phi) \omega_q^{\phi^-}$, where $\tau_\phi$ is the soft update parameter of DQN. The Q-network is then updated by minimizing the temporal-difference (TD) error, which measures the discrepancy between the predicted Q-value and the target Q-value, given by

$$L(\omega_q^{\phi}) = \mathbb{E}_{(s_q, a_q, r, s'_q)}\left[\left(y - Q_q(s_q, a_q \mid \omega_q^{\phi})\right)^2\right], \quad (6)$$

where $y = r(t) + \gamma_d \max_{a'} Q'_q(s'_q, a' \mid \omega_q^{\phi^-})$ indicates the TD target value and the discount factor $\gamma_d \in [0, 1]$ indicates the importance of future rewards. Notation $Q'_q(\cdot)$ is the Q-value of the target network. The parameter update via gradient descent is then given by $\omega_q^{\phi} \leftarrow \omega_q^{\phi} - l_d \cdot \nabla_{\omega_q^{\phi}} L(\omega_q^{\phi})$, where $l_d \in [0, 1]$ is the learning rate.

2) PPO for Continuous Variables: The remaining continuous parameters are optimized using the PPO algorithm [17]. In particular, PPO adopts an actor-critic framework consisting of a policy network and a value network. In the policy network, the neural network outputs the mean and standard deviation of a multivariate Gaussian distribution, from which actions are sampled according to the current state $s_q(t)$ and policy $\pi_{\delta_q}(a_q(t) \mid s_q(t))$. Note that $\delta_q$ indicates the policy network parameters.
To optimize the policy network, we employ a clipped surrogate objective function expressed as

$$L^{\mathrm{clip}}(\delta_q) = \mathbb{E}_t\left[\min\left(O_q(\delta_q) \hat{A}_q(t),\ \mathrm{clip}(O_q(\delta_q), 1 - \Lambda, 1 + \Lambda)\, \hat{A}_q(t)\right)\right], \quad (7)$$

where $\mathbb{E}[\cdot]$ is the expectation over a batch of generated trajectories, and $O_q(\delta_q) = \frac{\pi_{\delta_q}(a_q(t) \mid s_q(t))}{\pi_{\delta_{q,\mathrm{old}}}(a_q(t) \mid s_q(t))}$ is the probability ratio. $\mathrm{clip}(\cdot)$ indicates the clipping function, which confines the change between the new and old policies within the range $[1 - \Lambda, 1 + \Lambda]$ to avoid excessive policy updates. Note that $\delta_{q,\mathrm{old}}$ denotes the old policy parameters. Furthermore, $\hat{A}_q(t)$ is the generalized advantage estimation (GAE) quantifying the difference between the observed outcome of each action in a state and the state value $V_{\mu_q}(s_q(t))$ predicted by the value network, which is

$$\hat{A}_q(t) = \sum_{i=t}^{T-t} (\gamma_p \lambda_p)^{i-t} \left\{ \left[ r(i) + \gamma_p V_{\mu_q}(s_q(i+1)) \right] - V_{\mu_q}(s_q(i)) \right\},$$

where $\gamma_p$ and $\lambda_p$ are the discount factor and the GAE hyperparameter, respectively. Notation $T$ is the length of the trajectory segment. The policy is optimized iteratively by maximizing the clipped surrogate objective using gradient ascent, given by $\delta_q \leftarrow \delta_q + l^p_{\mathrm{ac}} \cdot \nabla_{\delta_q} L^{\mathrm{clip}}(\delta_q)$, where $l^p_{\mathrm{ac}} \in [0, 1]$ is the learning rate of the actor in PPO. The associated loss function of the value network is defined as $L^V(\mu_q) = \mathbb{E}_t\left[\left(V_{\mu_q}(s_q(t)) - \hat{V}_q^{\mathrm{tar}}(t)\right)^2\right]$, where $\hat{V}_q^{\mathrm{tar}}(t) = V_{\mu_q^-}(s_q(t)) + \hat{A}_q(t)$ and $\mu_q^-$ indicates the parameters from the previous update of the value network. The parameters of the value network are updated by gradient descent, i.e., $\mu_q \leftarrow \mu_q - l^p_{\mathrm{cr}} \cdot \nabla_{\mu_q} L^V(\mu_q)$, where $l^p_{\mathrm{cr}} \in [0, 1]$ is the learning rate of the critic in PPO.

B. Parametrized Sharing in PMHRL

In the context of individual agent design in hybrid DRL frameworks, PPO and DQN typically select actions independently based on their respective input states.
This isolated decision-making process neglects the potential interdependence and interaction between the two strategies. To address this, inspired by [18], we propose a parametrized sharing mechanism. The core idea is to enable a shared representation between the PPO and DQN models by exchanging features. Since PPO, which handles high-dimensional actions, performs a more complex task than DQN, information from DQN is essential to PPO. Specifically, the PPO agent utilizes the discrete action output from the DQN agent as part of its input, i.e., $s_q^{\mathrm{con}}(t) = \mathrm{concat}(\mathbf{g}_{q,kj}(t), \mathrm{vec}(a_q^{\mathrm{dis}}(t-1)))$, where $\mathrm{concat}(\cdot)$ indicates the concatenation of two vectors and $\mathrm{vec}(\cdot)$ vectorizes the discrete action. Note that only MF-RISs require parametrized sharing as they have hybrid actions, thereby improving coordination between the two decision modules.

TABLE I. SIMULATION PARAMETERS

| Parameter | Value |
|---|---|
| Communication parameters | $h_0 = -20$ dB, $k_0 = 2.2$, $\beta_0 = 3$ dB, $\sigma_s^2 = \sigma_u^2 = -70$ dBm |
| MF-RIS power consumption parameters [14], [16] | $\xi = 1.1$, $P_{\mathrm{PIN}} = 0.33$ mW, $P_C = 2.1$ mW, $Z = 24$ mW, $\kappa_1 = 150$, $\kappa_2 = 0.014$, $L_\alpha = 2$, $L_\beta = 10$, $L_\theta = 8$ |
| Other parameters | $P_{BS}^{\max} = 40$ dBm, $\mathbf{w}_{\min} = [5, 10, 10]$ m, $\mathbf{w}_{\max} = [5, 45, 10]$ m |

Fig. 2. (a) Convergence. (b) EE with different strategies. (c) EE with different MF-RIS cases. (d) Comparison between NOMA, SDMA, and OMA.

IV. SIMULATION RESULTS

In simulations, we evaluate PMHRL in multi-MF-RISs-assisted downlink NOMA. We consider the BS positioned at $\mathbf{w}_b = [0, 0, 5]$ m, serving $J_k = 2$ users in $K = 2$ directions. The users are randomly distributed within circular areas of radius 2 m, centered at $[0, 30, 0]$, $[0, 35, 0]$, $[10, 40, 0]$, and $[10, 45, 0]$ m, respectively. The MF-RISs are equipped with $M = 32$ elements, and the BS has $N = 6$ antennas.
The remaining parameters related to the networks are listed in Table I. As for PMHRL, the learning rates of the PPO actor/critic networks are $l^p_{\mathrm{ac}} = 10^{-3}$ and $l^p_{\mathrm{cr}} = 10^{-4}$, respectively, whereas that of DQN is $l_d = 10^{-3}$. The discount factor for both modules is $\gamma_d = \gamma_p = 0.99$. The decay and soft update parameters of DQN are set to $\epsilon_d = 10^4$ and $\tau_\phi = 10^{-2}$, respectively. The experience replay buffer of DQN stores up to $10^6$ samples. The mini-batch mechanism is adopted during training, with a batch size of 64. We set $\epsilon_{\max} = 1$ and $\epsilon_{\min} = 0$. Moreover, we set the clipping ratio to $\Lambda = 0.2$, the GAE parameter to $\lambda_p = 0.97$, and the trajectory length to $10^3$. The weights of the penalties in (5) are $\rho_1 = 10^{-3}$ and $\rho_2 = \rho_3 = 10^{-5}$.

Fig. 2(a) illustrates the convergence behavior of PMHRL compared to other DRL methods. It shows that PMHRL not only achieves faster convergence but also outperforms the other methods with the highest EE. We can observe that MA-HDRL without parametrized sharing converges more slowly than the other algorithms. This is attributed to the decentralized learning without information sharing, making it challenging to capture effective policies during early training. Also, PMHRL achieves up to a 30% improvement in EE compared to hybrid DRL, which is limited by the computation and storage capability required for tackling high-dimensional actions and states. Moreover, the pure PPO architecture [17] exhibits the second-lowest EE due to the lack of hybrid learning mechanisms. Finally, DQN shows the lowest EE performance, primarily due to its large discrete state–action space and quantization errors.

In Fig. 2(b), the results show that EE escalates with the increasing number of antennas thanks to improved beamforming capability, reaching a peak at $N = 6$ before declining as the power consumption begins to outweigh the beamforming gains.
In addition, we compare the fully-optimized case to the cases without optimizing either the EH ratio $\alpha_{q,m}$, amplification $\beta_{q,m}^k$, phase-shift $\theta_{q,m}^k$, or deployment $\mathbf{w}_q$. Note that "w/o" indicates a random decision. It is evident that the full optimization yields the highest EE. In contrast, omitting the optimization of specific parameters leads to noticeable EE degradation. The configuration without optimizing the EH ratio results in the lowest EE. This is because random S or H mode selection leads to inefficient EH, where the collected energy fails to compensate for the high power consumption.

Fig. 2(c) compares the EE of $Q \in \{2, 3\}$ MF-RISs under different cases: (1) Optimized case; (2) No EH ($\alpha_{q,m}^k = \alpha_{q,m} = 1, \forall k \in \mathcal{K}$); (3) No amplification ($\beta_{q,m}^k = \beta_{q,m} = 1, \forall k \in \mathcal{K}$); (4) Only reflection capability. The results show that increasing the number of MF-RIS elements initially enhances the EE. However, further increasing the number of elements induces higher energy consumption, leading to a degraded EE owing to insufficient support from harvested energy. Notably, the case with $Q = 3$ MF-RISs outperforms that with $Q = 2$ MF-RISs across all cases, attributed to the enhanced spatial diversity and EH gain from multiple MF-RISs. Specifically, when signal amplification is disabled, the reduced signal gain leads to a lower EE than that of the fully-optimized case. Moreover, in the case of MF-RIS with reflection only, the signal cannot be delivered to users on the other side of the surface, leading to a significant EE reduction. Additionally, when the EH function is fully disabled, the system cannot support the extra power required by the MF-RISs, resulting in the lowest EE among all cases.

Fig. 2(d) reveals the EE performance under varying numbers of users. We compare NOMA to the existing multiple access mechanisms, i.e., OMA and spatial division multiple access (SDMA) [19], with or without deployment of MF-RISs.
As observed, EE decreases with more users due to insufficient power resources and severe inter-user interference. Moreover, NOMA with MF-RIS deployment achieves the highest EE across all numbers of users compared to SDMA and OMA, benefiting from its superior spectrum utilization and the EH capabilities offered by MF-RISs. In contrast, their counterparts without MF-RIS deployment show significantly lower EE performance.

V. CONCLUSIONS

We have proposed multi-MF-RISs-assisted downlink NOMA networks. An EE optimization problem is formulated, jointly optimizing power allocation, BS beamforming, and MF-RIS configurations of amplification, phase-shift, and EH ratios, as well as positions. To address the high-dimensional and dynamic nature of the complex problem, we have designed a PMHRL scheme. It combines the features of PPO and DQN to handle continuous and discrete action spaces, respectively, and parametrized sharing is designed to facilitate information exchange between them. Additionally, a multi-agent system is leveraged for reducing the overhead. Simulation results validate the superiority of PMHRL, which outperforms the centralized learning of DQN, PPO, and conventional hybrid DRL in terms of EE. Moreover, the proposed architecture of multi-MF-RISs demonstrates the best performance across various scenarios, including cases without EH, conventional reflective-only RISs, non-amplified signals, and baselines without either RIS or MF-RIS under different multiple access.

REFERENCES

[1] L.-H. Shen et al., "Five facets of 6G: Research challenges and opportunities," ACM Comput. Surv., vol. 55, no. 11, pp. 1–39, 2023.
[2] B. Makki et al., "A survey of NOMA: Current status and open research challenges," IEEE Open J. Commun. Soc., vol. 1, pp. 179–189, 2020.
[3] Q. Wu et al., "Towards smart and reconfigurable environment: Intelligent reflecting surface aided wireless network," IEEE Commun. Mag., vol. 58, no. 1, pp. 106–112, 2020.
[4] L.-H. Shen et al., "RIS-aided fluid antenna array-mounted AAV networks," IEEE Wireless Commun. Lett., vol. 14, no. 4, pp. 1049–1053, 2025.
[5] L.-H. Shen et al., "AI-enabled unmanned vehicle-assisted reconfigurable intelligent surfaces: Deployment, prototyping, experiments, and opportunities," IEEE Netw., vol. 38, no. 6, pp. 52–59, 2024.
[6] L.-H. Shen et al., "Federated deep reinforcement learning for energy efficient multi-functional RIS-assisted low-earth orbit networks," in Proc. IEEE Int. Conf. Commun. (ICC), 2025, pp. 1–6.
[7] Y. Liu et al., "STAR: Simultaneous transmission and reflection for 360-degree coverage by intelligent surfaces," IEEE Wireless Commun. Mag., vol. 28, no. 6, pp. 102–109, 2021.
[8] L.-H. Shen et al., "D-STAR: Dual simultaneously transmitting and reflecting reconfigurable intelligent surfaces for joint uplink/downlink transmission," IEEE Trans. Commun., vol. 72, no. 6, pp. 3305–3322, 2024.
[9] B. Lyu et al., "Optimized energy and information relaying in self-sustainable IRS-empowered WPCN," IEEE Trans. Commun., vol. 69, no. 1, pp. 619–633, 2021.
[10] R. Long et al., "Active reconfigurable intelligent surface-aided wireless communications," IEEE Trans. Wireless Commun., vol. 20, no. 8, pp. 4962–4975, 2021.
[11] Z. Zhang et al., "Active RIS vs. passive RIS: Which will prevail in 6G?" IEEE Trans. Commun., vol. 71, no. 3, pp. 1707–1725, 2023.
[12] W. Wang et al., "Beamforming design and jamming optimization for IRS-aided secure NOMA networks," IEEE Trans. Wireless Commun., vol. 21, no. 3, pp. 1557–1569, 2022.
[13] X. Mu et al., "Joint deployment and multiple access design for intelligent reflecting surface assisted networks," IEEE Trans. Wireless Commun., vol. 20, no. 10, pp. 6648–6664, 2021.
[14] W. Li et al., "Multi-functional reconfigurable intelligent surface: System modeling and performance optimization," IEEE Trans. Wireless Commun., vol. 23, no. 4, pp. 3025–3041, 2024.
[15] W. Ni et al., "Resource allocation for multi-cell IRS-aided NOMA networks," IEEE Trans. Wireless Commun., vol. 20, no. 7, pp. 4253–4268, 2021.
[16] Z. Wang et al., "Simultaneously transmitting and reflecting surface (STARS) for terahertz communications," IEEE J. Sel. Top. Signal Process., vol. 17, no. 4, pp. 847–863, 2023.
[17] Y. Gu et al., "Optimizing wireless coverage and capacity with PPO-based adaptive antenna configuration," in Proc. IEEE Int. Conf. Commun. (ICC), 2024, pp. 1–6.
[18] J. Xiong et al., "Parametrized deep Q-networks learning: Reinforcement learning with discrete-continuous hybrid action space," arXiv preprint arXiv:1810.06394, 2018.
[19] R. Raghu et al., "Queueing theoretic models for multiuser MISO content-centric networks with SDMA, NOMA, OMA and rate-splitting downlink," IEEE Trans. Wireless Commun., vol. 23, no. 5, pp. 4753–4766, 2024.
