MemEvolve: Meta-Evolution of Agent Memory Systems

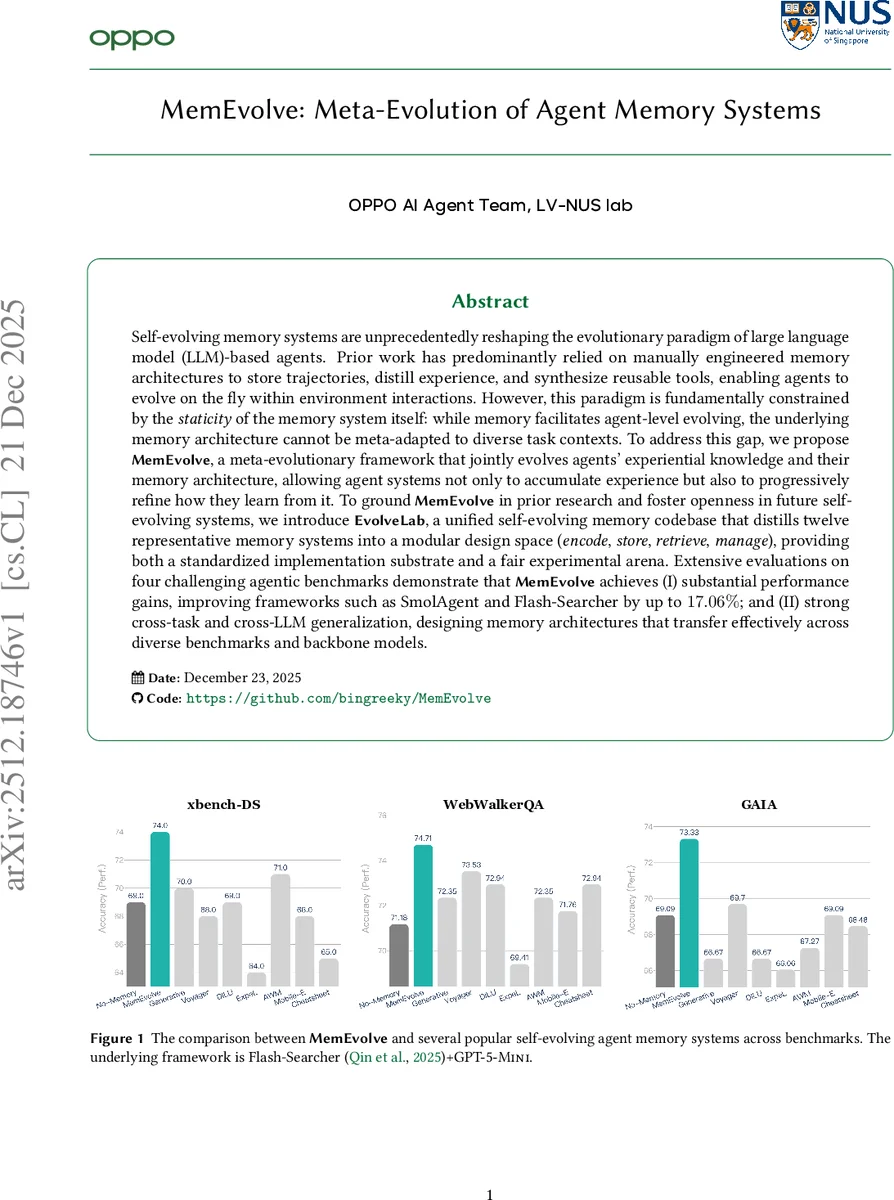

Self-evolving memory systems are reshaping the evolutionary paradigm of large language model (LLM)-based agents. Prior work has predominantly relied on manually engineered memory architectures to store trajectories, distill experience, and synthesize reusable tools, enabling agents to evolve on the fly within environment interactions. However, this paradigm is fundamentally constrained by the staticity of the memory system itself: while memory facilitates agent-level evolution, the underlying memory architecture cannot be meta-adapted to diverse task contexts. To address this gap, we propose MemEvolve, a meta-evolutionary framework that jointly evolves agents’ experiential knowledge and their memory architecture, allowing agent systems not only to accumulate experience but also to progressively refine how they learn from it. To ground MemEvolve in prior research and foster openness in future self-evolving systems, we introduce EvolveLab, a unified self-evolving memory codebase that distills twelve representative memory systems into a modular design space (encode, store, retrieve, manage), providing both a standardized implementation substrate and a fair experimental arena. Extensive evaluations on four challenging agentic benchmarks demonstrate that MemEvolve achieves (I) substantial performance gains, improving frameworks such as SmolAgent and Flash-Searcher by up to 17.06%; and (II) strong cross-task and cross-LLM generalization, designing memory architectures that transfer effectively across diverse benchmarks and backbone models.

💡 Research Summary

The paper introduces MemEvolve, a meta‑evolutionary framework that simultaneously evolves an LLM‑based agent’s experiential knowledge and the architecture of its memory system. Recognizing that existing agent memory designs are static—fixed pipelines of encoding, storing, retrieving, and managing experience—the authors argue that such rigidity limits agents’ ability to adapt to diverse task domains. To address this, they first construct EvolveLab, a unified codebase that re‑implements twelve representative memory systems (e.g., Voyager, ExpeL, SkillWeaver) within a modular design space. Each memory system is decomposed into four interchangeable components: Encode (E), Store (U), Retrieve (R), and Manage (G). This decomposition yields a “genotype” for any memory architecture, enabling systematic manipulation, recombination, and mutation.
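The four-slot "genotype" described above can be illustrated with a small sketch. This is not EvolveLab's actual API: the class name `MemoryGenotype`, the `DESIGN_SPACE` table, and every option string in it are hypothetical placeholders chosen for illustration, and the uniform crossover and single-slot mutation shown here are generic evolutionary operators rather than the paper's confirmed implementation.

```python
import random
from dataclasses import dataclass, replace

# Hypothetical options per slot; the real EvolveLab modules are not reproduced here.
DESIGN_SPACE = {
    "encode": ["raw_trajectory", "summarized_insight", "tool_synthesis"],
    "store": ["json_list", "vector_index", "skill_library"],
    "retrieve": ["recency", "embedding_similarity", "llm_rerank"],
    "manage": ["none", "deduplicate", "forget_low_utility"],
}


@dataclass(frozen=True)
class MemoryGenotype:
    """One memory architecture, expressed as a choice for each of the four slots."""
    encode: str
    store: str
    retrieve: str
    manage: str


def crossover(a: MemoryGenotype, b: MemoryGenotype, rng: random.Random) -> MemoryGenotype:
    """Uniform crossover: each slot is copied from one of the two parents at random."""
    return MemoryGenotype(
        **{slot: getattr(rng.choice((a, b)), slot) for slot in DESIGN_SPACE}
    )


def mutate(g: MemoryGenotype, rng: random.Random) -> MemoryGenotype:
    """Single-slot mutation: swap one slot for a different option from the design space."""
    slot = rng.choice(list(DESIGN_SPACE))
    alternatives = [opt for opt in DESIGN_SPACE[slot] if opt != getattr(g, slot)]
    return replace(g, **{slot: rng.choice(alternatives)})
```

Treating each slot as an independent categorical choice keeps recombination trivial; a richer encoding (for example, per-module hyperparameters or generated code) would need operators that respect dependencies between slots.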

MemEvolve operates as a bilevel optimization process. The inner loop runs a conventional agent with a fixed memory architecture, allowing it to interact with a continuous stream of tasks and accumulate trajectories. The outer loop treats the memory architecture itself as a learnable entity: it receives performance feedback (final rewards, LLM‑as‑Judge scores, resource usage) from the inner loop and applies evolutionary operators (selection, crossover, mutation) or reinforcement‑learning‑based meta‑optimizers to generate new memory configurations. The newly evolved memory modules are then fed back into the inner loop, creating a positive feedback cycle where better memories improve agent learning efficiency, and higher‑quality trajectories provide clearer signals for further memory evolution.
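The bilevel structure described above can be sketched as a nested loop. The code below is a minimal illustration under strong assumptions, not the authors' algorithm: `inner_loop`, `outer_loop`, `toy_run_agent`, and `toy_mutate` are hypothetical names, the reward is a toy placeholder instead of a real agent rollout, the outer loop uses plain truncation selection with mutation only, and the accumulation of experience inside the inner loop is omitted.

```python
import random
from typing import Callable, List, Sequence, Tuple

Genotype = Tuple[str, str, str, str]  # (encode, store, retrieve, manage)


def inner_loop(genotype: Genotype, tasks: Sequence[str],
               run_agent: Callable[[Genotype, str], float]) -> float:
    """Inner level: run the agent with a FIXED memory architecture; return mean reward."""
    rewards = [run_agent(genotype, task) for task in tasks]
    return sum(rewards) / len(rewards)


def outer_loop(population: List[Genotype], tasks: Sequence[str],
               run_agent: Callable[[Genotype, str], float],
               mutate: Callable[[Genotype, random.Random], Genotype],
               generations: int = 5, keep: int = 2, seed: int = 0) -> Genotype:
    """Outer level: evolve the memory architecture using inner-loop feedback."""
    rng = random.Random(seed)
    for _ in range(generations):
        # Score every candidate architecture by running the inner loop.
        ranked = sorted(population,
                        key=lambda g: inner_loop(g, tasks, run_agent),
                        reverse=True)
        survivors = ranked[:keep]  # truncation selection (elitist)
        children = [mutate(rng.choice(survivors), rng)
                    for _ in range(len(population) - keep)]
        population = survivors + children
    return max(population, key=lambda g: inner_loop(g, tasks, run_agent))


# --- Toy stand-ins so the sketch runs end to end (assumptions, not real components) ---
OPTIONS = ("a", "b", "c")


def toy_mutate(g: Genotype, rng: random.Random) -> Genotype:
    """Resample one randomly chosen slot (may occasionally redraw the same value)."""
    i = rng.randrange(len(g))
    return g[:i] + (rng.choice(OPTIONS),) + g[i + 1:]


def toy_run_agent(g: Genotype, task: str) -> float:
    """Placeholder reward: fraction of slots equal to "c"; the task is ignored."""
    return sum(slot == "c" for slot in g) / len(g)
```

Because the survivors are carried over unchanged each generation and the toy reward is deterministic, the best score in the population never decreases. With a real LLM agent the reward is noisy and each inner-loop evaluation is expensive, so caching scores and re-evaluating survivors would both matter.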

Experiments span four challenging benchmarks (GAIA, xBench, DeepResearchBench, and TaskCraft) covering web browsing, code generation, scientific literature synthesis, and multi‑step planning. MemEvolve is evaluated with GPT‑5‑Mini as the base model and also tested for transfer to GPT‑4‑Turbo. Results show substantial gains: up to 17.06% absolute improvement over strong baselines such as SmolAgent and Flash‑Searcher, with an average boost of 9–12% across benchmarks. Importantly, memory architectures evolved on TaskCraft transfer effectively to the other three benchmarks, delivering performance lifts of 2.0% to 9.1% on unseen tasks and models, demonstrating cross‑task and cross‑LLM generalization.

Ablation studies dissect the contribution of each module. Evolving all four components jointly yields the largest gains, while isolated changes to Encode or Store alone provide modest improvements. The Manage component (responsible for consolidation and forgetting) proves especially critical for maintaining long‑term memory quality. The authors also discuss limitations: meta‑evolution incurs significant computational cost, especially with large LLMs and long online training horizons; the current design space is confined to the twelve pre‑implemented systems, leaving broader architectural exploration open; and rapid structural changes can temporarily destabilize learning.

In conclusion, MemEvolve demonstrates that treating memory systems as evolvable entities dramatically enhances agent self‑improvement, and the released EvolveLab codebase offers the community a standardized platform for further research. Future directions include more efficient meta‑learning algorithms, expanding the memory design space to include graph‑neural or continuous representations, tighter integration with cognitive theories of human learning, and scaling to multimodal, multi‑agent environments.
