SmartSight: Mitigating Hallucination in Video-LLMs Without Compromising Video Understanding via Temporal Attention Collapse

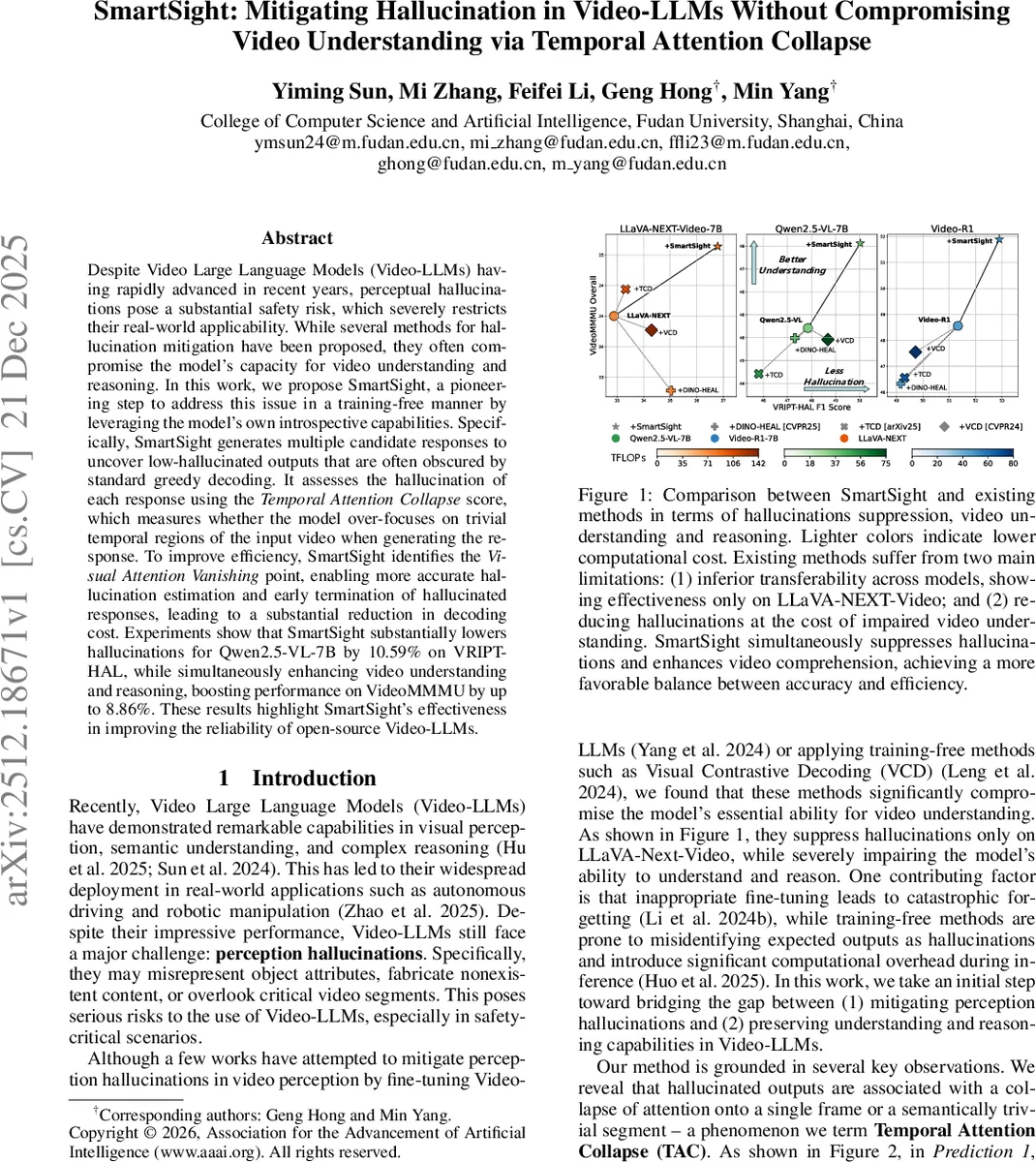

Despite Video Large Language Models having rapidly advanced in recent years, perceptual hallucinations pose a substantial safety risk, which severely restricts their real-world applicability. While several methods for hallucination mitigation have been proposed, they often compromise the model’s capacity for video understanding and reasoning. In this work, we propose SmartSight, a pioneering step to address this issue in a training-free manner by leveraging the model’s own introspective capabilities. Specifically, SmartSight generates multiple candidate responses to uncover low-hallucinated outputs that are often obscured by standard greedy decoding. It assesses the hallucination of each response using the Temporal Attention Collapse score, which measures whether the model over-focuses on trivial temporal regions of the input video when generating the response. To improve efficiency, SmartSight identifies the Visual Attention Vanishing point, enabling more accurate hallucination estimation and early termination of hallucinated responses, leading to a substantial reduction in decoding cost. Experiments show that SmartSight substantially lowers hallucinations for Qwen2.5-VL-7B by 10.59% on VRIPT-HAL, while simultaneously enhancing video understanding and reasoning, boosting performance on VideoMMMU by up to 8.86%. These results highlight SmartSight’s effectiveness in improving the reliability of open-source Video-LLMs.

💡 Research Summary

SmartSight addresses a critical safety issue in video‑large language models (Video‑LLMs): perceptual hallucinations, where the model fabricates or misrepresents visual content. Existing mitigation approaches either require fine‑tuning (which can cause catastrophic forgetting) or rely on training‑free perturbations that often degrade the model’s core ability to understand and reason about video content, and they typically add substantial computational overhead.

The proposed method is entirely training‑free and leverages the model’s own internal attention dynamics. The key observation is that hallucinated outputs tend to exhibit “Temporal Attention Collapse” (TAC): the model’s attention becomes overly concentrated on a single frame (frame‑level collapse) or on a semantically trivial video segment (segment‑level collapse). To quantify this, SmartSight defines two scores:

-

Frame‑level Collapse Score (Sf) – the entropy of normalized attention across frames. Low entropy indicates that most attention is focused on a few frames, signaling a higher risk of hallucination.

-

Segment‑level Collapse Score (Sc) – computed after constructing a Motion‑Aware Cost Matrix that captures both appearance and motion differences between adjacent frames. This matrix identifies temporally trivial segments; excessive attention on such segments raises the Sc value.

The overall TAC score combines Sf and Sc, providing a model‑intrinsic metric of hallucination severity without any external reference.

Another novel component is the Visual Attention Vanishing (VAV) Point. Because rotary positional encodings cause attention to decay with token distance, there is a specific generation step where the average attention to visual tokens drops sharply. SmartSight detects this point on‑the‑fly and evaluates the TAC score at that moment, enabling early prediction of a response’s quality. Responses with high TAC scores are terminated early, saving up to 79.6 % of decoding computation while preserving final answer quality.

To avoid the greedy decoding bias that often hides low‑hallucination outputs, SmartSight samples N candidate responses in parallel (the paper uses N = 10). Each candidate receives a TAC score; the candidate with the lowest score is allowed to continue to full generation, while the others are stopped at the VAV point. Empirically, at least one of the sampled responses consistently exhibits fewer hallucinations than the greedy output.

Experimental validation is performed on two benchmarks:

-

VRIPT‑HAL, a hallucination‑focused evaluation set measuring F1‑based hallucination rates. SmartSight reduces the hallucination F1 score of Qwen2.5‑VL‑7B by 10.59 % relative to the baseline greedy decoder.

-

VideoMMMU, a multi‑modal video understanding and reasoning benchmark. With SmartSight, the same model gains up to 8.86 % absolute improvement in accuracy, reaching performance comparable to a much larger 32‑B parameter version and even approaching proprietary Gemini 1.5 Pro.

The method is tested across ten diverse Video‑LLMs, showing consistent gains and a favorable scaling property: larger models benefit more from the TAC‑based filtering.

Limitations include the current focus on visual tokens only; subtitles, audio, or other modalities are not considered. The TAC metric relies on the specific attention patterns of current transformer‑based Video‑LLMs, so future architectures may require adaptation. The VAV point detection uses empirically set thresholds, suggesting a need for automated calibration.

In summary, SmartSight offers a plug‑and‑play, training‑free solution that simultaneously suppresses hallucinations and enhances video comprehension and reasoning. Its early‑termination strategy dramatically reduces inference cost, making it attractive for real‑time or resource‑constrained deployments such as autonomous driving, robotic manipulation, video‑based jailbreak defense, and video quality assessment.

Comments & Academic Discussion

Loading comments...

Leave a Comment