MeanFlow-TSE: One-Step Generative Target Speaker Extraction with Mean Flow

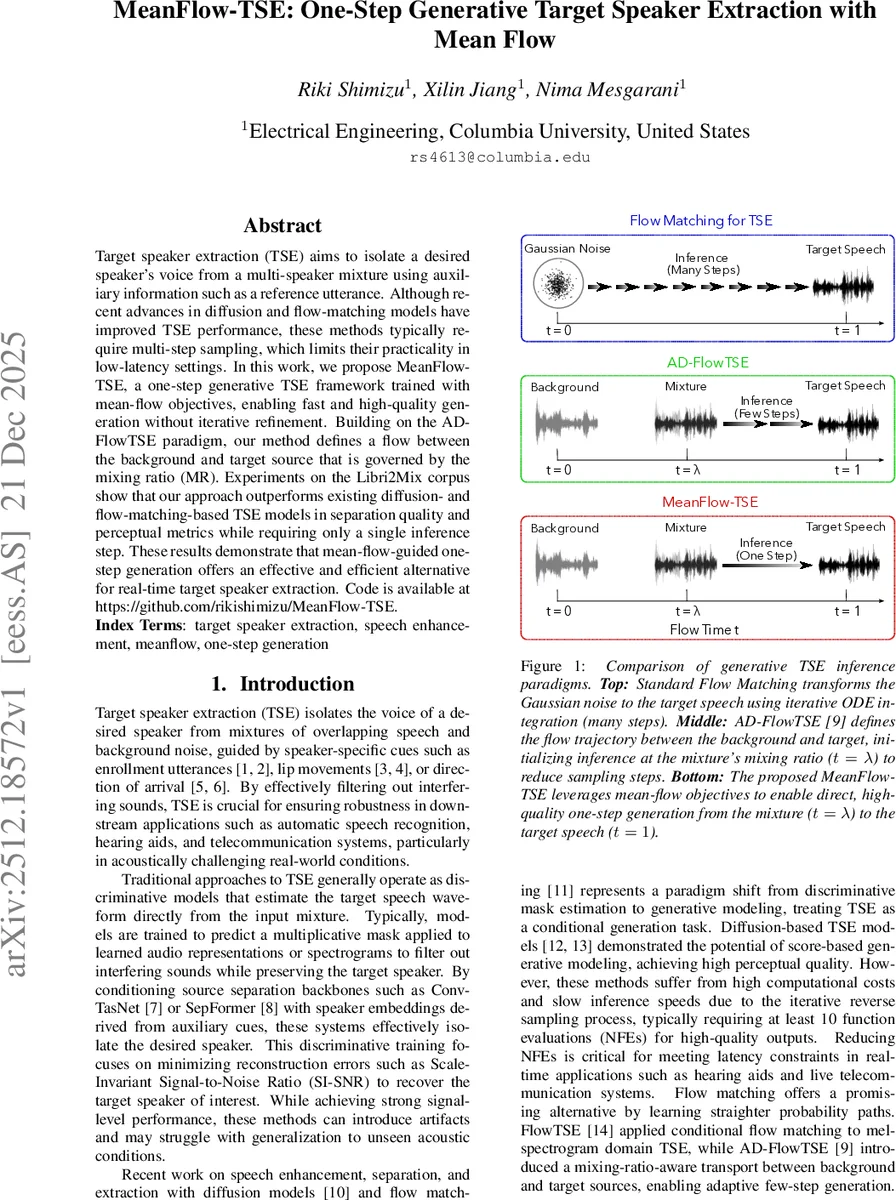

Target speaker extraction (TSE) aims to isolate a desired speaker’s voice from a multi-speaker mixture using auxiliary information such as a reference utterance. Although recent advances in diffusion and flow-matching models have improved TSE performance, these methods typically require multi-step sampling, which limits their practicality in low-latency settings. In this work, we propose MeanFlow-TSE, a one-step generative TSE framework trained with mean-flow objectives, enabling fast and high-quality generation without iterative refinement. Building on the AD-FlowTSE paradigm, our method defines a flow between the background and target source that is governed by the mixing ratio (MR). Experiments on the Libri2Mix corpus show that our approach outperforms existing diffusion- and flow-matching-based TSE models in separation quality and perceptual metrics while requiring only a single inference step. These results demonstrate that mean-flow-guided one-step generation offers an effective and efficient alternative for real-time target speaker extraction. Code is available at https://github.com/rikishimizu/MeanFlow-TSE.

💡 Research Summary

MeanFlow‑TSE introduces a novel one‑step generative framework for target speaker extraction (TSE), addressing the latency bottleneck inherent in recent diffusion‑ and flow‑matching‑based approaches. Traditional TSE methods estimate a mask to separate a target voice from a mixture, while recent generative models treat the problem as conditional generation, requiring multiple function evaluations (NFEs) to solve an ODE that transports a source distribution (often Gaussian noise) to the target speech distribution. Although AD‑FlowTSE reduced the number of steps by initializing the flow at the mixture’s mixing‑ratio position, it still relied on the standard rectified flow‑matching loss, which is optimized for multi‑step sampling.

MeanFlow‑TSE builds upon the AD‑FlowTSE paradigm but replaces the instantaneous velocity objective with a mean‑flow objective. Instead of learning the velocity at each infinitesimal time step, the model learns the average velocity required to move from an intermediate time t (the estimated mixing ratio λ̂) directly to the final time r = 1 (clean target). This yields a closed‑form one‑step update:

Ŝ = Y + (1 − λ̂)·vθ(Y, λ̂, 1, e)

where Y is the mixture spectrogram, e is the enrollment embedding, and vθ is a neural network predicting the average transport vector. By jumping straight from the mixture to the target, the method eliminates the discretization errors and computational overhead of multi‑step ODE solvers.

Training leverages the α‑Flow framework. The target velocity is interpolated between the ground‑truth direction u = S − B and the model’s own prediction:

vα = α · u + (1 − α) · vθ(zτ, τ, r)

where α is a curriculum parameter that decays from 1 to a small value (αmin ≈ 0.005). Early training (α = 1) corresponds to conventional flow‑matching, providing a stable foundation. As α decreases, the loss gradually emphasizes the mean‑flow identity, encouraging the network to learn a global transport map suitable for one‑step inference. An adaptive weight w = α‖Δ‖² + c (Δ = vθ − vα) further stabilizes training by scaling the loss according to the current error magnitude, while a stop‑gradient operator prevents gradient leakage from the weight term.

Since the mixing ratio λ is unknown at test time, a lightweight auxiliary network gϕ predicts λ̂ from the mixture and enrollment signals. The predictor uses an ECAPA‑TDNN front‑end followed by an MLP and is trained with a simple mean‑squared error loss.

Experiments were conducted on the Libri2Mix dataset (both noisy and clean subsets). The backbone is a U‑Net‑style Diffusion Transformer (UDiT) with 16 transformer layers, 16 attention heads, and a hidden dimension of 768. Training employed AdamW, cosine learning‑rate decay, mixed‑precision, and a log‑normal sampling scheme for t and r. The α‑curriculum spanned 0–2000 epochs, with a steep sigmoid transition.

Results show that MeanFlow‑TSE consistently outperforms prior generative baselines—including DiffSep+SV, DDTSE, DiffTSE, FlowTSE, and AD‑FlowTSE—across all objective and perceptual metrics. On the clean test set, it achieves a PESQ of 3.26 and an SI‑SDR of 18.80 dB, improving over AD‑FlowTSE by 0.37 PESQ points and 1.31 dB SI‑SDR. On the noisy set, it reaches 2.21 PESQ and 12.85 dB SI‑SDR, the best reported among one‑step methods. A detailed NFE analysis confirms that performance peaks at NFE = 1; additional Euler steps provide no benefit and can slightly degrade quality, confirming that the learned mean‑flow accurately captures the full transport.

Computationally, MeanFlow‑TSE requires only a single Euler step, resulting in a real‑time factor (RTF) of ≈ 0.018 and a GPU memory footprint of ~1.5 GB (≈ 359 M parameters). This places it well within the constraints of real‑time applications such as hearing aids, live teleconferencing, and voice‑controlled assistants.

In summary, MeanFlow‑TSE demonstrates that mean‑flow objectives, combined with an α‑curriculum and adaptive weighting, enable high‑fidelity, one‑step target speaker extraction. The approach bridges the gap between the high quality of multi‑step generative models and the low latency required for practical deployment, and it opens avenues for applying mean‑flow‑based one‑step generation to other audio restoration tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment