Zephyrus: Scaling Gateways Beyond the Petabit-Era with DPU-Augmented Hierarchical Co-Offloading

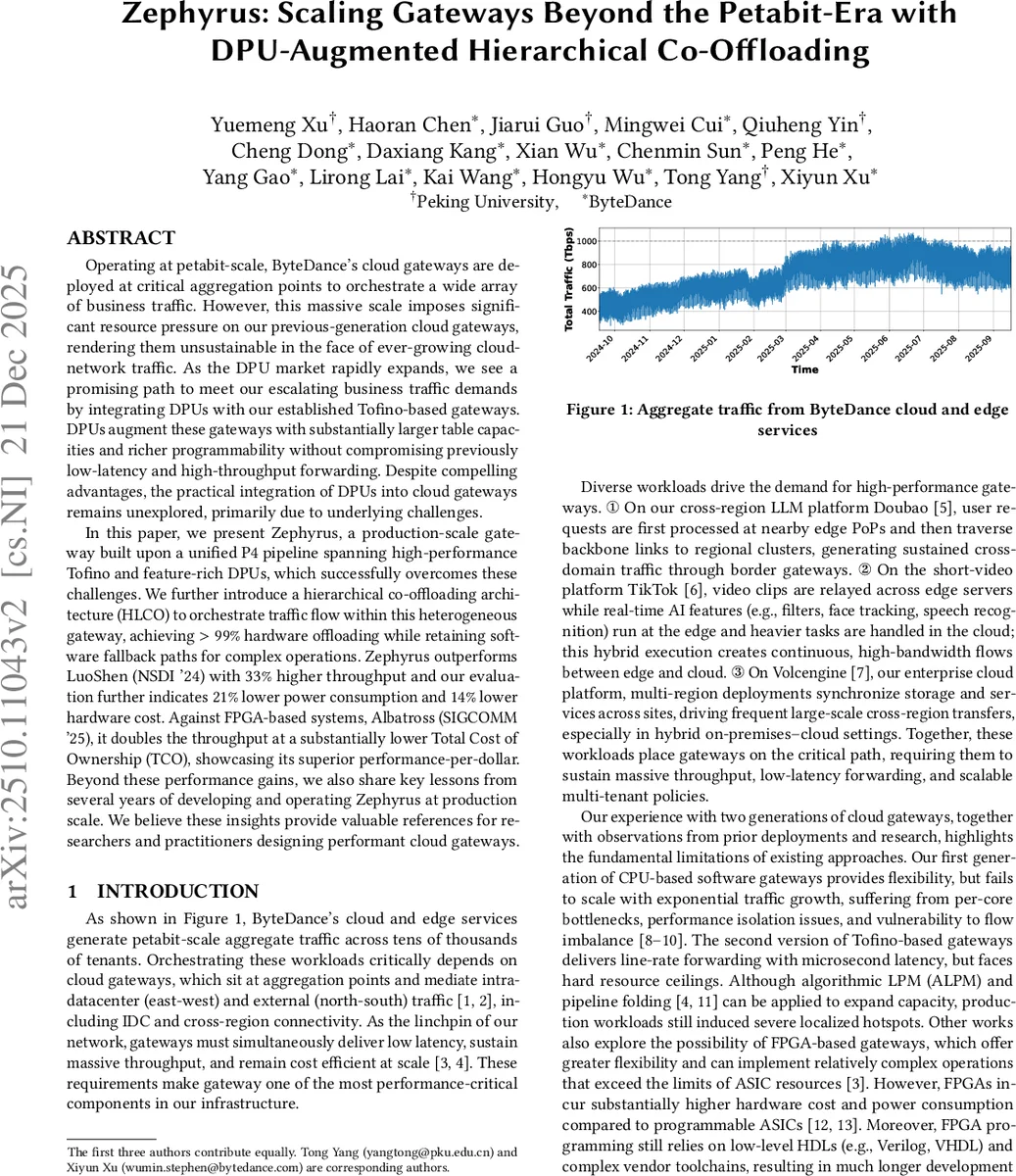

Operating at petabit scale, ByteDance’s cloud gateways are deployed at critical aggregation points to orchestrate a wide array of business traffic. However, this massive scale imposes significant resource pressure on our previous-generation cloud gateways, rendering them unsustainable in the face of ever-growing cloud-network traffic. As the DPU market rapidly expands, we see a promising path to meet our escalating traffic demands by integrating DPUs with our established Tofino-based gateways. DPUs augment these gateways with substantially larger table capacities and richer programmability without compromising their low-latency, high-throughput forwarding. Despite these compelling advantages, the practical integration of DPUs into cloud gateways remains unexplored, primarily because of several underlying challenges. In this paper, we present Zephyrus, a production-scale gateway built upon a unified P4 pipeline spanning high-performance Tofino and feature-rich DPUs, which successfully overcomes these challenges. We further introduce a hierarchical co-offloading architecture (HLCO) to orchestrate traffic flow within this heterogeneous gateway, achieving >99% hardware offloading while retaining software fallback paths for complex operations. Zephyrus outperforms LuoShen (NSDI ’24) with 33% higher throughput, and our evaluation further indicates 21% lower power consumption and 14% lower hardware cost. Against the FPGA-based Albatross (SIGCOMM ’25), it doubles the throughput at a substantially lower Total Cost of Ownership (TCO), showcasing its superior performance-per-dollar. Beyond these performance gains, we also share key lessons from several years of developing and operating Zephyrus at production scale. We believe these insights provide valuable references for researchers and practitioners designing performant cloud gateways.

💡 Research Summary

The paper presents Zephyrus, a production‑grade cloud gateway designed to handle petabit‑scale traffic by tightly integrating programmable Tofino ASIC switches with feature‑rich Data Processing Units (DPUs) under a unified P4 pipeline. The authors first describe the evolution of ByteDance’s gateways: an initial software‑only design that suffered from per‑core bottlenecks and flow imbalance, followed by a second‑generation ASIC‑centric design that achieved line‑rate forwarding (1.6 Tbps per device, ~2 µs latency) but ran into on‑chip SRAM exhaustion when storing massive VM‑to‑Node‑Controller tables and struggled with more complex policies that had to fall back to software. To overcome these constraints, Zephyrus introduces DPUs (Pensando) as a middle tier. DPUs provide abundant DRAM and programmable pipelines, allowing large tables and moderately complex processing to be offloaded from the ASIC while preserving low latency.

A key contribution is the Hierarchical Co‑Offloading (HLCO) architecture, which extends the classic fast/slow‑path model into three tiers: the ASIC fast‑path handles the bulk of high‑throughput, low‑complexity work; the DPU middle‑path handles larger tables and richer policies; and a software slow‑path handles the rare, highly stateful or computationally intensive tasks. By designing a minimal yet expressive metadata format and a version‑based flow‑update mechanism, Zephyrus ensures tight synchronization between ASIC and DPU pipelines, achieving >99 % hardware offload.
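The three-tier lookup order and the version check described above can be illustrated with a small Python sketch. It is not the paper's implementation: the `Tier` and `Gateway` classes, the per-flow integer version, the capacity limits, and the "install on miss" policy are all assumptions made for illustration. The idea shown is only that a packet tries the ASIC table first, then the DPU table, then software, and that a hardware entry whose version lags the authoritative one is treated as a miss.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

FlowKey = Tuple[str, str]  # hypothetical flow key: (source, destination)


@dataclass
class Tier:
    """A hardware table with bounded capacity (stand-in for ASIC SRAM or DPU DRAM)."""
    name: str
    capacity: int
    table: Dict[FlowKey, Tuple[str, int]] = field(default_factory=dict)

    def install(self, key: FlowKey, action: str, version: int) -> bool:
        # Refuse new keys once the table is full; overwriting an existing key is allowed.
        if key not in self.table and len(self.table) >= self.capacity:
            return False
        self.table[key] = (action, version)
        return True

    def lookup(self, key: FlowKey, wanted_version: int) -> Optional[str]:
        entry = self.table.get(key)
        if entry is None:
            return None
        action, version = entry
        # A stale entry (older version) counts as a miss.
        return action if version == wanted_version else None


class Gateway:
    """Assumed three-tier dispatch: ASIC fast path, DPU middle path, software slow path."""

    def __init__(self, asic_capacity: int, dpu_capacity: int) -> None:
        self.asic = Tier("asic", asic_capacity)
        self.dpu = Tier("dpu", dpu_capacity)
        # Authoritative software state: key -> (action, version).
        self.policy: Dict[FlowKey, Tuple[str, int]] = {}

    def set_policy(self, key: FlowKey, action: str) -> None:
        # Each update bumps the flow's version, invalidating older hardware entries.
        _, old_version = self.policy.get(key, ("", 0))
        self.policy[key] = (action, old_version + 1)

    def forward(self, key: FlowKey) -> Tuple[str, str]:
        entry = self.policy.get(key)
        if entry is None:
            return ("software", "drop")
        action, version = entry
        for tier in (self.asic, self.dpu):
            hit = tier.lookup(key, version)
            if hit is not None:
                return (tier.name, hit)
        # Miss in both hardware tiers: serve in software, then offload for next time.
        if not self.asic.install(key, action, version):
            self.dpu.install(key, action, version)
        return ("software", action)


gw = Gateway(asic_capacity=1, dpu_capacity=2)
gw.set_policy(("vm-a", "vm-b"), "fwd:1")
gw.set_policy(("vm-c", "vm-d"), "fwd:2")
first = gw.forward(("vm-a", "vm-b"))     # software path, then installed on the ASIC
second = gw.forward(("vm-a", "vm-b"))    # now served by the ASIC
gw.forward(("vm-c", "vm-d"))             # ASIC is full, so this flow lands on the DPU
spilled = gw.forward(("vm-c", "vm-d"))   # served by the DPU
gw.set_policy(("vm-a", "vm-b"), "fwd:9") # version bump makes the ASIC entry stale
refreshed = gw.forward(("vm-a", "vm-b")) # falls back to software and reinstalls
```

In this toy model the first packet of every flow takes the software path. A production system would more plausibly pre-install entries from the control plane, which is how a hardware offload ratio above 99% becomes reachable.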

The system is implemented with four Pensando DPUs coupled to a folded Tofino pipeline, delivering 1.6 Tbps line‑rate forwarding while expanding table capacity by 2–1000× depending on the table type. Evaluation against LuoShen (NSDI ’24), a state‑of‑the‑art programmable ASIC gateway, shows a 33 % throughput increase, at least 21 % lower power consumption, and a 14 % reduction in hardware cost per 2U chassis. Compared with Albatross (SIGCOMM ’25), an FPGA‑based gateway, Zephyrus doubles throughput and achieves a substantially lower total cost of ownership, highlighting a superior performance‑per‑dollar ratio.
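As a rough reading of the LuoShen comparison, the reported percentages can be combined into efficiency ratios. This is a back-of-the-envelope sketch, not a figure from the paper: it assumes the throughput, power, and hardware-cost numbers all refer to the same per-chassis baseline, and the symbols $T$, $P$, and $C$ (throughput, power, hardware cost) are introduced here only for illustration.

$$
\frac{T_{\text{Zephyrus}}/P_{\text{Zephyrus}}}{T_{\text{LuoShen}}/P_{\text{LuoShen}}} \approx \frac{1.33}{1 - 0.21} \approx 1.68,
\qquad
\frac{T_{\text{Zephyrus}}/C_{\text{Zephyrus}}}{T_{\text{LuoShen}}/C_{\text{LuoShen}}} \approx \frac{1.33}{1 - 0.14} \approx 1.55
$$

Under those assumptions, Zephyrus would deliver roughly 1.7× the throughput per watt and about 1.5× the throughput per unit of hardware cost.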

Beyond performance metrics, the paper shares operational lessons from several years of deployment: (1) incremental, staged roll‑outs are essential for business continuity during large‑scale upgrades; (2) automated tooling for resource mapping between ASIC and DPU simplifies compiler‑driven placement and reduces human error; (3) robust disaster‑recovery mechanisms rely on metadata versioning and coordinated flow updates.

In summary, Zephyrus demonstrates that a heterogeneous gateway combining ASIC speed, DPU flexibility, and software programmability can meet the demanding requirements of petabit‑scale cloud environments—low latency, massive throughput, large table support, and cost efficiency—while providing a practical blueprint for future cloud network infrastructure.
