Atlas is Your Perfect Context: One-Shot Customization for Generalizable Foundational Medical Image Segmentation

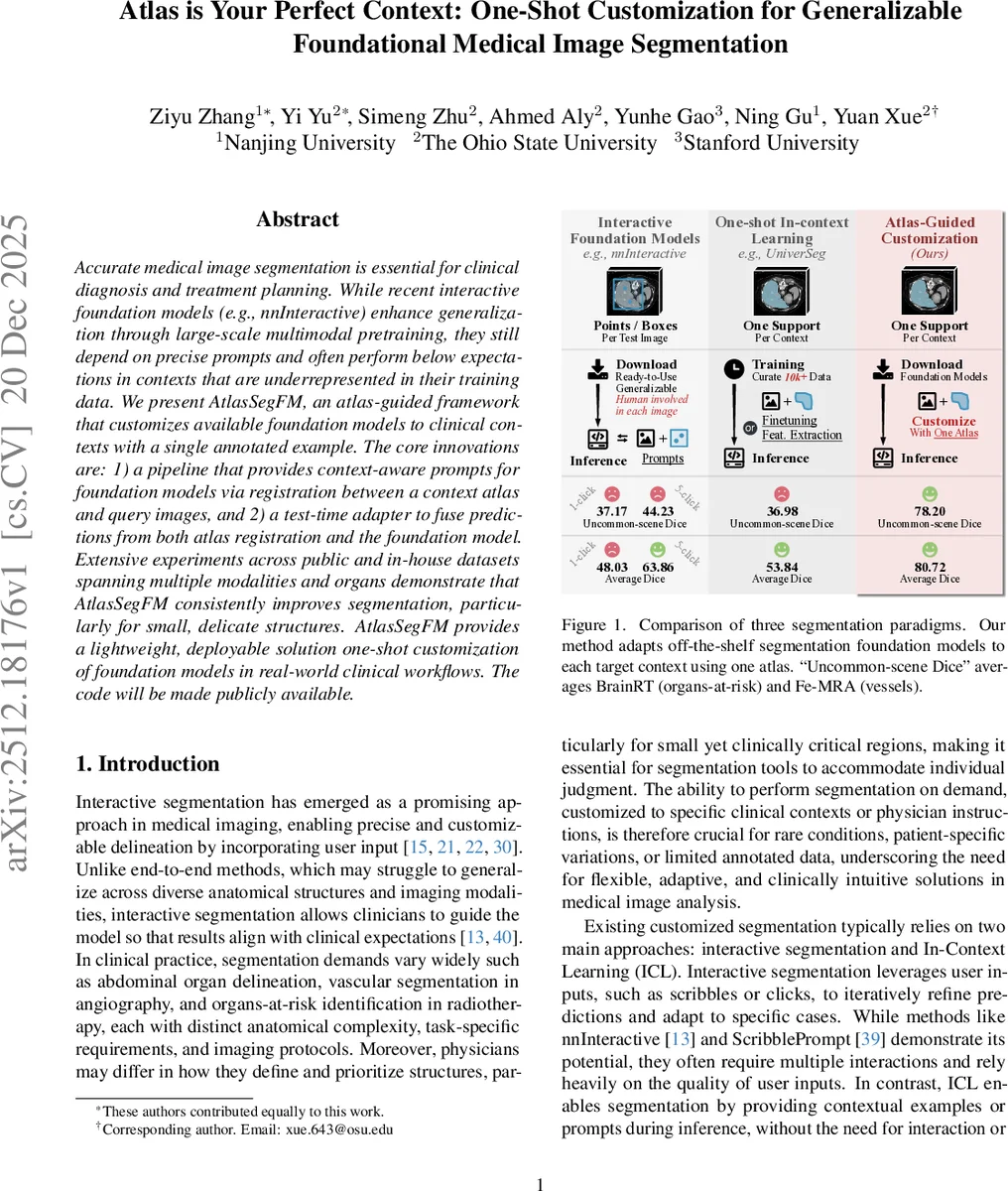

Accurate medical image segmentation is essential for clinical diagnosis and treatment planning. While recent interactive foundation models (e.g., nnInteractive) enhance generalization through large-scale multimodal pretraining, they still depend on precise prompts and often perform below expectations in contexts that are underrepresented in their training data. We present AtlasSegFM, an atlas-guided framework that customizes available foundation models to clinical contexts with a single annotated example. The core innovations are: 1) a pipeline that provides context-aware prompts for foundation models via registration between a context atlas and query images, and 2) a test-time adapter to fuse predictions from both atlas registration and the foundation model. Extensive experiments across public and in-house datasets spanning multiple modalities and organs demonstrate that AtlasSegFM consistently improves segmentation, particularly for small, delicate structures. AtlasSegFM provides a lightweight, deployable solution for one-shot customization of foundation models in real-world clinical workflows. The code will be made publicly available.

💡 Research Summary

This paper introduces AtlasSegFM, a novel framework that enables one‑shot customization of medical image segmentation foundation models using a single annotated atlas. The authors first formalize the practical problem of adapting powerful pretrained segmentation models to new clinical contexts with minimal annotation effort. Their solution consists of three sequential steps.

- Atlas‑based registration: A test‑time registration network derived from VoxelMorph aligns a context atlas (image‑mask pair) to each query image. The registration is performed on‑the‑fly, optimizing a similarity loss (e.g., NCC or mutual information) together with a rigid pre‑alignment and a fine‑grained deformation stage. The resulting transformed atlas mask, $M_{atlas}$, provides a coarse but anatomically consistent segmentation that does not require any training data.

- Prompt generation for foundation models: From $M_{atlas}$ three types of prompts are derived: a click at the centroid of the largest connected component, a bounding box that encloses the mask, and the mask itself. These prompts are fed to the chosen foundation model, which may be interactive (e.g., nnInteractive, Med‑SAM) or non‑interactive (e.g., VesselFM). The model produces a refined prediction $M_{FM}$.

- Adaptive test‑time fusion: An adaptive fusion module learns a voxel‑wise gain map $G$ that estimates the reliability of the foundation model’s output versus the atlas‑derived mask. The final segmentation is computed as $\hat{Y} = G \cdot M_{FM} + (1 - G) \cdot M_{atlas}$. This mechanism automatically leans on the atlas for small, under‑represented structures while exploiting the expressive power of the foundation model for well‑represented regions.
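The prompt-generation and fusion steps above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions, not the authors' implementation: the function names (`largest_component`, `derive_prompts`, `fuse`) are hypothetical, volumes are assumed to be 3D, connectivity is assumed to be 6-neighbour, the gain map `gain` is taken as given rather than learned, and the final 0.5 threshold is an assumption the summary does not state.

```python
from collections import deque

import numpy as np

# 6-neighbourhood offsets for a 3D volume (an assumption; the summary does
# not say which connectivity is used).
OFFSETS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def largest_component(mask):
    """Return a boolean mask holding only the largest connected component."""
    mask = np.asarray(mask, dtype=bool)
    seen = np.zeros_like(mask)
    best = []
    for seed in map(tuple, np.argwhere(mask)):
        if seen[seed]:
            continue
        seen[seed] = True
        queue, comp = deque([seed]), []
        while queue:  # breadth-first flood fill from the seed voxel
            v = queue.popleft()
            comp.append(v)
            for d in OFFSETS:
                n = tuple(a + b for a, b in zip(v, d))
                inside = all(0 <= c < s for c, s in zip(n, mask.shape))
                if inside and mask[n] and not seen[n]:
                    seen[n] = True
                    queue.append(n)
        if len(comp) > len(best):
            best = comp
    out = np.zeros_like(mask)
    if best:
        out[tuple(np.array(best).T)] = True
    return out


def derive_prompts(m_atlas):
    """Derive click, box, and mask prompts from a warped atlas mask."""
    mask = np.asarray(m_atlas) > 0.5
    if not mask.any():
        raise ValueError("warped atlas mask is empty")
    comp_voxels = np.argwhere(largest_component(mask))
    # Centroid of the largest component; for non-convex shapes it can fall
    # outside the structure, which this sketch does not correct.
    click = tuple(int(c) for c in np.round(comp_voxels.mean(axis=0)))
    voxels = np.argwhere(mask)
    box = (
        tuple(int(c) for c in voxels.min(axis=0)),  # inclusive lower corner
        tuple(int(c) for c in voxels.max(axis=0)),  # inclusive upper corner
    )
    return {"click": click, "box": box, "mask": mask}


def fuse(m_fm, m_atlas, gain, threshold=0.5):
    """Voxel-wise blend Y = G * M_FM + (1 - G) * M_atlas, then binarize."""
    g = np.clip(np.asarray(gain, dtype=float), 0.0, 1.0)
    y = g * np.asarray(m_fm, dtype=float) + (1.0 - g) * np.asarray(m_atlas, dtype=float)
    return y >= threshold
```

With `gain` close to 1 the output follows the foundation model, and with `gain` close to 0 it follows the warped atlas, which matches the behaviour the summary describes for well-represented versus small structures.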

The authors evaluate AtlasSegFM on seven public and in‑house datasets covering brain, abdominal, and vascular imaging (CT, MRI, MRA). They report consistent improvements over strong baselines, especially in “uncommon‑scene Dice” (small, clinically critical structures). For example, on the BrainRT organs‑at‑risk task, nnInteractive with five clicks achieves a Dice of 26.9 %, whereas AtlasSegFM reaches 78.2 %. Across all datasets, average Dice gains of 3–5 percentage points are observed, with improvements of up to 30 percentage points for tiny structures.
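For readers unfamiliar with the metric, the Dice score quoted above is the standard overlap measure 2|P ∩ T| / (|P| + |T|) between a predicted mask P and a reference mask T. A minimal sketch follows; the function name `dice` is hypothetical, and returning 1.0 when both masks are empty is a common convention assumed here, not something the summary specifies.

```python
import numpy as np


def dice(pred, target):
    """Dice overlap between two binary masks, in the range [0, 1]."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    denom = pred.sum() + target.sum()
    if denom == 0:
        # Both masks empty: treated as perfect agreement (assumed convention).
        return 1.0
    intersection = np.logical_and(pred, target).sum()
    return float(2.0 * intersection / denom)
```

Multiplying the result by 100 gives the percentage form used in the reported numbers.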

Ablation studies demonstrate the importance of each component: removing registration (using only prompts) degrades performance by ~2.8 % Dice; omitting the fusion module reduces accuracy on small structures by 10–15 % Dice; replacing test‑time registration with a pre‑trained network lowers accuracy slightly while reducing computation. The fusion module’s gain map is shown to adaptively weight predictions without any additional supervision.

Key contributions are: (1) defining a realistic one‑shot customization setting for segmentation foundation models; (2) integrating classical atlas‑based priors with modern foundation models via context‑aware prompts; (3) proposing a lightweight, inference‑only adaptive fusion that yields state‑of‑the‑art results without retraining. The method requires only a single annotated atlas per clinical context, making it highly practical for deployment in real‑world workflows.

Limitations include the computational cost of test‑time registration (GPU memory intensive) and potential failure in cases of extreme anatomical deformation where atlas alignment is challenging. Future work may explore more efficient registration networks, deformation‑robust loss functions, and multi‑atlas ensembles to further improve robustness.

The authors will release code publicly, facilitating reproducibility and encouraging adoption of one‑shot customized segmentation in clinical practice.
