Dexterous World Models

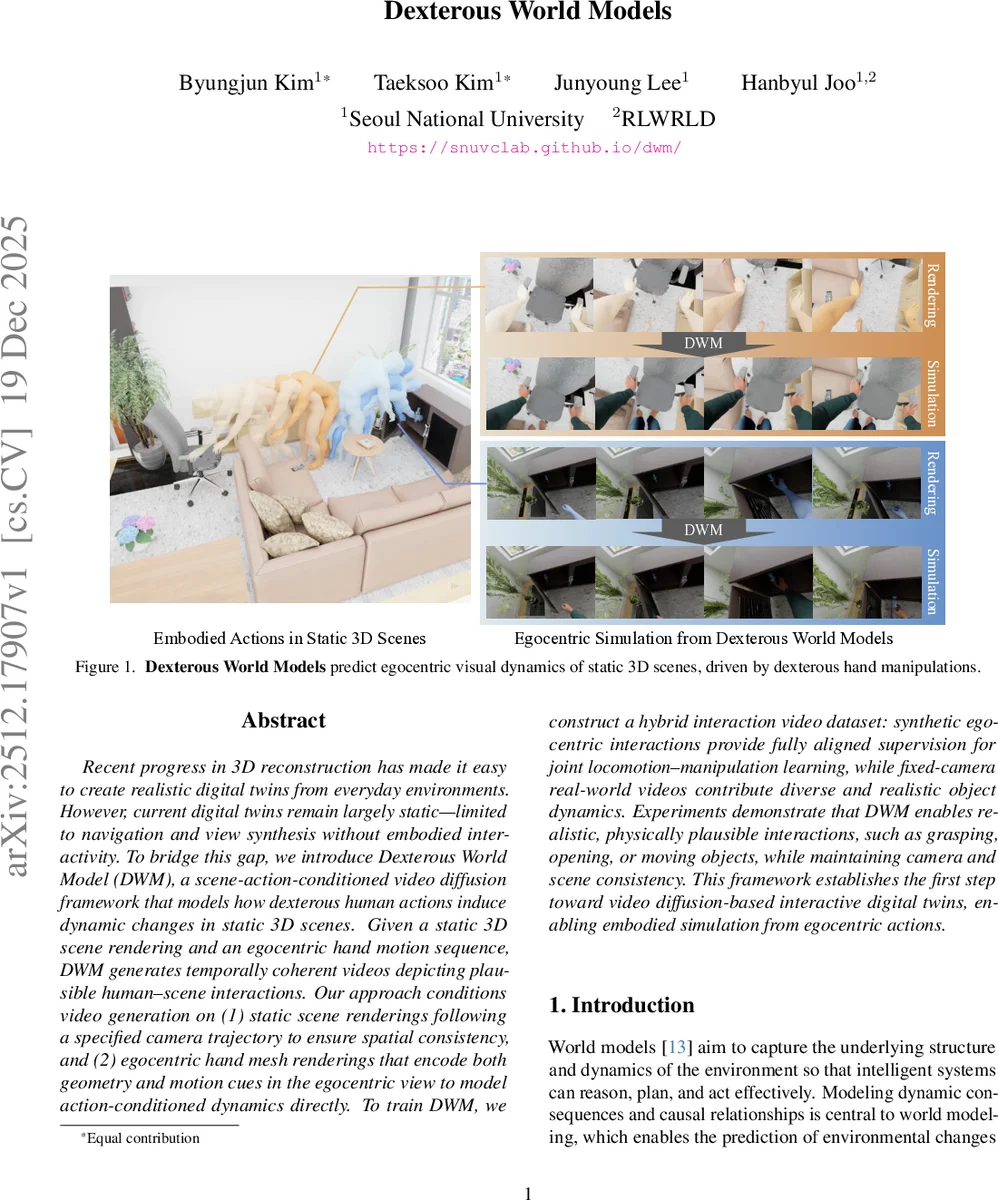

Recent progress in 3D reconstruction has made it easy to create realistic digital twins from everyday environments. However, current digital twins remain largely static and are limited to navigation and view synthesis without embodied interactivity. To bridge this gap, we introduce Dexterous World Model (DWM), a scene-action-conditioned video diffusion framework that models how dexterous human actions induce dynamic changes in static 3D scenes. Given a static 3D scene rendering and an egocentric hand motion sequence, DWM generates temporally coherent videos depicting plausible human-scene interactions. Our approach conditions video generation on (1) static scene renderings following a specified camera trajectory to ensure spatial consistency, and (2) egocentric hand mesh renderings that encode both geometry and motion cues to model action-conditioned dynamics directly. To train DWM, we construct a hybrid interaction video dataset. Synthetic egocentric interactions provide fully aligned supervision for joint locomotion and manipulation learning, while fixed-camera real-world videos contribute diverse and realistic object dynamics. Experiments demonstrate that DWM enables realistic and physically plausible interactions, such as grasping, opening, and moving objects, while maintaining camera and scene consistency. This framework represents a first step toward video diffusion-based interactive digital twins and enables embodied simulation from egocentric actions.

💡 Research Summary

Dexterous World Models (DWM) introduces a novel approach to bring embodied interaction into otherwise static 3D digital twins. The core idea is to condition a video diffusion model on two egocentric renderings: (1) a static‑scene video generated by rendering the known 3D environment along a prescribed camera trajectory, and (2) a hand‑mesh video that encodes the full geometry and motion of a dexterous human hand as seen from the same viewpoint. By providing the static background explicitly, the model is forced to preserve spatial consistency and focus its generative capacity on the residual dynamics caused by hand‑object contact.

To achieve this, the authors repurpose a pretrained video‑inpainting diffusion network as the initialization. Inpainting models act as near‑identity functions when the mask covers the whole frame, reproducing the input while retaining strong priors for spatial structure, temporal smoothness, and appearance continuity. DWM leverages this property: the static‑scene video serves as an identity baseline, and the diffusion process learns to modify only the regions where the hand interacts with objects, effectively learning ΔV, the visual residual.

Training data are constructed from a hybrid dataset. Synthetic egocentric interaction sequences provide perfectly aligned static‑scene, camera‑trajectory, and hand‑mesh pairs, enabling precise supervision of the residual dynamics. However, synthetic data lack the richness of real physics, lighting, and object textures. To compensate, the authors also incorporate fixed‑camera real‑world interaction videos, which bring diverse and realistic object dynamics but do not share the exact camera path. By mixing both sources, DWM learns to respect exact egocentric geometry while generalizing to realistic physical effects.

The formalism is expressed as pθ(V1:F | S0, A1:F) = pθ(V1:F | S0, {C1:F, H1:F}), where S0 is the static world, C1:F the camera trajectory, and H1:F the hand motion. The model first renders Π(S0; C1:F) and Π(H1:F; C1:F), then predicts the residual ΔS1:F conditioned only on H1:F. The observation model then renders the final video Π(S0 + ΔS1:F; C1:F). This separation of static background and action‑induced dynamics restores causal consistency that previous world‑model formulations often violate.

Experiments demonstrate that DWM can synthesize plausible, physically coherent interactions such as grasping, opening doors, and moving objects, while preserving the original camera motion and scene appearance. Quantitative metrics (PSNR, SSIM, LPIPS) show clear improvements over baseline video diffusion models that lack the residual‑learning formulation. Moreover, the authors illustrate a simulation‑based reasoning use‑case: given a goal frame, DWM can generate candidate action videos and compare their visual outcomes to the goal, enabling visual planning without an explicit physics engine.

In summary, DWM advances visual world modeling by (1) introducing a scene‑action‑conditioned diffusion framework that treats hand motion as a concrete geometric action input, (2) leveraging an inpainting prior to learn only the interaction‑induced residual, and (3) constructing a hybrid synthetic‑real dataset that balances precise alignment with realistic dynamics. The approach opens pathways for more interactive digital twins, robot manipulation training, AR/VR content creation, and embodied AI that can reason about the visual consequences of fine‑grained human actions.

Comments & Academic Discussion

Loading comments...

Leave a Comment