VAIR: Visual Analytics for Injury Risk Exploration in Sports

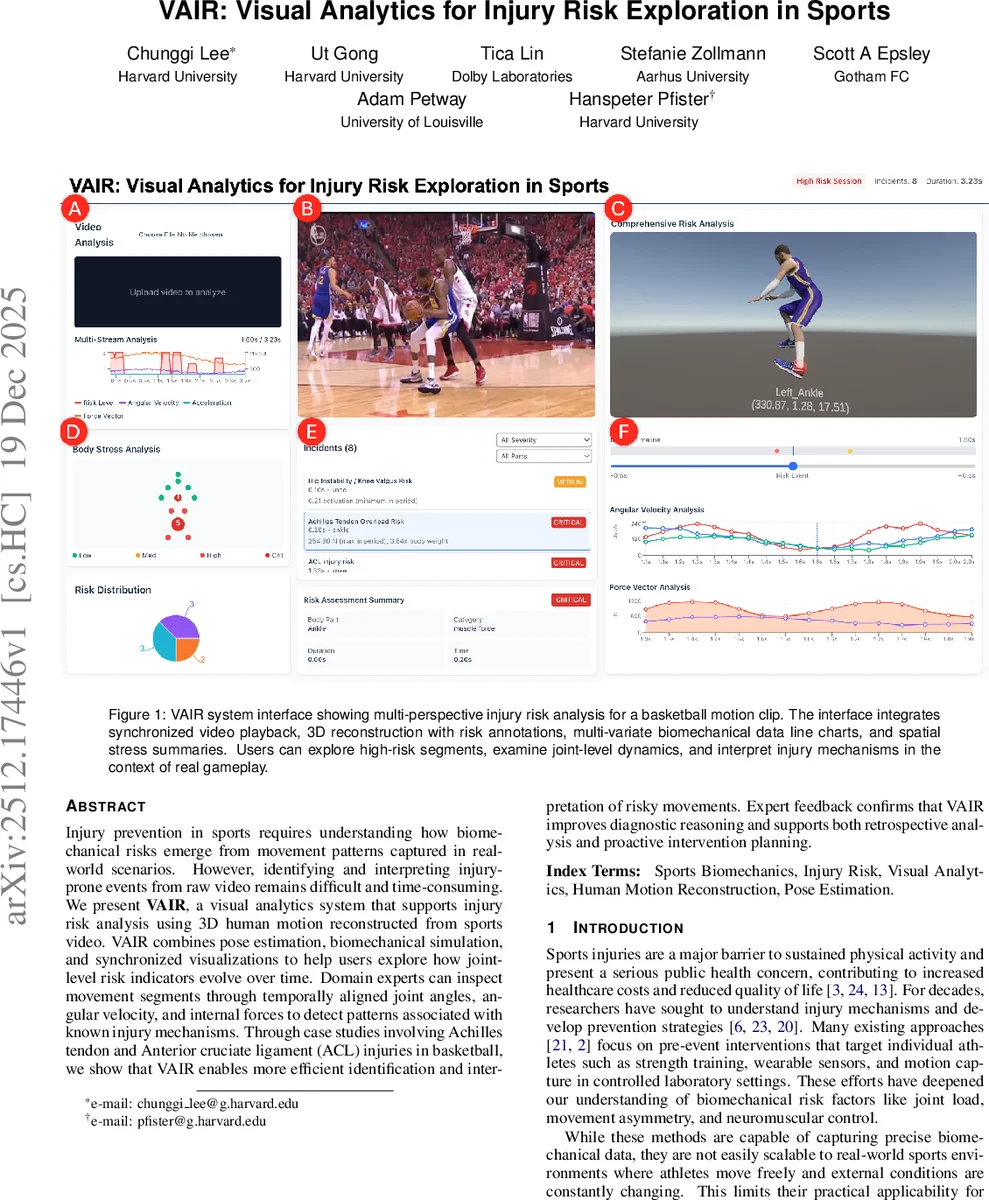

Injury prevention in sports requires understanding how biomechanical risks emerge from movement patterns captured in real-world scenarios. However, identifying and interpreting injury-prone events from raw video remains difficult and time-consuming. We present VAIR, a visual analytics system that supports injury risk analysis using 3D human motion reconstructed from sports video. VAIR combines pose estimation, biomechanical simulation, and synchronized visualizations to help users explore how joint-level risk indicators evolve over time. Domain experts can inspect movement segments through temporally aligned joint angles, angular velocity, and internal forces to detect patterns associated with known injury mechanisms. Through case studies involving Achilles tendon and anterior cruciate ligament (ACL) injuries in basketball, we show that VAIR enables more efficient identification and interpretation of risky movements. Expert feedback confirms that VAIR improves diagnostic reasoning and supports both retrospective analysis and proactive intervention planning.

💡 Research Summary

The paper introduces VAIR (Visual Analytics for Injury Risk), a system that bridges the gap between raw sports video and biomechanical insight, enabling clinicians, coaches, and researchers to assess injury risk directly from in-game footage. The authors begin by highlighting the limitations of traditional laboratory-based motion capture and wearable-sensor approaches, which are precise but impractical in real-world competition environments. To overcome these constraints, VAIR leverages recent advances in monocular 3D human pose estimation, specifically the Co-Motion pipeline built on the SMPL parametric body model, to reconstruct temporally consistent 3D meshes from ordinary broadcast video.

Each 3- to 10-second clip takes roughly 30 seconds to process. The reconstructed joint trajectories are then fed into OpenSim, a physics-based musculoskeletal simulator, which computes internal biomechanical quantities such as joint angles, angular velocities, accelerations, torques, muscle activation asymmetries, and ground-reaction forces. These signals are compared against literature-derived injury thresholds (e.g., knee flexion of 30°-90° combined with internal rotation for ACL risk, ankle dorsiflexion above 40° for Achilles strain) to generate frame-wise risk scores.
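The threshold comparison described above can be sketched in a few lines of Python. This is an illustrative sketch only, not VAIR's implementation: the `FrameAngles` fields, the function names, and the boolean flagging scheme are assumptions, and the internal-rotation cutoff is a placeholder because the summary does not state one. Only the 30°-90° knee flexion range and the 40° dorsiflexion limit come from the text.

```python
from dataclasses import dataclass


@dataclass
class FrameAngles:
    """Joint angles in degrees for one video frame (field names are illustrative)."""
    knee_flexion: float
    knee_internal_rotation: float
    ankle_dorsiflexion: float


# Values quoted in the summary.
ACL_FLEXION_RANGE = (30.0, 90.0)
ACHILLES_DORSIFLEXION_LIMIT = 40.0
# ASSUMPTION: the summary gives no internal-rotation cutoff; 10 degrees is a placeholder.
ACL_ROTATION_CUTOFF = 10.0


def flag_frame(frame):
    """Return per-injury boolean risk flags for a single frame."""
    low, high = ACL_FLEXION_RANGE
    acl = (low <= frame.knee_flexion <= high
           and frame.knee_internal_rotation > ACL_ROTATION_CUTOFF)
    achilles = frame.ankle_dorsiflexion > ACHILLES_DORSIFLEXION_LIMIT
    return {"acl": acl, "achilles": achilles}


def risk_segments(flags):
    """Group consecutive flagged frames into inclusive (start, end) index pairs."""
    segments = []
    start = None
    for index, flagged in enumerate(flags):
        if flagged and start is None:
            start = index
        elif not flagged and start is not None:
            segments.append((start, index - 1))
            start = None
    if start is not None:
        segments.append((start, len(flags) - 1))
    return segments


if __name__ == "__main__":
    # Made-up angles for demonstration, not data from the paper.
    clip = [
        FrameAngles(20.0, 2.0, 10.0),
        FrameAngles(45.0, 15.0, 25.0),
        FrameAngles(60.0, 18.0, 42.0),
        FrameAngles(95.0, 20.0, 45.0),
        FrameAngles(50.0, 12.0, 30.0),
    ]
    acl_flags = [flag_frame(f)["acl"] for f in clip]
    print(risk_segments(acl_flags))
```

Grouping flagged frames into segments mirrors the risk-segment markers the interface is said to show on the video timeline. A real system would more likely produce graded scores than booleans.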

The system's design was driven by two domain experts, a veteran sports physical therapist and a strength-and-conditioning specialist, who articulated three core requirements: (R1) accurate 3D motion reconstruction for multi-angle analysis; (R2) multivariate biomechanical visualization that aligns time-series plots, schematic body maps, and summarized risk events; and (R3) automated estimation and concise presentation of risk indicators. VAIR's user interface integrates four synchronized panels: (1) video playback with 3D mesh overlay and risk-segment markers; (2) linked line charts showing joint-level angles, velocities, and forces; (3) a color-coded anatomical map that highlights high-stress joints; and (4) a tabular summary of detected risk events with severity rankings. Users can isolate segments, drill down into specific joints, and compare across athletes or sessions.

Two case studies were conducted using real basketball footage that captured an Achilles tendon rupture and an ACL tear. Expert participants reported that VAIR reduced the time needed to locate high-risk moments from several minutes of manual frame-by-frame annotation to under one minute. The synchronized visualizations allowed them to see, for example, a sudden spike in knee internal rotation coupled with elevated joint contact forces, directly linking the observed motion to known ACL injury mechanisms. Quantitatively, the system's risk scores correlated with the actual injury sites (Pearson r ≈ 0.78), suggesting that the automated biomechanical analysis aligns well with clinical judgment.
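For readers unfamiliar with the statistic, Pearson's r is the covariance of two paired samples divided by the product of their standard deviations. The sketch below computes it from first principles. The summary does not say which quantities were paired to obtain r ≈ 0.78, so the function and its inputs are generic and do not reproduce the authors' analysis.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sums of centered products and squares; the shared 1/n factors cancel in the ratio.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / math.sqrt(var_x * var_y)
```

One hypothetical pairing would be per-joint risk scores against a 0/1 indicator of whether that joint was the injury site. A value near 1 would then mean the highest scores fall on the injured joints.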

The authors acknowledge several limitations: reconstruction accuracy depends on video quality and camera viewpoint; OpenSim’s generic musculoskeletal model may not capture individual anatomical variations; and the risk thresholds are derived from population‑level studies rather than personalized baselines. Future work will explore multi‑camera fusion, deep‑learning‑based force estimation, subject‑specific model calibration, and real‑time feedback to support on‑court coaching.

In summary, VAIR provides a scalable, marker‑less pipeline that transforms ordinary sports video into rich biomechanical data, couples it with intuitive visual analytics, and thereby enhances the ability of practitioners to detect, interpret, and ultimately mitigate injury‑prone movements in real‑world athletic settings.
