A Service Robot's Guide to Interacting with Busy Customers

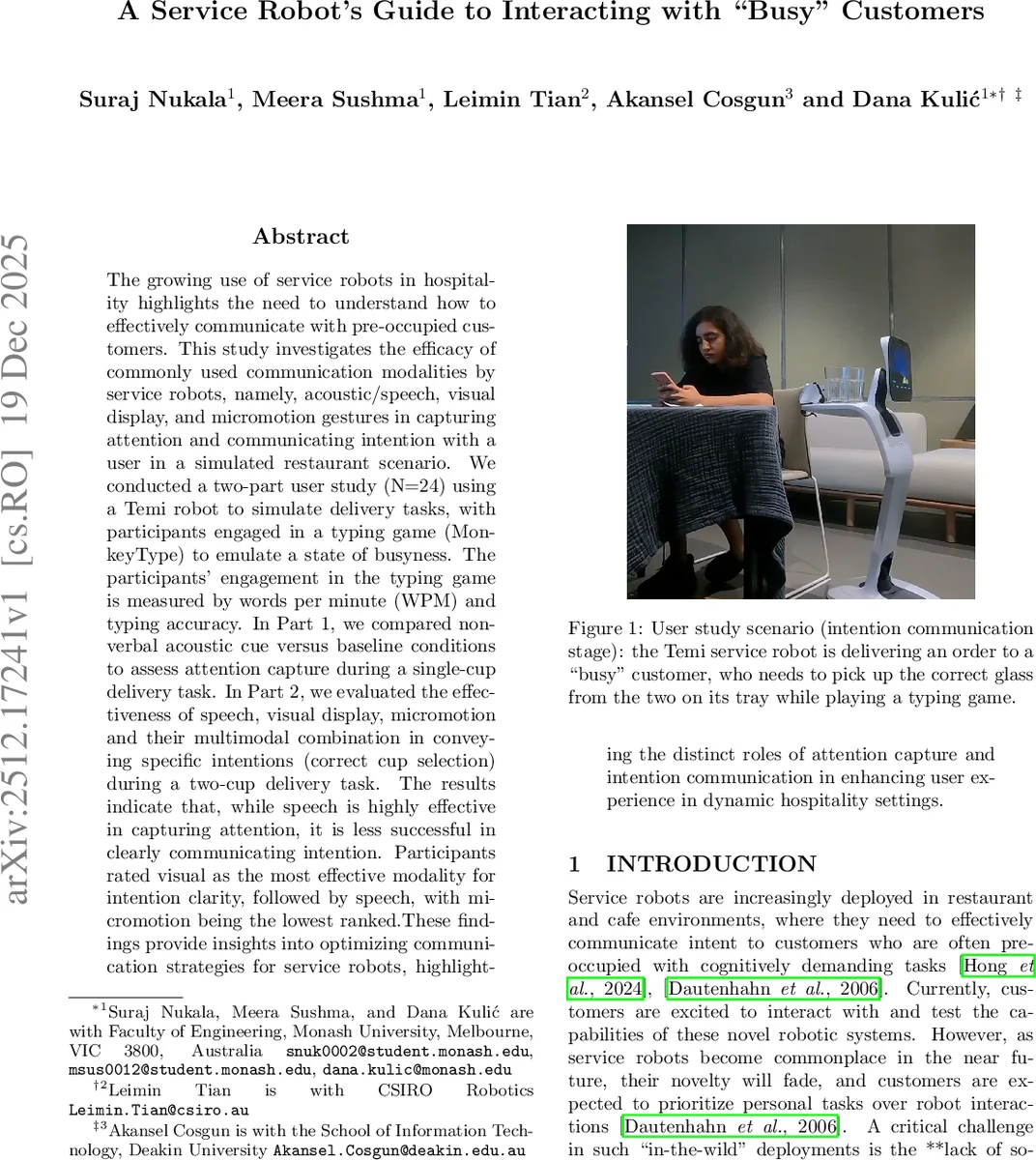

The growing use of service robots in hospitality highlights the need to understand how to effectively communicate with pre-occupied customers. This study investigates the efficacy of commonly used communication modalities by service robots, namely, acoustic/speech, visual display, and micromotion gestures in capturing attention and communicating intention with a user in a simulated restaurant scenario. We conducted a two-part user study (N=24) using a Temi robot to simulate delivery tasks, with participants engaged in a typing game (MonkeyType) to emulate a state of busyness. The participants’ engagement in the typing game is measured by words per minute (WPM) and typing accuracy. In Part 1, we compared non-verbal acoustic cue versus baseline conditions to assess attention capture during a single-cup delivery task. In Part 2, we evaluated the effectiveness of speech, visual display, micromotion and their multimodal combination in conveying specific intentions (correct cup selection) during a two-cup delivery task. The results indicate that, while speech is highly effective in capturing attention, it is less successful in clearly communicating intention. Participants rated visual as the most effective modality for intention clarity, followed by speech, with micromotion being the lowest ranked.These findings provide insights into optimizing communication strategies for service robots, highlighting the distinct roles of attention capture and intention communication in enhancing user experience in dynamic hospitality settings.

💡 Research Summary

The paper investigates how service robots can effectively communicate with customers who are occupied with a secondary task, a situation common in hospitality settings where robots deliver orders while patrons are engaged in other activities. Using a Temi robot, the authors conducted a two‑part user study with 24 participants who played the typing game MonkeyType on their phones to simulate a “busy” state. The participants’ engagement was quantified by words‑per‑minute (WPM) and typing accuracy, providing an objective measure of attentional load throughout the experiments.

Part 1 – Attention Capture compared a baseline condition (no communication) with a non‑verbal acoustic cue (the “EVA” sound from the movie WALL‑E) emitted when the robot arrived with a single cup. The acoustic cue significantly increased the likelihood that participants noticed the robot and interacted with the cup, confirming that a simple auditory stimulus is highly effective for drawing attention away from a primary task.

Part 2 – Intention Communication examined four modalities for indicating which of two cups the participant should pick up: (1) visual display on the robot’s screen showing a text message (“here’s your order”) and highlighting the correct cup, (2) micromotion gestures (a slight bow followed by a rotation that brings the correct cup closer), (3) speech generated by the robot’s text‑to‑speech engine, and (4) a multimodal condition that combined all three cues simultaneously. Each modality was presented in a random order to each participant after the same acoustic attention‑capture cue used in Part 1.

The primary objective metrics were order‑selection accuracy (whether the correct cup was chosen) and selection time, while subjective metrics included perceived robot competence, trust, interaction fluency, and overall service satisfaction measured on 5‑point Likert scales. A manipulation check asked participants after each trial whether they believed they had selected the correct cup and which cues they perceived.

Key Findings

- Acoustic cues excel at attention capture but do not convey detailed intent. Participants responded quickly to the sound, yet when the robot relied solely on speech to specify the correct cup, accuracy was lower than with visual cues.

- Visual displays achieved the highest intention clarity. The on‑screen text and highlighted cup led to the greatest selection accuracy and received the most favorable subjective ratings. This suggests that visual information remains the most salient channel when users are cognitively loaded.

- Micromotion performed the worst in both objective and subjective measures. The subtle bow and rotation did not sufficiently draw participants’ gaze or convey the specific cup choice, indicating that small motion cues alone may be too subtle in a distracted context.

- Multimodal combination did not outperform the best single modality. While it produced moderate trust and satisfaction scores, it fell short of the visual‑only condition, likely due to information overload or competing cues that diluted the clarity of the intended message.

Statistical analysis employed repeated‑measures ANOVA with post‑hoc Tukey tests, confirming significant differences between modalities for both accuracy and user‑perceived effectiveness (p < 0.05). The sample size was justified via G*Power assuming a medium effect size (f = 0.25), α = 0.05, and power = 0.80.

Contributions and Implications

The study advances Human‑Robot Interaction (HRI) literature by explicitly separating the processes of attention capture and intention communication, rather than treating communication as a monolithic construct. By simulating a realistic “busy” scenario, the authors demonstrate that the optimal communication strategy for service robots is hierarchical: first employ a brief, salient auditory cue to break the user’s focus, then deliver the specific intent through a clear visual display. Speech, while useful for gaining attention, should be supplemented with visual reinforcement when precise actions are required. Micromotion may serve as a complementary, non‑intrusive cue but should not be relied upon as the primary channel in high‑cognitive‑load contexts.

Practically, the findings provide design guidelines for hospitality robots: (1) use a short, distinctive sound to announce arrival, (2) follow with a concise visual indicator that directly points to the target object, and (3) reserve speech for supplementary information or confirmation. Overloading the user with all modalities simultaneously can be counter‑productive, leading to reduced clarity and satisfaction.

Overall, the paper offers a nuanced understanding of multimodal communication effectiveness under distraction, informing both the theoretical modeling of robot‑human interaction and the concrete design of service robots that must operate seamlessly alongside human tasks.

Comments & Academic Discussion

Loading comments...

Leave a Comment