MiVLA: Towards Generalizable Vision-Language-Action Model with Human-Robot Mutual Imitation Pre-training

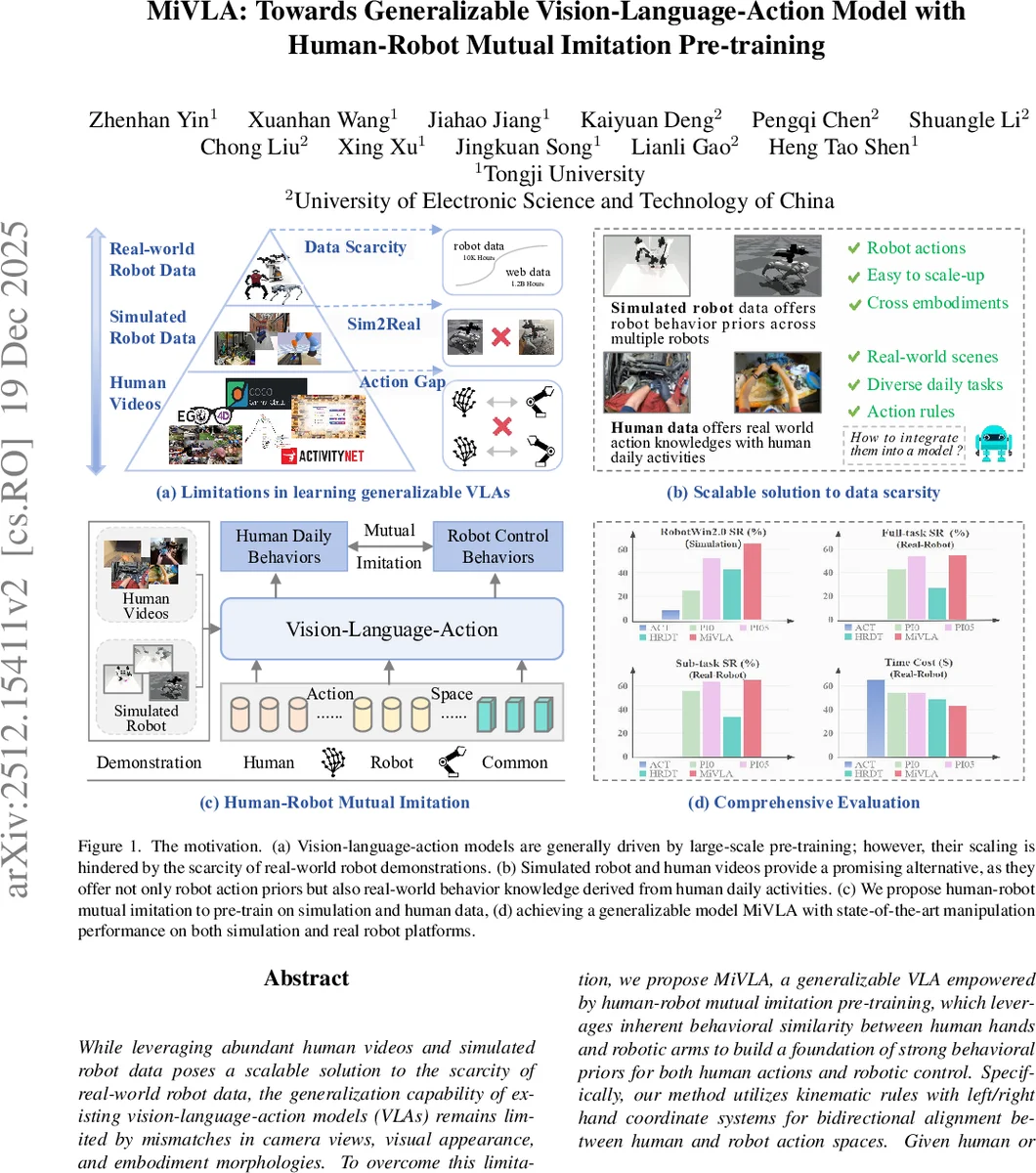

While leveraging abundant human videos and simulated robot data poses a scalable solution to the scarcity of real-world robot data, the generalization capability of existing vision-language-action models (VLAs) remains limited by mismatches in camera views, visual appearance, and embodiment morphologies. To overcome this limitation, we propose MiVLA, a generalizable VLA empowered by human-robot mutual imitation pre-training, which leverages inherent behavioral similarity between human hands and robotic arms to build a foundation of strong behavioral priors for both human actions and robotic control. Specifically, our method utilizes kinematic rules with left/right hand coordinate systems for bidirectional alignment between human and robot action spaces. Given human or simulated robot demonstrations, MiVLA is trained to forecast behavior trajectories for one embodiment, and imitate behaviors for another one unseen in the demonstration. Based on this mutual imitation, it integrates the behavioral fidelity of real-world human data with the manipulative diversity of simulated robot data into a unified model, thereby enhancing the generalization capability for downstream tasks. Extensive experiments conducted on both simulation and real-world platforms with three robots (ARX, PiPer and LocoMan), demonstrate that MiVLA achieves strong improved generalization capability, outperforming state-of-the-art VLAs (e.g., $\boldsymbolπ_{0}$, $\boldsymbolπ_{0.5}$ and H-RDT) by 25% in simulation, and 14% in real-world robot control tasks.

💡 Research Summary

MiVLA introduces a novel pre‑training paradigm called human‑robot mutual imitation to address the data scarcity that hampers the generalization of Vision‑Language‑Action (VLA) models. The core idea is to bridge the human hand and robot arm action spaces using bidirectional kinematic transformations based on thumb and end‑effector reference points, with left/right hand coordinate alignment. Human‑to‑Robot conversion maps relative thumb movements to robot end‑effector poses and solves inverse kinematics for joint angles; Robot‑to‑Human conversion treats the robot end‑effector as a thumb and reconstructs finger positions using anatomical distance estimates. This mechanism allows each demonstration—whether a human video or a simulated robot trajectory—to generate complementary actions for the other embodiment, effectively providing cross‑embodiment labels without additional data collection.

The architecture combines state‑of‑the‑art multimodal tokenizers (DINOv2, SigLIP, T5) with a diffusion‑transformer action decoder that iteratively denoises continuous action chunks conditioned on vision, language, and proprioceptive tokens. Training optimizes four losses simultaneously: robot action reconstruction, robot‑to‑human imitation, human action reconstruction, and human‑to‑robot imitation, summed into a single objective.

Extensive experiments on three real robots (ARX, PiPer, LocoMan) and a suite of simulated tasks demonstrate that MiVLA outperforms recent VLAs such as π₀, π₀.₅, and H‑RDT by 25 % in simulation success rates and 14 % in real‑world manipulation tasks, while also achieving faster inference. Ablation studies confirm the necessity of bidirectional conversion and the synergistic effect of combining human and simulated robot data.

Overall, MiVLA shows that integrating abundant human video data with simulated robot demonstrations via geometric action space alignment can yield a highly generalizable VLA without relying on large-scale real‑world robot datasets. Limitations include reliance on thumb‑centric mappings, the need for robot‑specific inverse‑kinematics tuning, and insufficient analysis of video noise impact. Future work may explore richer anatomical models, automated transformation learning across diverse morphologies, and extension to force‑controlled manipulation.

Comments & Academic Discussion

Loading comments...

Leave a Comment