An Anatomy of Vision-Language-Action Models: From Modules to Milestones and Challenges

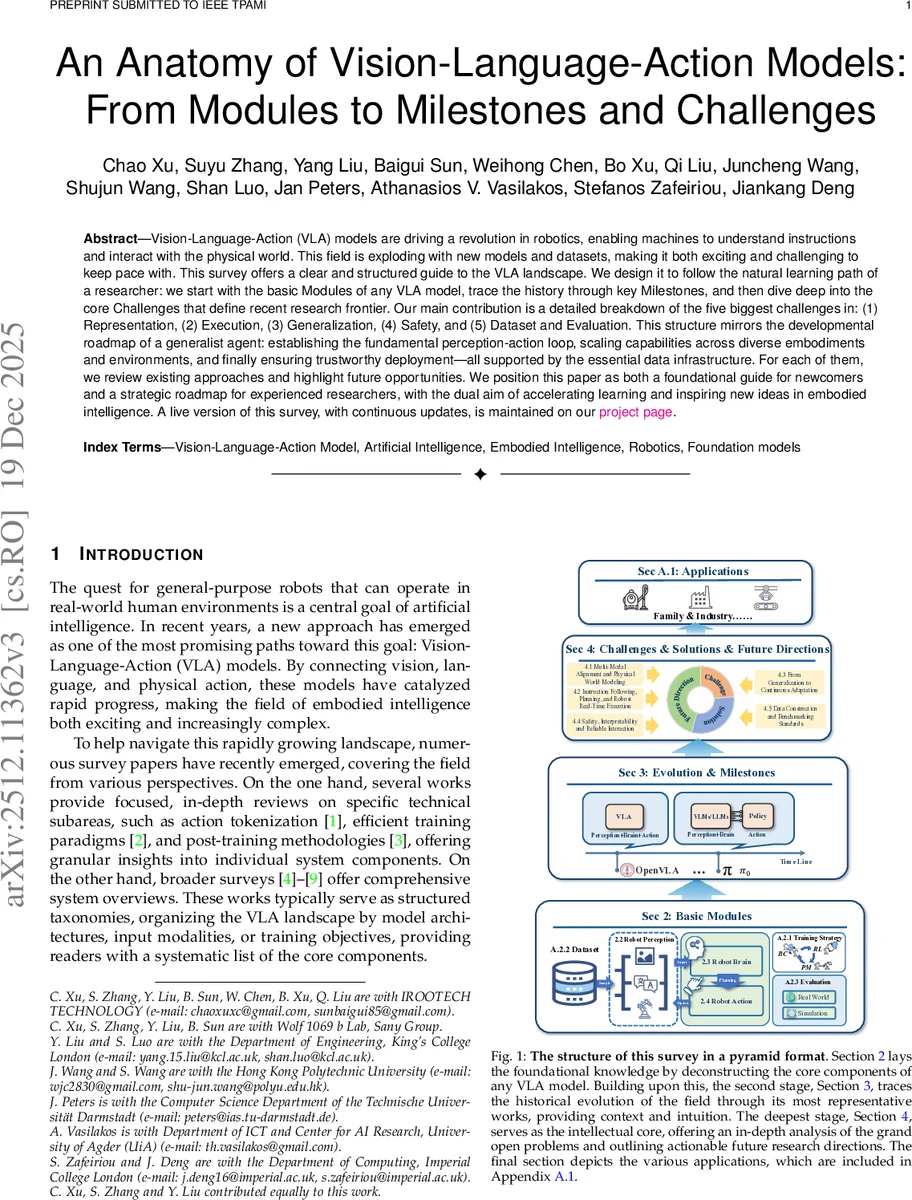

Vision-Language-Action (VLA) models are driving a revolution in robotics, enabling machines to understand instructions and interact with the physical world. This field is exploding with new models and datasets, making it both exciting and challenging to keep pace with. This survey offers a clear and structured guide to the VLA landscape. We design it to follow the natural learning path of a researcher: we start with the basic Modules of any VLA model, trace the history through key Milestones, and then dive deep into the core Challenges that define recent research frontier. Our main contribution is a detailed breakdown of the five biggest challenges in: (1) Representation, (2) Execution, (3) Generalization, (4) Safety, and (5) Dataset and Evaluation. This structure mirrors the developmental roadmap of a generalist agent: establishing the fundamental perception-action loop, scaling capabilities across diverse embodiments and environments, and finally ensuring trustworthy deployment-all supported by the essential data infrastructure. For each of them, we review existing approaches and highlight future opportunities. We position this paper as both a foundational guide for newcomers and a strategic roadmap for experienced researchers, with the dual aim of accelerating learning and inspiring new ideas in embodied intelligence. A live version of this survey, with continuous updates, is maintained on our \href{https://suyuz1.github.io/VLA-Survey-Anatomy/}{project page}.

💡 Research Summary

The paper presents a comprehensive, structured survey of Vision‑Language‑Action (VLA) models, positioning itself as both a learning roadmap for newcomers and a strategic guide for seasoned researchers. Its organization follows a natural learning trajectory: Modules → Milestones → Challenges, mirroring how a researcher would progress from foundational concepts to cutting‑edge problems.

Modules (Section 2). The authors decompose any VLA system into three core components: (1) Perception, (2) Brain, and (3) Action. In perception, the field has moved from classic CNN backbones to Vision Transformers (ViT) and, more recently, language‑aligned encoders such as CLIP and SigLIP. A growing trend is the hybrid use of self‑supervised geometric encoders (e.g., DINOv2) together with contrastive vision‑language models to capture both semantics and fine‑grained geometry. Language encoders have evolved from standard Transformers (BERT, T5) to large language models (Llama‑2, Gemma) and finally to integrated Vision‑Language Models (VLMs) where vision and text are jointly pre‑trained. Proprioceptive signals (joint angles, gripper states) are typically encoded with lightweight MLPs and fused via FiLM or concatenation. The Brain is most often a Transformer that tokenizes multimodal inputs and performs end‑to‑end reasoning; alternatives include Mamba‑style long‑sequence models. The Action module has shifted from discrete token policies to continuous generative models—Diffusion, Flow‑Matching, or hybrid Transformer‑Diffusion heads—providing smoother, higher‑dimensional motion generation.

Milestones (Section 3). The survey chronologically maps key breakthroughs: early CNN‑based policies (e.g., Diffusion Policy), the adoption of large‑scale pre‑trained vision encoders (CLIP, SigLIP), the integration of LLMs for instruction understanding (RDT‑1B, OpenVLA), and the emergence of hybrid architectures that combine a pre‑trained VLM backbone with a diffusion action head (π0, TriVLA). Each milestone is contextualized by data scale (internet‑scale image‑text, simulated‑real mixtures), training strategy (pre‑train + fine‑tune, offline + online RL), and application domain (manipulation, navigation, human‑robot collaboration).

Challenges (Section 4). The core contribution is a deep dive into five inter‑related challenges that define the frontier of VLA research:

-

Multi‑Modal Alignment & Physical World Modeling – Bridging semantic alignment (language‑aligned vision) with geometric precision (self‑supervised depth/normal features) and integrating world‑model predictors (RSSM, latent dynamics) to enable planning in latent physical space.

-

Instruction Following, Planning, and Robust Real‑Time Execution – Parsing compositional language, generating hierarchical plans (high‑level goals → low‑level trajectories), and delivering low‑latency control via efficient token scheduling or hierarchical diffusion.

-

Generalization & Continuous Adaptation – Achieving zero‑shot transfer across robots, environments, and tasks through meta‑learning, online adaptation, and mixed‑reality training pipelines.

-

Safety, Interpretability, and Reliable Interaction – Embedding risk‑aware policies, simulation‑based verification, and model‑level explainability (attention maps, gradient‑based saliency) to build trustworthy agents for collaborative settings.

-

Data Construction and Benchmarking Standards – Curating high‑quality multimodal datasets that include vision, language, proprioception, and action trajectories; defining unified evaluation protocols that span simulation, real‑world, zero‑shot, and continual‑learning settings.

For each challenge the authors review representative approaches, discuss their limitations, and outline concrete research directions (e.g., hybrid encoders for (1), hierarchical diffusion planners for (2), meta‑RL for (3), safety‑layered control for (4), and open‑source benchmark suites for (5)).

Applications & Appendices (Section 5 & Appendix A). The paper lists diverse use‑cases such as household assistants, industrial manipulators, medical support robots, and collaborative manufacturing, illustrating the breadth of VLA impact.

Contributions & Outlook. The survey fills two gaps in existing literature: (i) it places challenges at the heart of the narrative rather than as an afterthought, providing a structured problem space; (ii) it aligns the exposition with a researcher’s learning path, offering a step‑by‑step roadmap. The authors also maintain a live project page that will continuously incorporate new models, datasets, and code, positioning the work as a living resource for the community.

Overall, the paper serves as a definitive reference that maps the evolution of VLA models, dissects their architectural components, chronicles seminal milestones, and, most importantly, articulates the open research agenda needed to advance embodied generalist agents.

Comments & Academic Discussion

Loading comments...

Leave a Comment