Atom: Efficient On-Device Video-Language Pipelines Through Modular Reuse

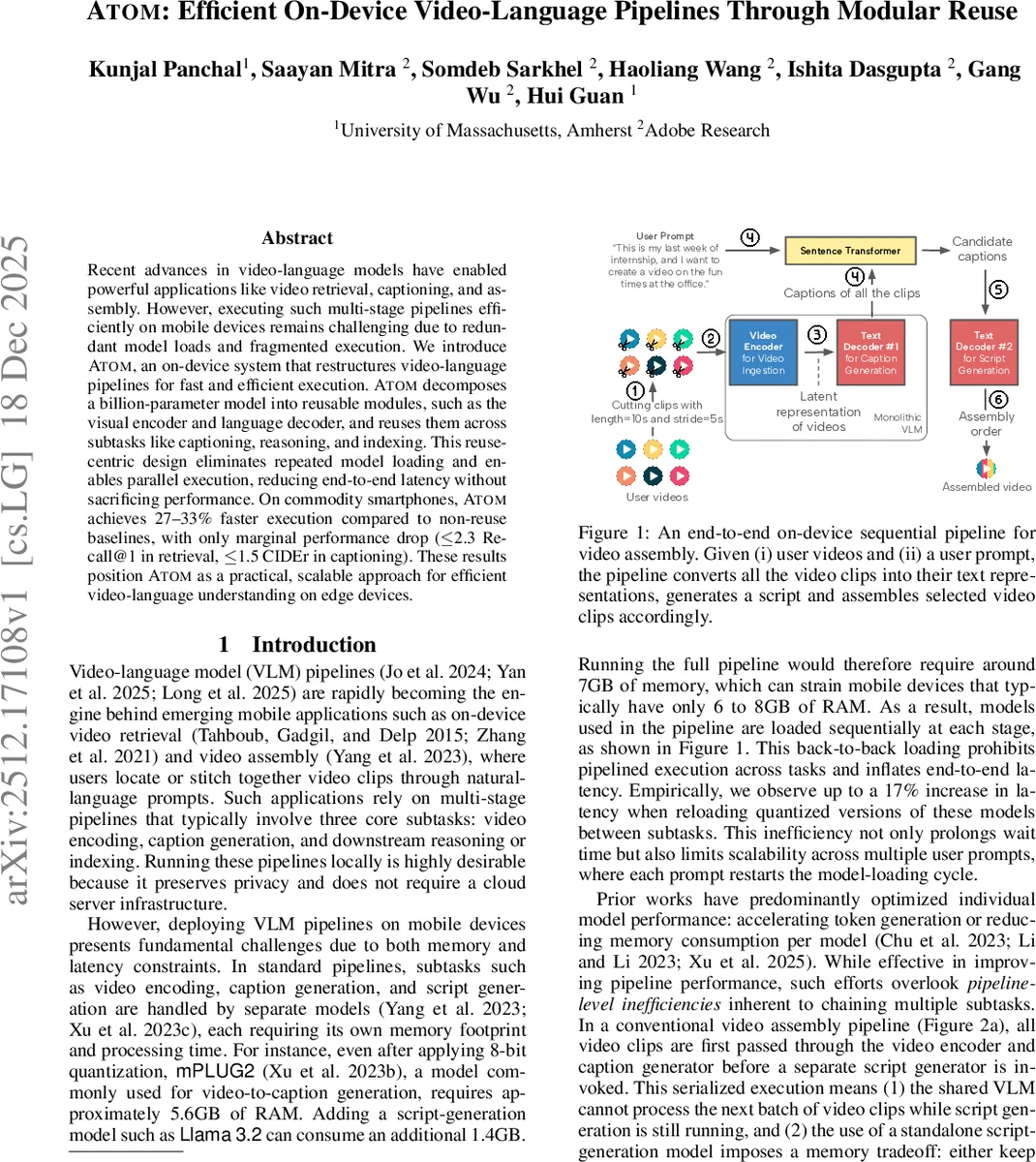

Recent advances in video-language models have enabled powerful applications like video retrieval, captioning, and assembly. However, executing such multi-stage pipelines efficiently on mobile devices remains challenging due to redundant model loads and fragmented execution. We introduce Atom, an on-device system that restructures video-language pipelines for fast and efficient execution. Atom decomposes a billion-parameter model into reusable modules, such as the visual encoder and language decoder, and reuses them across subtasks like captioning, reasoning, and indexing. This reuse-centric design eliminates repeated model loading and enables parallel execution, reducing end-to-end latency without sacrificing performance. On commodity smartphones, Atom achieves 27–33% faster execution compared to non-reuse baselines, with only marginal performance drop ($\leq$ 2.3 Recall@1 in retrieval, $\leq$ 1.5 CIDEr in captioning). These results position Atom as a practical, scalable approach for efficient video-language understanding on edge devices.

💡 Research Summary

The paper introduces Atom, a system designed to run video‑language pipelines efficiently on commodity smartphones. Modern video‑language models (VLMs) enable powerful applications such as video retrieval, captioning, and video assembly, but deploying the full multi‑stage pipelines on‑device is hampered by repeated model loading and fragmented execution. Typical pipelines treat each sub‑task (video encoding, caption generation, script generation, indexing, etc.) as a separate model. Even after aggressive 8‑bit quantization, a single model like mPLUG2 can occupy ~5.6 GB of RAM; adding a second language model pushes the total well beyond the 6‑8 GB memory budget of most phones. Consequently, the system must load and unload models sequentially, incurring up to a 17 % latency increase per prompt.

Key Idea – Modular Reuse

Atom restructures a large VLM into two persistent, reusable modules: a visual encoder and a general‑purpose text decoder. The encoder (a CLIP‑ViT/L‑14 backbone) transforms video clips into latent embeddings, while the decoder (a 1 B‑parameter Llama 3.2 autoregressive model) generates all textual outputs—captions, scripts, retrieval indexes—without needing separate fine‑tuned decoders. By keeping these modules resident in memory throughout the application’s lifetime, Atom eliminates the repeated weight‑loading overhead that dominates traditional pipelines.

System Design

- Modularization – The original mPLUG2 architecture (BERT encoder, fusion module, CLIP visual encoder, two 0.3 B‑parameter decoders) is trimmed to a CLIP visual encoder and a single Llama‑based decoder, yielding a 1.4 B‑parameter model called mPLUG2+. The text encoder and fusion layers are unnecessary for the targeted tasks and are removed to save memory.

- Persistent Reuse – Both modules are loaded once at startup and shared across all downstream tasks. The same decoder that produces captions is later invoked to compose a script for video assembly or to generate text embeddings for retrieval. No additional fine‑tuning is required for the script‑generation step.

- Parallel Execution – Because the encoder and decoder are exposed as independent, thread‑safe APIs, they can run concurrently. While the decoder is generating a caption for clip #1, the encoder can already be processing clip #2. This overlapping of compute windows boosts throughput and improves CPU/GPU utilization on the device.

Implementation Details

- Quantization is performed with TorchAO (8‑bit) to fit within mobile memory constraints.

- The decoder’s larger capacity (1 B parameters) provides a stronger linguistic prior, improving both caption quality and retrieval performance compared with the original mPLUG2 decoders.

- Memory analysis shows that the persistent modules increase peak RAM usage by only ~40 MB (≈0.76 % of total), because the temporary buffers required for repeated model loading are eliminated.

Experimental Evaluation

Atom is evaluated on three mainstream smartphones (Google Pixel 5a, Pixel 8a, Samsung Galaxy S23) across two representative video‑language tasks:

- Video Retrieval – Measured by Recall@k. Atom reduces end‑to‑end latency by up to 33 % while incurring a modest 1.3–2.3 point drop in Recall@1 relative to the non‑reuse baseline.

- Video Assembly – Measured by CIDEr for generated captions. Atom achieves up to 33 % faster execution with only a 0.6–1.5 point CIDEr reduction.

Additional findings: model‑loading latency is cut by up to 50 %; peak memory remains within the 6 GB budget; and the stronger Llama decoder actually improves raw caption and retrieval scores over the original mPLUG2 configuration (by 2.8–3.7 CIDEr and 3.8–4.9 Recall@1).

Broader Impact and Generality

The reuse‑centric design is not limited to retrieval and assembly. The authors outline extensions to video summarization, question answering, and temporal event localization, all of which share the same encoder‑decoder backbone. Moreover, the approach can be transplanted to other vision‑language foundations (e.g., Flamingo, Video‑LLM) by applying the same modular split and persistent loading strategy.

Contributions Summarized

- Introduces a system‑level paradigm that emphasizes module reuse to accelerate on‑device video‑language inference.

- Implements Atom on top of a modified mPLUG2+ model, demonstrating that a single visual encoder and a unified Llama decoder can serve all textual sub‑tasks.

- Provides extensive on‑device benchmarks showing significant latency reductions (27–33 % faster) with negligible performance loss, while keeping memory overhead minimal.

Conclusion

Atom demonstrates that by decomposing a large VLM into reusable, persistently loaded modules and exploiting their independence for parallel execution, it is possible to run full video‑language pipelines on edge devices with real‑time responsiveness and acceptable accuracy. This work bridges the gap between cloud‑centric AI services and privacy‑preserving, low‑latency mobile applications, establishing a practical blueprint for future on‑device multimodal AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment