ConsistTalk: Intensity Controllable Temporally Consistent Talking Head Generation with Diffusion Noise Search

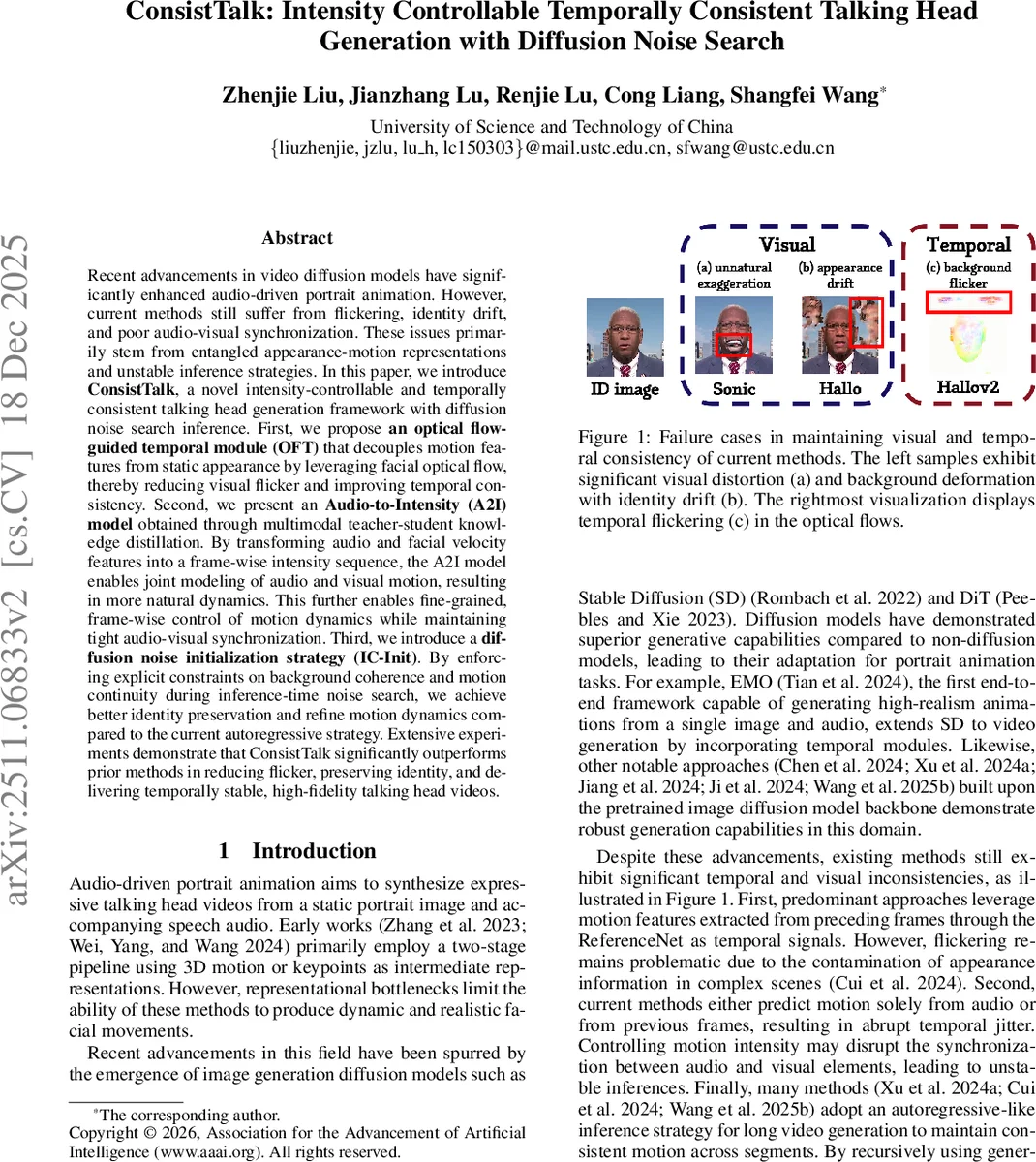

Recent advancements in video diffusion models have significantly enhanced audio-driven portrait animation. However, current methods still suffer from flickering, identity drift, and poor audio-visual synchronization. These issues primarily stem from entangled appearance-motion representations and unstable inference strategies. In this paper, we introduce \textbf{ConsistTalk}, a novel intensity-controllable and temporally consistent talking head generation framework with diffusion noise search inference. First, we propose \textbf{an optical flow-guided temporal module (OFT)} that decouples motion features from static appearance by leveraging facial optical flow, thereby reducing visual flicker and improving temporal consistency. Second, we present an \textbf{Audio-to-Intensity (A2I) model} obtained through multimodal teacher-student knowledge distillation. By transforming audio and facial velocity features into a frame-wise intensity sequence, the A2I model enables joint modeling of audio and visual motion, resulting in more natural dynamics. This further enables fine-grained, frame-wise control of motion dynamics while maintaining tight audio-visual synchronization. Third, we introduce a \textbf{diffusion noise initialization strategy (IC-Init)}. By enforcing explicit constraints on background coherence and motion continuity during inference-time noise search, we achieve better identity preservation and refine motion dynamics compared to the current autoregressive strategy. Extensive experiments demonstrate that ConsistTalk significantly outperforms prior methods in reducing flicker, preserving identity, and delivering temporally stable, high-fidelity talking head videos.

💡 Research Summary

ConsistTalk tackles three persistent problems in audio‑driven talking‑head generation with diffusion models: visual flickering, identity drift, and poor audio‑visual synchronization. The authors propose a three‑component framework that jointly addresses appearance‑motion entanglement and unstable inference.

- Optical‑Flow‑Guided Temporal Module (OFT): Instead of feeding whole reference features from previous frames (as in ReferenceNet‑based methods), OFT extracts facial optical flow between adjacent frames using a lightweight FacialFlowNet. The flow is down‑sampled to the latent resolution with 3D convolutions and fused via full 3D attention with rotary positional embeddings (RoPE). Identity information is encoded once from the static portrait using CLIP and injected into the Dual‑UNet, while motion dynamics are supplied exclusively by the flow. This decoupling prevents appearance contamination, dramatically reducing temporal flicker and preserving background consistency.

- Audio‑to‑Intensity (A2I) Model: The authors observe that motion intensity (the overall magnitude of head pose and facial expression) is a useful intermediate between audio and visual dynamics. A multimodal teacher network learns a mapping from audio embeddings (Whisper‑Tiny) and velocity features (head motion, landmark variance) to an intensity sequence via cross‑attention and gated linear units, with Swish activation. Because velocity features are unavailable at inference, a student network is distilled to predict the same intensity sequence from audio alone, using a combination of MSE loss on teacher‑student logits and shape/temporal consistency losses. The resulting per‑frame intensity scalar is multiplied into the hidden states of the audio‑conditioned diffusion UNet, giving users fine‑grained, frame‑wise control over motion strength while keeping lip‑sync accurate.

- Intensity‑Guided Diffusion Noise Initialization (IC‑Init): Autoregressive generation often accumulates errors, causing identity drift over long videos. IC‑Init introduces a training‑free beam‑search over latent noise trajectories guided by the predicted intensity sequence. High‑frequency components encode rapid motion, low‑frequency components preserve coarse structure and identity. During inference, candidate latents are perturbed with additional noise, a look‑ahead denoising step is performed, and a reward function—weighted by intensity, background coherence, and motion continuity—is computed. The top‑B candidates are kept and the process repeats, yielding a noise path that respects both the reference image and temporal constraints.

Extensive quantitative evaluation on multiple benchmarks (FVD, E‑FID, Flicker, ID‑Dist, VBench, etc.) shows ConsistTalk outperforms prior state‑of‑the‑art methods such as SadTalker, AniTalker, Hallo, Sonic, and FantasyTalking. Notably, flicker is reduced by over 40 % compared to the best baseline, and identity preservation reaches 99.68 %. Ablation studies confirm each component’s contribution: OFT alone cuts flicker and stabilizes background; A2I alone enables controllable intensity and improves lip‑sync; IC‑Init alone mitigates error accumulation in long sequences.

In summary, ConsistTalk introduces (i) a flow‑based temporal module that cleanly separates motion from appearance, (ii) a teacher‑student intensity predictor that bridges audio and visual dynamics and offers user‑controllable motion strength, and (iii) an intensity‑aware noise search that replaces unstable autoregressive inference. The combination yields temporally consistent, identity‑preserving, high‑fidelity talking‑head videos with fine‑grained control, representing a significant step forward for diffusion‑based portrait animation. Future work may explore multi‑person scenes, more complex backgrounds, and real‑time interactive applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment