Self-localization on a 3D map by fusing global and local features from a monocular camera

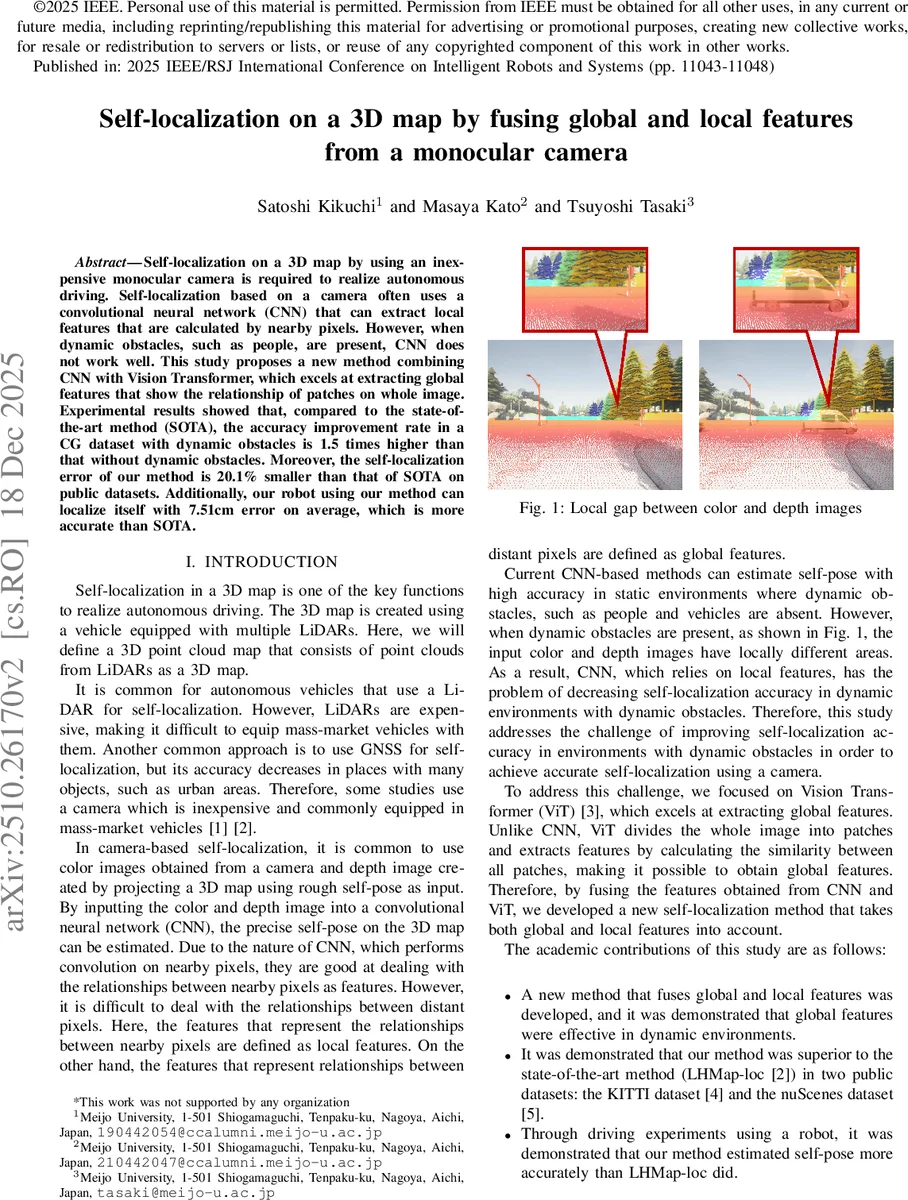

Self-localization on a 3D map by using an inexpensive monocular camera is required to realize autonomous driving. Self-localization based on a camera often uses a convolutional neural network (CNN) that can extract local features that are calculated by nearby pixels. However, when dynamic obstacles, such as people, are present, CNN does not work well. This study proposes a new method combining CNN with Vision Transformer, which excels at extracting global features that show the relationship of patches on whole image. Experimental results showed that, compared to the state-of-the-art method (SOTA), the accuracy improvement rate in a CG dataset with dynamic obstacles is 1.5 times higher than that without dynamic obstacles. Moreover, the self-localization error of our method is 20.1% smaller than that of SOTA on public datasets. Additionally, our robot using our method can localize itself with 7.51cm error on average, which is more accurate than SOTA.

💡 Research Summary

**

The paper addresses the problem of accurate vehicle self‑localization on a pre‑built 3D point‑cloud map using only an inexpensive monocular camera. While recent camera‑based methods rely on convolutional neural networks (CNNs) to extract local features from paired color and depth images, they suffer in dynamic environments because moving objects create local mismatches between the two modalities. To overcome this limitation, the authors propose a novel architecture that fuses global features extracted by a Vision Transformer (ViT) with local features extracted by a CNN.

The network consists of three main blocks. The global feature extraction block first resizes the color (RGB) and depth images to a fixed size, passes each through lightweight convolutional layers (Conv′RGB, Conv′Depth) to match the input shape of a pre‑trained ViT (ImageNet), concatenates the resulting feature maps, and feeds them into the ViT. The class token of the ViT output serves as a 768‑dimensional global descriptor (F_Global). The local feature extraction block mirrors the feature extractor used in the state‑of‑the‑art LHMap‑loc method. It processes the same RGB and depth inputs through separate CNN streams, computes a correlation map between them, applies a second CNN to generate attention weights, and finally aggregates the result into a 512‑dimensional local descriptor (F_Local).

Both descriptors are concatenated into a 1280‑dimensional fused vector, which is fed into two multilayer perceptrons (MLP_position and MLP_orientation) that regress the 3‑D translation vector t and the orientation quaternion q, respectively. Training proceeds in two stages: (1) the ViT is frozen while the CNN and the regression heads are trained end‑to‑end on paired color‑depth images with noisy initial poses; (2) the entire network is fine‑tuned to allow the global and local streams to adapt jointly. Random pose perturbations of ±60 cm are added to the ground‑truth poses during training to simulate the rough pose that would be supplied by a prior localization step.

The authors evaluate the method on three fronts. (1) In the CARLA simulator, they generate static and dynamic datasets across seven towns (7 000 evaluation frames). In dynamic scenes, the proposed method limits the increase in mean position error from 4.2 % (baseline) to 2.6 %, demonstrating robustness to moving obstacles. (2) On public benchmarks (KITTI and nuScenes), the method achieves mean position errors of 9.36 cm (KITTI) and 7.04 cm (nuScenes), outperforming the previous best LHMap‑loc by 2.2 cm and 1.77 cm respectively, with especially notable gains on nuScenes where the improvement exceeds 20 %. (3) Real‑world experiments on a Meijo University campus using a robot equipped with a LiDAR‑generated map and a ZED2 stereo camera show a mean error of 7.51 cm, compared to 8.18 cm for the baseline. Qualitative visualizations confirm that the projected 3‑D map aligns more accurately with the camera view when using the fused features.

Analysis of the results indicates that ViT’s self‑attention captures long‑range dependencies and effectively averages out local disturbances caused by dynamic objects, while the CNN preserves fine‑grained spatial correspondences essential for precise pose estimation. The simple concatenation of the two descriptors, followed by lightweight MLP regressors, yields a strong synergy without requiring complex fusion mechanisms. Moreover, leveraging a pre‑trained ViT reduces the need for massive labeled datasets, as only modest fine‑tuning is necessary for the localization task.

In summary, the paper makes three key contributions: (1) a dual‑stream architecture that combines global and local visual cues to improve camera‑only self‑localization in both static and dynamic scenes; (2) empirical evidence of a 20 % reduction in average position error on standard benchmarks; and (3) a demonstration that the approach can be deployed on a real robot, achieving sub‑8 cm accuracy. Future work may explore multi‑scale patch strategies, real‑time inference optimizations, and integration with additional sensors (e.g., LiDAR or IMU) to further enhance robustness and scalability.

Comments & Academic Discussion

Loading comments...

Leave a Comment