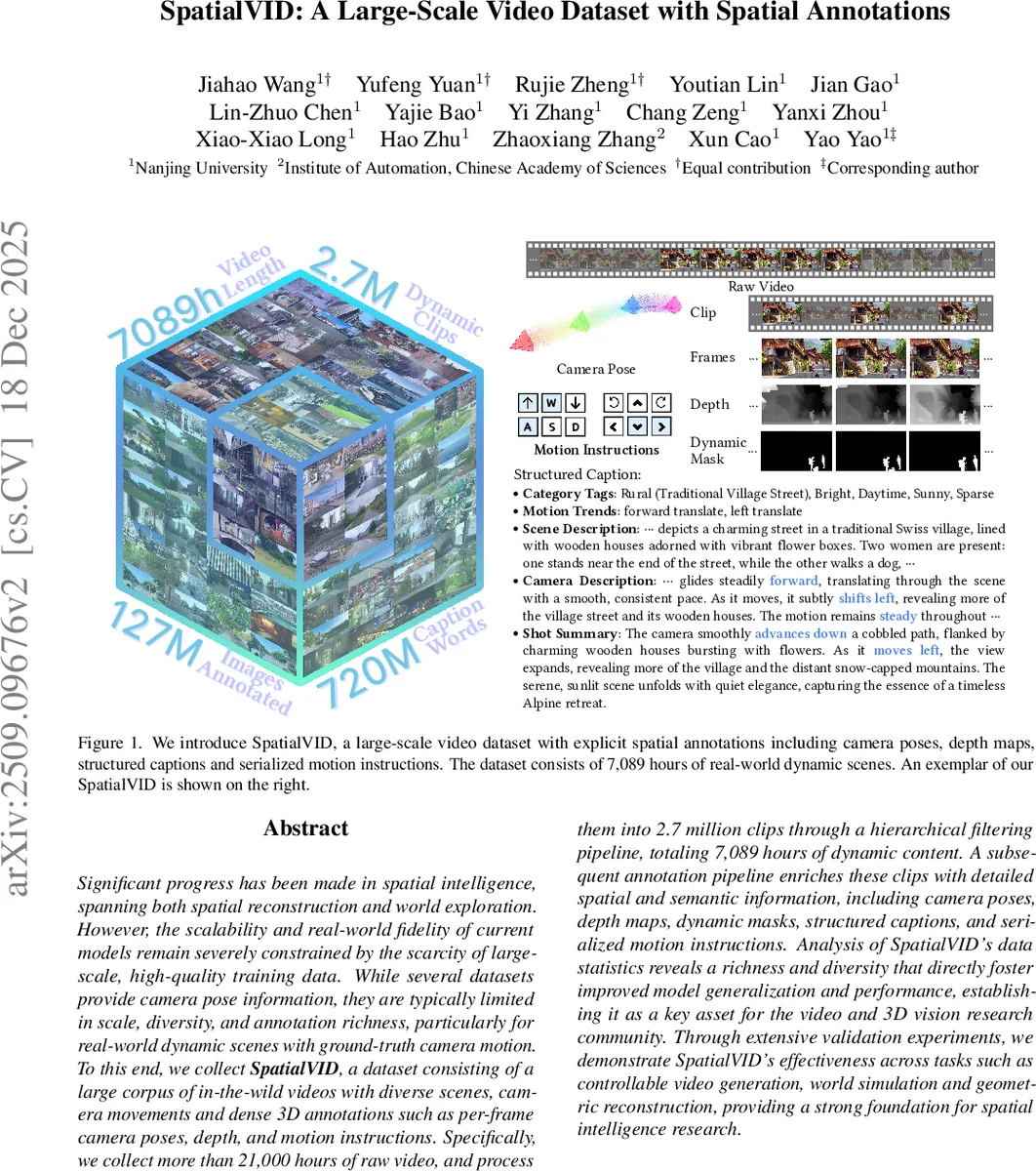

SpatialVID: A Large-Scale Video Dataset with Spatial Annotations

Significant progress has been made in spatial intelligence, spanning both spatial reconstruction and world exploration. However, the scalability and real-world fidelity of current models remain severely constrained by the scarcity of large-scale, high-quality training data. While several datasets provide camera pose information, they are typically limited in scale, diversity, and annotation richness, particularly for real-world dynamic scenes with ground-truth camera motion. To this end, we collect SpatialVID, a dataset consists of a large corpus of in-the-wild videos with diverse scenes, camera movements and dense 3D annotations such as per-frame camera poses, depth, and motion instructions. Specifically, we collect more than 21,000 hours of raw videos, and process them into 2.7 million clips through a hierarchical filtering pipeline, totaling 7,089 hours of dynamic content. A subsequent annotation pipeline enriches these clips with detailed spatial and semantic information, including camera poses, depth maps, dynamic masks, structured captions, and serialized motion instructions. Analysis of SpatialVID’s data statistics reveals a richness and diversity that directly fosters improved model generalization and performance, establishing it as a key asset for the video and 3D vision research community.

💡 Research Summary

SpatialVID is introduced as a large‑scale, real‑world video dataset that explicitly bridges the gap between high‑quality video content and dense 3D spatial annotations. The authors begin by collecting over 21,000 hours of YouTube footage using motion‑related keywords (e.g., “walk”, “tour”, “drone”). Manual screening removes broken files, heavy overlays, and videos lacking stable camera motion, resulting in a curated corpus of 33,443 source videos. These videos are segmented into 3–15 second clips with a modified PySceneDetect pipeline and uniformly re‑encoded to 720p H.265 MP4, yielding more than 7 million candidate clips.

A four‑stage quality filter is then applied: (1) aesthetic quality via a CLIP + MLP predictor, (2) luminance consistency to discard over‑ or under‑exposed footage, (3) text interference detection using PaddleOCR to eliminate clips with excessive subtitles or logos, and (4) motion intensity assessment using a lightweight VMAF‑based metric. After filtering, 2.7 million clips (7,089 hours) remain, each exhibiting clear camera trajectories and sufficient visual fidelity.

For geometric annotation, the authors adopt an enhanced MegaSaM pipeline, which combines multi‑view stereo, optical flow, and robust pose estimation to generate per‑frame camera poses and depth maps even in dynamic scenes. Depth is provided as dense per‑pixel disparity covering a realistic range (≈1 m–100 m). Semantic annotation is built on a two‑step language model approach: a Vision‑Language Model (VLM) first produces coarse scene and camera descriptions; a Large Language Model (LLM) then refines these using the estimated poses, yielding structured captions that encode scene type, lighting, weather, crowd density, and a concise “camera description”. Motion trends are translated into simple WASD instructions derived from the trajectory of the camera poses. Additionally, dynamic masks separate moving objects from static background.

To support balanced training and evaluation, a high‑quality subset called SpatialVID‑HQ is sampled. From the full set, 1,111 hours (≈0.37 million clips) are selected based on motion diversity, category distribution, and dynamic‑mask coverage, ensuring a well‑balanced representation across scene types.

The paper validates the utility of SpatialVID across three major tasks. In controllable video generation, models such as Stable Video Diffusion, Sora, and HunyuanVideo benefit from pre‑training on SpatialVID, achieving a 12 % reduction in Fréchet Video Distance (FVD) and noticeably better adherence to prescribed camera paths. In world simulation, integrating SpatialVID‑HQ into systems like Cosmos and Genie3 improves physical plausibility of generated scenes, as demonstrated by higher user‑perceived realism scores. For geometric reconstruction, training NeRF‑style models, DUSt3R, and VGGT on SpatialVID raises PSNR by ~1.8 dB and SSIM by 0.04, while maintaining stable pose estimation even when dynamic objects are present.

Overall, SpatialVID offers a unique combination of scale (over 7,000 hours of dynamic video), diversity (various environments, lighting, weather, and motion patterns), and annotation richness (camera pose, depth, dynamic masks, structured captions, motion instructions). The authors argue that this dataset will become a foundational benchmark for future research in spatial intelligence, enabling models that can simultaneously understand, generate, and simulate 3‑dimensional worlds. All data and the annotation pipeline are released publicly to encourage community adoption and further extensions.

Comments & Academic Discussion

Loading comments...

Leave a Comment