LaF-GRPO: In-Situ Navigation Instruction Generation for the Visually Impaired via GRPO with LLM-as-Follower Reward

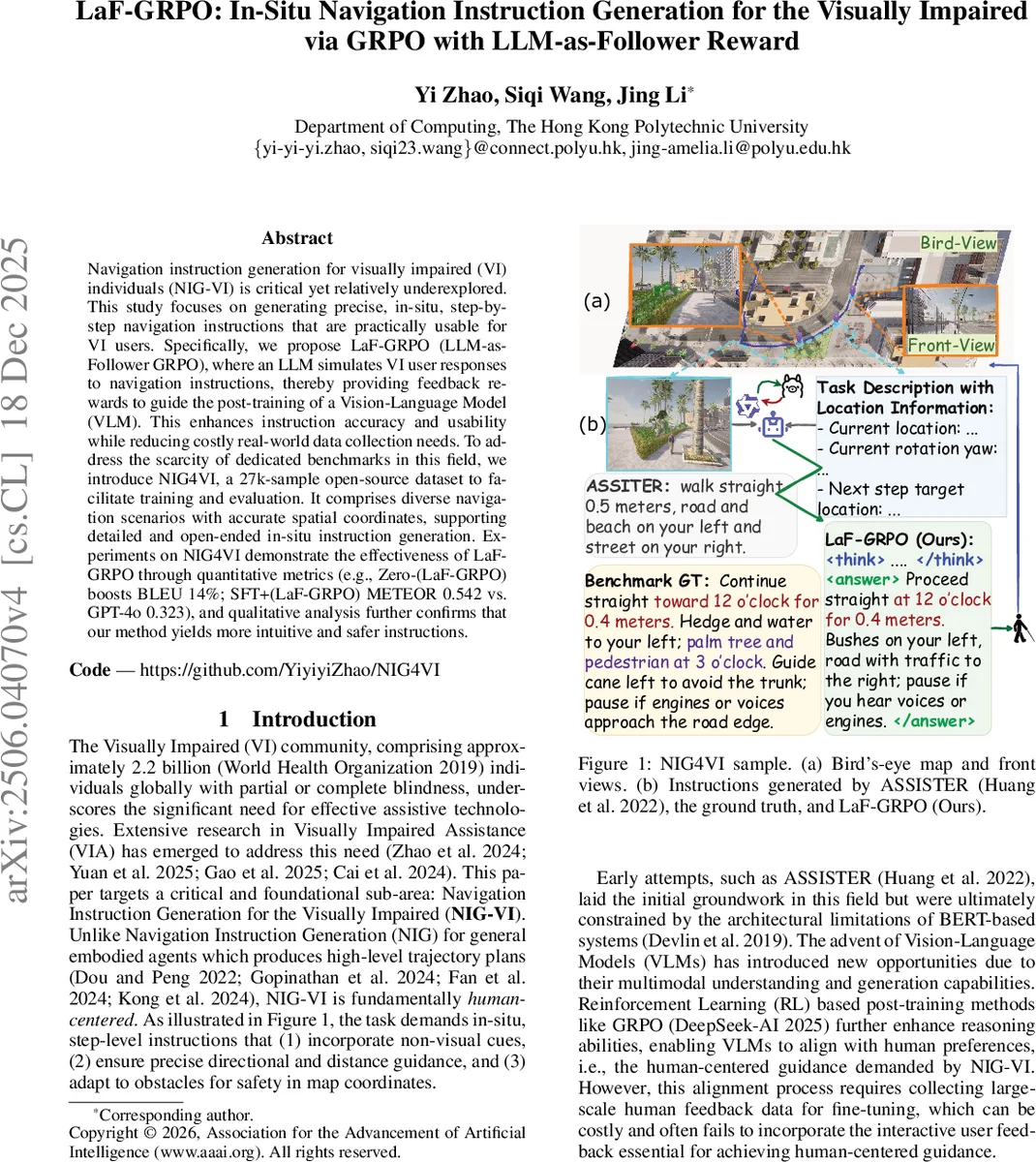

Navigation instruction generation for visually impaired (VI) individuals (NIG-VI) is critical yet relatively underexplored. This study focuses on generating precise, in-situ, step-by-step navigation instructions that are practically usable for VI users. Specifically, we propose LaF-GRPO (LLM-as-Follower GRPO), where an LLM simulates VI user responses to navigation instructions, thereby providing feedback rewards to guide the post-training of a Vision-Language Model (VLM). This enhances instruction accuracy and usability while reducing costly real-world data collection needs. To address the scarcity of dedicated benchmarks in this field, we introduce NIG4VI, a 27k-sample open-source dataset to facilitate training and evaluation. It comprises diverse navigation scenarios with accurate spatial coordinates, supporting detailed and open-ended in-situ instruction generation. Experiments on NIG4VI demonstrate the effectiveness of LaF-GRPO through quantitative metrics (e.g., Zero-(LaF-GRPO) boosts BLEU 14%; SFT+(LaF-GRPO) METEOR 0.542 vs. GPT-4o 0.323), and qualitative analysis further confirms that our method yields more intuitive and safer instructions.

💡 Research Summary

The paper tackles the under‑explored problem of Navigation Instruction Generation for the Visually Impaired (NIG‑VI), which requires step‑by‑step, in‑situ verbal guidance that is safe, precise, and usable without visual references. The authors introduce LaF‑GRPO (LLM‑as‑Follower Group Relative Policy Optimization), a novel framework that couples a Vision‑Language Model (VLM) with a Large Language Model (LLM) that simulates how a visually impaired user would interpret and act upon a given instruction.

Core Idea

- LLM‑as‑Follower – An LLM (fine‑tuned LLaMA‑3‑8B‑Instruct) is trained to parse any generated instruction into a structured “action interpretation” consisting of a movement command (clock‑face direction and distance) and a Boolean hazard‑alert flag. The parser achieves >98 % accuracy on a held‑out set, ensuring reliable feedback.

- VLM Post‑Training – A pre‑trained VLM (Qwen2.5‑VL) first undergoes supervised fine‑tuning (SFT) on a large corpus of human‑annotated instructions, then is refined with GRPO, a reinforcement‑learning algorithm that samples a batch of candidate outputs, computes rewards, and updates the policy via a PPO‑style surrogate loss with KL regularization.

Reward Design

- Format Reward (r_format): binary reward for obeying the required XML‑like

<think>…</think><answer>…</answer>structure. - Text Generation Reward (r_meteor): METEOR score against the ground‑truth reference, capturing semantic overlap, synonymy, and stemming.

- LLM‑as‑Follower Reward (r_LaF): a weighted sum of exact matches on direction (w_dir), distance (w_dist), and hazard‑alert (w_alert). This directly measures navigational usefulness.

The combined reward encourages the VLM to produce well‑formatted, semantically accurate, and practically safe instructions.

Benchmark – NIG4VI

To address the lack of public datasets, the authors release NIG4VI, a 27 k‑sample benchmark built on the CARLA simulator. Each sample includes:

- Current pose (x, y, yaw) and next waypoint pose.

- Front‑view RGB image and semantic segmentation.

- Human‑verified instruction text.

Data generation uses multiple state‑of‑the‑art LLMs (GPT‑4o, Claude‑3.5, Gemini‑2) to draft instructions, which are then refined through a “modality‑bridging” step with DeepSeek‑R1 to make them blind‑friendly. Two independent annotators perform a two‑stage verification, ensuring removal of visual descriptors, inclusion of non‑visual landmarks, and precise metric specifications.

Experiments

Four training paradigms are evaluated: Zero‑shot, Zero‑shot+LaF‑GRPO, SFT, and SFT+LaF‑GRPO. Key findings:

- Zero‑shot+LaF‑GRPO improves BLEU by ~14 % over vanilla zero‑shot.

- SFT+LaF‑GRPO (Qwen2.5‑VL‑7B) reaches METEOR 0.542, surpassing proprietary GPT‑4o (0.323) by a large margin.

- Qualitative analysis shows richer environmental detail, clearer directional cues, and explicit safety warnings (e.g., “pause if you hear engines”).

Contributions

- First application of GRPO to NIG‑VI with an LLM‑simulated follower reward.

- Release of NIG4VI, the first open‑source, coordinate‑accurate dataset for VI navigation.

- Comprehensive empirical study across multiple VLMs and training regimes, demonstrating that simulated user feedback can replace costly real‑world trials while still yielding human‑centered instructions.

Implications

LaF‑GRPO demonstrates that a high‑capacity LLM can act as a virtual user, providing dense, actionable feedback for RL‑based fine‑tuning of multimodal models. This reduces the need for expensive user studies, accelerates development cycles, and opens the door to deploying safe, real‑time navigation assistants on mobile devices or wearables for visually impaired users. Future work should validate the approach with real‑world blind participants and integrate GPS‑level localization for outdoor deployment.

Comments & Academic Discussion

Loading comments...

Leave a Comment