COSMOS: Coherent Supergaussian Modeling with Spatial Priors for Sparse-View 3D Splatting

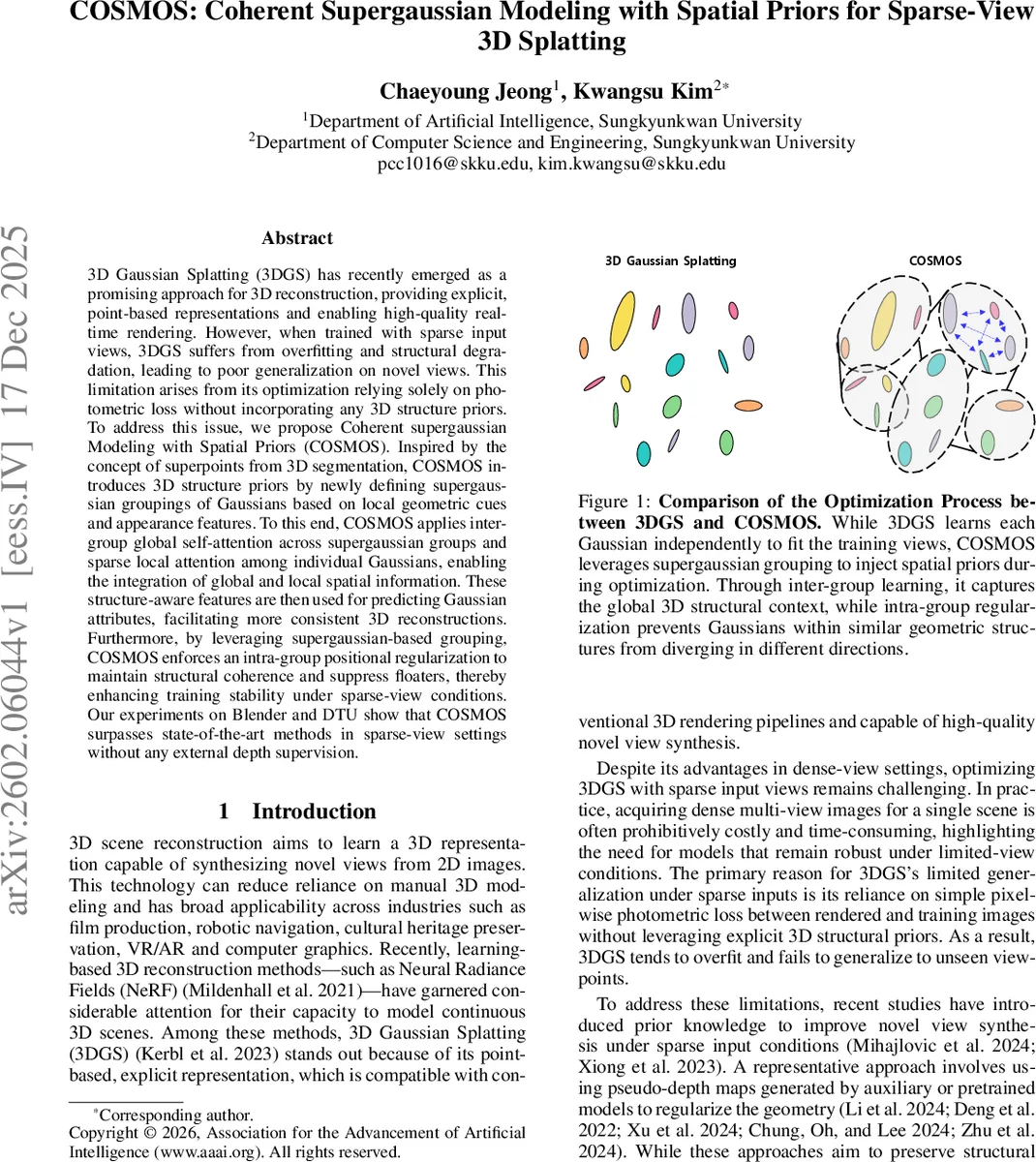

3D Gaussian Splatting (3DGS) has recently emerged as a promising approach for 3D reconstruction, providing explicit, point-based representations and enabling high-quality real time rendering. However, when trained with sparse input views, 3DGS suffers from overfitting and structural degradation, leading to poor generalization on novel views. This limitation arises from its optimization relying solely on photometric loss without incorporating any 3D structure priors. To address this issue, we propose Coherent supergaussian Modeling with Spatial Priors (COSMOS). Inspired by the concept of superpoints from 3D segmentation, COSMOS introduces 3D structure priors by newly defining supergaussian groupings of Gaussians based on local geometric cues and appearance features. To this end, COSMOS applies inter group global self-attention across supergaussian groups and sparse local attention among individual Gaussians, enabling the integration of global and local spatial information. These structure-aware features are then used for predicting Gaussian attributes, facilitating more consistent 3D reconstructions. Furthermore, by leveraging supergaussian-based grouping, COSMOS enforces an intra-group positional regularization to maintain structural coherence and suppress floaters, thereby enhancing training stability under sparse-view conditions. Our experiments on Blender and DTU show that COSMOS surpasses state-of-the-art methods in sparse-view settings without any external depth supervision.

💡 Research Summary

The paper addresses a critical weakness of 3D Gaussian Splatting (3DGS) when only a few input views are available: the model over‑fits to photometric loss and loses structural coherence, leading to poor novel‑view synthesis and the appearance of “floaters.” Existing solutions typically rely on pseudo‑depth or external depth supervision, which introduces dependence on depth prediction quality and scale alignment across views.

COSMOS (Coherent Supergaussian Modeling with Spatial Priors) introduces a novel way to embed 3D structural priors directly into the 3DGS pipeline without any depth supervision. First, each Gaussian is enriched with a feature vector comprising its 3‑D position, color, scale, and four local geometric descriptors (linearity, scattering, verticality, planarity) derived from the covariance of its neighboring points. Using the ℓ₀‑Cut pursuit algorithm, these feature vectors are clustered into a small number (typically a few dozen) of “supergaussians.” This grouping mirrors the superpoint concept from point‑cloud segmentation but adds Gaussian scale as a crucial cue, allowing the model to distinguish fine‑detail clusters from coarse‑structure clusters.

Once the groups are formed, COSMOS applies two complementary attention mechanisms. At the supergaussian level, a global self‑attention module processes a max‑pooled representation of each group. Positional encoding is applied to the group centroid, and query, key, and value projections are generated to compute scaled dot‑product attention across all groups. Because the number of groups is modest, this global attention is computationally cheap yet captures long‑range spatial relationships and overall scene layout.

In parallel, each individual Gaussian participates in a sparse local attention operation that only considers its ten nearest neighbors. This local transformer captures high‑frequency variations and fine‑grained geometry that the coarse global attention may miss. The outputs of the global and local attentions are concatenated, yielding a unified 3‑D feature vector for every Gaussian.

These unified features are fed into separate Residual MLPs that predict the Gaussian’s attributes: position, orientation (via a quaternion‑based rotation), anisotropic scale, RGB color, and opacity. To prevent the emergence of floaters and to enforce intra‑group coherence, COSMOS adds an intra‑group positional regularization loss that penalizes the variance of positions within each supergaussian. This regularizer encourages Gaussians belonging to the same structural region to stay close together, stabilizing training under sparse‑view conditions.

The authors evaluate COSMOS on the Blender synthetic dataset and the real‑world DTU multi‑view dataset, using as few as three or four input images per scene. Compared with state‑of‑the‑art few‑shot NeRF variants, depth‑supervised 3DGS extensions, and recent pseudo‑depth regularization methods, COSMOS consistently achieves higher PSNR, SSIM, and lower LPIPS scores. Qualitative results show markedly fewer floaters, sharper reconstruction of thin structures, and faithful recovery of high‑frequency textures, all without any external depth information.

In summary, the paper’s contributions are: (1) a supergaussian grouping strategy that injects geometric priors into 3DGS, (2) a hybrid attention architecture that efficiently combines global group‑level context with local neighbor‑level detail, (3) an intra‑group positional regularizer that suppresses degenerate geometry, and (4) empirical evidence that these innovations enable robust, high‑quality novel‑view synthesis in extremely sparse‑view scenarios. The approach preserves the explicit, point‑based nature of 3DGS while dramatically improving its applicability to real‑world capture pipelines where dense view acquisition is impractical.

Comments & Academic Discussion

Loading comments...

Leave a Comment