RecTok: Reconstruction Distillation along Rectified Flow

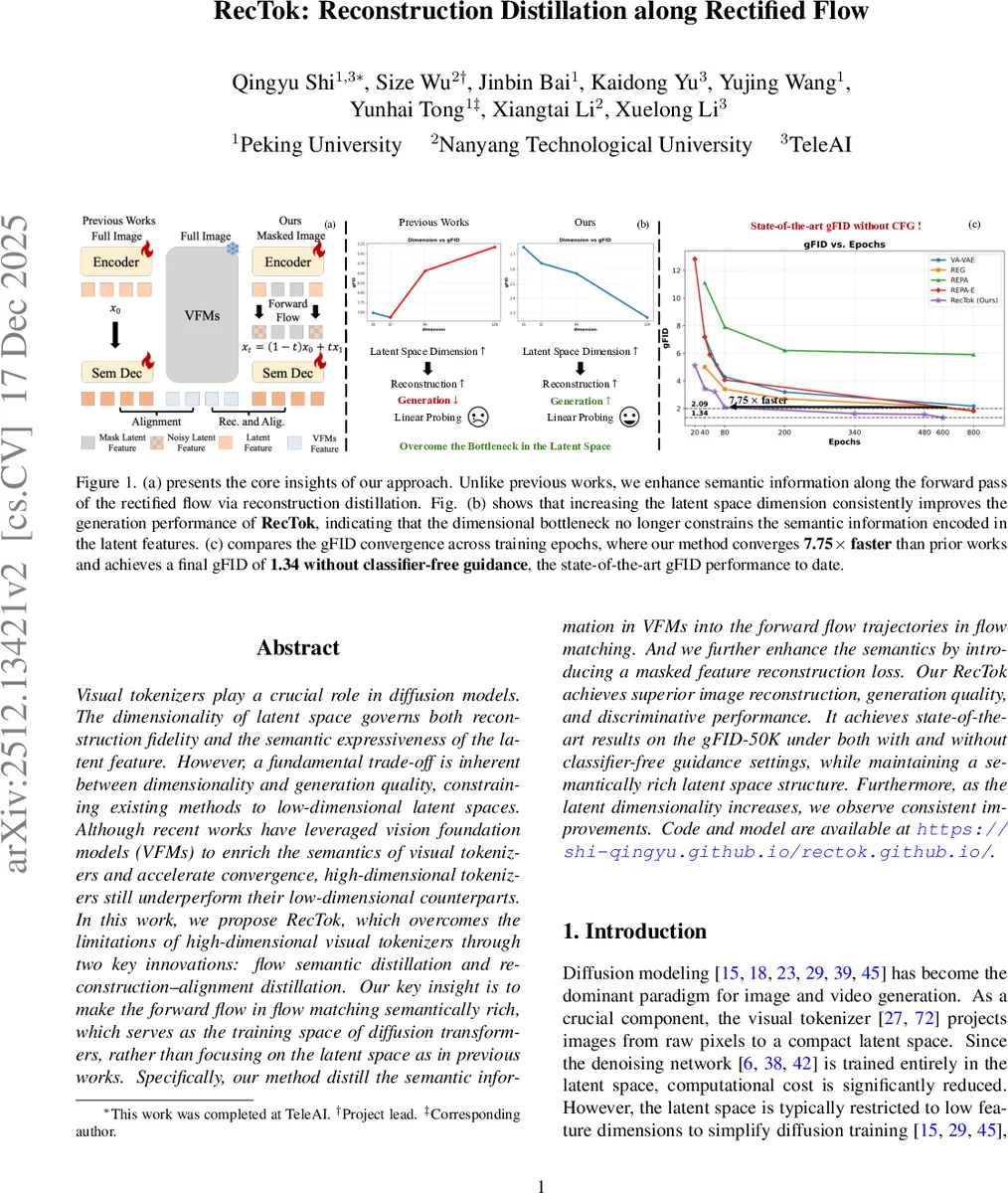

Visual tokenizers play a crucial role in diffusion models. The dimensionality of the latent space governs both reconstruction fidelity and the semantic expressiveness of the latent features. However, there is a fundamental trade-off between dimensionality and generation quality, which constrains existing methods to low-dimensional latent spaces. Although recent works have leveraged vision foundation models (VFMs) to enrich the semantics of visual tokenizers and accelerate convergence, high-dimensional tokenizers still underperform their low-dimensional counterparts. In this work, we propose RecTok, which overcomes the limitations of high-dimensional visual tokenizers through two key innovations: flow semantic distillation and reconstruction–alignment distillation. Our key insight is to make the forward flow in flow matching, which serves as the training space of diffusion transformers, semantically rich, rather than focusing only on the latent space as in previous works. Specifically, our method distills the semantic information in VFMs into the forward flow trajectories in flow matching. We further enhance the semantics by introducing a masked feature reconstruction loss. RecTok achieves superior image reconstruction, generation quality, and discriminative performance. It achieves state-of-the-art gFID-50K results both with and without classifier-free guidance, while maintaining a semantically rich latent space structure. Furthermore, we observe consistent improvements as the latent dimensionality increases. Code and model are available at https://shi-qingyu.github.io/rectok.github.io.

💡 Research Summary

The paper “RecTok: Reconstruction Distillation along Rectified Flow” addresses a critical bottleneck in the development of modern generative diffusion models: the inherent trade-off between reconstruction fidelity and generative quality in visual tokenizers. In existing architectures, low-dimensional latent spaces facilitate efficient generation but suffer from loss of detail, whereas high-dimensional spaces allow for precise reconstruction but significantly degrade generation quality due to the increased complexity of the training space.

The authors propose RecTok, a novel framework that breaks this trade-off by shifting the focus of distillation from the static latent space to the dynamic forward flow trajectories within the Flow Matching framework. The core insight is that the training space for Diffusion Transformers (DiTs) is not merely the latent representation itself, but the trajectory of the flow from noise to data. By enriching this trajectory, the model can leverage high-dimensional spaces without the typical performance degradation.
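To make the notion of a "forward flow trajectory" concrete, the following is a minimal sketch of the linear interpolation commonly used in rectified flow. It is not taken from the paper: the function name `forward_flow`, the tensor shapes, and the convention that t = 0 is clean data and t = 1 is pure noise are all assumptions made for illustration.

```python
import numpy as np

def forward_flow(x0, noise, t):
    """Linear (rectified-flow style) interpolation between data and noise.

    Assumed convention: t = 0 returns the clean latent x0, t = 1 returns noise.
    Returns the point x_t on the path and the constant velocity noise - x0.
    """
    # Broadcast one timestep per sample over the remaining axes.
    t = np.asarray(t, dtype=x0.dtype).reshape(-1, *([1] * (x0.ndim - 1)))
    x_t = (1.0 - t) * x0 + t * noise
    velocity = noise - x0  # the same at every t because the path is straight
    return x_t, velocity

# Hypothetical shapes: (batch, tokens, latent_dim).
rng = np.random.default_rng(0)
latents = rng.standard_normal((4, 16, 32)).astype(np.float32)
noise = rng.standard_normal(latents.shape).astype(np.float32)
t = rng.uniform(0.0, 1.0, size=4).astype(np.float32)

x_t, v = forward_flow(latents, noise, t)
```

Under this convention, a diffusion transformer is trained on the points `x_t` rather than on the clean latents alone, which is why the summary describes the trajectory, and not only the latent space, as the training space.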

RecTok introduces two primary technical innovations:

- Flow Semantic Distillation: Instead of focusing on the latent features, this method distills semantic richness from Vision Foundation Models (VFMs) directly into the forward flow trajectories. This ensures that the paths the diffusion model learns are inherently semantically meaningful, providing a robust foundation for high-dimensional generation.

- Reconstruction-Alignment Distillation: To further stabilize the semantic structure, the authors implement a masked feature reconstruction loss. This mechanism enhances the alignment between reconstructed features and their semantic counterparts, preventing the loss of structural integrity often seen in high-dimensional latent representations.
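The two ideas above can be sketched as loss functions. This is an illustrative toy under stated assumptions, not the authors' implementation: the cosine-similarity objective, the linear projection `proj`, the mean-of-visible-tokens fill that stands in for a real context model, and all shapes are hypothetical choices, and the paper may define both losses differently.

```python
import numpy as np

def cosine_distill_loss(pred, target, eps=1e-8):
    """1 - cosine similarity along the feature axis, averaged over the rest."""
    pred_n = pred / (np.linalg.norm(pred, axis=-1, keepdims=True) + eps)
    target_n = target / (np.linalg.norm(target, axis=-1, keepdims=True) + eps)
    return float(np.mean(1.0 - np.sum(pred_n * target_n, axis=-1)))

def flow_distill_loss(z, vfm_feats, proj, rng):
    """Align a point x_t on the forward flow (not only the clean z) with VFM features."""
    t = rng.uniform(0.0, 1.0, size=(z.shape[0], 1, 1))
    noise = rng.standard_normal(z.shape)
    x_t = (1.0 - t) * z + t * noise  # assumed convention: t = 0 is clean data
    return cosine_distill_loss(x_t @ proj, vfm_feats)

def masked_recon_loss(tokens, vfm_feats, proj, mask_ratio, rng):
    """Hide random tokens and score feature predictions at the hidden positions."""
    mask = rng.uniform(size=tokens.shape[:2]) < mask_ratio  # True = masked
    if not mask.any():
        return 0.0
    visible = ~mask
    # Toy stand-in for a context model: fill masked tokens with the visible mean.
    counts = np.maximum(visible.sum(axis=1, keepdims=True), 1)
    context = (tokens * visible[..., None]).sum(axis=1) / counts
    filled = np.where(mask[..., None], context[:, None, :], tokens)
    pred = filled @ proj
    return cosine_distill_loss(pred[mask], vfm_feats[mask])

# Hypothetical shapes: latents (batch, tokens, 32), VFM features (batch, tokens, 64).
rng = np.random.default_rng(0)
z = rng.standard_normal((2, 16, 32))
vfm_feats = rng.standard_normal((2, 16, 64))
proj = rng.standard_normal((32, 64)) * 0.1

loss_flow = flow_distill_loss(z, vfm_feats, proj, rng)
loss_mask = masked_recon_loss(z, vfm_feats, proj, 0.5, rng)
```

The point of the sketch is where the supervision is applied: `flow_distill_loss` matches VFM features at a randomly sampled point along the noising path, while `masked_recon_loss` only scores positions whose input was hidden. In a real tokenizer, a learned network would replace both the linear projection and the mean fill.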

Empirical evaluations show that RecTok achieves state-of-the-art gFID-50K results, both with and without classifier-free guidance, alongside strong image reconstruction and discriminative performance. Most notably, the paper reports favorable scaling: whereas earlier methods saw generation quality degrade as dimensionality grew, RecTok improves consistently as the latent dimensionality increases. This result points toward generative models that can use larger and more expressive latent spaces.
