FAIR: Focused Attention Is All You Need for Generative Recommendation

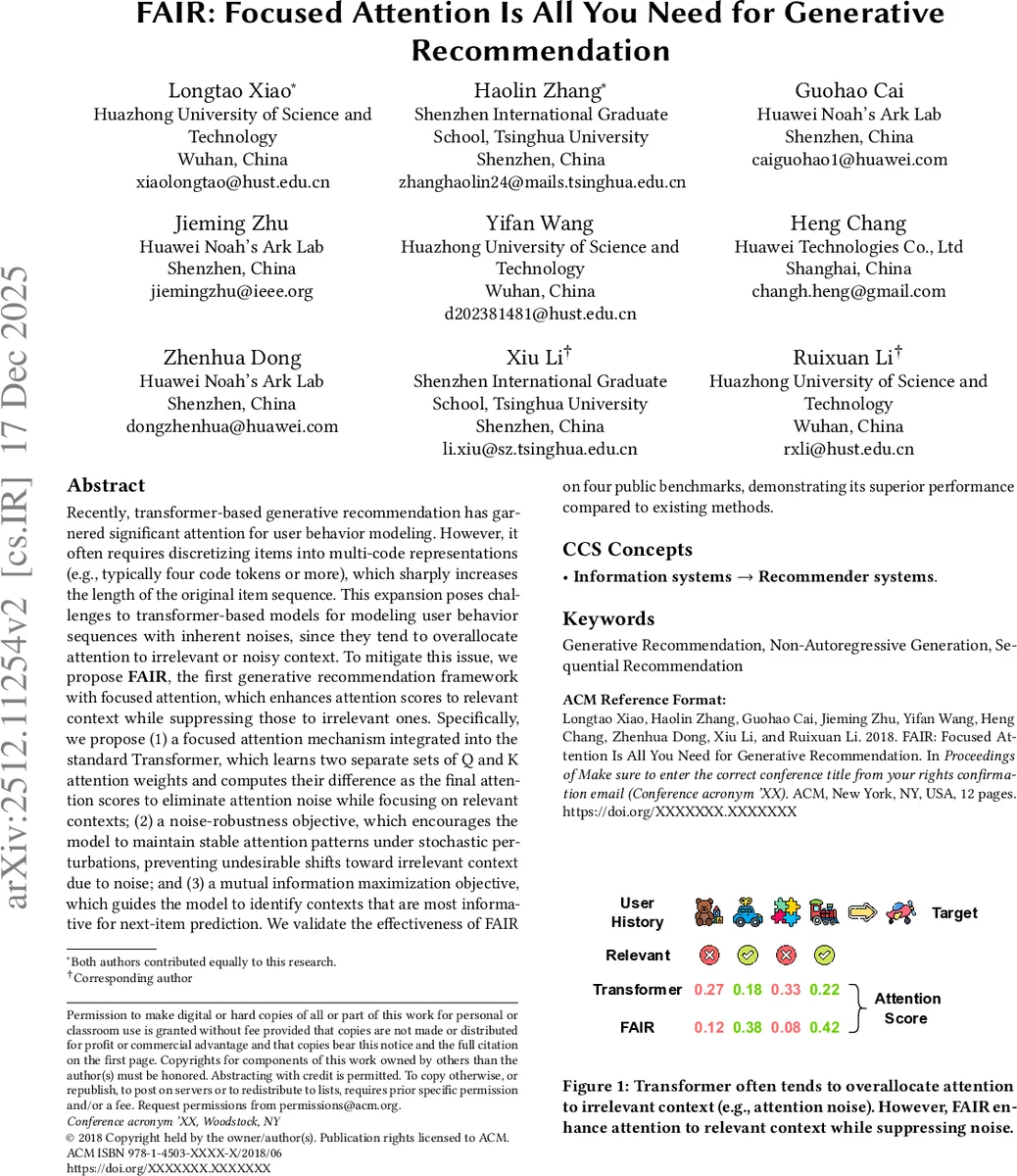

Recently, transformer-based generative recommendation has garnered significant attention for user behavior modeling. However, it often requires discretizing items into multi-code representations (e.g., typically four code tokens or more), which sharply increases the length of the original item sequence. This expansion poses challenges to transformer-based models for modeling user behavior sequences with inherent noises, since they tend to overallocate attention to irrelevant or noisy context. To mitigate this issue, we propose FAIR, the first generative recommendation framework with focused attention, which enhances attention scores to relevant context while suppressing those to irrelevant ones. Specifically, we propose (1) a focused attention mechanism integrated into the standard Transformer, which learns two separate sets of Q and K attention weights and computes their difference as the final attention scores to eliminate attention noise while focusing on relevant contexts; (2) a noise-robustness objective, which encourages the model to maintain stable attention patterns under stochastic perturbations, preventing undesirable shifts toward irrelevant context due to noise; and (3) a mutual information maximization objective, which guides the model to identify contexts that are most informative for next-item prediction. We validate the effectiveness of FAIR on four public benchmarks, demonstrating its superior performance compared to existing methods.

💡 Research Summary

The paper addresses a critical bottleneck in transformer‑based generative recommendation systems: the expansion of user interaction sequences when each item is encoded into multiple discrete codes (often four or more). This expansion dramatically lengthens the input, amplifying the impact of noisy or irrelevant items and causing the transformer to allocate excessive attention to such “attention noise.” To solve this, the authors propose FAIR (Focused Attention Is All You Need), a novel framework that reshapes the attention mechanism, adds robustness against perturbations, and explicitly maximizes the mutual information between context and target.

- Focused Attention Mechanism – Instead of the standard single query‑key pair, FAIR learns two independent sets of query and key matrices (Q₁, K₁ and Q₂, K₂). Each set produces its own scaled dot‑product attention matrix (A₁, A₂). The final attention distribution is obtained by subtracting the second matrix from the first (A = Norm(λ₁A₁ − λ₂A₂)). This subtraction acts like a differential amplifier: correlations captured by one branch that correspond to noise are cancelled out, while informative dependencies highlighted by the other are amplified. The mechanism can be extended to multi‑head attention, allowing diverse sub‑spaces to simultaneously filter noise.

- Noise‑Robustness Task (NRT) – The authors simulate noisy inputs by randomly masking (p_mask = 0.1) or substituting (p_sub = 0.1) tokens in the code sequence. They then enforce consistency between the hidden representation of the clean sequence (h) and its noisy counterpart (˜h) while pushing apart representations of other items in the same batch (h⁻). This is realized with a triplet loss L_NR = max(0, d(h, ˜h) − d(h, h⁻) + m). The loss makes the model invariant to random perturbations, improving robustness in real‑world scenarios where data may be incomplete or corrupted.

- Mutual Information Maximization Task (MIM) – To ensure the model focuses on context that truly predicts the next item, FAIR maximizes the mutual information I(h_t; x_t) between the final‑layer representation of the target item (h_t) and a pooled representation of the source sequence (x_t). Because direct MI estimation is intractable, the authors adopt the InfoNCE contrastive surrogate: L_MIM = −log

Comments & Academic Discussion

Loading comments...

Leave a Comment