Human-Centric Open-Future Task Discovery: Formulation, Benchmark, and Scalable Tree-Based Search

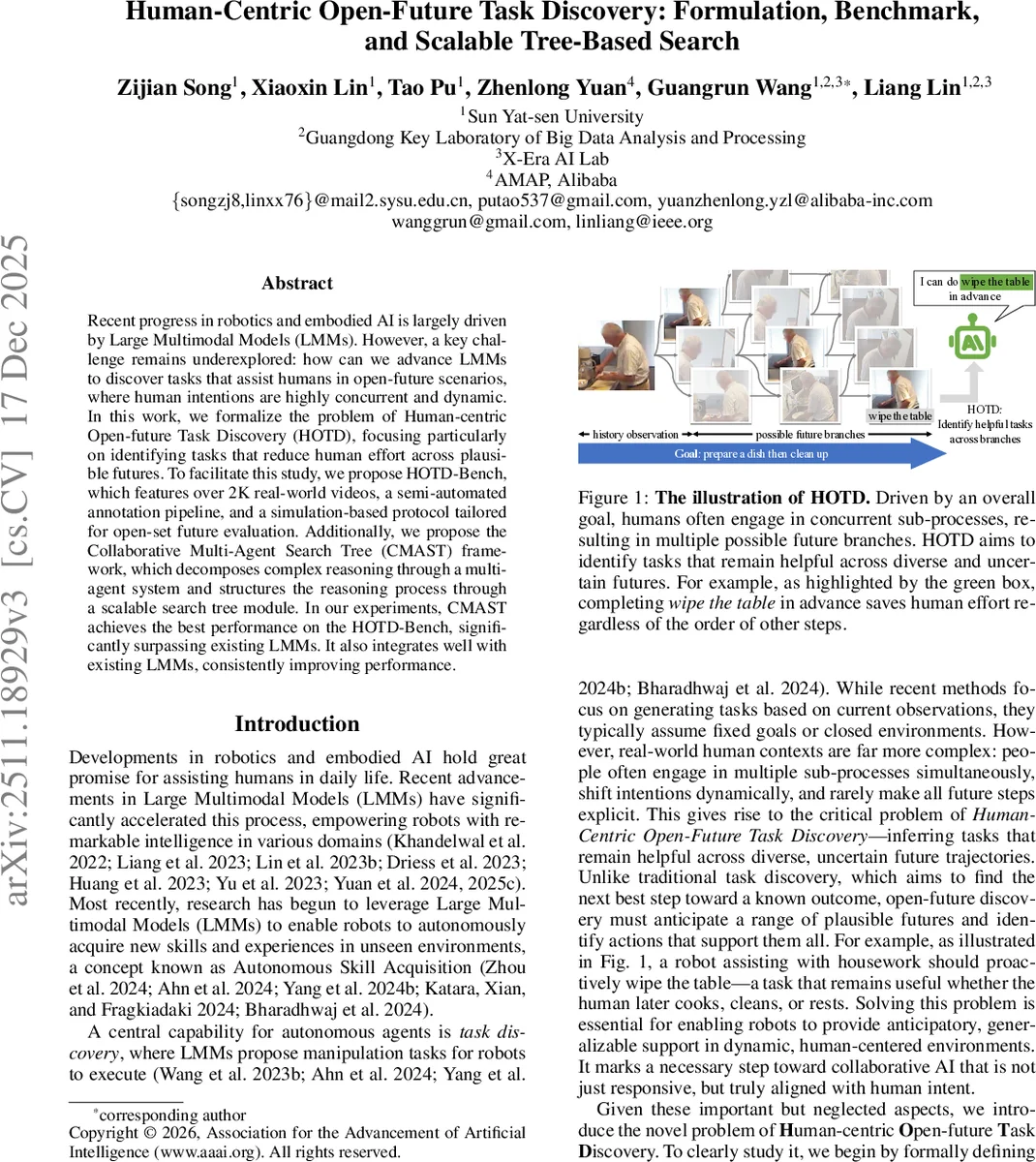

Recent progress in robotics and embodied AI is largely driven by Large Multimodal Models (LMMs). However, a key challenge remains underexplored: how can we advance LMMs to discover tasks that assist humans in open-future scenarios, where human intentions are highly concurrent and dynamic. In this work, we formalize the problem of Human-centric Open-future Task Discovery (HOTD), focusing particularly on identifying tasks that reduce human effort across plausible futures. To facilitate this study, we propose HOTD-Bench, which features over 2K real-world videos, a semi-automated annotation pipeline, and a simulation-based protocol tailored for open-set future evaluation. Additionally, we propose the Collaborative Multi-Agent Search Tree (CMAST) framework, which decomposes complex reasoning through a multi-agent system and structures the reasoning process through a scalable search tree module. In our experiments, CMAST achieves the best performance on the HOTD-Bench, significantly surpassing existing LMMs. It also integrates well with existing LMMs, consistently improving performance.

💡 Research Summary

This paper addresses a previously under‑explored challenge in embodied AI: enabling large multimodal models (LMMs) to discover tasks that help humans in “open‑future” scenarios where intentions are concurrent, dynamic, and not fully specified. The authors formalize the problem as Human‑centric Open‑future Task Discovery (HOTD). Given a video segment of a human’s activity, the goal is to generate a set of tasks that, if performed by a robot, would reduce the overall cost (e.g., time, effort) of achieving the human’s latent goal across all plausible future continuations. A task is considered human‑centric if it is executable by a robot and leads to a lower cost after the human adapts their behavior.

To evaluate such a problem, the authors construct HOTD‑Bench, a benchmark comprising 2,450 real‑world video clips (≈40 hours) drawn from the Toyota Smarthome Untrimmed and Charades datasets. They develop a semi‑automated annotation pipeline that first selects future actions satisfying a “helpful principle,” expands them into natural‑language descriptions, and filters them through non‑disruptive and executable criteria. Because exhaustively labeling all possible future branches is infeasible, they introduce a simulation‑based evaluation method. An LLM acts as a simulator: it envisions the future trajectory with and without the candidate task, computes a relative time‑based cost, and decides whether the task reduces the cost. This approach allows open‑set evaluation of hypothetical futures while minimizing human bias.

The core technical contribution is the Collaborative Multi‑Agent Search Tree (CMAST) framework. CMAST combines two ideas: (1) a scalable search‑tree module that iteratively expands candidate tasks into a tree of possible future procedures, and (2) a collaborative multi‑agent system where specialized agents handle distinct reasoning stages—goal inference, future simulation, cost evaluation, and result aggregation. Each agent leverages an underlying LMM (e.g., GPT‑4‑V, LLaVA‑1.5) and employs chain‑of‑thought prompting to generate intermediate reasoning steps that are stored in the tree nodes for reuse. The tree structure enables thorough exploration of uncertain future branches, while the multi‑agent decomposition reduces the cognitive load on any single model, leading to more accurate and efficient task discovery. CMAST can be plugged into various existing LMMs without retraining.

Experiments on HOTD‑Bench show that CMAST consistently outperforms baseline LMMs on two primary metrics: Valid Task Ratio (the proportion of proposed tasks that are truly helpful) and Valid Task Count (the number of helpful tasks discovered). CMAST achieves improvements of 23–31 percentage points over strong baselines in simulation‑based evaluation and demonstrates comparable gains in label‑based evaluation. Ablation studies reveal that both the search‑tree component and the multi‑agent collaboration are essential; removing either leads to a substantial drop in performance. Visualizations illustrate CMAST’s ability to suggest tasks such as “wipe the table” that remain beneficial regardless of subsequent human actions.

The paper also discusses limitations: reliance on the fidelity of the LLM‑based simulator, a cost function limited to time which may not capture all aspects of human effort, and a benchmark focused on indoor household activities, which may not generalize to industrial or outdoor domains. Future work is suggested in three directions: (i) enriching the cost model with multimodal signals (audio, haptic, physiological), (ii) improving simulation reliability via reinforcement‑learning feedback loops, and (iii) extending the benchmark to diverse environments and conducting real‑world robot deployments.

In summary, the authors introduce a novel formulation of open‑future task discovery, provide a sizable benchmark with a pragmatic simulation evaluation, and propose the CMAST framework that markedly advances the ability of LMM‑driven robots to anticipate and assist with human activities in dynamic, uncertain settings. This work paves the way for more anticipatory, human‑aligned embodied AI systems.

Comments & Academic Discussion

Loading comments...

Leave a Comment