Beyond statistical significance: Quantifying uncertainty and statistical variability in multilingual and multitask NLP evaluation

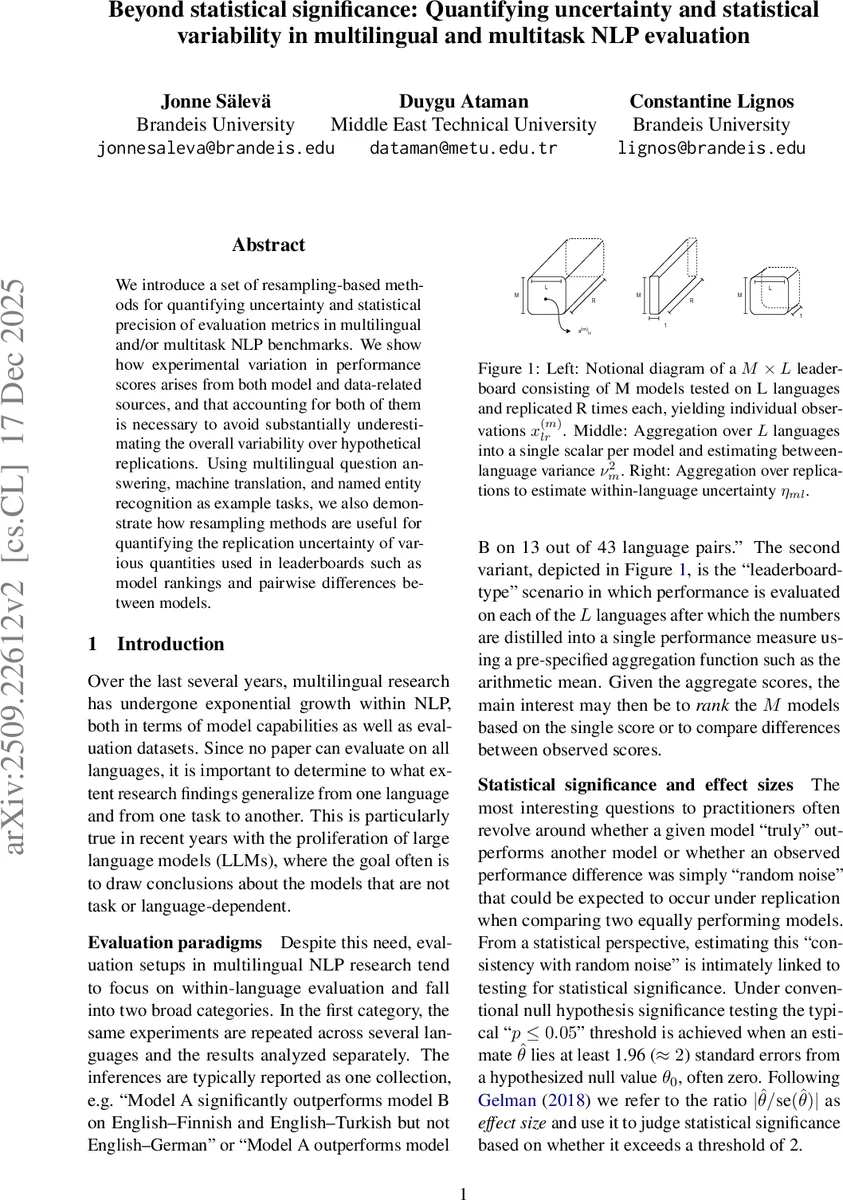

We introduce a set of resampling-based methods for quantifying uncertainty and statistical precision of evaluation metrics in multilingual and/or multitask NLP benchmarks. We show how experimental variation in performance scores arises from both model and data-related sources, and that accounting for both of them is necessary to avoid substantially underestimating the overall variability over hypothetical replications. Using multilingual question answering, machine translation, and named entity recognition as example tasks, we also demonstrate how resampling methods are useful for quantifying the replication uncertainty of various quantities used in leaderboards such as model rankings and pairwise differences between models.

💡 Research Summary

The paper tackles a fundamental problem in multilingual and multitask natural‑language‑processing (NLP) evaluation: the quantification of uncertainty that stems from both model‑side randomness and data‑side sampling variability. While prior work has often focused on a single source of variation—either bootstrap resampling of test sets or multiple training seeds—the authors argue that ignoring either component leads to a severe under‑estimation of the total variability that would be observed across hypothetical replications of an experiment.

To address this, the authors formalize the experimental setting as a three‑level hierarchical population. At the top level each of the M models has a global mean performance (\bar\mu_m). The second level consists of L languages (or tasks), each with its own mean (\mu_{ml}) that deviates from the global mean by a language‑specific term (\varepsilon_{\text{lang},l}). The lowest level contains R replications per language, denoted (x_{mlr}), which differ from the language mean by a replication error (\varepsilon_{\text{rep},lr}). This decomposition yields a variance identity

\

Comments & Academic Discussion

Loading comments...

Leave a Comment