Enhancing Optical Imaging via Quantum Computation

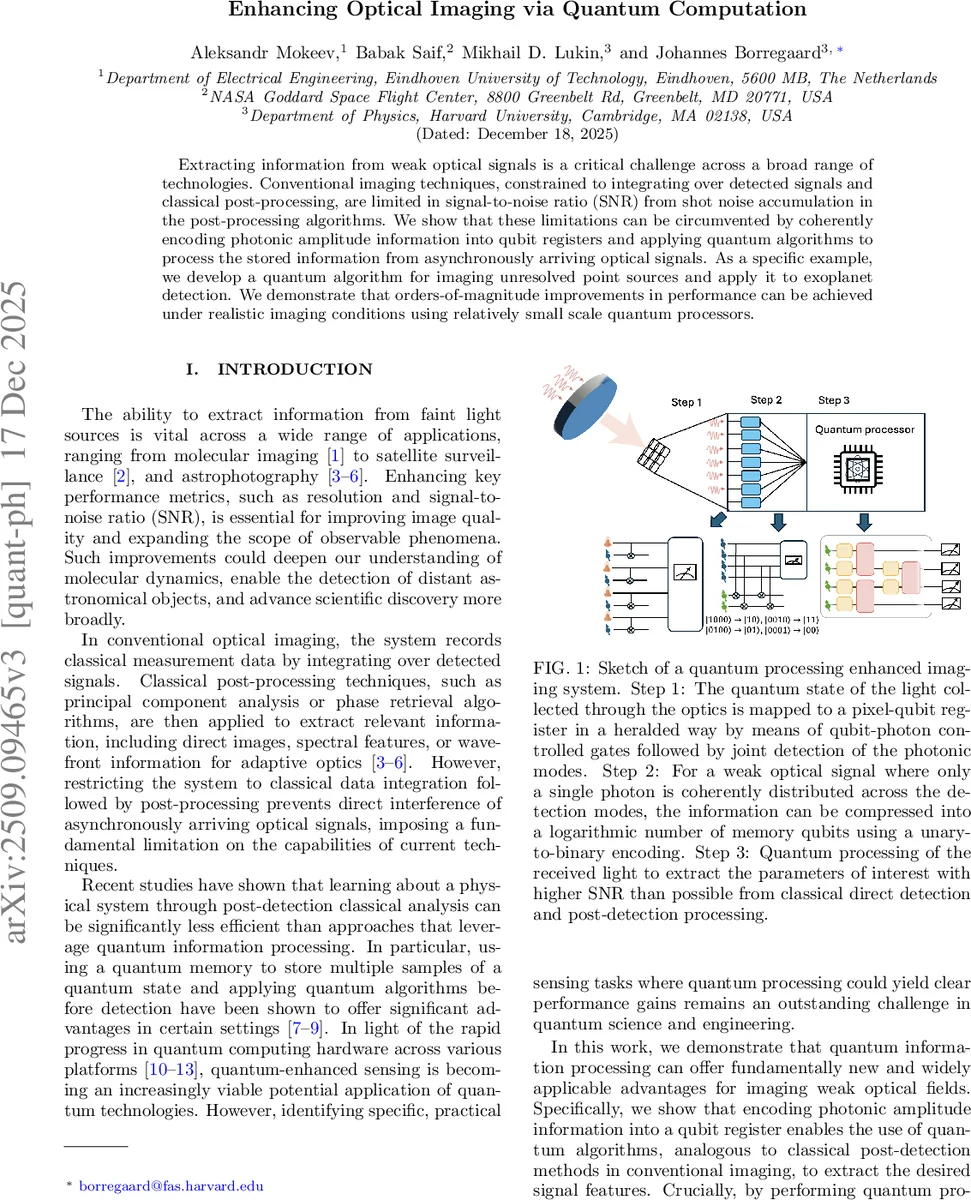

Extracting information from weak optical signals is a critical challenge across a broad range of technologies. Conventional imaging techniques, constrained to integrating over detected signals and classical post-processing, are limited in signal-to-noise ratio (SNR) from shot noise accumulation in the post-processing algorithms. We show that these limitations can be circumvented by coherently encoding photonic amplitude information into qubit registers and applying quantum algorithms to process the stored information from asynchronously arriving optical signals. As a specific example, we develop a quantum algorithm for imaging unresolved point sources and apply it to exoplanet detection. We demonstrate that orders-of-magnitude improvements in performance can be achieved under realistic imaging conditions using relatively small scale quantum processors.

💡 Research Summary

The authors present a novel framework for optical imaging that leverages quantum computation to overcome the fundamental signal‑to‑noise limitations of conventional detectors. In traditional imaging, light is integrated on a pixelated sensor and later processed classically; shot noise accumulates during this post‑processing, capping the achievable SNR. The proposed approach replaces each pixel with a quantum photon‑spin transducer that coherently maps the amplitude of the incoming weak optical field onto a qubit register. This mapping is realized via photon‑reflection from a cavity‑coupled qubit (e.g., a silicon‑vacancy center or a superconducting qubit), where the presence or absence of a photon in a given mode entangles with the qubit’s |0⟩/|1⟩ states. A joint Fourier‑basis measurement of the reflected light “erases” which‑pixel information while preserving the overall amplitude distribution, thereby storing the full quantum state of the light in a set of pixel‑qubits.
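The "erasure" step can be illustrated with a toy model restricted to the single‑photon subspace. This is a sketch under stated assumptions, not the authors' implementation: it assumes the reflection leaves pixel‑qubit j in a flagged state orthogonal to all others when the photon sat in mode j, and that the photon is then detected in a discrete Fourier mode k. The function names `fourier_herald` and `undo_herald_phases` are hypothetical.

```python
import numpy as np

def fourier_herald(amplitudes: np.ndarray, outcome: int):
    """Toy model: pixel-register state after the photon is detected in Fourier mode `outcome`.

    `amplitudes[j]` is the photon amplitude in pixel mode j. Before detection the
    joint state is assumed to be sum_j amplitudes[j] |j>_photon |flag_j>_register,
    with orthonormal register states |flag_j>.
    Returns (normalized register amplitudes, probability of this outcome).
    """
    d = amplitudes.shape[0]
    modes = np.arange(d)
    # <f_k | j> = exp(-2*pi*i*j*k/d) / sqrt(d): overlap of pixel mode j with Fourier mode k.
    overlaps = np.exp(-2j * np.pi * modes * outcome / d) / np.sqrt(d)
    unnormalized = amplitudes * overlaps
    prob = float(np.vdot(unnormalized, unnormalized).real)
    return unnormalized / np.sqrt(prob), prob

def undo_herald_phases(register: np.ndarray, outcome: int) -> np.ndarray:
    """Remove the known, outcome-dependent phases left on the register."""
    d = register.shape[0]
    return register * np.exp(2j * np.pi * np.arange(d) * outcome / d)

# Demo with a random normalized 8-mode single-photon state.
rng = np.random.default_rng(0)
a = rng.normal(size=8) + 1j * rng.normal(size=8)
a /= np.linalg.norm(a)

for k in range(8):
    state, prob = fourier_herald(a, k)
    recovered = undo_herald_phases(state, k)
    # Every outcome is equally likely, so the click reveals nothing about the pixel,
    # and the corrected register carries the original amplitudes.
    print(k, round(prob, 3), np.allclose(recovered, a))
```

In this model every Fourier outcome occurs with probability 1/D regardless of the input, which is the sense in which the detection carries no which‑pixel information, while the amplitudes survive on the register up to phases fixed by the heralded outcome.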

Because weak signals typically involve at most a single photon spread over D modes, the authors compress the D‑dimensional photonic state into only ⌈log₂ D⌉ memory qubits using a unary‑to‑binary encoding (e.g., a 32 × 32 image, with D = 1024 modes, requires just 10 qubits). Photons arriving at different times can be stored sequentially, so many samples accumulate in quantum memory without classical integration.
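The qubit count for the unary‑to‑binary compression can be checked with a short sketch. It assumes the single‑photon state is given as a length‑D amplitude vector (one entry per pixel mode) and that mode j is relabeled by the binary expansion of j; the helper names are hypothetical and the actual gate‑level encoding circuit is not modeled here.

```python
import numpy as np

def num_memory_qubits(num_modes: int) -> int:
    """Smallest number of qubits whose basis states can label `num_modes` modes."""
    if num_modes < 1:
        raise ValueError("num_modes must be positive")
    return max(1, (num_modes - 1).bit_length())

def mode_to_bits(mode: int, num_modes: int) -> str:
    """Binary label assigned to pixel mode `mode` (assumed convention: plain binary)."""
    return format(mode, f"0{num_memory_qubits(num_modes)}b")

def compress_single_photon(amplitudes) -> np.ndarray:
    """Embed D single-photon amplitudes into a 2**n-dimensional register state.

    In the one-photon subspace the unary state |0..1_j..0> maps to |binary(j)>,
    so the amplitude vector is copied and zero-padded up to the next power of two.
    """
    amplitudes = np.asarray(amplitudes, dtype=complex)
    d = amplitudes.shape[0]
    state = np.zeros(2 ** num_memory_qubits(d), dtype=complex)
    state[:d] = amplitudes
    return state

print(num_memory_qubits(32 * 32))   # 10, matching the 32 x 32 example
print(mode_to_bits(5, 8))           # 101
print(compress_single_photon(np.ones(3) / np.sqrt(3)).shape)  # (4,)
```

When D is not a power of two, the padded basis states simply carry zero amplitude, so the norm of the stored state is unchanged.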

The core quantum processing consists of two intertwined algorithms: quantum principal component analysis (QPCA) and a quantum SWAP‑test–like procedure. QPCA is employed to rotate the stored state into the eigenbasis of the point‑spread function (PSF) of the imaging system. By repeatedly applying a controlled unitary e^{±iερ} (where ρ is the density matrix of the stored photons) and performing single‑qubit rotations on an auxiliary qubit, the algorithm projects the state onto the dominant PSF eigenvectors corresponding to the star and the exoplanet. A final measurement of the auxiliary qubit reveals which eigenstate the photon occupied, directly yielding the relative brightness parameter b without reconstructing the full density matrix. This avoids tomographic reconstruction and the associated shot‑noise buildup, resulting in an SNR that scales linearly with the system dimension N rather than with √N as in classical methods.
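One standard way to realize a unitary of the form e^{-iερ} from stored copies of ρ is the partial‑SWAP construction used in quantum principal component analysis; the summary does not say which construction the authors use, so the sketch below is an assumption. It checks numerically that consuming one copy of ρ through a small partial SWAP acts on a target state σ like e^{-iερ} σ e^{iερ}, up to terms of order ε². The two‑source mixture, its weights, and the example PSF vectors are hypothetical placeholders.

```python
import numpy as np

def swap_operator(d: int) -> np.ndarray:
    """SWAP on two d-dimensional registers: S|i>|j> = |j>|i>."""
    s = np.zeros((d * d, d * d))
    for i in range(d):
        for j in range(d):
            s[j * d + i, i * d + j] = 1.0
    return s

def partial_swap_step(rho: np.ndarray, sigma: np.ndarray, eps: float) -> np.ndarray:
    """Apply exp(-i*eps*SWAP) to rho (x) sigma, then discard the rho register."""
    d = rho.shape[0]
    # SWAP squares to the identity, so exp(-i*eps*SWAP) = cos(eps) I - i sin(eps) SWAP.
    u = np.cos(eps) * np.eye(d * d) - 1j * np.sin(eps) * swap_operator(d)
    joint = u @ np.kron(rho, sigma) @ u.conj().T
    # Partial trace over the first register (the consumed copy of rho).
    return np.einsum("ijik->jk", joint.reshape(d, d, d, d))

def ideal_step(rho: np.ndarray, sigma: np.ndarray, eps: float) -> np.ndarray:
    """Exact conjugation of sigma by exp(-i*eps*rho), via the eigendecomposition of rho."""
    evals, evecs = np.linalg.eigh(rho)
    u = evecs @ np.diag(np.exp(-1j * eps * evals)) @ evecs.conj().T
    return u @ sigma @ u.conj().T

def two_source_state(psf_star, psf_planet, b: float) -> np.ndarray:
    """Hypothetical mixture of two PSF states with relative weight b (assumed form)."""
    s = np.asarray(psf_star, dtype=complex)
    p = np.asarray(psf_planet, dtype=complex)
    s, p = s / np.linalg.norm(s), p / np.linalg.norm(p)
    return (np.outer(s, s.conj()) + b * np.outer(p, p.conj())) / (1.0 + b)

# Demo: 4 modes, illustrative (made-up) PSF vectors, planet ten times fainter.
rho = two_source_state([1, 1, 1, 1], [1, 0.5, -0.5, -1], b=0.1)
sigma = np.zeros((4, 4), dtype=complex)
sigma[0, 0] = 1.0
eps = 0.01
error = np.linalg.norm(partial_swap_step(rho, sigma, eps) - ideal_step(rho, sigma, eps))
print(f"deviation from ideal step: {error:.2e}")  # second order in eps
```

Each such step consumes one stored copy of ρ, which is why the ability to store many sequentially arriving photons matters: repeating the step n times approximates e^{-inερ} with an error that grows with n ε².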

To demonstrate practical relevance, the authors analyze a typical exoplanet imaging scenario: a 10 × 10 pixel detector observing a star‑exoplanet pair where a coronagraph reduces stellar flux to roughly ten times the planetary flux. Their calculations show that achieving an SNR of 10 with the quantum‑enhanced pipeline requires three to four orders of magnitude fewer detected photons than a conventional tomographic approach. Remarkably, this performance can be attained with an array of ~100 pixel‑qubits, about 36 memory qubits for compression, and a total two‑qubit gate count in the low hundreds—parameters within reach of current superconducting or solid‑state quantum processors.

The authors also discuss implementation. The photon‑qubit mapping can be realized with cavity‑spin systems in which the reflected photon acquires a π phase difference between the two qubit states, and the Fourier‑basis heralding can be performed with linear‑optical interferometers. Photon‑spin gates with errors at the few‑percent level have been demonstrated experimentally for SiV centers, suggesting that error‑corrected operation is not strictly necessary for the modest circuit depths required. Moreover, the authors propose using quantum teleportation to transfer the pixel‑qubit state to a dedicated quantum processor optimized for gate operations, allowing heterogeneous hardware architectures.

In conclusion, the paper establishes that storing weak optical fields directly in quantum memory and processing them before measurement yields a genuine quantum advantage for imaging. By eliminating intermediate shot‑noise accumulation and exploiting algorithms that extract only the desired observables, the method offers substantial reductions in integration time, relaxed stability requirements for telescopes, and broader applicability to fields such as molecular imaging, satellite monitoring, and adaptive optics. The analysis indicates that near‑term experimental demonstrations are feasible with existing quantum hardware, opening a pathway toward quantum‑enhanced optical sensing.
