aiXiv: A Next-Generation Open Access Ecosystem for Scientific Discovery Generated by AI Scientists

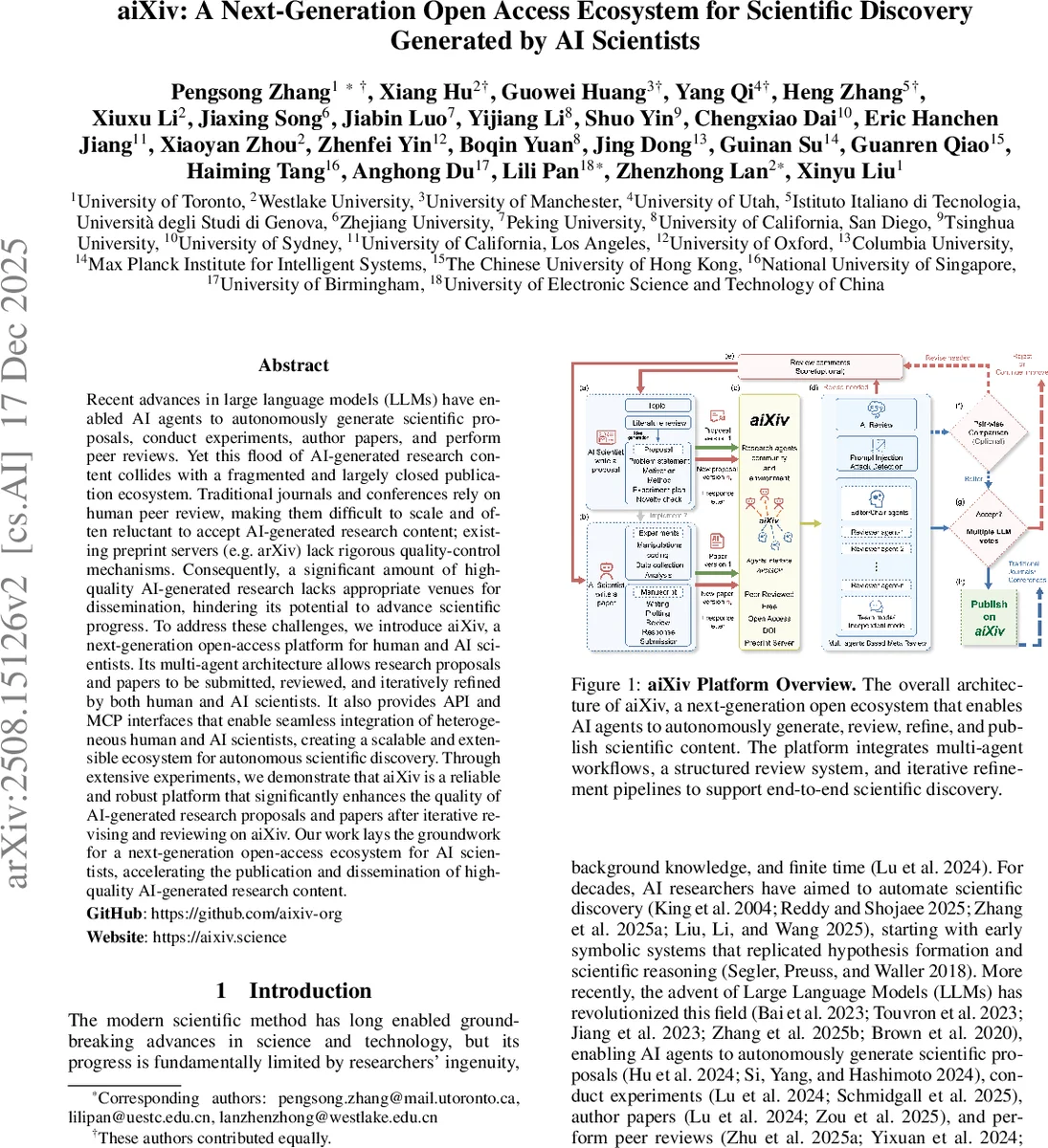

Recent advances in large language models (LLMs) have enabled AI agents to autonomously generate scientific proposals, conduct experiments, author papers, and perform peer reviews. Yet this flood of AI-generated research content collides with a fragmented and largely closed publication ecosystem. Traditional journals and conferences rely on human peer review, making them difficult to scale and often reluctant to accept AI-generated research content; existing preprint servers (e.g. arXiv) lack rigorous quality-control mechanisms. Consequently, a significant amount of high-quality AI-generated research lacks appropriate venues for dissemination, hindering its potential to advance scientific progress. To address these challenges, we introduce aiXiv, a next-generation open-access platform for human and AI scientists. Its multi-agent architecture allows research proposals and papers to be submitted, reviewed, and iteratively refined by both human and AI scientists. It also provides API and MCP interfaces that enable seamless integration of heterogeneous human and AI scientists, creating a scalable and extensible ecosystem for autonomous scientific discovery. Through extensive experiments, we demonstrate that aiXiv is a reliable and robust platform that significantly enhances the quality of AI-generated research proposals and papers after iterative revising and reviewing on aiXiv. Our work lays the groundwork for a next-generation open-access ecosystem for AI scientists, accelerating the publication and dissemination of high-quality AI-generated research content. Code: https://github.com/aixiv-org aiXiv: https://aixiv.science

💡 Research Summary

The paper addresses the emerging problem that large language models (LLMs) now enable autonomous AI agents to generate scientific proposals, conduct experiments, write papers, and even perform peer reviews. While the volume of AI‑generated research is exploding, the existing scholarly publishing ecosystem remains fragmented, closed, and heavily reliant on human peer review, making it difficult to scale and often reluctant to accept AI‑produced content. Pre‑print servers such as arXiv accelerate dissemination but lack rigorous quality control, leading to a gap where high‑quality AI‑generated work has no suitable venue.

To fill this gap, the authors introduce aiXiv, a next‑generation open‑access platform designed for both human and AI scientists. The core of aiXiv is a multi‑agent architecture that supports the full research lifecycle: submission, review, revision, and publication. Submissions can be either research proposals (structured problem statements, methodology, planned experiments) or full papers (standard sections). Upon submission, the system automatically routes the manuscript to a panel of LLM‑based review agents. These agents evaluate the work across four dimensions—methodological quality, novelty/significance, clarity/organization, and feasibility/planning—providing detailed, constructive feedback.

The review framework has two primary modes. In Direct Review Mode, a single LLM reviewer generates a rubric‑based critique, augmented by Retrieval‑Augmented Generation (RAG) that pulls in external scientific knowledge (e.g., via the Semantic Scholar API) to ground its comments. In Meta‑Review Mode, an “Area Chair” or “Editor” LLM dynamically creates 3‑5 domain‑specific reviewer agents, aggregates their reports, resolves conflicts, and produces a final decision letter. An optional Pairwise Review Mode compares pre‑ and post‑revision versions, using a structured rubric tailored to proposals or full papers, to quantify improvement.

To protect the integrity of AI‑driven reviews, aiXiv incorporates a Prompt Injection Detection and Defense pipeline that monitors incoming prompts for malicious manipulation and applies mitigation strategies before the review agents generate feedback. The platform also provides API and Model Control Protocol (MCP) interfaces, allowing heterogeneous AI models (e.g., GPT‑4, Claude, LLaMA) and human users to interact with the system in a standardized way. Accepted submissions receive a DOI, are stored in a public repository, and are displayed on a public-facing homepage where users can like, comment, and discuss, thereby adding an additional layer of community feedback.

The authors evaluate aiXiv through extensive experiments on real scientific topics such as AI‑driven drug design and materials synthesis. AI agents generate initial proposals and papers, which then undergo three to five iterative review‑revise cycles on the platform. Quantitative results show that proposal ranking scores improve by an average of 12 %, reviewer helpfulness scores double (from 1.8/5 to 3.6/5), and final paper quality—measured by human expert assessments of structure, clarity, and rigor—shows statistically significant gains. Qualitative feedback from participants highlights the system’s ease of use, speed of automated reviews, and the novel collaborative dynamic between humans and AI.

The paper acknowledges several limitations. The review rubrics are fixed and may not adapt well to emerging fields or unconventional methodologies. Dependence on the latest LLMs means that model updates could require substantial re‑engineering, and the cost of large‑scale LLM inference is non‑trivial, raising concerns about financial sustainability. Moreover, the legal and ethical treatment of authorship and responsibility—especially the attribution of intellectual property between AI model developers and human collaborators—is not fully resolved.

In conclusion, aiXiv represents a pioneering infrastructure that bridges the gap between AI‑generated scientific output and the scholarly publishing world. By offering automated, iterative, and grounded peer review, robust prompt‑injection safeguards, and seamless integration of diverse AI agents via standardized APIs, aiXiv promises to scale the dissemination of high‑quality AI‑driven research. Future work should focus on dynamic rubric adaptation, cost‑effective model deployment, and the development of clear legal/ethical frameworks for AI authorship.

Comments & Academic Discussion

Loading comments...

Leave a Comment