3DLLM-Mem: Long-Term Spatial-Temporal Memory for Embodied 3D Large Language Model

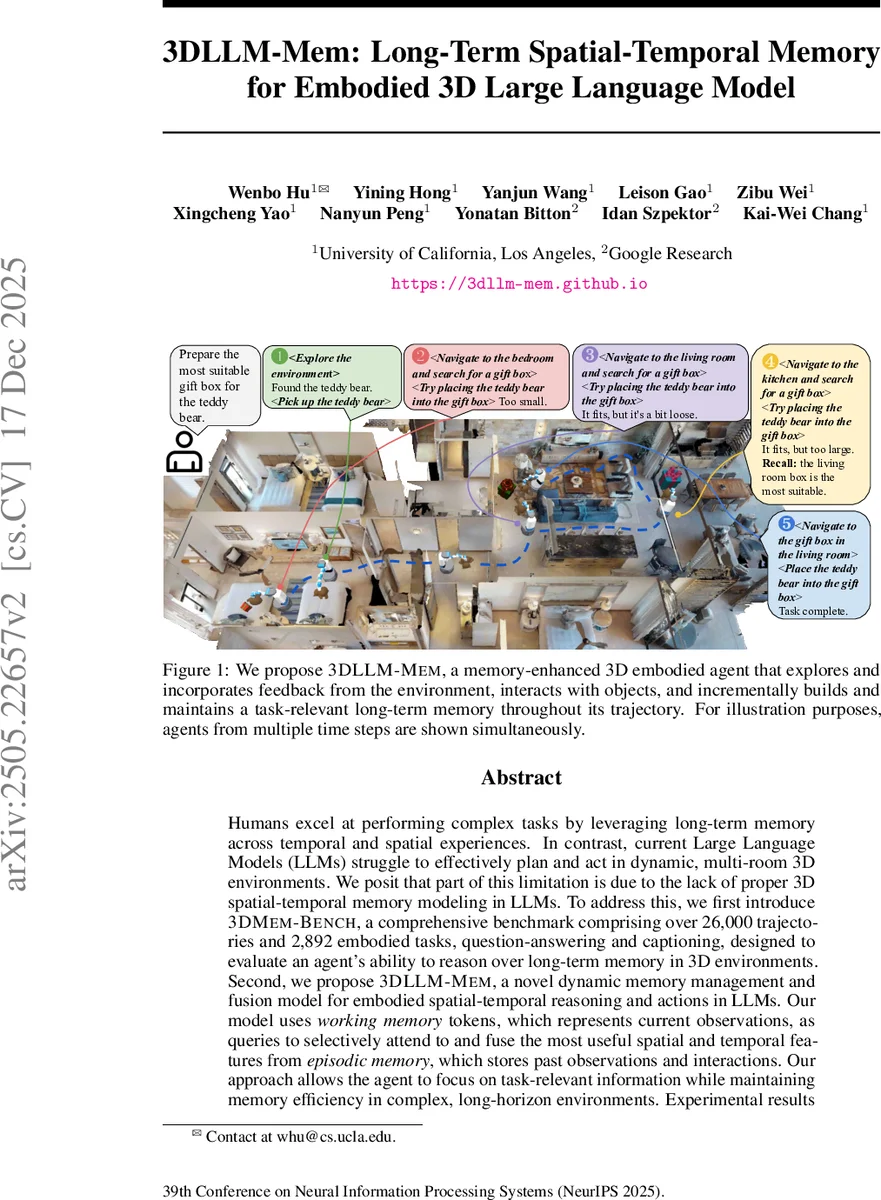

Humans excel at performing complex tasks by leveraging long-term memory across temporal and spatial experiences. In contrast, current Large Language Models (LLMs) struggle to effectively plan and act in dynamic, multi-room 3D environments. We posit that part of this limitation is due to the lack of proper 3D spatial-temporal memory modeling in LLMs. To address this, we first introduce 3DMem-Bench, a comprehensive benchmark comprising over 26,000 trajectories and 2,892 embodied tasks, question-answering and captioning, designed to evaluate an agent’s ability to reason over long-term memory in 3D environments. Second, we propose 3DLLM-Mem, a novel dynamic memory management and fusion model for embodied spatial-temporal reasoning and actions in LLMs. Our model uses working memory tokens, which represents current observations, as queries to selectively attend to and fuse the most useful spatial and temporal features from episodic memory, which stores past observations and interactions. Our approach allows the agent to focus on task-relevant information while maintaining memory efficiency in complex, long-horizon environments. Experimental results demonstrate that 3DLLM-Mem achieves state-of-the-art performance across various tasks, outperforming the strongest baselines by 16.5% in success rate on 3DMem-Bench’s most challenging in-the-wild embodied tasks.

💡 Research Summary

The paper tackles the fundamental gap between human cognition and current embodied AI: the ability to retain and reason over long‑term spatial‑temporal memory in complex 3‑D environments. To this end, the authors introduce two major contributions. First, they present 3DMEM‑Bench, a large‑scale benchmark built on the Habitat‑Sim platform. It comprises 182 indoor scenes (2,602 rooms) derived from the HM3D dataset, enriched with interactive objects from Objaverse. Over 26 000 validated trajectories are generated via LLM‑driven prompting, and 1 860 fine‑grained tasks are curated. The benchmark splits into three evaluation categories: (i) Embodied Tasks that require multi‑room action sequences, (ii) Long‑Term Memory EQA covering spatial reasoning, object navigation, comparative reasoning, layout understanding, and semantic counting, and (iii) Captioning that asks the agent to summarize episodic experiences. Each category is further stratified by difficulty (simple, medium, hard) and by in‑domain versus in‑the‑wild settings, ensuring a thorough assessment of memory capacity, reasoning depth, and generalization.

Second, the authors propose 3DLLM‑Mem, a 3‑D embodied large language model equipped with a dual‑memory architecture and a memory‑fusion module. The architecture consists of:

- Working Memory – a limited‑capacity buffer that holds tokenized current observations (RGB‑D patches, language instructions) and is directly fed into the LLM’s context.

- Episodic Memory – an expandable store of dense 3‑D representations (point clouds, object metadata) accumulated throughout exploration.

During inference, the working‑memory tokens act as queries in a cross‑attention mechanism that selects the most task‑relevant spatio‑temporal features from episodic memory. The selected features are projected, weighted, and merged with the working memory via a memory‑fusion block that respects positional embeddings, temporal ordering, and spatial proximity. This design allows the model to retrieve rich geometric context without exceeding the LLM’s context window, effectively emulating human episodic recall.

Experimental evaluation compares 3DLLM‑Mem against state‑of‑the‑art 3‑D LLMs (e.g., Habitat‑GPT, 3D‑GPT) and memory‑augmented baselines (e.g., MemGPT, Retrieval‑Augmented LLM). Results show that 3DLLM‑Mem achieves a 16.5 % absolute improvement in success rate on the most challenging in‑the‑wild tasks, maintaining a 27.8 % success rate even on hard difficulty levels where other methods drop below 5 %. In EQA, the model reaches >85 % accuracy on comparative and multi‑room layout questions, and in captioning it attains an F1 of 0.73, surpassing baselines by a large margin. Ablation studies confirm that the memory‑fusion module is critical: removing it reduces performance by more than 10 % points across all metrics.

The paper also discusses limitations. The episodic memory currently relies on pre‑computed point‑cloud reconstructions stored locally, which may hinder real‑time scalability on resource‑constrained robots. Moreover, the attention‑based selection can become overly focused on a subset of memory, potentially ignoring other useful cues. Future work is outlined to address these issues through online memory compression, hierarchical memory pruning, and incorporation of additional modalities such as audio or tactile feedback to more closely mimic human memory systems.

In summary, 3DMEM‑Bench provides the community with a rigorous testbed for long‑term spatial‑temporal reasoning, and 3DLLM‑Mem demonstrates that a carefully engineered dual‑memory system with dynamic fusion can substantially close the gap between human‑like memory usage and current embodied AI capabilities.

Comments & Academic Discussion

Loading comments...

Leave a Comment