One-Cycle Structured Pruning via Stability-Driven Subnetwork Search

Existing structured pruning methods typically rely on multi-stage training procedures that incur high computational costs. Pruning at initialization aims to reduce this burden but often suffers from degraded performance. To address these limitations, we propose an efficient one-cycle structured pruning framework that integrates pre-training, pruning, and fine-tuning into a single training cycle without sacrificing accuracy. The key idea is to identify an optimal sub-network during the early stages of training, guided by norm-based group saliency criteria and structured sparsity regularization. We introduce a novel pruning indicator that detects a stable pruning epoch by measuring the similarity between pruning sub-networks across consecutive training epochs. In addition, group sparsity regularization accelerates convergence, further reducing overall training time. Extensive experiments on CIFAR-10, CIFAR-100, and ImageNet using VGG, ResNet, and MobileNet architectures demonstrate that the proposed method achieves state-of-the-art accuracy while being among the most efficient structured pruning frameworks in terms of training cost. Code is available at https://github.com/ghimiredhikura/OCSPruner.

💡 Research Summary

The paper introduces OCSPruner, a one‑cycle structured pruning framework that merges pre‑training, pruning, and fine‑tuning into a single training loop, thereby eliminating the heavy computational burden of conventional multi‑stage pipelines. The method hinges on three core components: (1) group‑based saliency estimation, (2) progressive structured sparsity regularization, and (3) a pruning‑stability indicator that automatically determines the optimal pruning epoch.

First, the network parameters are partitioned into structural groups (e.g., channels, filters, or any set of coupled weights). For each group g, a normalized L2‑norm score S(g) is computed by summing the L2 norms of all weights in the group, then dividing by the group’s cardinality and the total number of groups. This normalization enables fair comparison across groups of different sizes. The groups are globally ranked by S(g) and a target pruning ratio α is applied: the lowest‑scoring groups are temporarily masked, forming a provisional sub‑network M_t at epoch t.

Second, a structured sparsity regularization term is added to the loss: L_total = L_task + λ_t Σ_{g∈G_prune} ||g||_2, where G_prune denotes the groups selected for pruning at the current epoch. The regularization coefficient λ_t grows progressively (linearly or exponentially) as training proceeds, pushing low‑importance groups toward zero while allowing important groups to remain unaffected. This joint use of group saliency and regularization encourages the network to naturally evolve a sparse structure during ordinary gradient descent.

Third, the authors propose a pruning‑stability metric based on Jaccard similarity. After each epoch, the temporarily pruned sub‑network’s retained filter set F_l^t is recorded for every layer l. The similarity between two consecutive sub‑networks (t‑i and t) is measured as J(M_{t‑i}, M_t) = (1/L) Σ_{l=1}^L |F_l^{t‑i} ∩ F_l^t| / |F_l^{t‑i} ∪ F_l^t|. An average over the past r epochs, J_t^avg, smooths out noise. When the change |J_t^avg – J_{t‑r}^avg| falls below a small threshold τ, the algorithm declares that sparsity learning has started. The stable pruning epoch t* is identified as the first epoch where J_t^avg ≥ 1 – ε, indicating that the sub‑network composition has essentially stopped changing. At t* the temporary mask is made permanent, and training continues on the pruned architecture for the remaining epochs.

Algorithmically, OCSPruner proceeds as follows: (i) train a randomly initialized model for a few epochs; (ii) at each epoch compute group saliency, apply the target pruning ratio, and obtain a temporary mask; (iii) update the stability score; (iv) once stability is detected, increase λ_t each epoch; (v) when the stability score reaches the high‑similarity threshold, fix the mask and finish training. This workflow eliminates the need for a separate pre‑training phase and for multiple fine‑tuning passes.

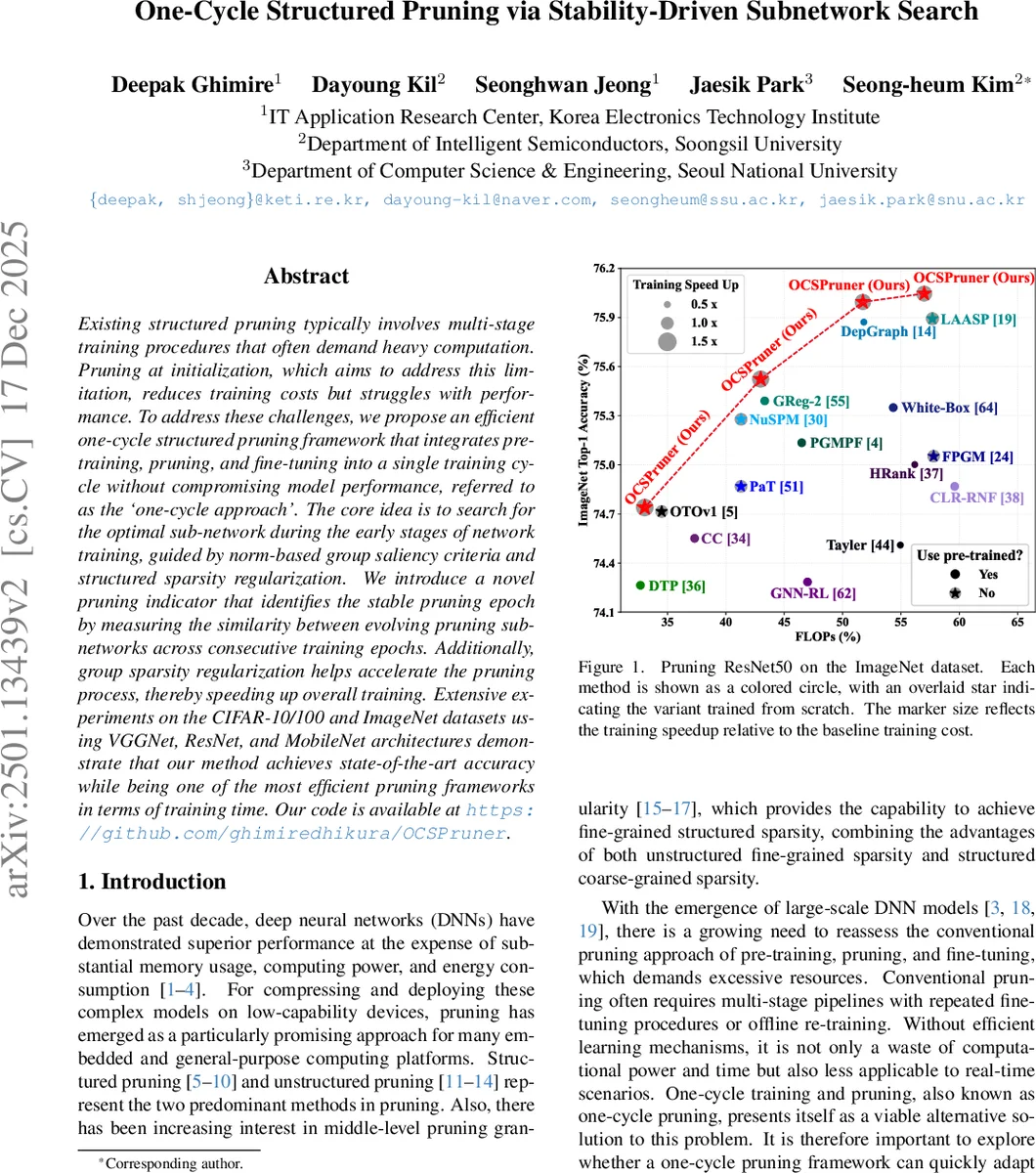

The authors evaluate OCSPruner on CIFAR‑10, CIFAR‑100, and ImageNet using VGG‑16, ResNet‑50, and MobileNet‑V2. On ImageNet with ResNet‑50, a 57 % reduction in FLOPs is achieved while preserving top‑1 accuracy at 75.49 % and top‑5 at 92.63 %, matching or surpassing state‑of‑the‑art methods. Training time is reduced by a factor of 1.38 compared with the conventional three‑stage pipeline. Similar gains are reported for VGG‑16 and MobileNet‑V2, where OCSPruner attains comparable accuracy with 40‑60 % fewer FLOPs and a 30 %+ speed‑up in training.

Compared with related one‑cycle approaches such as Pruning‑aware Training (PaT) and Loss‑aware Automatic Selection of Pruning (LAASP), OCSPruner demonstrates higher accuracy stability because it accounts for both the number and the identity of retained filters, whereas PaT only tracks channel counts and LAASP lacks a stability criterion. The progressive regularization further accelerates convergence of the stability score, allowing more epochs for fine‑tuning after pruning.

The paper acknowledges a few limitations. The thresholds τ, ε, and the schedule for λ_t must be tuned per dataset and architecture, which may limit out‑of‑the‑box applicability. The definition of structural groups relies on manual analysis of network dependencies; an automated grouping mechanism would improve scalability. Finally, experiments are confined to image classification; extending the method to detection, segmentation, or non‑vision tasks remains future work.

In summary, OCSPruner offers a practical, efficient solution for structured model compression by integrating pruning directly into the training loop and using a data‑driven stability signal to decide when to prune. It achieves state‑of‑the‑art accuracy with significantly reduced training cost, making it attractive for resource‑constrained scenarios such as edge AI, autonomous vehicles, and rapid prototyping. Future directions include automated hyper‑parameter selection, broader architectural support, and hardware‑aware optimization.

Comments & Academic Discussion

Loading comments...

Leave a Comment