ComMark: Covert and Robust Black-Box Model Watermarking with Compressed Samples

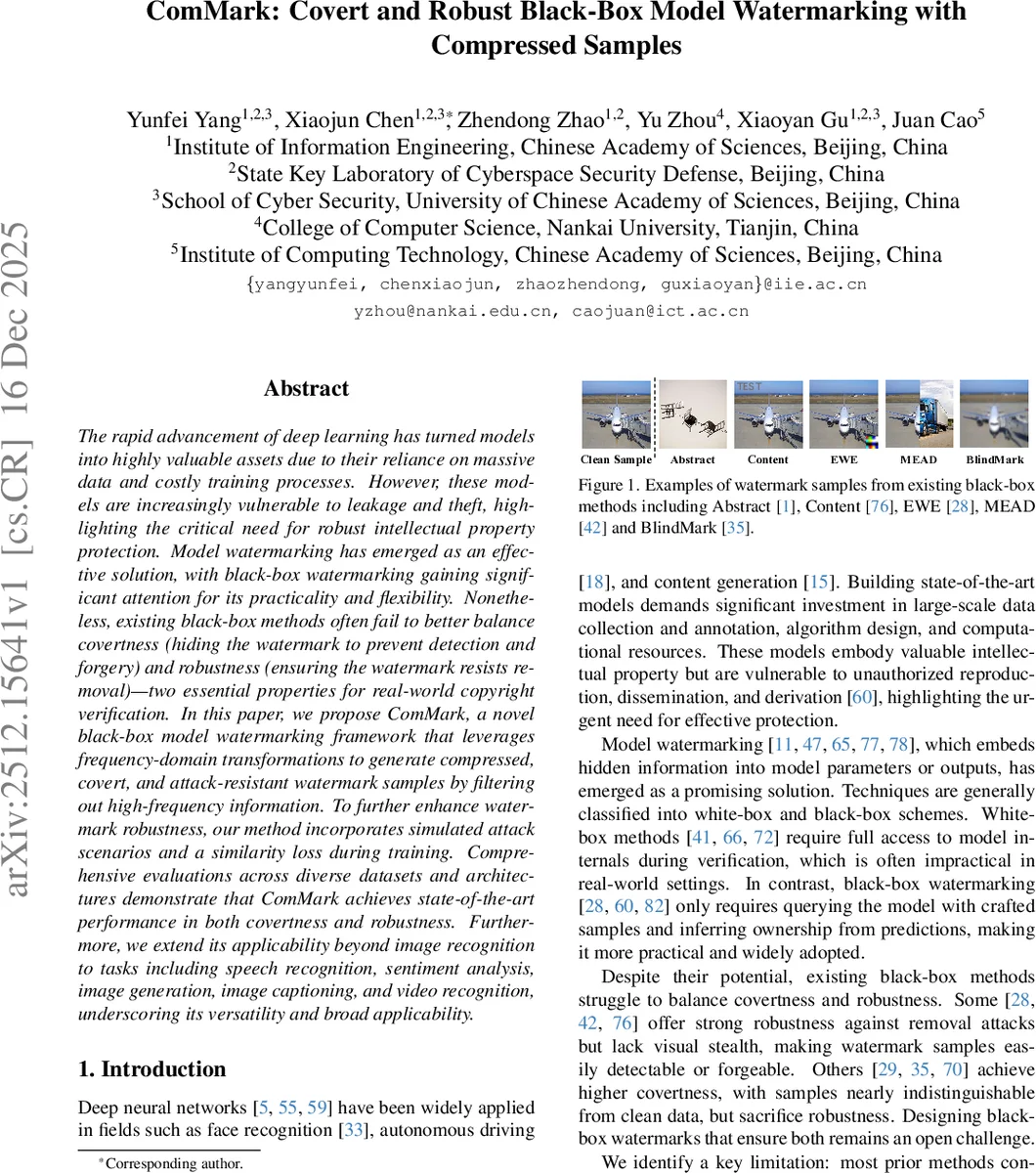

The rapid advancement of deep learning has turned models into highly valuable assets due to their reliance on massive data and costly training processes. However, these models are increasingly vulnerable to leakage and theft, highlighting the critical need for robust intellectual property protection. Model watermarking has emerged as an effective solution, with black-box watermarking gaining significant attention for its practicality and flexibility. Nonetheless, existing black-box methods often fail to better balance covertness (hiding the watermark to prevent detection and forgery) and robustness (ensuring the watermark resists removal)-two essential properties for real-world copyright verification. In this paper, we propose ComMark, a novel black-box model watermarking framework that leverages frequency-domain transformations to generate compressed, covert, and attack-resistant watermark samples by filtering out high-frequency information. To further enhance watermark robustness, our method incorporates simulated attack scenarios and a similarity loss during training. Comprehensive evaluations across diverse datasets and architectures demonstrate that ComMark achieves state-of-the-art performance in both covertness and robustness. Furthermore, we extend its applicability beyond image recognition to tasks including speech recognition, sentiment analysis, image generation, image captioning, and video recognition, underscoring its versatility and broad applicability.

💡 Research Summary

The paper introduces ComMark, a novel black‑box model watermarking framework that leverages frequency‑domain compression to create covert yet robust watermark triggers. Traditional black‑box watermarking methods embed visible patterns or localized pixel modifications, which are either easy to detect or fragile against common preprocessing attacks. ComMark instead transforms clean training samples into the YCbCr color space, splits them into 8×8 blocks, and applies a 2‑D Discrete Cosine Transform (DCT). Using JPEG‑style quantization tables, high‑frequency coefficients are heavily quantized while low‑frequency components are largely preserved. After inverse DCT, the reconstructed images look virtually identical to the originals, but they contain a globally distributed, imperceptible distortion that serves as the watermark trigger.

During model training, a subset of these compressed samples is relabeled with a pre‑selected “watermark target label” and mixed with the original dataset. To improve resilience, the authors simulate ten common preprocessing attacks (cropping, rotation, scaling, Gaussian noise, blur, brightness shift, image quantization, JPEG2000, WEBP, and color quantization) on both clean and watermark data each epoch. The loss function combines four terms: (1) standard cross‑entropy on clean data, (2) cross‑entropy on watermark data to enforce the target label, (3) cross‑entropy on attacked data to maintain performance under perturbations, and (4) a contrastive similarity loss that pulls together features of same‑label samples while pushing apart different‑label ones. The total loss is L = L_pri + αL_wm + βL_attk + γL_sim, with coefficients tuned experimentally.

Ownership verification is performed in a black‑box setting: the defender queries the suspect model with a set of watermark test samples and measures the watermark success rate (Acc_wm). If Acc_wm exceeds a predefined threshold, ownership is asserted. Because the watermark trigger is embedded in the compression behavior rather than explicit pixel patterns, it is extremely hard for an adversary to discover or forge.

Extensive experiments cover image classification (GTSRB, CIFAR‑10/100, VGGFace), speech recognition (LibriSpeech), sentiment analysis (SST‑2), image generation (StyleGAN), image captioning, and video recognition. Results show that ComMark achieves comparable or slightly lower clean‑model accuracy (≤1 % drop) while attaining state‑of‑the‑art watermark success rates (WSR) and dramatically improved covertness (human visual tests and SSIM indicate near‑imperceptibility). Under model extraction attacks (distillation, JBD, Knockoff) and post‑training defenses (fine‑tuning, pruning), both soft‑label and hard‑label settings retain >90 % watermark detection, outperforming prior methods such as Abstract, Content, MEAD, BlindMark, and MAB.

The paper’s contributions are threefold: (1) a frequency‑domain compression‑based watermark sample construction that hides the trigger in global, high‑frequency removal; (2) an attack‑simulation‑driven training pipeline that endows the watermark with strong robustness against a wide range of preprocessing manipulations; (3) a similarity loss that aligns watermark samples in feature space, further strengthening the link to the target label. The authors also release code for reproducibility.

In summary, ComMark demonstrates that leveraging compression artifacts as watermark triggers can simultaneously satisfy the two most demanding requirements of black‑box watermarking—covertness and robustness—across multiple modalities, offering a practical solution for protecting valuable deep learning models in real‑world deployment scenarios.

Comments & Academic Discussion

Loading comments...

Leave a Comment