4D-RaDiff: Latent Diffusion for 4D Radar Point Cloud Generation

Automotive radar has shown promising developments in environment perception due to its cost-effectiveness and robustness in adverse weather conditions. However, the limited availability of annotated radar data poses a significant challenge for advancing radar-based perception systems. To address this limitation, we propose a novel framework to generate 4D radar point clouds for training and evaluating object detectors. Unlike image-based diffusion, our method is designed to consider the sparsity and unique characteristics of radar point clouds by applying diffusion to a latent point cloud representation. Within this latent space, generation is controlled via conditioning at either the object or scene level. The proposed 4D-RaDiff converts unlabeled bounding boxes into high-quality radar annotations and transforms existing LiDAR point cloud data into realistic radar scenes. Experiments demonstrate that incorporating synthetic radar data of 4D-RaDiff as data augmentation method during training consistently improves object detection performance compared to training on real data only. In addition, pre-training on our synthetic data reduces the amount of required annotated radar data by up to 90% while achieving comparable object detection performance.

💡 Research Summary

The paper addresses the critical shortage of annotated 4‑D automotive radar point clouds, which hampers the development of radar‑centric perception systems despite radar’s cost‑effectiveness and robustness in adverse weather. To alleviate this bottleneck, the authors propose 4D‑RaDiff, a novel latent diffusion framework that generates realistic radar point clouds—including Doppler velocity and radar cross‑section (RCS) attributes—directly from either 3‑D bounding‑box annotations or LiDAR point clouds.

Core Architecture

- Point‑based VAE: Radar points (xyz + Doppler + RCS) are encoded into a regularized latent point cloud (z \in \mathbb{R}^{M \times d_z}). The VAE is trained with a reconstruction loss and a KL‑divergence term, ensuring that the latent space preserves the geometric and physical statistics of the original data while drastically reducing dimensionality.

- Latent Diffusion Model (LDM): Using Sparse Point‑Voxel Diffusion (SPVD) as the backbone, a denoising diffusion probabilistic model operates on the latent representations. The diffusion loss follows the standard DDPM formulation, but the conditioning encoder (\tau_\theta) is trained separately for each conditioning modality.

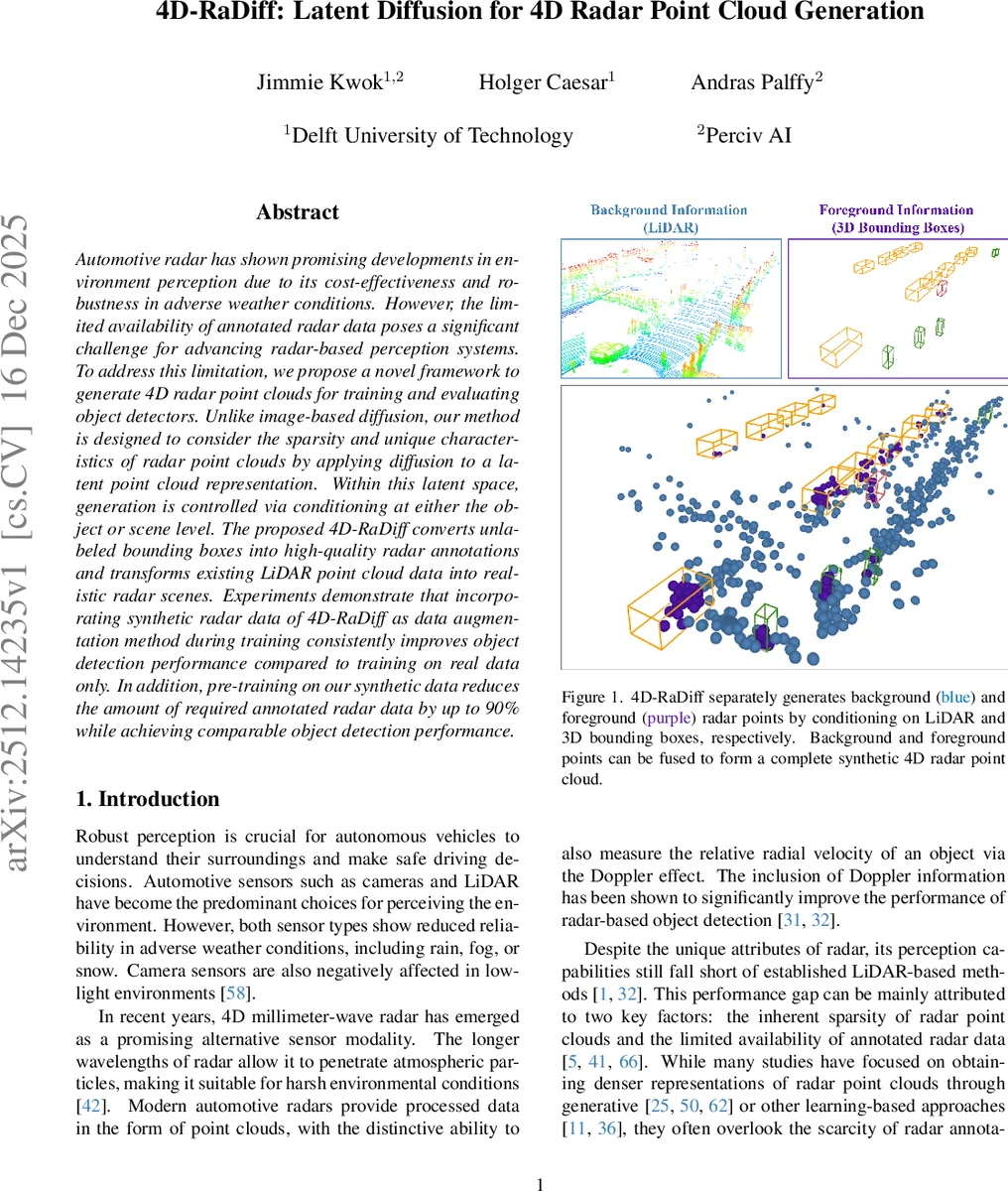

Foreground vs. Background Generation

- Foreground: Conditioned on a set of 3‑D bounding boxes (position, size, yaw, and 2‑D velocity). The authors extend LayoutDiffusion from 2‑D to 3‑D, embedding each object’s geometry and class, adding a global “scene” token, and feeding the resulting layout embeddings into cross‑attention layers of the LDM. This enables the model to synthesize radar points that respect object dynamics, yielding accurate Doppler values and realistic RCS distributions.

- Background: Conditioned solely on the LiDAR point cloud. LiDAR provides a dense, accurate description of static surroundings, making it an ideal proxy for background radar. PointPillars converts the LiDAR scan into a pillar‑based feature map, which serves as the cross‑attention input for the background LDM. This design allows the framework to augment datasets that contain only LiDAR data, effectively creating synthetic radar for the entire scene.

Training and Implementation

Both foreground and background models are trained independently on a single RTX 4090 GPU. The VAE encoder/decoder are frozen during diffusion training. The authors adopt standard diffusion hyper‑parameters and train for a sufficient number of timesteps to capture the complex multimodal distribution of radar features.

Experimental Evaluation

Two publicly available datasets are used: View‑of‑Delft (VOD) and TruckScenes. Both provide synchronized LiDAR, camera, and 4‑D radar data with 3‑D object annotations. Since no prior work generates full radar point clouds with Doppler and RCS, the authors evaluate the utility of the synthetic data by measuring its impact on downstream 3‑D object detection.

- Data Augmentation: Synthetic radar points are combined with real data (GT‑Sampling baseline). Detectors (CenterPoint, PointPillars, PillarNet) trained on the augmented set consistently achieve higher average precision (AP) than those trained on real data alone.

- Pre‑training: Models are first pre‑trained on purely synthetic radar data and then fine‑tuned on a small fraction of real data. Remarkably, using only 10 % of the original annotated radar frames (i.e., a 90 % reduction) yields detection performance comparable to training on the full real dataset.

Qualitative results show that foreground points align well with annotated objects, preserving class‑specific Doppler signatures, while background points faithfully reproduce clutter and static reflections.

Comparison to Prior Work

Existing radar generation methods either (a) operate on radar tensors and then convert to sparse point clouds, losing Doppler/RCS fidelity, or (b) rely on LiDAR supervision but generate LiDAR‑like points without radar‑specific attributes. Sem‑RaDiff, for example, uses a hybrid tensor‑point representation but cannot synthesize Doppler or RCS. 4D‑RaDiff’s point‑based latent diffusion overcomes these limitations by directly modeling the full radar feature vector in a compact latent space and by separating foreground and background distributions.

Key Contributions

- Introduction of the first latent diffusion model tailored to 4‑D radar point clouds, preserving Doppler and RCS.

- A dual‑pipeline generation strategy that treats foreground objects and background clutter separately, each conditioned on appropriate modalities.

- Demonstration that synthetic radar data can serve as effective augmentation and pre‑training material, dramatically reducing the need for costly manual annotations.

Implications

The work paves the way for scalable creation of high‑quality radar datasets, which is essential for robust perception in adverse weather where cameras and LiDAR falter. By enabling synthetic radar generation from readily available LiDAR scans or bounding‑box annotations, 4D‑RaDiff can accelerate research and development of radar‑centric autonomous driving stacks, facilitating safer deployment in real‑world conditions.

Comments & Academic Discussion

Loading comments...

Leave a Comment