Unleashing the Power of Image-Tabular Self-Supervised Learning via Breaking Cross-Tabular Barriers

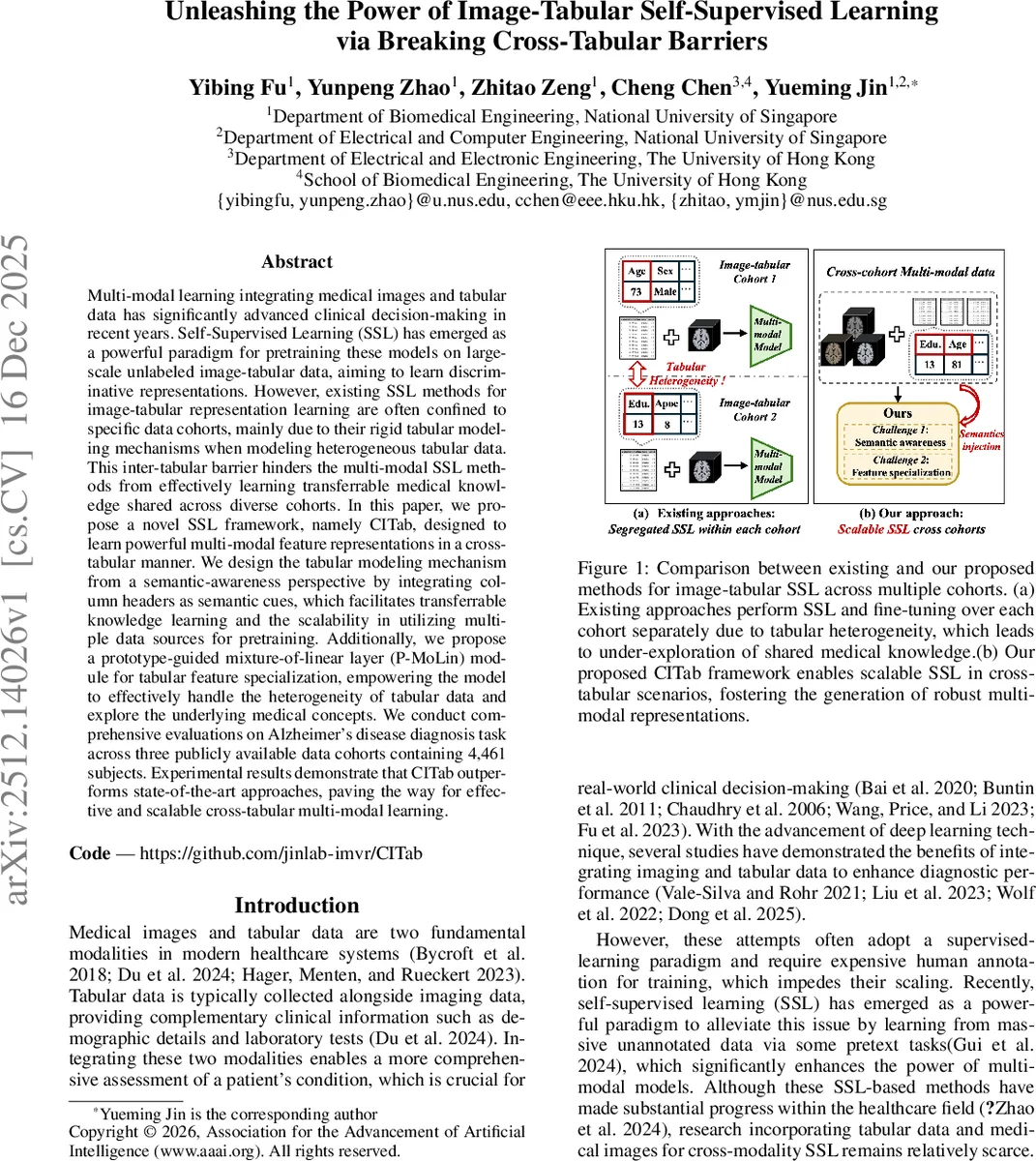

Multi-modal learning integrating medical images and tabular data has significantly advanced clinical decision-making in recent years. Self-Supervised Learning (SSL) has emerged as a powerful paradigm for pretraining these models on large-scale unlabeled image-tabular data, aiming to learn discriminative representations. However, existing SSL methods for image-tabular representation learning are often confined to specific data cohorts, mainly due to their rigid tabular modeling mechanisms when modeling heterogeneous tabular data. This inter-tabular barrier hinders the multi-modal SSL methods from effectively learning transferrable medical knowledge shared across diverse cohorts. In this paper, we propose a novel SSL framework, namely CITab, designed to learn powerful multi-modal feature representations in a cross-tabular manner. We design the tabular modeling mechanism from a semantic-awareness perspective by integrating column headers as semantic cues, which facilitates transferrable knowledge learning and the scalability in utilizing multiple data sources for pretraining. Additionally, we propose a prototype-guided mixture-of-linear layer (P-MoLin) module for tabular feature specialization, empowering the model to effectively handle the heterogeneity of tabular data and explore the underlying medical concepts. We conduct comprehensive evaluations on Alzheimer’s disease diagnosis task across three publicly available data cohorts containing 4,461 subjects. Experimental results demonstrate that CITab outperforms state-of-the-art approaches, paving the way for effective and scalable cross-tabular multi-modal learning.

💡 Research Summary

In the rapidly evolving landscape of medical artificial intelligence, the integration of multi-modal data—specifically medical images and tabular clinical records—has become a cornerstone for enhancing diagnostic precision. While Self-Supervised Learning (SSL) has demonstrated immense potential in pretraining models using large-scale unlabeled datasets, a significant bottleneck remains: the “inter-tabular barrier.” Existing SSL frameworks often struggle with heterogeneous tabular data, where different patient cohorts present varying column structures and features. This limitation prevents models from effectively aggregating knowledge from diverse, large-scale data sources, confining their utility to specific, isolated datasets and hindering the development of truly generalized medical AI.

To address this fundamental challenge, this paper introduces CITab, a groundbreaking SSL framework designed for robust cross-tabular multi-modal representation learning. The core innovation of CITab lies in its ability to break the structural barriers between disparate datasets through two primary mechanisms. First, the authors propose a semantic-aware modeling approach. By treating column headers as essential semantic cues, CITab moves beyond simple numerical processing to understand the underlying context of the data. This allows the model to recognize and transfer knowledge between different cohorts as long as the semantic meaning of the columns remains consistent, thereby enabling unprecedented scalability in multi-source pretraining.

Second, the paper introduces the Prototype-guided Mixture-of-Linear layer (P-MoLin). This module is specifically engineered to tackle the inherent heterogeneity of tabular features. By utilizing a prototype-guided mechanism, P-MoLin enables feature specialization, allowing the model to navigate the complexities of varying tabular structures and effectively capture latent medical concepts. This architectural innovation ensures that the model can specialize its learning process based on the underlying patterns within the data, regardless of the structural discrepancies between cohorts.

The efficacy of CITab was rigorously evaluated through an Alzheimer’s disease diagnosis task, utilizing three distinct publicly available data cohorts comprising 4,461 subjects. The experimental results are compelling, demonstrating that CITab significantly outperforms current state-of-the-art (SOTA) approaches. By successfully bridging the gap between heterogeneous tabular structures, CITab provides a scalable and powerful pathway for multi-modal SSL, paving the way for the development of more generalized and robust medical AI systems capable of learning from the vast, fragmented landscape of global clinical data.

Comments & Academic Discussion

Loading comments...

Leave a Comment