Mirror Skin: In Situ Visualization of Robot Touch Intent on Robotic Skin

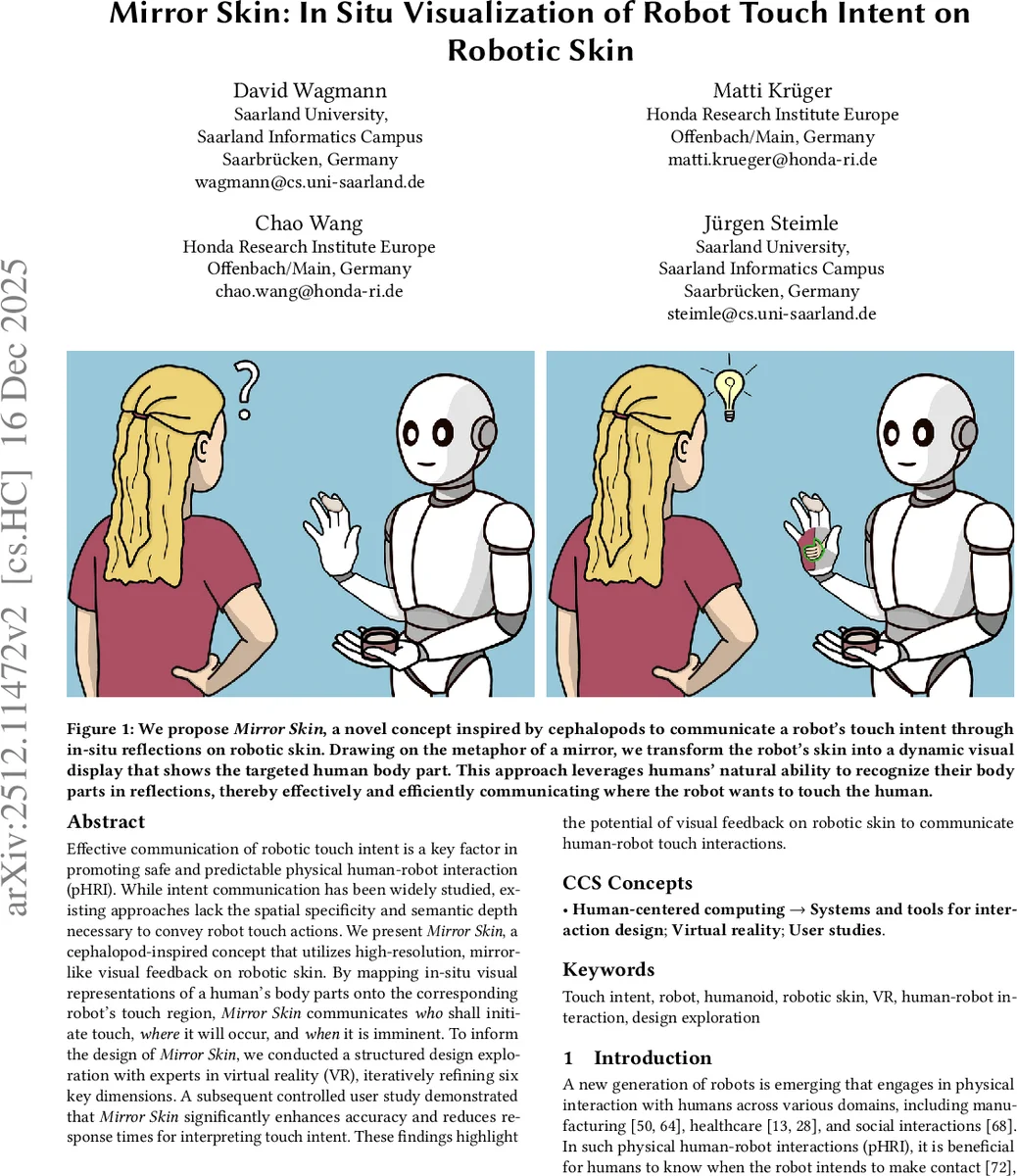

Effective communication of robotic touch intent is a key factor in promoting safe and predictable physical human-robot interaction (pHRI). While intent communication has been widely studied, existing approaches lack the spatial specificity and semantic depth necessary to convey robot touch actions. We present Mirror Skin, a cephalopod-inspired concept that utilizes high-resolution, mirror-like visual feedback on robotic skin. By mapping in-situ visual representations of a human’s body parts onto the corresponding robot’s touch region, Mirror Skin communicates who shall initiate touch, where it will occur, and when it is imminent. To inform the design of Mirror Skin, we conducted a structured design exploration with experts in virtual reality (VR), iteratively refining six key dimensions. A subsequent controlled user study demonstrated that Mirror Skin significantly enhances accuracy and reduces response times for interpreting touch intent. These findings highlight the potential of visual feedback on robotic skin to communicate human-robot touch interactions.

💡 Research Summary

The paper addresses a critical gap in physical human‑robot interaction (pHRI): the lack of precise, intuitive communication of a robot’s touch intent. Existing approaches rely mainly on verbal cues, gestures, or gaze, which convey only high‑level intent and omit essential spatial and initiator information. Inspired by cephalopods’ ability to change skin color and texture with high spatial and temporal resolution, the authors propose “Mirror Skin,” a concept that turns a robot’s high‑resolution electronic skin into a dynamic mirror. By projecting a live visual representation of the human body part that will be touched onto the robot’s contacting surface, Mirror Skin simultaneously conveys three pieces of information: (1) which human body part is involved, (2) which robot body part will make contact, and (3) who will initiate the touch (human or robot).

To refine this concept, the authors conducted a structured design exploration in virtual reality (VR) with seven VR experts. They identified six visualization dimensions that shape the user experience: Target Encoding (direct mirror, outline/silhouette, texture & color, symbolic), Environmental Encoding (full environment, grayscale, vignette, blur, no background), Spatial Encoding (how the mirror image moves with relative pose), Initiator Encoding (human vs. robot portrait), Saliency (visual emphasis techniques), and Transition Effects (animation timing). Iterative prototyping within each dimension produced a set of variants, which were evaluated for clarity, salience, and cognitive load. The most effective combination turned out to be a direct live mirror with a silhouette outline, a blurred background, dynamic spatial mapping, and an initiator portrait overlay.

The final design was tested in a controlled user study (N = 12) conducted in VR. Participants were asked to interpret touch intent in two conditions: pre‑motion (before the robot moves) and in‑motion (while the robot is moving). Performance was compared against a baseline that used conventional robot gestures and gaze cues. Results showed a substantial improvement: accuracy increased from 69 % (baseline) to 92 % with Mirror Skin, and response times dropped from an average of 1,170 ms to 820 ms, a reduction of roughly 30 %. Subjective ratings also favored Mirror Skin, with participants reporting higher intuitiveness and lower mental effort, especially appreciating the portrait cue that clarified who should initiate contact.

The contributions of the work are threefold: (1) introducing a novel, biologically inspired method for rich, body‑centric visual communication of touch intent directly on robotic skin; (2) providing a systematic design framework across six key visualization dimensions, validated through expert feedback; and (3) empirically demonstrating that Mirror Skin outperforms established non‑verbal intent cues in both accuracy and speed for pre‑motion and in‑motion touch scenarios.

Limitations include the current implementation being confined to a VR simulation; real‑world deployment will need to address latency, display resolution, power consumption, and robustness of the skin hardware. Moreover, cultural differences in self‑recognition may affect the generalizability of the mirror metaphor. Future work will integrate high‑resolution electronic skin on physical robots, optimize real‑time video mapping pipelines, and evaluate long‑term safety and trust in diverse user populations.

Comments & Academic Discussion

Loading comments...

Leave a Comment