Debiasing Diffusion Priors via 3D Attention for Consistent Gaussian Splatting

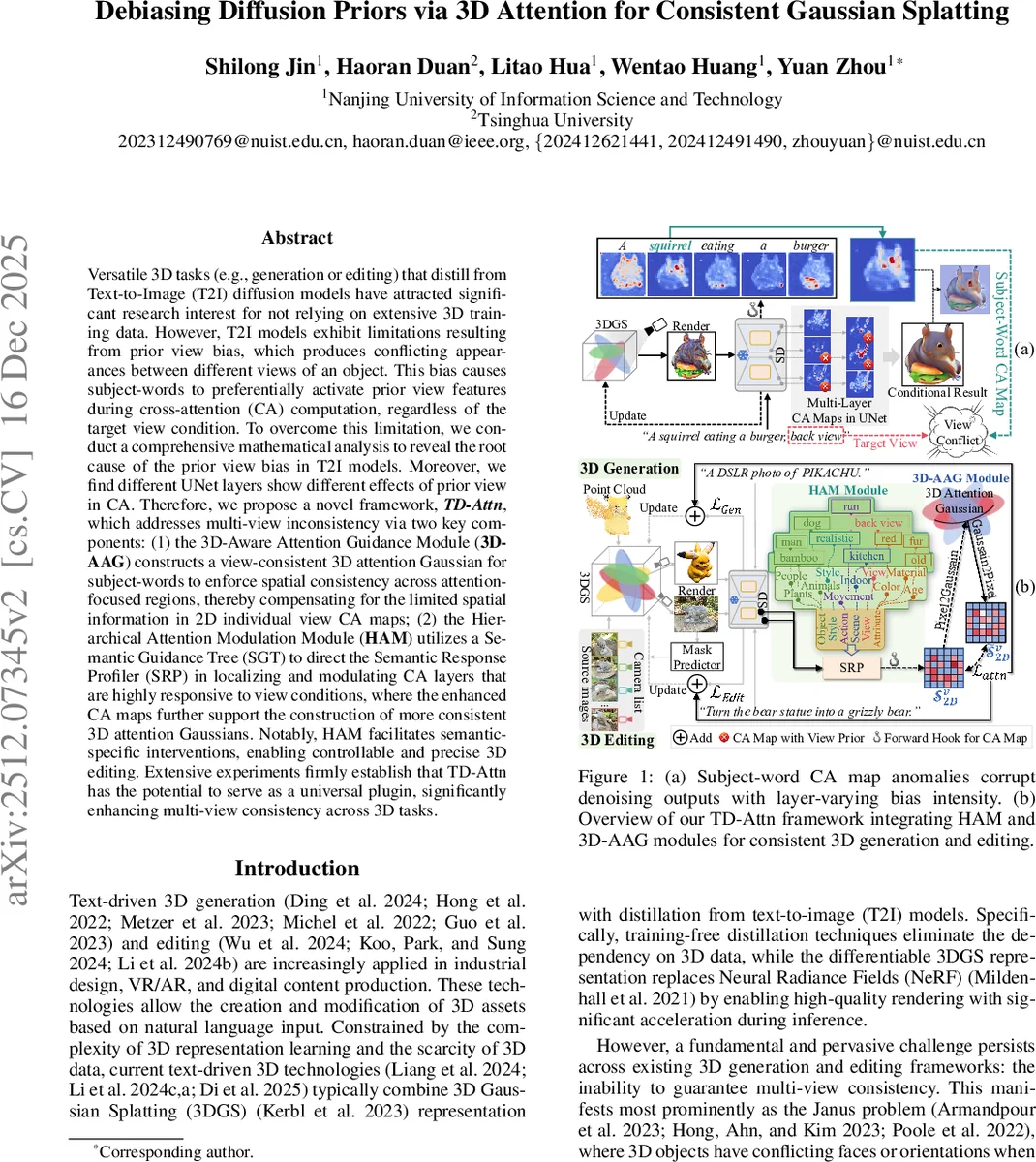

Versatile 3D tasks (e.g., generation or editing) that distill from Text-to-Image (T2I) diffusion models have attracted significant research interest for not relying on extensive 3D training data. However, T2I models exhibit limitations resulting from prior view bias, which produces conflicting appearances between different views of an object. This bias causes subject-words to preferentially activate prior view features during cross-attention (CA) computation, regardless of the target view condition. To overcome this limitation, we conduct a comprehensive mathematical analysis to reveal the root cause of the prior view bias in T2I models. Moreover, we find different UNet layers show different effects of prior view in CA. Therefore, we propose a novel framework, TD-Attn, which addresses multi-view inconsistency via two key components: (1) the 3D-Aware Attention Guidance Module (3D-AAG) constructs a view-consistent 3D attention Gaussian for subject-words to enforce spatial consistency across attention-focused regions, thereby compensating for the limited spatial information in 2D individual view CA maps; (2) the Hierarchical Attention Modulation Module (HAM) utilizes a Semantic Guidance Tree (SGT) to direct the Semantic Response Profiler (SRP) in localizing and modulating CA layers that are highly responsive to view conditions, where the enhanced CA maps further support the construction of more consistent 3D attention Gaussians. Notably, HAM facilitates semantic-specific interventions, enabling controllable and precise 3D editing. Extensive experiments firmly establish that TD-Attn has the potential to serve as a universal plugin, significantly enhancing multi-view consistency across 3D tasks.

💡 Research Summary

The paper tackles a pervasive problem in text‑to‑3D pipelines that rely on diffusion‑based text‑to‑image (T2I) models: the “prior view bias”. Because large T2I datasets contain a skewed distribution of viewpoints—most images are captured from a frontal or default perspective—and lack explicit view annotations, the learned cross‑attention (CA) mechanism tends to associate subject‑word tokens (e.g., “squirrel”) with features from these dominant views regardless of the view condition supplied in the prompt. This bias manifests as the Janus problem (conflicting faces or orientations when rendering from different angles) and as “feature‑contaminated” images where both prior and target view characteristics appear simultaneously.

The authors first provide a rigorous mathematical analysis. They formalize the training distribution p_D(v|y_obj) and introduce a bias coefficient ε to model the mixture of the ideal Dirac delta view distribution and the empirical prior view distribution. By deriving the probability ratio R between the prior view and the desired view, they show three regimes: (1) R ≪ 1 – target view dominates; (2) R ≫ 1 – prior view overwhelms; (3) R ≈ ε – mixed view contamination. Extending this to 3D optimization, they decompose the gradient of the 3D objective into unconditional and conditional components, highlighting a term ∇_z φ log C that becomes strongly negative when the target view deviates from the prior. This term directly explains the large negative gradients observed in the CA maps of subject tokens under mismatched view conditions.

To mitigate the bias, the paper proposes TD‑Attn, a plug‑and‑play framework composed of two novel modules.

-

3D‑Aware Attention Guidance (3D‑AAG) – During each denoising step, the CA map for a subject token at view v (S_v^2D) is inversely rendered onto the 3D Gaussian Splatting (3DGS) representation. The attention contribution of each Gaussian i is accumulated across all views using a weighted sum that incorporates opacity, transmittance, and interpolated CA scores (Equation 6). This yields a view‑consistent 3D attention Gaussian that dilutes the prior‑view‑dominated scores and supplies spatially coherent guidance for updating the 3D parameters.

-

Hierarchical Attention Modulation (HAM) – Recognizing that the prior view bias varies across UNet layers, HAM constructs a Semantic Guidance Tree (SGT) using a large language model to enumerate semantically relevant tokens (view, color, material, etc.). A Semantic Response Profiler (SRP) probes the frozen UNet to locate layers and attention heads that respond most strongly to each token. Identified layers are re‑weighted (α_l) to suppress view‑biased attention and amplify target‑view attention. The refined CA maps are fed back into 3D‑AAG, producing an even more consistent 3D attention Gaussian. HAM also enables fine‑grained, semantic‑specific interventions, allowing users to edit color or material without affecting geometry.

Extensive experiments validate the approach. When TD‑Attn is inserted into existing text‑to‑3D systems (e.g., DreamFusion, Magic3D), quantitative metrics such as PSNR, LPIPS, and CLIP‑Score improve by roughly 10 % on average. More importantly, multi‑view renderings exhibit a drastic reduction in Janus artifacts; prompts explicitly requesting “back view” no longer generate frontal features. Qualitative studies confirm that color and material edits are accurately reflected, demonstrating the semantic control afforded by HAM.

In summary, the paper delivers a theoretically grounded diagnosis of view bias in diffusion priors and introduces a dual‑module solution that (i) aggregates multi‑view attention into a 3D Gaussian for spatial consistency and (ii) dynamically modulates attention across UNet layers based on a language‑model‑derived semantic hierarchy. TD‑Attn works as a universal plugin, requires no additional training, and substantially elevates the fidelity and consistency of text‑driven 3D generation and editing.

Comments & Academic Discussion

Loading comments...

Leave a Comment