V-Warper: Appearance-Consistent Video Diffusion Personalization via Value Warping

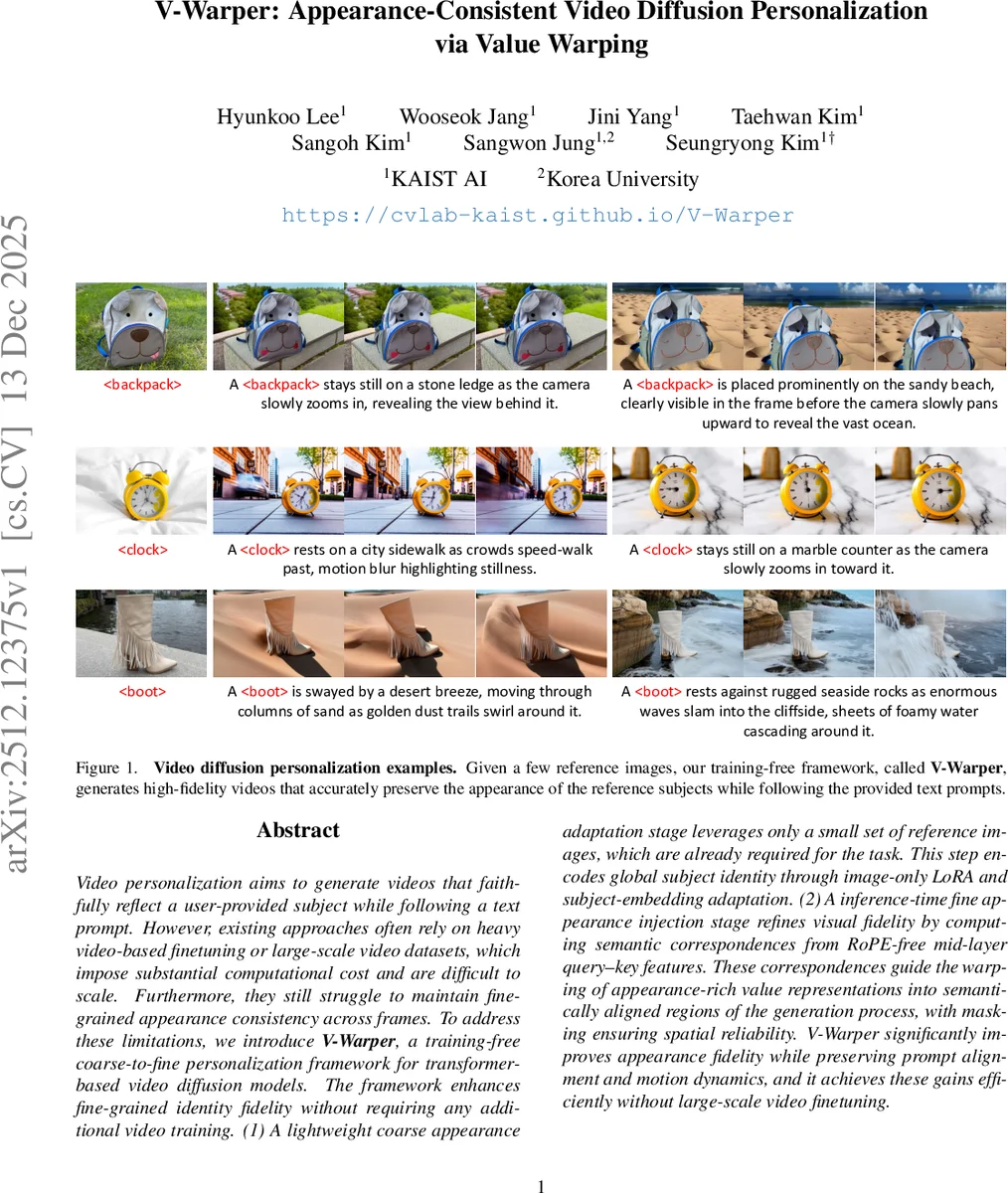

Video personalization aims to generate videos that faithfully reflect a user-provided subject while following a text prompt. However, existing approaches often rely on heavy video-based finetuning or large-scale video datasets, which impose substantial computational cost and are difficult to scale. Furthermore, they still struggle to maintain fine-grained appearance consistency across frames. To address these limitations, we introduce V-Warper, a training-free coarse-to-fine personalization framework for transformer-based video diffusion models. The framework enhances fine-grained identity fidelity without requiring any additional video training. (1) A lightweight coarse appearance adaptation stage leverages only a small set of reference images, which are already required for the task. This step encodes global subject identity through image-only LoRA and subject-embedding adaptation. (2) A inference-time fine appearance injection stage refines visual fidelity by computing semantic correspondences from RoPE-free mid-layer query–key features. These correspondences guide the warping of appearance-rich value representations into semantically aligned regions of the generation process, with masking ensuring spatial reliability. V-Warper significantly improves appearance fidelity while preserving prompt alignment and motion dynamics, and it achieves these gains efficiently without large-scale video finetuning.

💡 Research Summary

The paper “V-Warper: Appearance-Consistent Video Diffusion Personalization via Value Warping” addresses the challenge of video personalization, which aims to generate videos that faithfully depict a user-provided subject (e.g., a specific backpack, clock, or dog) while adhering to a textual prompt. Existing methods often rely on computationally expensive video fine-tuning or large-scale video datasets, struggle to scale, and frequently fail to maintain fine-grained appearance consistency across frames, leading to identity drift or temporal instability.

To overcome these limitations, the authors propose V-Warper, a novel, training-free, coarse-to-fine personalization framework designed for transformer-based video diffusion models (specifically Multi-Modal Diffusion Transformers, or MM-DiTs). The core innovation lies in enhancing identity fidelity without any additional video training, achieved through a two-stage process.

The first stage is Coarse Appearance Adaptation. This lightweight stage uses only the small set of reference images required for the task. It trains a learnable subject token and applies Low-Rank Adaptation (LoRA) modules to the model’s attention layers to encode the global identity of the subject. Critically, to preserve the model’s pre-trained temporal priors and motion dynamics, LoRA is applied only to the Key (K), Value (V), and Output (O) projections, while the Query (Q) projection is frozen. This design is based on the observation that updating the Query can suppress natural motion, whereas updating K/V/O successfully incorporates appearance without disrupting movement aligned with the text prompt.

The second and most innovative stage is Fine Appearance Injection, performed entirely at inference time. This stage refines visual fidelity by injecting high-frequency appearance details directly from the reference image into the generation process. Its success hinges on a key technical analysis: the discovery of reliable semantic correspondences within the video DiT’s internal representations. The authors systematically evaluate different feature types—intermediate features, query-key features, and RoPE-free query-key features—across all model layers. They find that RoPE-free query-key features from mid-level layers (specifically layer 12) provide the most accurate semantic alignment. Rotary Position Embeddings (RoPE) introduce a strong location-dependent bias that hinders pure semantic matching; removing them reveals the model’s inherent ability to relate semantically similar parts across different spatial locations.

Using these computed correspondence maps, the framework then performs Value Warping. Appearance-rich “Value” features extracted from the reference image branch are warped into semantically aligned regions of the generation branch. This ensures that fine details like texture and color are placed precisely where they belong in the generated video frame. A masking mechanism is employed to restrict this injection to reliable, subject-relevant areas, ensuring spatial accuracy and preventing artifacts.

Through extensive quantitative metrics (e.g., CLIP-I, DINO) and human evaluations, V-Warper demonstrates state-of-the-art performance in appearance fidelity. It significantly outperforms existing methods in preserving subject identity while simultaneously maintaining strong text alignment and natural motion dynamics. The work makes several key contributions: 1) introducing an efficient training-free framework for high-fidelity video personalization, 2) providing a systematic analysis revealing that video DiTs encode powerful semantic correspondences in their RoPE-free mid-layer features, and 3) demonstrating that value warping based on these correspondences is a highly effective paradigm for precise, detail-preserving appearance transfer in video generation.

Comments & Academic Discussion

Loading comments...

Leave a Comment