Fine-Grained Zero-Shot Learning with Attribute-Centric Representations

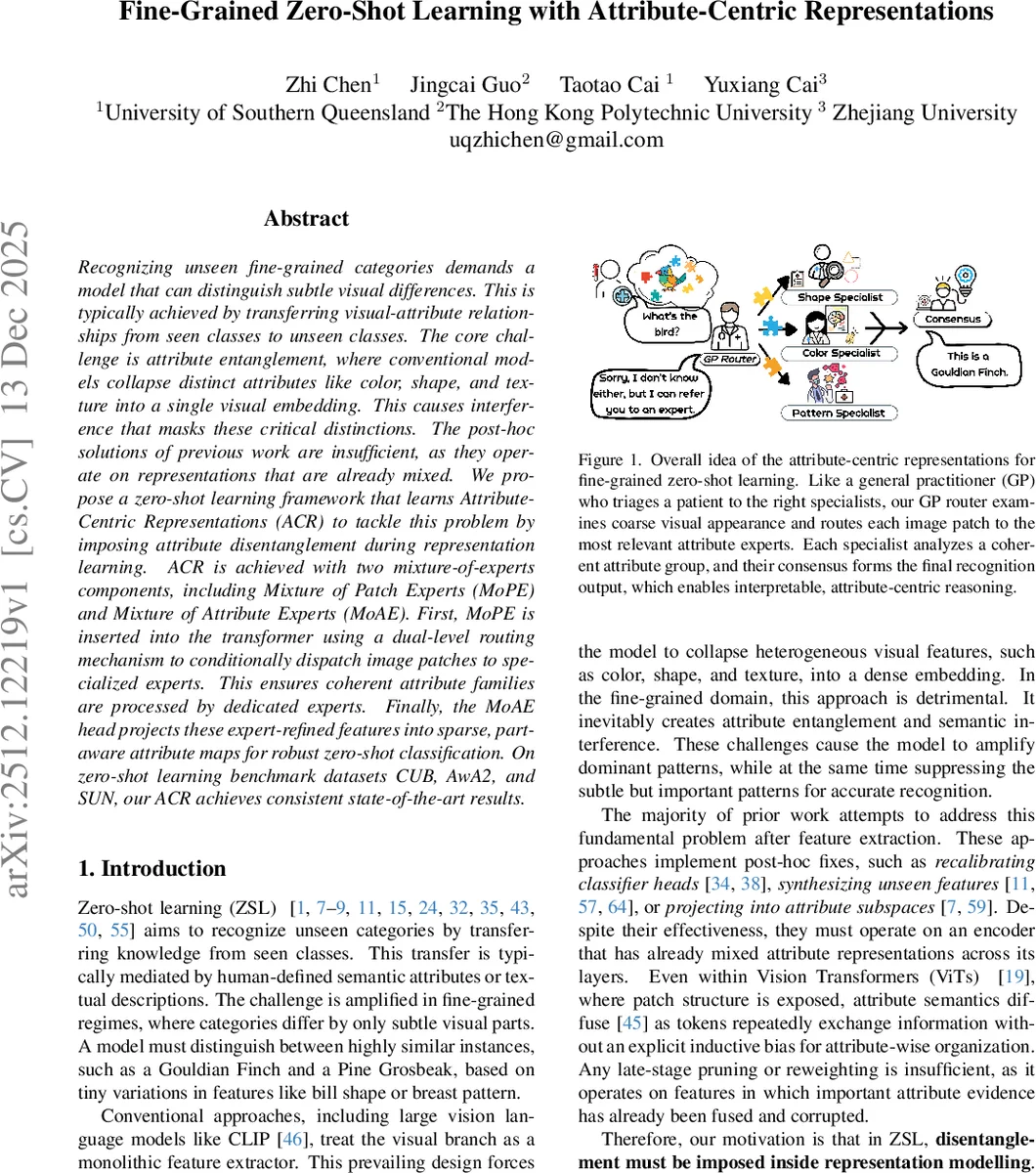

Recognizing unseen fine-grained categories demands a model that can distinguish subtle visual differences. This is typically achieved by transferring visual-attribute relationships from seen classes to unseen classes. The core challenge is attribute entanglement, where conventional models collapse distinct attributes like color, shape, and texture into a single visual embedding. This causes interference that masks these critical distinctions. The post-hoc solutions of previous work are insufficient, as they operate on representations that are already mixed. We propose a zero-shot learning framework that learns AttributeCentric Representations (ACR) to tackle this problem by imposing attribute disentanglement during representation learning. ACR is achieved with two mixture-of-experts components, including Mixture of Patch Experts (MoPE) and Mixture of Attribute Experts (MoAE). First, MoPE is inserted into the transformer using a dual-level routing mechanism to conditionally dispatch image patches to specialized experts. This ensures coherent attribute families are processed by dedicated experts. Finally, the MoAE head projects these expert-refined features into sparse, partaware attribute maps for robust zero-shot classification. On zero-shot learning benchmark datasets CUB, AwA2, and SUN, our ACR achieves consistent state-of-the-art results.

💡 Research Summary

This paper introduces a novel framework termed Attribute-Centric Representations (ACR) to address a fundamental challenge in fine-grained zero-shot learning (ZSL): attribute entanglement. In fine-grained recognition, categories differ by subtle visual distinctions (e.g., bill shape, breast pattern). Conventional models, including large vision-language models like CLIP, typically process an image through a monolithic feature extractor, collapsing distinct attributes such as color, shape, and texture into a single, dense visual embedding. This entanglement causes semantic interference, where dominant features can mask subtle but critical cues, severely hindering the transfer of knowledge from seen to unseen classes. Prior works often apply post-hoc corrections on these already-mixed representations, which is inherently limited.

The core thesis of ACR is that attribute disentanglement must be enforced during the representation learning process itself. The proposed framework operationalizes this by integrating a semantically grounded Mixture-of-Experts (MoE) architecture into a Vision Transformer (ViT). ACR consists of two primary components: Mixture of Patch Experts (MoPE) and Mixture of Attribute Experts (MoAE).

MoPE is inserted as a lightweight adapter between the self-attention and feed-forward layers of each ViT block. It employs a novel dual-level routing mechanism. First, an instance-level router examines the global image context from the

Comments & Academic Discussion

Loading comments...

Leave a Comment