Angle-Optimized Partial Disentanglement for Multimodal Emotion Recognition in Conversation

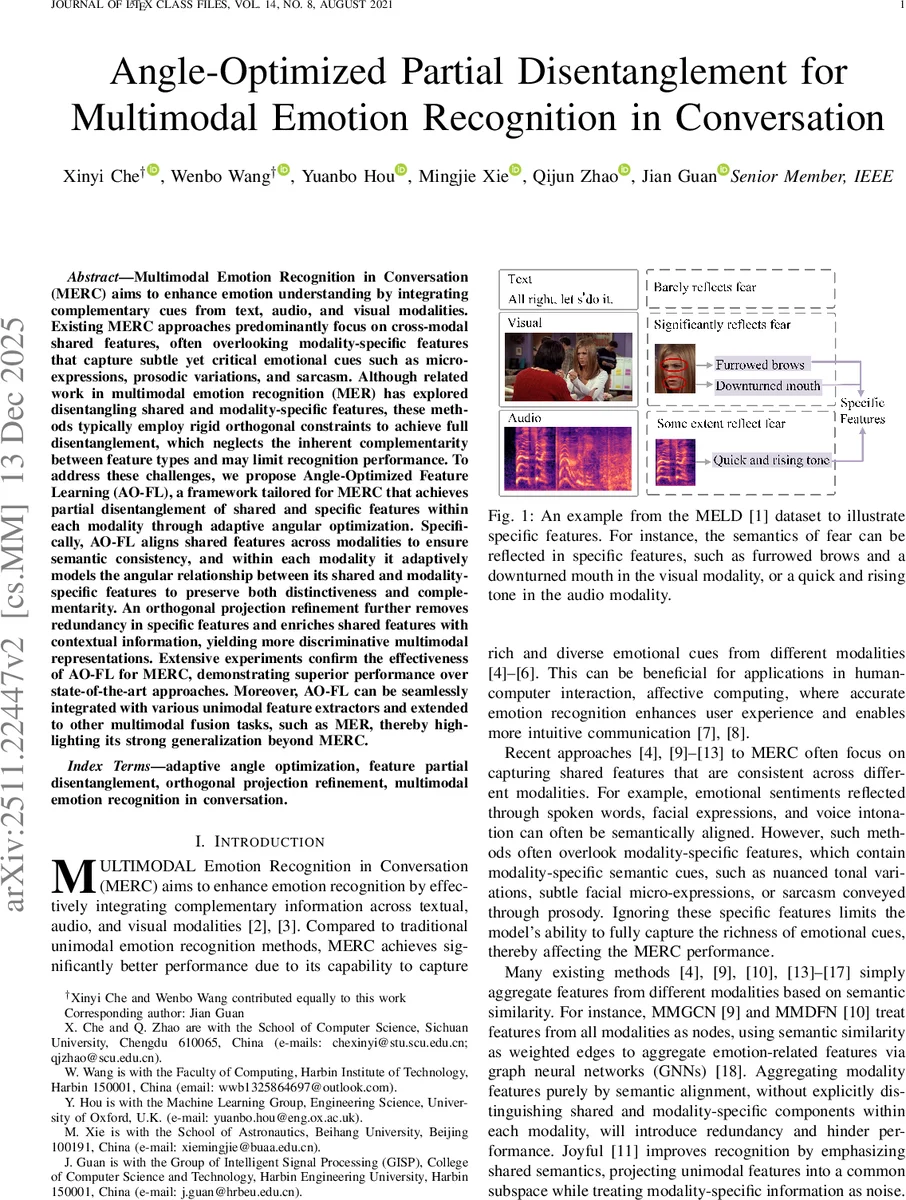

Multimodal Emotion Recognition in Conversation (MERC) aims to enhance emotion understanding by integrating complementary cues from text, audio, and visual modalities. Existing MERC approaches predominantly focus on cross-modal shared features, often overlooking modality-specific features that capture subtle yet critical emotional cues such as micro-expressions, prosodic variations, and sarcasm. Although related work in multimodal emotion recognition (MER) has explored disentangling shared and modality-specific features, these methods typically employ rigid orthogonal constraints to achieve full disentanglement, which neglects the inherent complementarity between feature types and may limit recognition performance. To address these challenges, we propose Angle-Optimized Feature Learning (AO-FL), a framework tailored for MERC that achieves partial disentanglement of shared and specific features within each modality through adaptive angular optimization. Specifically, AO-FL aligns shared features across modalities to ensure semantic consistency, and within each modality it adaptively models the angular relationship between its shared and modality-specific features to preserve both distinctiveness and complementarity. An orthogonal projection refinement further removes redundancy in specific features and enriches shared features with contextual information, yielding more discriminative multimodal representations. Extensive experiments confirm the effectiveness of AO-FL for MERC, demonstrating superior performance over state-of-the-art approaches. Moreover, AO-FL can be seamlessly integrated with various unimodal feature extractors and extended to other multimodal fusion tasks, such as MER, thereby highlighting its strong generalization beyond MERC.

💡 Research Summary

The paper introduces Angle-Optimized Feature Learning (AO-FL), a framework that addresses two limitations in Multimodal Emotion Recognition in Conversation (MERC). First, current MERC methods emphasize features shared across modalities (text, audio, and visual) at the expense of modality-specific ones, so they tend to miss subtle cues such as micro-expressions in video, prosodic variations in audio, and sarcasm in text. Second, earlier attempts at feature disentanglement relied on rigid orthogonal constraints that force shared and specific features to be entirely independent. This “full disentanglement” ignores the inherent complementarity between the two feature types, so information carried by their interaction is lost and recognition performance may suffer.

To overcome this, the authors propose AO-FL, which implements a “partial disentanglement” strategy through Adaptive Angular Optimization. Instead of enforcing a strict 90-degree separation, AO-FL adaptively models the angular relationship between shared and modality-specific features within each modality. This allows the model to maintain the distinctiveness of specific features while preserving their ability to complement the shared features. The framework also ensures cross-modal semantic consistency by aligning shared features across all modalities.
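The two objectives described above can be illustrated with a minimal sketch. It is not the authors' loss: the function names, the fixed `target_deg` parameter (which stands in for whatever AO-FL adapts during training), and the squared-cosine-gap form are all assumptions made for illustration, using plain Python lists as feature vectors.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + 1e-12)  # epsilon avoids division by zero


def angle_deg(u, v):
    """Angle between two vectors, in degrees."""
    c = max(-1.0, min(1.0, cosine(u, v)))  # clamp against rounding error
    return math.degrees(math.acos(c))


def angular_penalty(shared, specific, target_deg):
    """Hypothetical penalty: squared gap between the actual cosine and the
    cosine of a target angle. target_deg=90 recovers a strict orthogonality
    constraint; smaller targets allow partial overlap."""
    return (cosine(shared, specific) - math.cos(math.radians(target_deg))) ** 2


def alignment_penalty(shared_by_modality):
    """Hypothetical cross-modal alignment term: mean (1 - cosine) over all
    pairs of per-modality shared feature vectors."""
    pairs = list(combinations(shared_by_modality, 2))
    return sum(1.0 - cosine(u, v) for u, v in pairs) / len(pairs)
```

With `target_deg=90` the angular penalty reduces to the full-disentanglement case the summary criticizes. A target below 90 degrees lets the specific feature keep some component along the shared one, which is one way to read “partial disentanglement”.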

A key technical contribution is the Orthogonal Projection Refinement stage, which serves two purposes. It removes redundancy from the modality-specific features, and it enriches the shared features with contextual information. The result is a more discriminative multimodal representation.
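The redundancy-removal half of this stage can be pictured with standard vector projection. The sketch below is an assumption about the general technique, not the paper's exact refinement: `project` and `reject` are hypothetical helper names, and how AO-FL enriches the shared features is not specified here, so that part is left out.

```python
def project(u, onto):
    """Orthogonal projection of u onto the direction of `onto`."""
    denom = sum(b * b for b in onto)
    if denom == 0.0:
        return [0.0] * len(u)  # nothing to project onto
    scale = sum(a * b for a, b in zip(u, onto)) / denom
    return [scale * b for b in onto]


def reject(u, from_vec):
    """Component of u orthogonal to from_vec (u minus its projection)."""
    p = project(u, from_vec)
    return [a - b for a, b in zip(u, p)]


# Toy example: the part of `specific` that lies along `shared` is treated
# as redundant; the remainder is what only this modality carries.
shared = [1.0, 0.0]
specific = [3.0, 4.0]
redundant = project(specific, shared)  # [3.0, 0.0]
refined = reject(specific, shared)     # [0.0, 4.0]
```

In this toy case the refined specific vector has zero dot product with the shared vector, so the overlap has been removed while the remaining component is left unchanged.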

According to the authors, extensive experiments show AO-FL outperforming existing state-of-the-art (SOTA) approaches on MERC. The framework is also modular: it can be integrated with various unimodal feature extractors and extended to other multimodal fusion tasks, such as Multimodal Emotion Recognition (MER), which the authors present as evidence of generalization beyond MERC. By balancing feature independence against complementarity, AO-FL offers an alternative to full disentanglement for multimodal information fusion.
