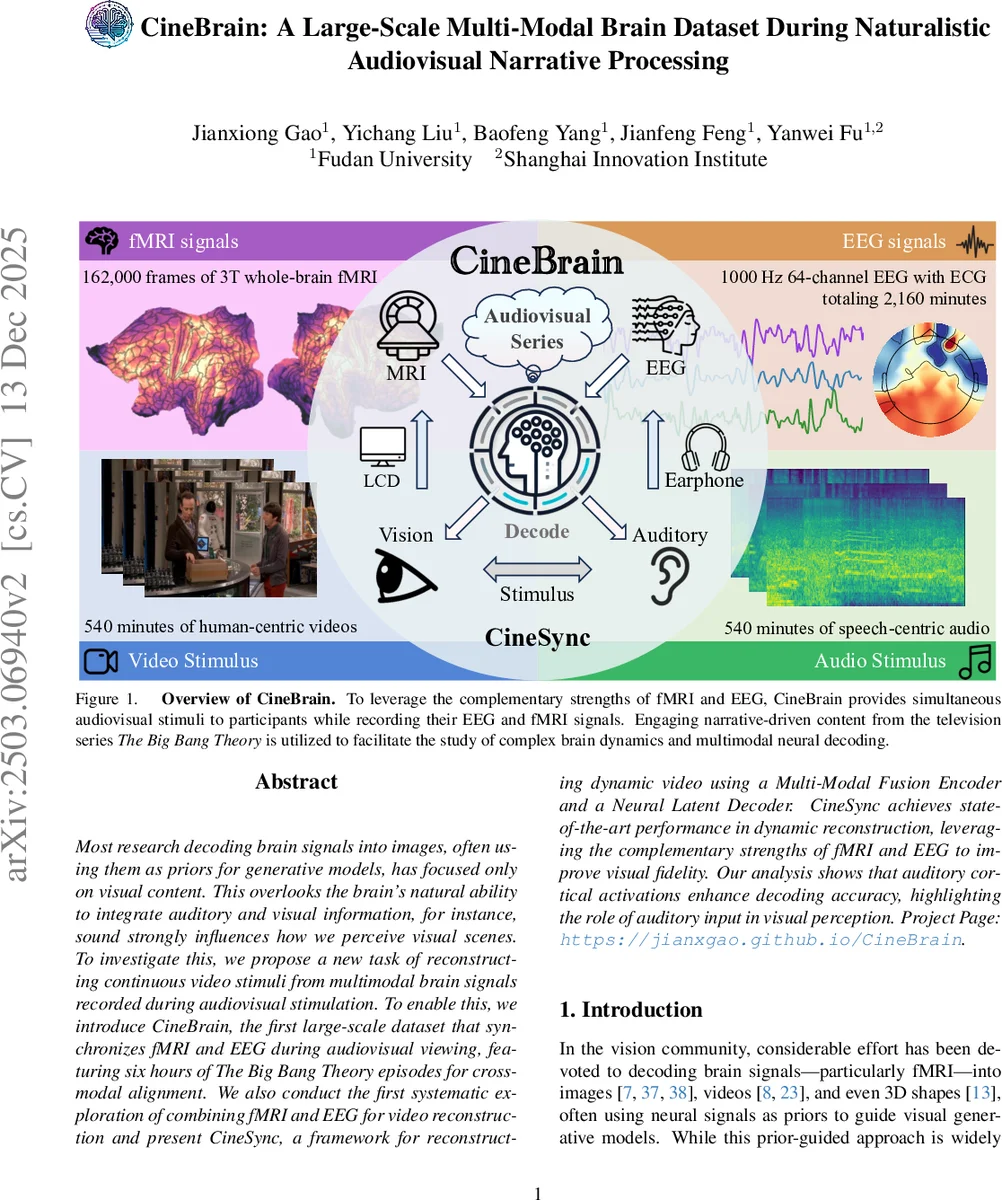

CineBrain: A Large-Scale Multi-Modal Brain Dataset During Naturalistic Audiovisual Narrative Processing

Most research decoding brain signals into images, often using them as priors for generative models, has focused only on visual content. This overlooks the brain’s natural ability to integrate auditory and visual information, for instance, sound strongly influences how we perceive visual scenes. To investigate this, we propose a new task of reconstructing continuous video stimuli from multimodal brain signals recorded during audiovisual stimulation. To enable this, we introduce CineBrain, the first large-scale dataset that synchronizes fMRI and EEG during audiovisual viewing, featuring six hours of \textit{The Big Bang Theory} episodes for cross-modal alignment. We also conduct the first systematic exploration of combining fMRI and EEG for video reconstruction and present CineSync, a framework for reconstructing dynamic video using a Multi-Modal Fusion Encoder and a Neural Latent Decoder. CineSync achieves state-of-the-art performance in dynamic reconstruction, leveraging the complementary strengths of fMRI and EEG to improve visual fidelity. Our analysis shows that auditory cortical activations enhance decoding accuracy, highlighting the role of auditory input in visual perception. Project Page: https://jianxgao.github.io/CineBrain.

💡 Research Summary

**

The paper introduces a novel research direction: reconstructing continuous video stimuli from multimodal brain recordings captured during naturalistic audiovisual viewing. While prior work on neural decoding has largely focused on visual‑only stimuli and single‑modality recordings (either fMRI or EEG), this study emphasizes the brain’s inherent ability to integrate auditory and visual information, arguing that sound profoundly shapes visual perception. To enable systematic investigation, the authors present two major contributions: the CineBrain dataset and the CineSync framework.

CineBrain is the first large‑scale dataset that synchronously records 3 T whole‑brain fMRI and 1000 Hz 64‑channel EEG (with ECG) while participants watch episodes of the sitcom “The Big Bang Theory.” Six participants each contributed roughly six hours of data, amounting to 5400 fMRI volumes (2 mm isotropic, TR = 800 ms) and 5400 minutes of EEG. The audiovisual material was down‑sampled to 720p, 24 fps, and then segmented into 4‑second clips (33 frames each), yielding 5400 training clips and 540 test clips per participant. Visual and auditory regions of interest (ROIs) were defined (≈8,400 visual voxels, ≈10,500 auditory voxels), and a 4‑second BOLD lag was applied to align fMRI with the stimulus timeline. EEG preprocessing involved artifact removal (scanner‑induced, ECG, muscle, line noise) and band‑pass filtering (0.1–30 Hz). Additionally, each clip was paired with automatically generated textual descriptions (video: Qwen2.5‑VL; audio: Whisper‑large‑v3) to support contrastive multimodal learning.

CineSync consists of a Multi‑Modal Fusion Encoder (MFE) and a Neural Latent Decoder (NLD). The MFE processes fMRI and EEG streams independently using dual transformer encoders. fMRI data are patchified spatially, while EEG is patchified across channels and time; both produce token sequences that are projected into a shared latent space. A learned fusion projector then merges the two modalities via multi‑head cross‑attention, allowing auditory‑related activations to modulate visual token representations. The encoder is trained with a contrastive loss that aligns brain‑derived embeddings with video‑ and text‑based CLIP embeddings, ensuring semantic consistency.

The NLD is a diffusion‑based generative model inspired by DiT (a transformer‑based diffusion architecture). It receives the fused brain embedding together with Gaussian noise and iteratively denoises the latent representation to synthesize a 4‑second video clip. By conditioning on both spatial (fMRI) and temporal (EEG) information, the decoder can generate temporally coherent video rather than isolated frames.

Experiments compare three configurations: (1) fMRI‑only, (2) EEG‑only, and (3) fused fMRI + EEG. Evaluation metrics include PSNR, SSIM, and CLIP‑Score (semantic similarity), complemented by human perceptual ratings. The fused model consistently outperforms single‑modality baselines across all metrics; notably, CLIP‑Score improves from ~0.42 (fMRI) and ~0.44 (EEG) to ~0.58 (fusion). Ablation studies reveal that (a) cross‑attention fusion yields the best performance among five fusion strategies (spatial concatenation, two‑stage, etc.), and (b) increasing EEG representational capacity (more channels or longer temporal windows) further boosts reconstruction quality, underscoring the importance of high‑frequency neural dynamics.

A key neuroscientific insight emerges: activations in auditory cortices (temporal lobe, superior temporal gyrus) positively correlate with higher video reconstruction fidelity. This demonstrates that auditory input not only co‑occurs with visual processing but actively enhances the neural encoding of visual information, aligning with classic multimodal perception phenomena such as the McGurk effect.

The authors acknowledge limitations: the dataset includes only six participants, which may restrict generalizability; the video resolution (480 × 720) is modest, limiting high‑detail reconstruction; and individual variability in brain responses remains a challenge. Future work is suggested to expand the participant pool, incorporate diverse narrative content (e.g., movies, documentaries), and explore more sophisticated audio‑visual synchrony modeling (e.g., lip‑reading, speech‑gesture coupling). Moreover, the dataset can support ancillary tasks such as auditory decoding, EEG‑to‑fMRI translation, and cross‑modal attention studies.

In summary, the paper delivers a comprehensive resource (CineBrain) and a state‑of‑the‑art multimodal decoding pipeline (CineSync) that together push neural decoding beyond static images toward dynamic, multimodal perception. By demonstrating that combined fMRI‑EEG signals can faithfully reconstruct continuous video and that auditory cortical activity enhances visual decoding, the work bridges gaps between neuroscience, brain‑computer interfaces, and generative AI, opening new avenues for studying how the brain integrates sensory streams and for building more human‑like perceptual models.

Comments & Academic Discussion

Loading comments...

Leave a Comment