VEGAS: Mitigating Hallucinations in Large Vision-Language Models via Vision-Encoder Attention Guided Adaptive Steering

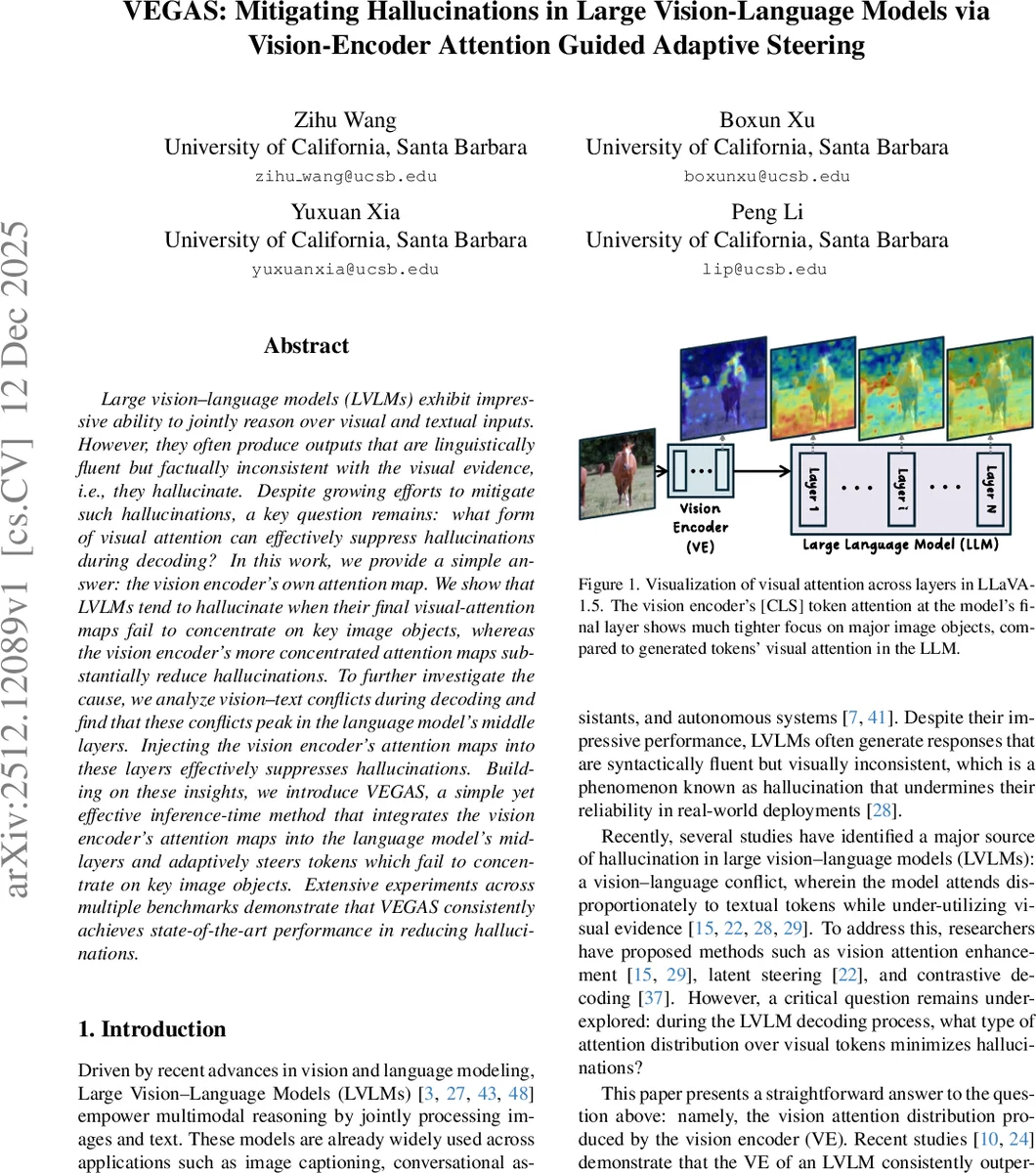

Large vision-language models (LVLMs) exhibit impressive ability to jointly reason over visual and textual inputs. However, they often produce outputs that are linguistically fluent but factually inconsistent with the visual evidence, i.e., they hallucinate. Despite growing efforts to mitigate such hallucinations, a key question remains: what form of visual attention can effectively suppress hallucinations during decoding? In this work, we provide a simple answer: the vision encoder’s own attention map. We show that LVLMs tend to hallucinate when their final visual-attention maps fail to concentrate on key image objects, whereas the vision encoder’s more concentrated attention maps substantially reduce hallucinations. To further investigate the cause, we analyze vision-text conflicts during decoding and find that these conflicts peak in the language model’s middle layers. Injecting the vision encoder’s attention maps into these layers effectively suppresses hallucinations. Building on these insights, we introduce VEGAS, a simple yet effective inference-time method that integrates the vision encoder’s attention maps into the language model’s mid-layers and adaptively steers tokens which fail to concentrate on key image objects. Extensive experiments across multiple benchmarks demonstrate that VEGAS consistently achieves state-of-the-art performance in reducing hallucinations.

💡 Research Summary

Large Vision‑Language Models (LVLMs) have demonstrated impressive capabilities in jointly reasoning over images and text, yet they frequently generate fluent sentences that are factually inconsistent with the visual input—a phenomenon known as hallucination. Existing mitigation strategies—such as data curation, contrastive decoding, latent steering, or external expert models—either require substantial annotation effort, additional training, or do not directly address the underlying visual attention distribution during decoding.

This paper proposes a simple yet powerful inference‑time method called VEGAS (Vision‑Encoder Attention Guided Adaptive Steering) that leverages the attention maps produced by the Vision Encoder (VE) itself. The authors first observe that the VE’s final‑layer attention is tightly focused on key objects, which they quantify using a novel metric called Block Entropy (BE). Lower BE indicates that high‑attention values are concentrated in a few image blocks, reflecting strong visual focus. In contrast, the language model (LLM) component of LVLMs exhibits higher BE, especially for tokens that later turn out to be hallucinated.

A second analysis reveals that the middle layers of the LLM allocate the highest Vision‑Attention Ratio (VAR) to image tokens, but simultaneously display the highest Text‑to‑Vision Entropy Ratio (TVER), indicating a strong textual bias and insufficient extraction of visual information. Consequently, these middle layers become a bottleneck where the model “looks” at the image but fails to derive meaningful visual cues.

Guided by these insights, VEGAS proceeds in two steps:

- Attention Injection – For each attention head in the identified middle layers, the segment of pre‑softmax attention that corresponds to image tokens is replaced with the VE’s

Comments & Academic Discussion

Loading comments...

Leave a Comment